You’ve been running JMeter for a while now. Maybe you inherited a test suite from the engineer who left six months ago, or maybe you built it yourself from a blank .jmx file. Either way, it’s been solid – until recently. The heap filled up at 3,000 virtual users. The distributed setup across five worker nodes took two and a half engineering days to stabilize. Your CI pipeline needs load tests that finish in under ten minutes, and JMeter’s infrastructure provisioning cycle doesn’t cooperate.

This guide exists for that moment.

This article does two things. First, it provides a deep, practitioner-level JMeter reference covering architecture, test plan construction, plugins, distributed testing, and CI/CD integration, with specific configurations and metrics. Second, it gives an honest, evidence-backed answer to the question more performance engineers are quietly asking: “Have I outgrown JMeter?”

Everything here draws on Apache’s own official documentation [1][2][3], DORA’s research on CI/CD and delivery performance [4], and head-to-head feature evidence – not vendor marketing copy.

- What Is Apache JMeter? (And Why 90,500 People Search for It Every Month)

- JMeter Architecture: How It Actually Works Under the Hood

- Setting Up Your First JMeter Test: A Step-by-Step Walkthrough

- JMeter’s Plugin Ecosystem: Extending What the Tool Can Do

- JMeter Distributed Testing: Architecture, Setup, and the Real Complexity Cost

- When Teams Outgrow JMeter: An Honest Evaluation Framework

What Is Apache JMeter? (And Why 90,500 People Search for It Every Month)

Apache JMeter is an open-source, Java-based load testing and performance measurement application developed under the Apache Software Foundation. As the ASF’s official project page states, it is “an open-source Java application designed to load test functional behavior and measure performance” [1]. Originally created by Stefano Mazzocchi in 1998 to test Apache JServ (a precursor to Tomcat), it has evolved into a multi-protocol performance platform supporting HTTP/HTTPS, JDBC, LDAP, SOAP/REST, JMS, FTP, TCP, and SMTP.

With approximately 90,500 monthly searches and roughly 2,900 downloads per month across versions, JMeter maintains dominant open-source mindshare in the performance testing category – 11.7% according to PeerSpot’s 2026 data, the largest share among all tools in the category.

Equally important is what JMeter is not. It does not execute JavaScript, apply CSS, or maintain a real browser DOM. It operates at the protocol level, sending HTTP requests and measuring server responses. If you need real-browser simulation to measure client-side rendering performance (Core Web Vitals, for instance), JMeter isn’t the tool. For a comprehensive official reference, see the Apache JMeter Official Best Practices Guide.

JMeter’s Core Purpose: Load Testing vs. Performance Testing vs. Stress Testing

Engineers frequently conflate these terms – and the confusion leads to poorly scoped tests. Per the ISTQB Performance Testing Certification & Methodology syllabus, here’s how these test types differ and how JMeter supports each:

- Load testing: Validates system behavior under expected concurrent user volumes. JMeter’s Thread Groups handle this natively – set 500 threads, a 120-second ramp-up, and a 30-minute hold period.

- Stress testing: Pushes the system beyond expected capacity to find the breaking point. Use JMeter’s Stepping Thread Group plugin to incrementally add users until error rates spike above your threshold (e.g., >2% HTTP 5xx).

- Soak (endurance) testing: Runs a sustained load for extended periods (4–72 hours) to detect memory leaks and connection pool exhaustion. JMeter supports this via the scheduler with a fixed-duration configuration, though heap management becomes critical at multi-hour durations.

- Spike testing: Simulates sudden traffic surges. JMeter’s Synchronizing Timer can release a batch of threads simultaneously, simulating a flash sale or breaking-news event.

Who Uses JMeter? A Realistic Picture of Its User Base

JMeter’s user base spans a wide spectrum, and different profiles hit its limits at different points:

A solo QA engineer at a 50-person startup typically runs single-node tests against staging environments with 50–200 virtual users. JMeter handles this comfortably; the friction is mostly learning curve – Groovy scripting for dynamic parameterization, understanding correlation for session tokens.

A performance team lead at a 500-person enterprise runs 5,000–20,000 VU regression suites against production-mirror environments, often on a weekly cadence integrated into release pipelines. This is where JMeter’s distributed setup complexity, memory ceilings, and lack of vendor support become tangible operational costs.

JMeter Architecture: How It Actually Works Under the Hood

Most guides list JMeter’s components as a glossary. Here’s how the execution model actually functions – and why the order matters.

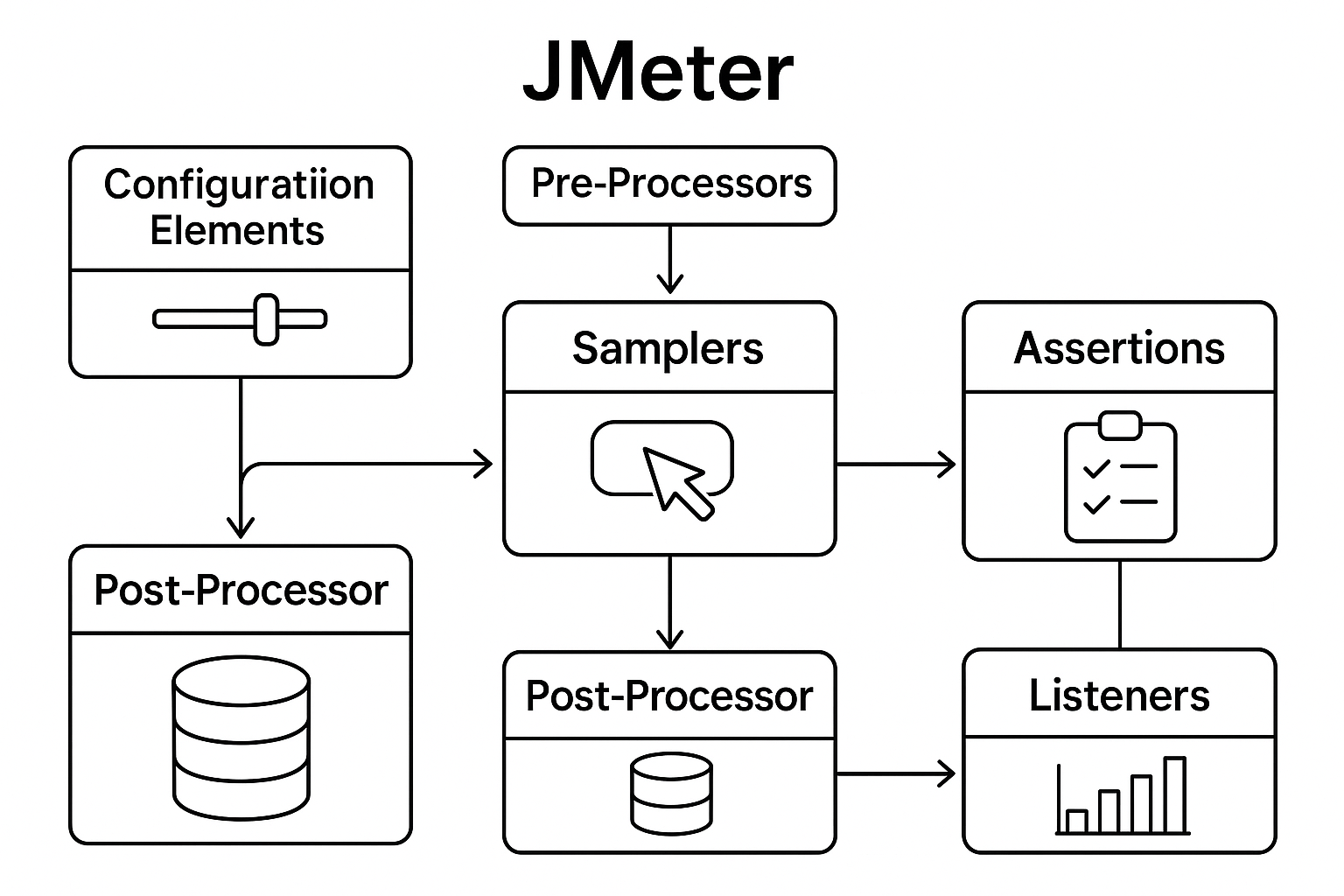

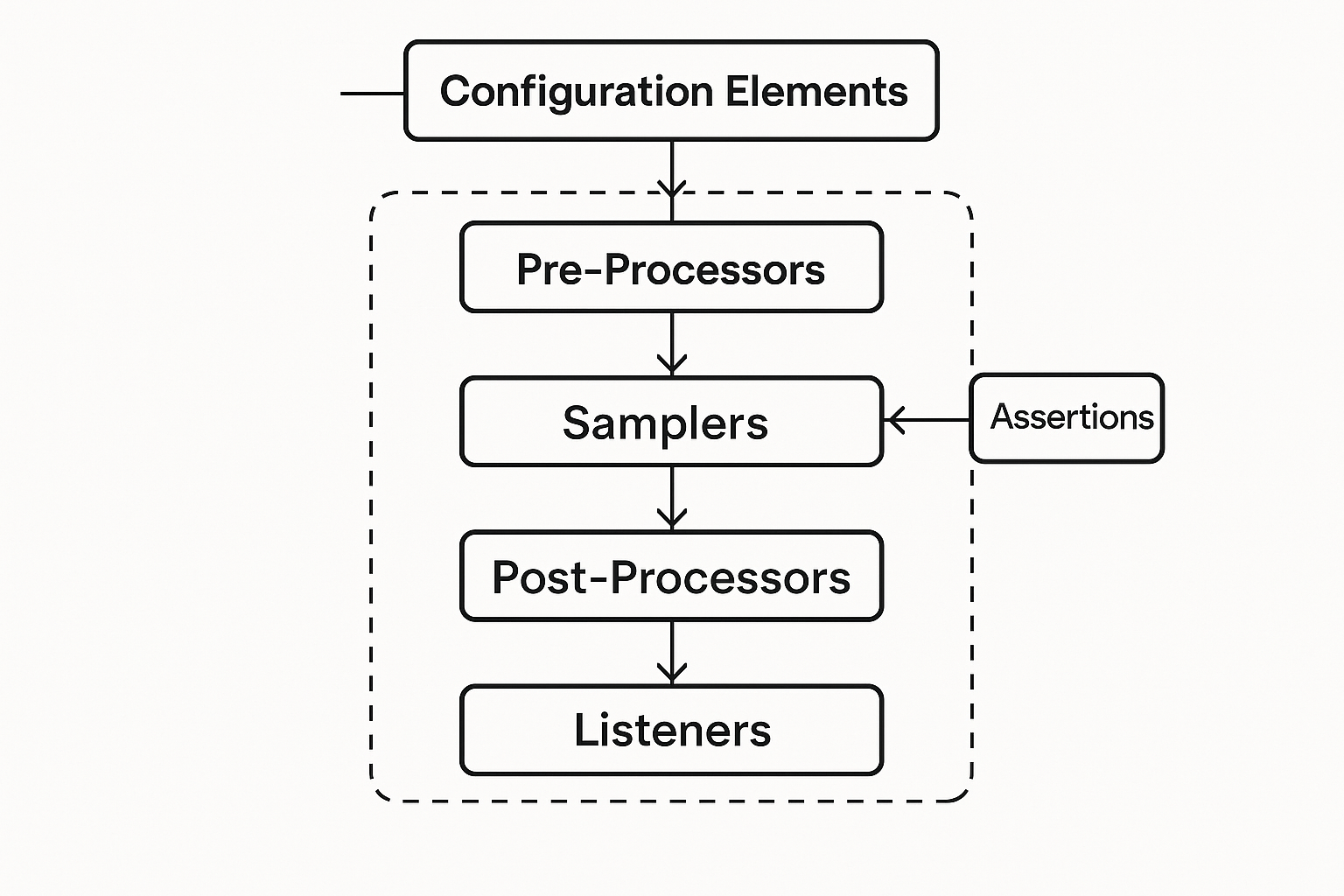

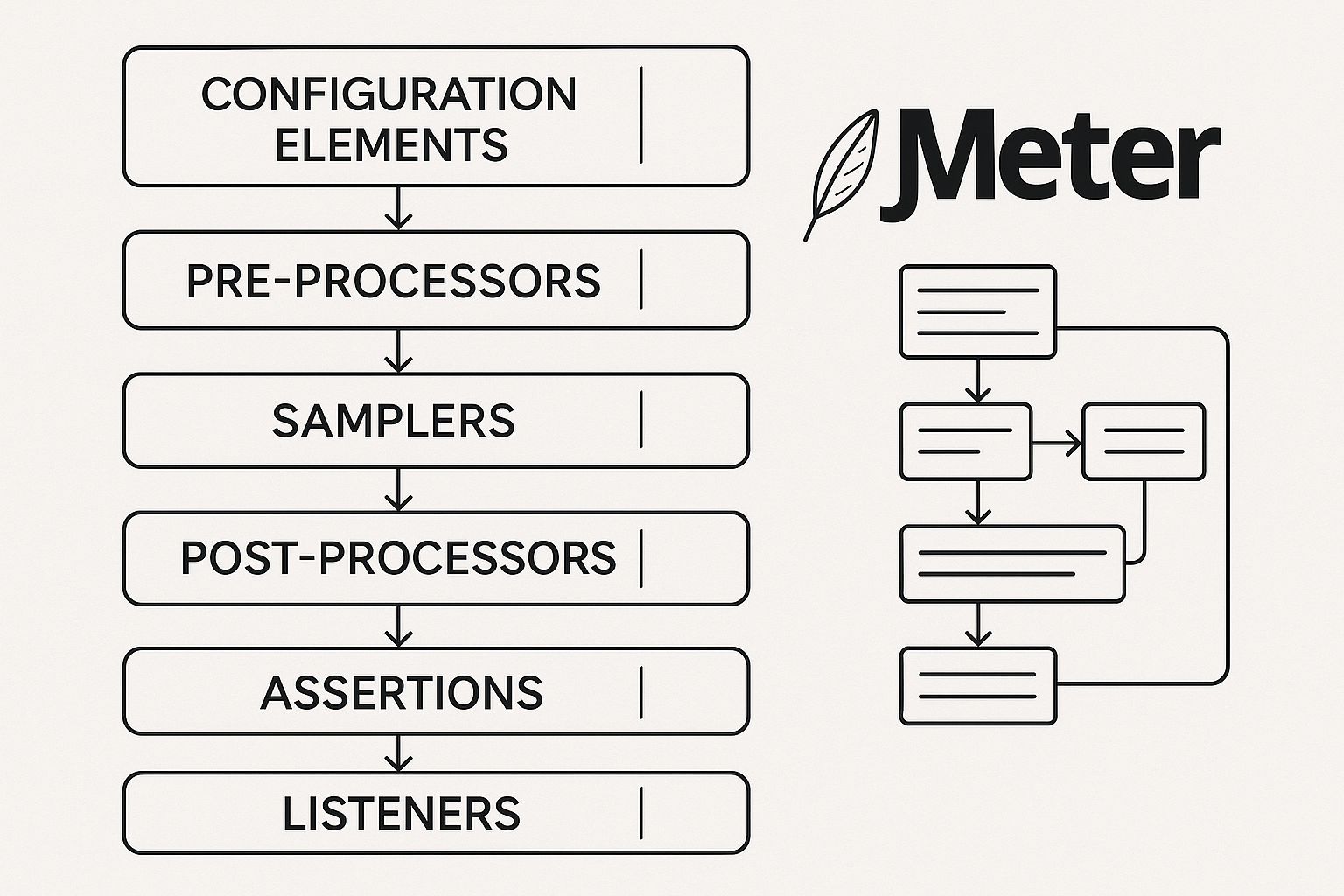

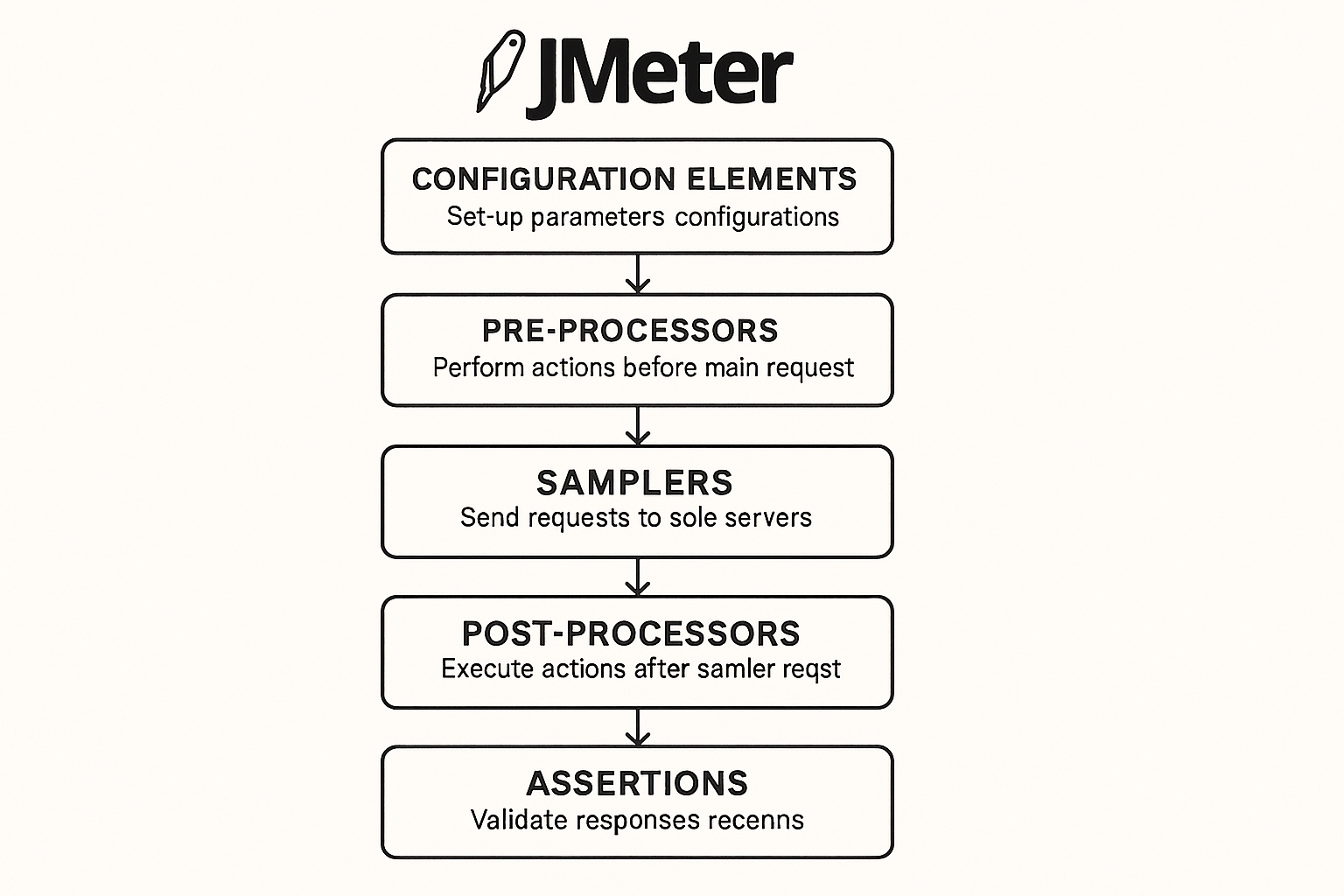

JMeter processes elements in a fixed order for each sampler within a thread: Configuration Elements → Pre-Processors → Timers → Samplers → Post-Processors → Assertions → Listeners. This order follows element type and scope, not position among siblings in the tree, so scope mistakes cause real failures. If the CSV Data Set Config that defines a variable is not in scope for the HTTP Request sampler that references it, every request fires with unresolved ${variable} strings instead of actual data.

One critical insight the official Best Practices guide [2] makes explicit: JMeter’s GUI mode is for test plan development only. Running actual load tests in GUI mode consumes substantial CPU and memory on the controller machine, degrading both the tool’s throughput capacity and the accuracy of your measurements. Non-GUI (CLI) mode is mandatory for real test execution.

Test Plans and Thread Groups: The Concurrency Engine

The Test Plan (.jmx file) is JMeter’s root configuration container. Thread Groups are its concurrency mechanism, with four key parameters:

- Number of Threads: Virtual users to simulate (e.g., 100)

- Ramp-Up Period: Seconds to start all threads (e.g., 60 seconds → one new thread every 0.6 seconds)

- Loop Count: Iterations per thread, or “Infinite” with a scheduler duration

- Scheduler: Duration-based execution (e.g., 600 seconds for a 10-minute sustained test)

The ramp-up math matters: threads ÷ ramp-up seconds = thread-start rate. Set 100 threads with a 50-second ramp-up, and JMeter starts 2 new threads per second. Set ramp-up to 0, and all 100 threads fire simultaneously – which frequently causes false failures because the application under test receives an instantaneous spike rather than a realistic ramp, and JMeter itself experiences thread-scheduling contention. For guidance on designing realistic concurrency patterns, see this guide on how to load test concurrent users.
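The ramp-up arithmetic above can be sketched in a few lines of Python. This is an illustration of the formula only, not JMeter code: the function names are made up for this example, and the even spacing between thread starts is a simplifying assumption.

```python
def thread_start_rate(threads: int, ramp_up_seconds: float) -> float:
    """Threads started per second during ramp-up (threads / ramp-up seconds)."""
    if ramp_up_seconds <= 0:
        # A ramp-up of 0 means every thread starts at once.
        return float("inf")
    return threads / ramp_up_seconds


def start_offsets(threads: int, ramp_up_seconds: float) -> list:
    """Approximate start time (seconds) of each thread, assuming even spacing."""
    if threads <= 0:
        return []
    interval = ramp_up_seconds / threads
    return [i * interval for i in range(threads)]


if __name__ == "__main__":
    print(thread_start_rate(100, 50))    # threads per second for the 50 s example
    print(start_offsets(100, 60)[:3])    # first three start times for the 60 s example
```

Running the two examples from the text through these helpers gives a quick sanity check before you commit a ramp-up value to a Thread Group.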

Samplers, Controllers, and the Request Execution Flow

Samplers generate the actual requests: HTTP Request (by far the most used), JDBC Request, FTP, SMTP, TCP, JMS Publisher/Subscriber. Logic Controllers shape test flow: If Controller, While Controller, Loop Controller, Random Controller, Interleave Controller, and Throughput Controller.

Here’s how they compose in practice. An e-commerce checkout scenario might use:

- A Loop Controller (3 iterations) wrapping product-browse HTTP Requests to simulate catalog browsing

- An If Controller (${__groovy(vars.get("userType") == "registered")}) routing authenticated users through a login flow and guests through a guest checkout path

- A Random Controller selecting from five product-detail-page samplers to simulate varied browsing patterns

A detail that surface-level guides omit: the HTTP Sampler’s “Follow Redirects” and “Use KeepAlive” settings materially affect results. Disabling KeepAlive forces a new TCP connection per request, which measures connection overhead but doesn’t reflect modern browser behavior (which reuses connections via HTTP/1.1 persistent connections or HTTP/2 multiplexing).

Listeners, Assertions, and Making Sense of Results

Listeners collect and display results. The Apache JMeter FAQ explicitly warns: “Listeners like View Tree Results are useful for debugging your test, but they are too memory intensive to remain in your test when you ramp up the number of simulated users and iterations” [3]. This is the single most common JMeter performance mistake – engineers leave View Results Tree enabled during a 1,000-user run and wonder why JMeter itself runs out of memory.

For production load tests, use Aggregate Report (summary statistics) plus a Backend Listener configured for InfluxDB + Grafana. This externalizes time-series metrics from JMeter’s JVM entirely, giving you live dashboards without degrading test execution. Understanding which performance metrics matter most – throughput, latency percentiles, error rates – is essential for interpreting these dashboards correctly.

Assertions validate responses: Response Assertion (check HTTP status or body content), Duration Assertion (flag responses exceeding a threshold, e.g., >2000ms), JSON Assertion (validate API response structure), and Size Assertion.

Setting Up Your First JMeter Test: A Step-by-Step Walkthrough

Installation Prerequisites: Java, Download, and Initial Configuration

JMeter 5.x requires Java 8 or later. Download the binary from the official Apache mirrors, extract, and inspect the startup script (jmeter.bat on Windows, jmeter.sh on Linux/macOS). The Apache JMeter FAQ [3] documents a default heap configuration of set HEAP=-Xms256m -Xmx256m; recent 5.x startup scripts ship with a larger default (typically -Xms1g -Xmx1g), so check the HEAP line in your own script. A 256MB heap is inadequate for any non-trivial load test. For a 500-user HTTP test, increase to at least -Xms1g -Xmx4g; for 2,000+ users on a single node, -Xms2g -Xmx8g is a reasonable starting point, depending on your test plan’s listener and sampler complexity.

Teams without Java in their standard environment face a real friction cost here – installing and maintaining JDK version compatibility is an ongoing operational consideration.

Building a Test Plan: HTTP Load Test From Scratch

A practical starting configuration for a web application load test:

- Thread Group: 100 threads, 60-second ramp-up, scheduler enabled with 600-second duration

- HTTP Request Defaults (Config Element): Set the base server name and port once – prevents hard-coding across dozens of samplers, which becomes a maintenance nightmare in a 50-sampler test plan

- HTTP Cookie Manager (Config Element): Required for any session-based application. Without it, JMeter doesn’t maintain session cookies between requests, and your test simulates 100 separate anonymous first-visits rather than authenticated user flows

- HTTP Request samplers for each endpoint in your user journey

- Response Assertion: Verify HTTP 200 status on critical requests

- Aggregate Report listener for summary metrics

Running Tests in Non-GUI Mode and Interpreting Results

The CLI invocation for production test execution:

jmeter -n -t testplan.jmx -l results.jtl -e -o /report-output

Where -n = non-GUI mode, -t = test plan file, -l = results log, -e = generate report dashboard, -o = output directory for the HTML report. This is the officially recommended approach [2].

Focus on these metrics in the generated report:

- Throughput (requests/second): Your system’s actual processing rate under load

- p90/p95/p99 response times: p99 < 500ms means 99% of requests complete within 500ms. That still leaves up to 1 in 100 requests slower than 500ms – which, if 10,000 concurrent users each issue one request per cycle, is up to 100 degraded experiences per request cycle

- Error rate: Anything above 0.1% in a well-configured test warrants investigation; above 1% signals a likely bottleneck or misconfiguration

Average response time alone is misleading. A mean of 200ms could mask a p99 of 3,000ms – one request in a hundred takes at least 15x longer than the average suggests.
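To see how a mean hides tail latency, here is a minimal Python sketch. The sample data is invented for illustration, and the nearest-rank percentile used here is one common definition; JMeter’s report may compute percentiles slightly differently, so treat the numbers as indicative.

```python
import math
import statistics


def percentile(samples, pct):
    """Nearest-rank percentile: smallest sample with at least pct% of values at or below it."""
    if not samples:
        raise ValueError("percentile() needs at least one sample")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical response times in milliseconds: 98 fast requests, 2 slow outliers.
elapsed_ms = [150] * 98 + [3000] * 2

if __name__ == "__main__":
    print("mean:", statistics.mean(elapsed_ms))
    print("p50: ", percentile(elapsed_ms, 50))
    print("p95: ", percentile(elapsed_ms, 95))
    print("p99: ", percentile(elapsed_ms, 99))
```

With this data the mean stays close to the fast requests while p99 lands on the outliers, which is why the percentile columns deserve more attention than the average.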

JMeter’s Plugin Ecosystem: Extending What the Tool Can Do

The JMeter Plugins Manager (available at jmeter-plugins.org/install/Install/) is the standard extension mechanism. Five plugins that meaningfully improve test quality:

- bzm Concurrency Thread Group: Unlike the standard Thread Group – which starts all threads during ramp-up and terminates them when their loop count exhausts – this plugin maintains a target concurrency level throughout the test. If a thread finishes its iteration, a new one starts to maintain the count. This is how real traffic behaves.

- jp@gc Stepping Thread Group: Adds users in configurable steps (e.g., add 50 users every 30 seconds, hold for 60 seconds) for progressive ramp analysis.

- jp@gc Transactions per Second: Visualizes throughput over time – critical for identifying throughput plateaus.

- PerfMon Server Agent: Collects server-side CPU, memory, disk I/O, and network metrics alongside JMeter’s client-side data. Requires deploying a separate agent process on each target server. If response times degrade at 500 concurrent users but server CPU is at 40%, the bottleneck is likely application-layer (thread pool exhaustion, database connection limits) rather than hardware capacity.

- Dummy Sampler: Generates configurable fake responses for debugging test plan logic without hitting real servers – saves hours when building complex controller flows.

Be aware: plugin ecosystem maintenance is community-dependent. Some plugins lag behind JMeter’s release cycle or become unmaintained. This version-compatibility risk is a real operational concern for teams requiring stable, long-running test infrastructure.

JMeter and CI/CD Integration: Shift-Left Performance Testing

The DORA 2023 State of DevOps Report [4] found that “continuous integration, loosely coupled teams, and fast code reviews significantly improve software delivery and operational performance.” Integrating load testing into CI/CD transforms performance from a late-stage gate into a continuous signal – as recommended by DORA State of DevOps Research and explored further in this practical guide on integrating performance testing in CI/CD pipelines.

A practical CI pattern:

- On every PR merge: Run a smoke-scale JMeter test (10 VUs, 2-minute duration) against the critical user journey. Pipeline fails if p95 response time exceeds 800ms OR error rate exceeds 1% for the checkout-flow sampler.

- On release candidates: Trigger a full regression load test (500–2,000 VUs, 30-minute duration) with a stricter threshold: p99 < 500ms, error rate < 0.5%.
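A pass/fail gate like the one described above can be sketched as a small Python check over the results file. This is a sketch under stated assumptions, not an official JMeter utility: it assumes the JTL is written in CSV format with a header row containing the elapsed, label, and success columns, and the function name, the "checkout" label, and the default thresholds are illustrative.

```python
import csv
import io
import math


def evaluate_jtl(jtl_text, label, p95_limit_ms=800, max_error_rate=0.01):
    """Check one sampler label in a CSV-format JTL against pass/fail thresholds.

    Assumes a header row with at least `elapsed` (ms), `label`,
    and `success` ("true"/"false") columns.
    """
    reader = csv.DictReader(io.StringIO(jtl_text))
    rows = [row for row in reader if row["label"] == label]
    if not rows:
        # No samples for the label is treated as a failure, not a silent pass.
        return {"passed": False, "samples": 0, "p95_ms": None, "error_rate": None}

    elapsed = sorted(int(row["elapsed"]) for row in rows)
    # Nearest-rank p95: the value at or below which 95% of samples fall.
    p95 = elapsed[math.ceil(95 * len(elapsed) / 100) - 1]
    errors = sum(1 for row in rows if row["success"].strip().lower() != "true")
    error_rate = errors / len(rows)

    passed = p95 <= p95_limit_ms and error_rate <= max_error_rate
    return {"passed": passed, "samples": len(rows), "p95_ms": p95, "error_rate": error_rate}
```

In a pipeline step you would read results.jtl into a string, call evaluate_jtl(text, "checkout"), and exit with a non-zero status when "passed" is False so the build fails. For the release-candidate run, pass the stricter error-rate limit and adapt the percentile to p99.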

In Jenkins, this is a shell step invoking the CLI command; in GitHub Actions, the jmeter-maven-plugin or a direct CLI invocation in a container step works. GitLab CI follows the same pattern with a .gitlab-ci.yml stage.

The honest friction: full-scale distributed JMeter tests (10,000+ VUs) are difficult to run in CI pipelines because provisioning, configuring, and tearing down 5–10 worker nodes per pipeline run adds substantial infrastructure overhead. This is where managed or cloud-native solutions offer a practical advantage.

JMeter Distributed Testing: Architecture, Setup, and the Real Complexity Cost

JMeter’s distributed architecture uses a Controller node (runs the test plan, aggregates results) and Worker nodes (execute load generation). The official documentation [2] states the per-node ceiling: “A single JMeter client running on a 2-3 GHz CPU (recent CPU) can handle 1000-2000 threads depending on the type of test.” For a 10,000-user test, you need 5–10 worker nodes.
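The node-count estimate follows directly from the per-node ceiling. Here is a rough Python sketch of that arithmetic; the function names are illustrative, and the 1,000 and 2,000 defaults are simply the two ends of the range quoted above, not measured capacities for your test plan.

```python
import math


def worker_nodes_needed(target_users: int, threads_per_node: int) -> int:
    """Smallest number of worker nodes that covers target_users."""
    if target_users < 0 or threads_per_node <= 0:
        raise ValueError("target_users must be >= 0 and threads_per_node > 0")
    return math.ceil(target_users / threads_per_node)


def node_range(target_users: int, low_capacity: int = 1000, high_capacity: int = 2000):
    """(fewest, most) nodes needed across the quoted per-node capacity range."""
    return (
        worker_nodes_needed(target_users, high_capacity),
        worker_nodes_needed(target_users, low_capacity),
    )


if __name__ == "__main__":
    print(node_range(10_000))
```

Complex test plans tend to sit nearer the low end of the capacity range, so it is safer to plan for the larger node count and scale down after a calibration run.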

Step-by-Step: Configuring Controller and Worker Nodes

Per the Apache JMeter Distributed Testing Documentation [2]:

- Start jmeter-server on each worker node

- Edit jmeter.properties on the controller: remote_hosts=192.168.1.10,192.168.1.11,192.168.1.12

- Configure RMI SSL or disable for trusted networks: server.rmi.ssl.disable=true

- Open firewall port 1099 (RMI registry) and dynamic RMI ports (4000+) on all nodes

- Launch: jmeter -n -t test.jmx -r -l results.jtl (the -r flag triggers remote execution)

Critical constraints documented by Apache [2]: “RMI cannot communicate across subnets without a proxy; therefore neither can JMeter without a proxy.” And: “Make sure you use the same version of JMeter and Java on all the systems. Mixing versions will not work correctly.” Java version mismatch between controller and workers is one of the most common causes of distributed test failures.
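Because version mismatches are easy to miss, a pre-flight comparison is worth automating. The Python sketch below assumes you have already collected each node’s JMeter and Java version strings by some means (for example, by running the tools’ version commands over SSH); the function name, host addresses, and version values are hypothetical.

```python
def find_version_mismatches(controller_versions, worker_versions):
    """Return the workers whose (JMeter, Java) versions differ from the controller's.

    controller_versions: (jmeter_version, java_version) tuple for the controller.
    worker_versions: dict mapping worker host -> (jmeter_version, java_version).
    """
    return {
        host: versions
        for host, versions in worker_versions.items()
        if versions != controller_versions
    }


if __name__ == "__main__":
    # Hypothetical inventory for illustration only.
    controller = ("5.6.3", "17.0.10")
    workers = {
        "192.168.1.10": ("5.6.3", "17.0.10"),
        "192.168.1.11": ("5.6.3", "11.0.22"),  # Java differs from the controller
        "192.168.1.12": ("5.5", "17.0.10"),    # JMeter differs from the controller
    }
    for host, versions in find_version_mismatches(controller, workers).items():
        print(f"{host}: {versions} does not match controller {controller}")
```

Failing the run when this returns a non-empty result is cheaper than debugging a half-started distributed test.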

The Hidden Costs of Manual Distributed Setup (And When They Become a Problem)

A typical initial distributed JMeter setup – provisioning 5 worker nodes, configuring RMI, validating firewall rules, and debugging version mismatches – routinely takes 1–3 engineering days. For teams running weekly regression load tests, this setup-to-value ratio is a real friction cost that compounds over time.

Additional hidden costs include:

- Result aggregation bottleneck: Worker nodes stream results back to the controller over RMI. At high throughput (>10,000 requests/second across workers), this channel can saturate, causing result loss or controller instability.

- No native auto-scaling: JMeter’s distributed model requires manually provisioned, pre-configured worker pools. There’s no built-in elastic scaling tied to test demand.

- Version drift: As JMeter, Java, and OS patches evolve, keeping all nodes synchronized requires deliberate configuration management – Ansible, Docker images, or similar automation.

This is an area where commercial and managed solutions offer a genuine, practical advantage – not as a vendor pitch, but as an honest engineering trade-off between self-managed infrastructure overhead and paid operational simplicity.

When Teams Outgrow JMeter: An Honest Evaluation Framework

JMeter works well within its design envelope. The decision to evaluate alternatives isn’t about JMeter being “bad” – it’s about recognizing when your requirements have expanded beyond what a community-maintained, protocol-level tool was designed to handle. For a structured approach to this decision, see this guide on how to choose a performance testing tool.

Consider evaluating commercial alternatives when:

- Your standard test requires 5,000+ virtual users and the distributed setup overhead exceeds 10% of your total test cycle time

- You need real-browser testing for client-side performance (Core Web Vitals, JavaScript rendering) alongside protocol-level load

- Your organization requires vendor SLA-backed support rather than community forums for troubleshooting production-blocking issues

- You need integrated server-side diagnostics (APM-style correlation between load generator data and application-tier metrics) without bolting on separate monitoring tools

- CI/CD pipeline velocity demands elastic, auto-provisioned load generation infrastructure

| Evaluation Criterion | Open-Source (JMeter) | Commercial Solutions |
|---|---|---|
| Licensing cost | Free | Subscription/perpetual license |
| Per-node VU ceiling | 1,000–2,000 threads | Typically 5,000–10,000+ |
| Distributed setup | Manual RMI configuration | Auto-provisioned cloud/on-prem pools |
| Real-browser support | None native | Native or integrated |
| Vendor support | Community forums | Dedicated engineering support with SLAs |
| CI/CD integration | CLI + custom scripting | Built-in pipeline integrations |
| Script maintenance | Groovy scripting | Auto-correlation, AI-assisted scripting |

WebLOAD by RadView, for example, addresses several of these gaps with auto-correlated script generation, native JavaScript-based scripting, and elastic cloud load generation that provisions and tears down injectors automatically. Its integrated analytics dashboard correlates client-side response data with server-side metrics without requiring a separate PerfMon agent deployment. For teams whose testing requirements have scaled beyond what a self-managed JMeter infrastructure can efficiently support, RadView’s platform offers an alternative that reduces operational overhead while maintaining protocol-level depth.

Other commercial options in this category include cloud-based SaaS platforms that wrap JMeter’s engine with managed infrastructure, and enterprise suites that offer broader protocol coverage. The right choice depends on your team’s specific scale requirements, protocol diversity, support expectations, and budget constraints.

Frequently Asked Questions

How many virtual users can a single JMeter instance realistically handle before results become unreliable?

Apache’s official documentation states 1,000–2,000 threads per node on a modern 2–3 GHz CPU [2], but this ceiling drops significantly with complex test plans. Each JSR223 PostProcessor with Groovy compilation, each regex extractor, and each active listener consumes heap and CPU cycles per thread. In practice, monitor your JMeter node’s own CPU (should stay below 80%) and GC pause times during the test. If JMeter’s GC pauses exceed 500ms, your response-time measurements are contaminated by the tool’s own overhead, not the system under test.

Is 100% load test coverage of all API endpoints worth the investment?

Not always – and chasing it often produces diminishing returns. Focus coverage on endpoints that carry the highest traffic volume, the highest revenue impact, or the highest architectural risk (e.g., endpoints that hit shared database connections or external service dependencies). A 20-endpoint test covering 85% of production traffic patterns produces more actionable data than a 200-endpoint test that takes three days to maintain and four hours to execute.

Can JMeter replace dedicated APM tools for production performance monitoring?

No. JMeter measures system behavior from the client perspective under synthetic load. It cannot instrument application code paths, trace distributed transactions across microservices, or monitor production traffic in real time. Use JMeter for pre-production load validation; use APM tools (with distributed tracing) for production observability. The two are complementary, not interchangeable.

What’s the most reliable way to handle dynamic session tokens (correlation) in JMeter?

Use a Regular Expression Extractor or JSON Extractor as a Post-Processor on the response that contains the token, storing it in a JMeter variable. Reference that variable in subsequent requests. For complex applications with multiple tokens across redirects, the process becomes tedious – each token requires manual identification, extractor configuration, and variable substitution. This manual correlation workflow is one of the primary productivity differences between JMeter and commercial tools that offer auto-correlation engines.
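The extract-then-substitute workflow can be illustrated outside JMeter with plain Python. This sketch mimics what a Regular Expression Extractor and variable reference do conceptually; it is not JMeter code, and the csrf_token field name, the regex, and the default value are assumptions chosen for the example.

```python
import re


def extract_token(response_body,
                  pattern=r'name="csrf_token"\s+value="([^"]+)"',
                  default="TOKEN_NOT_FOUND"):
    """Return the first capture group of pattern, or default when nothing matches."""
    match = re.search(pattern, response_body)
    return match.group(1) if match else default


def substitute(template, variables):
    """Replace ${name} placeholders; unknown names are left as the literal ${name}."""
    return re.sub(
        r"\$\{(\w+)\}",
        lambda m: variables.get(m.group(1), m.group(0)),
        template,
    )


if __name__ == "__main__":
    # Hypothetical login page response containing a session token.
    login_page = '<input type="hidden" name="csrf_token" value="abc123">'
    variables = {"csrf": extract_token(login_page)}
    print(substitute("token=${csrf}&user=${username}", variables))
```

Setting an explicit default value, as done here, makes a failed extraction obvious in the next request instead of letting an unresolved placeholder slip through unnoticed.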

Should I run JMeter load tests in Docker containers?

Yes, with caveats. Docker simplifies version consistency across distributed nodes (solving the version-drift problem), but container resource limits (CPU shares, memory cgroups) can artificially constrain JMeter’s throughput. Always set explicit --memory and --cpus flags on the container that match or exceed what you’d allocate on a bare-metal node, and validate that container networking doesn’t add latency overhead that contaminates your measurements.

Tool capability claims in this article are attributed to specific documentation versions. JMeter version numbers and default JVM heap values (-Xms256m -Xmx256m) may differ across releases – readers should verify against their installed version’s official documentation. The WebLOAD comparison reflects publicly available capability documentation and community review data current as of the article’s last update; commercial licensing terms and feature availability should be confirmed directly with RadView.

References and Authoritative Sources

[1] Apache Software Foundation. (n.d.). Apache JMeter™. Retrieved from https://jmeter.apache.org/

[2] Apache Software Foundation. (n.d.). Apache JMeter Distributed Testing Step-by-step. Apache JMeter User’s Manual. Retrieved from https://jmeter.apache.org/usermanual/jmeter_distributed_testing_step_by_step.html

[3] Apache Software Foundation. (n.d.). JMeterFAQ. Apache JMeter Wiki, Apache Software Foundation Confluence. Retrieved from https://cwiki.apache.org/confluence/display/JMETER/JMeterFAQ

[4] DORA Team, Google Cloud. (2023). Accelerate State of DevOps Report 2023. DORA (DevOps Research and Assessments). Retrieved from https://dora.dev/research/2023/dora-report/