A QA engineer types “which is better, JMeter or Selenium?” into a search engine and finds a dozen articles that rank tools against each other without answering the actual question. The reason? The question itself conflates two fundamentally different categories of testing. JMeter is a protocol-level load testing tool. Selenium is a browser-level functional automation tool. Comparing them head-to-head is like comparing a cardiac stress test to an MRI – both examine the same patient, but they measure entirely different things.

This confusion is understandable. Both tools are open-source, both show up in QA job postings, and both can technically “test a web application.” But the similarity ends there. Their architectures, resource profiles, output metrics, and appropriate use cases diverge so sharply that choosing between them is the wrong framing altogether. The right framing is: which testing layer am I targeting, and which tool belongs there?

This article provides a structured decision framework – not a vague “it depends” – for mapping JMeter, Selenium, and enterprise-grade alternatives to the correct layer of your testing strategy. You’ll get a concrete decision matrix, integration patterns for combining both tools, and a clear-eyed look at where open-source options plateau and enterprise solutions pick up.

- JMeter vs Selenium: They’re Not Competing – They’re on Different Layers

- What JMeter Does Best: Load and Performance Testing at Protocol Scale

- What Selenium Does Best: Browser-Level Functional Validation

- The Decision Matrix: When to Use JMeter, When to Use Selenium, When to Use Both

- The Integration Pattern: Running Selenium Scripts at Load Testing Scale

- Six Mistakes Teams Make When Choosing Between JMeter and Selenium

- References and Authoritative Sources

JMeter vs Selenium: They’re Not Competing – They’re on Different Layers

The ISTQB Foundation Level Syllabus draws a hard line between functional testing (validating what a system does) and non-functional testing (validating how a system performs under conditions like load, stress, and concurrency) [1]. JMeter lives squarely in the non-functional category. Selenium lives in the functional category. Conflating them isn’t a preference issue – it’s a taxonomy error.

Ham Vocke’s widely cited “Practical Test Pyramid” on MartinFowler.com reinforces this distinction: Selenium and the WebDriver protocol automate tests “by automatically driving a (headless) browser against your deployed services, performing clicks, entering data and checking the state of your user interface” [2]. That’s UI-layer functional validation – not load simulation.

What Protocol-Level Testing Actually Means (And Why It Scales)

JMeter generates HTTP, REST, SOAP, JDBC, and other protocol-level requests directly (WebSocket through a third-party plugin), bypassing the browser entirely. There’s no DOM rendering, no CSS painting, no JavaScript execution. Each virtual user is a lightweight Java thread that issues requests and parses responses.

The resource implications are dramatic. A single JMeter thread typically consumes 1 – 2MB of heap memory. A Selenium browser session – even headless – requires 150 – 300MB of RAM for the rendering engine, the JavaScript engine (V8 in Chrome), and the DOM environment. On a machine with 16GB of available memory (given to JMeter as JVM heap), JMeter can sustain roughly 5,000 – 8,000 concurrent virtual users. Selenium tops out around 50 – 80 sessions on the same hardware.
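The capacity figures above come from dividing available memory by the per-user footprint. Here is a minimal sketch. It assumes memory is the only constraint and adds a hypothetical `headroom` fraction for memory that is not available to virtual users. Real limits also depend on CPU, network and the test plan itself.

```python
def max_concurrent_users(available_mb: float, per_user_mb: float,
                         headroom: float = 0.0) -> int:
    """Upper bound on concurrent virtual users when memory is the only limit.

    headroom is a hypothetical fraction of memory (0.0 to <1.0) reserved for
    listeners, result buffers, and the operating system rather than users.
    """
    if per_user_mb <= 0:
        raise ValueError("per_user_mb must be positive")
    if not 0.0 <= headroom < 1.0:
        raise ValueError("headroom must be in [0.0, 1.0)")
    usable_mb = available_mb * (1.0 - headroom)
    return int(usable_mb // per_user_mb)


MEMORY_MB = 16 * 1024  # 16GB expressed in MB

# Per-user footprints taken from the ranges quoted above.
print(max_concurrent_users(MEMORY_MB, 2))    # JMeter at 2MB/thread      -> 8192
print(max_concurrent_users(MEMORY_MB, 150))  # Selenium at 150MB/session -> 109
print(max_concurrent_users(MEMORY_MB, 300))  # Selenium at 300MB/session -> 54
```

These numbers are raw ceilings. The practical ranges quoted above sit at or below them because real runs also spend memory outside the virtual users themselves. The `headroom` parameter is a crude stand-in for that overhead.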

For server-side validation – measuring p95 response time, throughput under sustained concurrency, error rates at capacity – JMeter’s protocol-level approach delivers the data you need without the overhead you don’t. A typical test plan might configure a thread group ramping from 0 to 500 users over 60 seconds, sustaining load for 300 seconds, with HTTP samplers targeting a REST API endpoint and response assertions validating both HTTP 200 status and latency under 500ms. The Apache JMeter User Manual documents the full component library for building these plans, and for a deeper look at JMeter’s architecture and when teams outgrow it, see our guide to Apache JMeter.
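JMeter's Thread Group spreads thread start times evenly across the ramp-up period. The User Manual's example is 10 threads with a 100-second ramp-up, where each thread starts 10 seconds after the previous one. Below is a minimal sketch of that schedule for the 500-user example above. It assumes threads keep running until the test ends (no loop-count limits) and ignores the Thread Group's optional startup delay.

```python
def active_threads(t_seconds: int, num_threads: int, ramp_up_seconds: int) -> int:
    """Number of threads started by time t under an even ramp-up.

    Thread i (0-based) starts at i * ramp_up / num_threads seconds, so thread 0
    starts immediately. Integer arithmetic avoids floating-point edge cases.
    """
    if t_seconds < 0:
        return 0
    if ramp_up_seconds == 0:
        return num_threads
    # Count threads i with i * ramp_up <= t * num_threads, capped at num_threads.
    started = (t_seconds * num_threads) // ramp_up_seconds + 1
    return min(num_threads, started)


def schedule(num_threads: int, ramp_up: int, hold: int, step: int):
    """Yield (time, active threads) samples across ramp-up plus sustained load."""
    for t in range(0, ramp_up + hold + 1, step):
        yield t, active_threads(t, num_threads, ramp_up)


# Example from the text: 0 -> 500 users over 60 s, then 300 s of sustained load.
for t, users in schedule(num_threads=500, ramp_up=60, hold=300, step=30):
    print(f"t={t:>3}s active={users}")
```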

What Browser-Level Testing Actually Means (And Why It Can’t Easily Scale)

Selenium communicates with real browsers through the WebDriver protocol, executing genuine DOM interactions, triggering JavaScript event handlers, and rendering pages exactly as a user would see them. This fidelity is why Selenium is the industry default for functional regression suites – it catches bugs that exist only at the intersection of HTML, CSS, JavaScript, and browser-specific rendering behavior.

That fidelity carries a cost. Each Selenium session is a full browser process. A 32-node Selenium Grid running headless Chrome typically requires ~32 cores and ~48GB RAM to run 100 parallel sessions reliably. Scaling to 500 concurrent sessions means 5x the infrastructure, with diminishing reliability as browser processes compete for CPU time. As Vocke notes on MartinFowler.com, teams should “write very few high-level tests that test your application from end to end” [2] – the pyramid narrows at the top by design, not by accident. The Official Selenium Documentation details Grid architecture and node configuration for teams optimizing parallel execution.

Why Teams Conflate Them (And the Hidden Cost of That Confusion)

Both tools appear in QA toolchain discussions, both are free, and job postings routinely list “JMeter/Selenium experience required” as a single line item. The conflation feels natural – until it produces real failures.

A team attempting a 500-user load test with Selenium sessions will typically exhaust a 64GB RAM server in under 3 minutes, producing infrastructure failure data rather than application performance data. Conversely, a team using JMeter to validate a JavaScript-heavy SPA checkout flow will get clean HTTP 200 responses and sub-200ms latency while the actual user interface fails to render the cart total – because JMeter never executed the JavaScript that computes it.

The ISTQB classification isn’t academic pedantry. It’s a guardrail against wasting infrastructure budgets on the wrong tool and missing production-impacting bugs because the right tool was never deployed [1].

What JMeter Does Best: Load and Performance Testing at Protocol Scale

JMeter is genuinely the right tool for a well-defined set of jobs: broad protocol support, simulating large numbers of concurrent users, and validating server-side performance. Its limitations are just as real: no JavaScript execution, no rendering validation, and growing complexity at distributed scale. Both sides matter if the guidance is to be objective rather than promotional.

JMeter’s Protocol Support: HTTP Isn’t the Whole Story

Modern applications are multi-protocol. A single user action – say, submitting an order – might hit a REST API (HTTP), trigger a message queue write (JMS), execute a database query (JDBC), authenticate against a directory (LDAP), and transfer a receipt document (FTP). JMeter ships dedicated samplers for all of these, plus SMTP and raw TCP. SOAP calls run through the standard HTTP Request sampler, and WebSocket testing requires a third-party plugin. Testing only the HTTP layer misses database query latency spikes, message queue throughput degradation, and authentication service bottlenecks – all of which manifest as user-facing slowdowns.
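HTTP-only timing can hide the real bottleneck. To see how, split one "submit order" transaction into its protocol steps. This minimal sketch treats the steps as sequential. The step descriptions and timings are hypothetical illustration values, not measurements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    protocol: str       # e.g. "HTTP", "JDBC"
    description: str
    elapsed_ms: float


def bottleneck(steps: list) -> Step:
    """Return the step that contributes the most latency."""
    return max(steps, key=lambda s: s.elapsed_ms)


def share_by_protocol(steps: list) -> dict:
    """Fraction of total transaction time spent in each protocol."""
    total = sum(s.elapsed_ms for s in steps)
    shares = {}
    for s in steps:
        shares[s.protocol] = shares.get(s.protocol, 0.0) + s.elapsed_ms / total
    return shares


# Hypothetical timings for one order submission under load.
order_steps = [
    Step("HTTP", "POST /api/orders", 45.0),
    Step("LDAP", "authenticate user", 30.0),
    Step("JDBC", "insert order rows", 310.0),
    Step("JMS", "enqueue fulfilment message", 20.0),
    Step("FTP", "upload receipt", 95.0),
]

worst = bottleneck(order_steps)
print(f"Bottleneck: {worst.protocol} ({worst.description}), {worst.elapsed_ms} ms")
for protocol, share in share_by_protocol(order_steps).items():
    print(f"{protocol}: {share:.0%}")
```

A test that timed only the HTTP step would report 45ms here, while most of the transaction time sits in the database write.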

Scripting in JMeter: Thread Groups, Samplers, and Assertions Explained

A JMeter test plan follows a structured component model. A realistic example for load testing a checkout API:

- Thread Group: 200 users, 45-second ramp-up, 10-minute sustained duration

- HTTP Header Manager: Authorization bearer token, Content-Type application/json

- HTTP Sampler: POST /api/v2/checkout with parameterized cart payload

- JSON Extractor: Capture orderId from response body

- Response Assertion: HTTP status 200 AND response time < 500ms

- Aggregate Report Listener: Capture p50, p90, p95, p99 latency and throughput
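The Response Assertion and Aggregate Report steps in this plan reduce to two things: a pass/fail rule for each sample, and a set of percentiles over all samples. Here is a minimal sketch using hypothetical sample data. It assumes a simple nearest-rank percentile, and JMeter's Aggregate Report may compute percentiles somewhat differently.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Sample:
    status: int       # HTTP status code
    elapsed_ms: int   # response time in milliseconds


def assertion_passes(sample: Sample, max_ms: int = 500) -> bool:
    """The plan's rule: HTTP 200 AND response time strictly under max_ms."""
    return sample.status == 200 and sample.elapsed_ms < max_ms


def percentile(values: list, pct: int) -> int:
    """Nearest-rank percentile: smallest value with >= pct% of data at or below it."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def aggregate_report(samples: list) -> dict:
    """Latency percentiles plus the share of samples failing the assertion."""
    times = [s.elapsed_ms for s in samples]
    failures = sum(not assertion_passes(s) for s in samples)
    report = {f"p{p}": percentile(times, p) for p in (50, 90, 95, 99)}
    report["error_rate"] = failures / len(samples)
    return report


# Hypothetical run: 100 samples at 5, 10, ..., 500 ms, all HTTP 200.
run = [Sample(200, 5 * i) for i in range(1, 101)]
print(aggregate_report(run))
```

The single 500ms sample fails the assertion because the threshold is strict ("under 500ms"). That gives an error rate of 1% even though every response returned HTTP 200.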

The GUI is powerful but dated. Newcomers often struggle with dynamic correlation – extracting session tokens from response headers and injecting them into subsequent requests. This learning curve is genuine and worth acknowledging; it’s one of the friction points that drives teams toward commercial platforms with automated correlation engines.
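Correlation is a two-step pattern. First, extract a dynamic value from one response. Then inject it into the next request. In JMeter, extractor post-processors do this, for example a Regular Expression Extractor pointed at response headers, which feeds a variable into a later sampler. Below is a minimal sketch of the same logic outside JMeter. It assumes a hypothetical `X-Auth-Token` response header and simplifies headers to a single dict.

```python
from typing import Optional

# Hypothetical header name; real applications use many different conventions.
TOKEN_HEADER = "X-Auth-Token"


def get_header(headers: dict, name: str) -> Optional[str]:
    """HTTP header names are case-insensitive, so compare them lower-cased."""
    wanted = name.lower()
    for key, value in headers.items():
        if key.lower() == wanted:
            return value
    return None


def extract_token(login_response_headers: dict) -> str:
    """Step 1 (extract): pull the dynamic token out of the previous response."""
    token = get_header(login_response_headers, TOKEN_HEADER)
    if not token:
        # Fail loudly: replaying an empty or stale token typically makes
        # every later request fail authentication in a confusing way.
        raise ValueError(f"{TOKEN_HEADER} missing from response")
    return token


def next_request_headers(token: str) -> dict:
    """Step 2 (inject): carry the token into the following request."""
    return {
        "Authorization": f"Bearer {token}",
        "Content-Type": "application/json",
    }


login_headers = {"content-type": "application/json", "x-auth-token": "a1b2c3d4"}
print(next_request_headers(extract_token(login_headers)))
```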

JMeter’s Real Limitations: Where It Hits a Wall

JMeter’s protocol-level architecture means it has zero visibility into client-side behavior. Consider a React SPA where the server returns a 150ms JSON response containing product data, but a client-side rendering bug causes the price display to take 3.2 seconds – or to render incorrectly. JMeter reports a passing test with sub-200ms latency. The user sees a broken page.

Additional friction points at enterprise scale: distributed testing across multiple load generators requires manual JMX file distribution and result aggregation; the built-in reporting generates functional but visually limited HTML dashboards; and extending protocol support beyond native samplers requires JMeter plugin development in Java – not trivial for teams without JVM expertise. Understanding the different types of performance testing, and where JMeter fits among them, helps teams avoid mismatches between tool and test objective.
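Result aggregation is the manual step teams most often end up scripting themselves. Here is a minimal sketch that assumes each load generator wrote a CSV results file with a header row. JMeter's default CSV output includes `elapsed`, `label` and `success` columns, but the exact column set depends on your `jmeter.save.saveservice.*` properties. The sample rows below are hypothetical.

```python
import csv
import io
import math
from collections import defaultdict


def merge_results(csv_texts: list) -> dict:
    """Pool per-generator CSV results and summarise them per sampler label.

    Raw elapsed times are pooled before computing percentiles, because
    percentiles from separate generators cannot simply be averaged.
    """
    elapsed = defaultdict(list)
    errors = defaultdict(int)
    for text in csv_texts:
        for row in csv.DictReader(io.StringIO(text)):
            label = row["label"]
            elapsed[label].append(int(row["elapsed"]))
            if row["success"].strip().lower() != "true":
                errors[label] += 1

    summary = {}
    for label, times in elapsed.items():
        times.sort()
        rank = math.ceil(95 * len(times) / 100)  # nearest-rank p95
        summary[label] = {
            "count": len(times),
            "error_rate": errors[label] / len(times),
            "p95_ms": times[rank - 1],
        }
    return summary


# Hypothetical output from two load generators (columns trimmed for brevity).
GENERATOR_A = (
    "timeStamp,elapsed,label,responseCode,success\n"
    "1700000000000,120,checkout,200,true\n"
    "1700000000100,480,checkout,200,true\n"
)
GENERATOR_B = (
    "timeStamp,elapsed,label,responseCode,success\n"
    "1700000000050,95,checkout,200,true\n"
    "1700000000150,1500,checkout,500,false\n"
)

print(merge_results([GENERATOR_A, GENERATOR_B]))
```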

What Selenium Does Best: Browser-Level Functional Validation

Selenium deserves the same depth and objectivity. Its strengths are cross-browser functional automation, DOM interaction, validation of JavaScript execution, and UI workflow testing, which is why it is the industry default for functional regression suites. Its resource intensity is a design trade-off, not a flaw: it buys real-browser fidelity. That same trade-off sets its scaling limits.

Selenium is irreplaceable for testing scenarios where the correctness of user-visible behavior depends on client-side execution. A concrete example: an e-commerce site where promotional pricing is computed client-side – the server returns a base price of $99.99, and JavaScript applies a 20% discount after a coupon code triggers an event handler. Selenium validates that the displayed price updates to $79.99, that the “Apply” button disables after use, and that the cart total recalculates correctly. JMeter’s HTTP sampler would see a 200 response with the base price JSON and report success – completely missing the rendering logic that the customer actually experiences.
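The assertion Selenium makes here compares text read from the rendered page with an expected value computed independently. Below is a minimal sketch of that comparison logic. It assumes prices render as strings like `$79.99` and that the business rule rounds half-up to the cent (also an assumption). In a real test, the displayed text would come from a WebDriver element, for example a `WebElement`'s `.text` property in the Python bindings.

```python
from decimal import Decimal, ROUND_HALF_UP

CENT = Decimal("0.01")


def expected_discounted_price(base: Decimal, discount_percent: int) -> Decimal:
    """Expected price after a percentage discount, rounded half-up to cents."""
    discounted = base * (Decimal(100) - Decimal(discount_percent)) / Decimal(100)
    return discounted.quantize(CENT, rounding=ROUND_HALF_UP)


def parse_displayed_price(text: str) -> Decimal:
    """Turn rendered text such as '$1,079.99' into a Decimal."""
    cleaned = text.strip().replace("$", "").replace(",", "")
    return Decimal(cleaned)


def price_display_correct(displayed_text: str, base: Decimal,
                          discount_percent: int) -> bool:
    return parse_displayed_price(displayed_text) == expected_discounted_price(
        base, discount_percent)


base_price = Decimal("99.99")  # what the server's JSON returns
print(expected_discounted_price(base_price, 20))        # 79.99
print(price_display_correct("$79.99", base_price, 20))  # True: JS applied the coupon
print(price_display_correct("$99.99", base_price, 20))  # False: page still shows base price
```

`Decimal` avoids binary floating-point surprises in currency arithmetic. A protocol-level check of the JSON would only ever see the `99.99` base value.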

Selenium’s Scaling Ceiling: Why Browser Sessions Are Expensive

Each Selenium session instantiates a complete browser runtime. Even with headless Chrome, each instance consumes ~120 – 180MB RAM and measurable CPU. Selenium Grid distributes sessions across nodes, but the economics are unfavorable for load simulation: running 1,000 concurrent browser sessions requires infrastructure that costs 50 – 100x what a protocol-level tool needs for equivalent virtual user counts.

This resource cost is justified for what Selenium is designed to do – parallel functional regression across browsers and environments. It’s not justified for simulating 5,000 concurrent users hitting a login endpoint. Treating Selenium as a load tool doesn’t just waste money; it produces unreliable data because browser-process resource contention distorts timing measurements.

The Decision Matrix: When to Use JMeter, When to Use Selenium, When to Use Both

DORA’s research identifies test automation as a key technical capability for continuous delivery, with teams that implement comprehensive automated test suites showing “improved software stability, reduced team burnout, and lower deployment pain” [3]. The question isn’t whether to automate – it’s which tool maps to which test objective.

| Testing Scenario | Recommended Tool | Key Metric to Capture |
|---|---|---|
| Validate REST API handles 1,000 concurrent users | JMeter | p95 latency, throughput (req/s), error rate |
| Test checkout flow across Chrome, Firefox, Safari | Selenium | Pass/fail per browser, DOM state assertions |
| Measure database query performance under load | JMeter (JDBC sampler) | Query execution time at p99, connection pool saturation |
| Validate JavaScript-rendered price calculations | Selenium | Displayed values vs. expected after JS execution |
| Simulate Black Friday traffic spike on API layer | JMeter | Max throughput before error rate exceeds 1% |
| Confirm SPA renders correctly under 200 concurrent sessions | WebLOAD with Selenium scripts | Browser-rendered p95 latency, client-side error count |
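The matrix comes down to two questions. Does the assertion depend on client-side rendering? Does the scenario need concurrent load? Here is a minimal sketch that encodes that logic with hypothetical field names. It restates the table and does not replace judgment about individual scenarios.

```python
from dataclasses import dataclass
from enum import Enum


class Tool(Enum):
    JMETER = "JMeter (protocol-level load)"
    SELENIUM = "Selenium (browser-level functional)"
    BROWSER_LOAD = "Browser-level load (e.g. WebLOAD with Selenium scripts)"
    OUT_OF_SCOPE = "Outside this matrix"


@dataclass(frozen=True)
class Scenario:
    name: str
    needs_rendered_ui: bool   # assertion depends on DOM state / JS execution
    needs_concurrency: bool   # scenario simulates many simultaneous users


def recommend(s: Scenario) -> Tool:
    if s.needs_rendered_ui and s.needs_concurrency:
        return Tool.BROWSER_LOAD
    if s.needs_rendered_ui:
        return Tool.SELENIUM
    if s.needs_concurrency:
        return Tool.JMETER
    return Tool.OUT_OF_SCOPE


rows = [
    Scenario("REST API, 1,000 concurrent users", False, True),
    Scenario("Checkout across Chrome, Firefox, Safari", True, False),
    Scenario("SPA rendering under 200 concurrent sessions", True, True),
]
for row in rows:
    print(f"{row.name}: {recommend(row).value}")
```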

Use JMeter When: Server-Side Performance Is the Question

Four scenarios where JMeter is the clear choice:

- Pre-launch capacity validation: Confirm that /api/search keeps p95 latency under 400ms with 1,000 concurrent users before a product launch.

- API SLA regression testing: Verify that a deployment hasn’t degraded /api/checkout response time beyond the 300ms SLA threshold under 500 sustained users.

- Database stress testing: Use the JDBC sampler to measure query execution time when 200 concurrent connections hit the order-history table.

- Message queue throughput: Validate JMS consumer processing rates under peak message volumes.

Use Selenium When: User-Facing Behavior and Browser Reality Are the Question

Selenium is the right tool when correctness depends on what the user sees, not just what the server returns. The canonical failure scenario: a server returns HTTP 200 with valid JSON, but a JavaScript rendering error causes the shopping cart to display empty. JMeter passes this test. Selenium catches it.

Use Selenium for cross-browser regression testing suites, JavaScript-triggered workflow validation, visual state verification after AJAX content loading, and any test where the assertion requires DOM inspection rather than HTTP response parsing.
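Verifying state after AJAX loading depends on waiting for a condition rather than sleeping for a fixed time. Selenium's Python bindings provide this through explicit waits (`WebDriverWait` together with `expected_conditions`). The polling pattern underneath can be sketched without a browser. In this sketch, the hypothetical `cart_total_text` callable stands in for a DOM query.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def wait_until(condition: Callable[[], Optional[T]], timeout_s: float = 10.0,
               poll_s: float = 0.5) -> T:
    """Poll `condition` until it returns a truthy value or `timeout_s` elapses.

    Re-checks page state on a short interval instead of sleeping for a
    fixed, guessed duration.
    """
    deadline = time.monotonic() + timeout_s
    while True:
        result = condition()
        if result:
            return result
        if time.monotonic() >= deadline:
            raise TimeoutError(f"condition not met within {timeout_s}s")
        time.sleep(poll_s)


# Stand-in for a DOM query: the cart total "appears" on the third check,
# as if an AJAX response arrived between polls.
calls = {"count": 0}


def cart_total_text() -> Optional[str]:
    calls["count"] += 1
    return "$79.99" if calls["count"] >= 3 else None


print(wait_until(cart_total_text, timeout_s=2.0, poll_s=0.01))
```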

Use Both When: Full-Stack Coverage Is the Goal

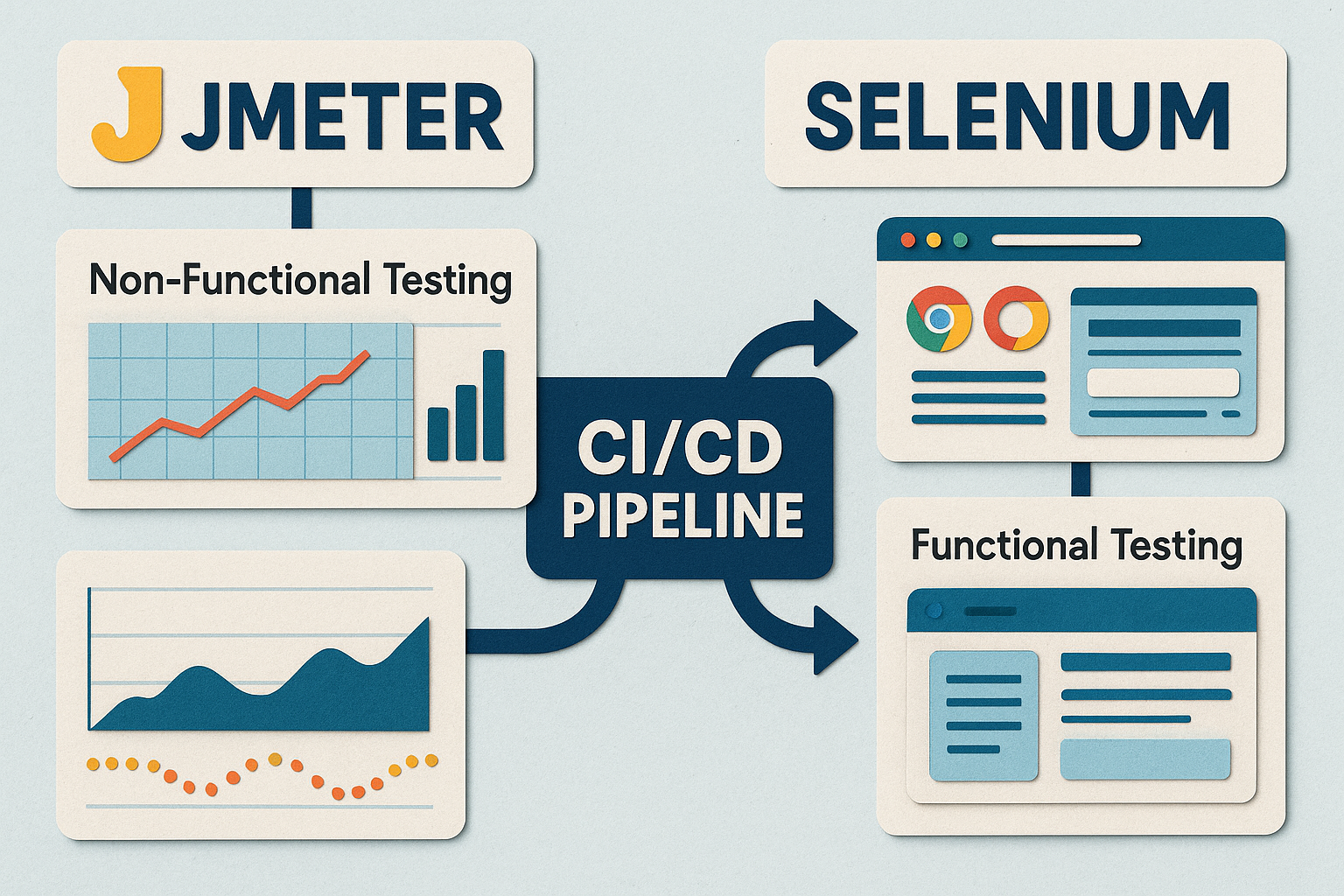

DORA’s continuous delivery research explicitly places “nonfunctional tests such as performance tests” inside the deployment pipeline alongside functional tests [4]. A concrete dual-tool pipeline:

- Stage 1 (Merge to main): Selenium runs 150 functional regression tests. Gate: 100% pass rate required.

- Stage 2 (Merge to release branch): JMeter runs a 500-user load test against the API tier. Gate: p95 < 300ms, error rate < 0.5%.

- Stage 3 (Pre-staging): Both gates must pass before deployment proceeds.
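A CI job can evaluate these three stages as gate logic over test output. Below is a minimal sketch. It assumes the Selenium stage reports pass/fail counts and the JMeter stage reports a p95 latency and an error rate. The thresholds match the stages above, while the type and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FunctionalResult:
    passed: int
    total: int


@dataclass(frozen=True)
class LoadResult:
    p95_ms: float
    error_rate: float  # 0.004 means 0.4%


def functional_gate(r: FunctionalResult) -> bool:
    """Stage 1: every Selenium regression test must pass."""
    return r.total > 0 and r.passed == r.total


def load_gate(r: LoadResult, max_p95_ms: float = 300.0,
              max_error_rate: float = 0.005) -> bool:
    """Stage 2: the JMeter API-tier load test must meet both budgets."""
    return r.p95_ms < max_p95_ms and r.error_rate < max_error_rate


def may_deploy(functional: FunctionalResult, load: LoadResult) -> bool:
    """Stage 3: both gates must pass before deployment proceeds."""
    return functional_gate(functional) and load_gate(load)


healthy_load = LoadResult(p95_ms=280.0, error_rate=0.002)
print(may_deploy(FunctionalResult(150, 150), healthy_load))  # True
print(may_deploy(FunctionalResult(149, 150), healthy_load))  # False: one UI test failed
print(may_deploy(FunctionalResult(150, 150), LoadResult(310.0, 0.002)))  # False: p95 over budget
```

In a pipeline, the final decision would usually become the process exit code (for example `sys.exit(0 if may_deploy(...) else 1)`). That way the CI system blocks the deployment step on failure.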

This layered approach follows the logic of the Practical Test Pyramid (Vocke, on MartinFowler.com) [2]: fast tests that bypass the browser make up the broad lower layers, and a small number of browser-level tests sit at the top, each layer catching failures the other cannot. For practical guidance on embedding these gates into your CI/CD workflow, see our guide on integrating performance testing in CI/CD pipelines.

The Integration Pattern: Running Selenium Scripts at Load Testing Scale

For JavaScript-heavy SPAs – React, Angular, Vue applications where the client does significant rendering and computation – server-side metrics alone are insufficient. A React-based e-commerce site where cart totals, promotional pricing, and inventory availability are computed client-side can show 150ms server response times while actual browser render time degrades to 2.3 seconds under concurrent load. Only browser-level load testing surfaces this degradation. DORA’s test automation research reinforces the principle: “effective test suites are reliable – tests find real failures and only pass releasable code” [3].
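You can only measure the gap described here when each sample carries both timings. Below is a minimal sketch that assumes a browser-level tool records, for each sample, a server response time and a total browser-rendered time. The sample values mirror the 150ms versus 2.3-second example and are illustrative. The threshold constants are hypothetical.

```python
import math


def p95(values: list) -> float:
    """Nearest-rank 95th percentile."""
    ordered = sorted(values)
    return ordered[math.ceil(95 * len(ordered) / 100) - 1]


def client_side_ms(server_ms: float, rendered_ms: float) -> float:
    """Time between the server's response and a fully rendered page."""
    return max(0.0, rendered_ms - server_ms)


# Illustrative (server_ms, rendered_ms) pairs under concurrent load.
samples = [(150.0, 2300.0)] * 19 + [(160.0, 2450.0)]

server_p95 = p95([server for server, _ in samples])
client_p95 = p95([client_side_ms(server, rendered) for server, rendered in samples])

SERVER_SLA_MS = 300.0      # hypothetical server-side threshold
CLIENT_BUDGET_MS = 1000.0  # hypothetical client-side rendering budget

print(f"server p95 {server_p95:.0f} ms, within SLA: {server_p95 < SERVER_SLA_MS}")
print(f"client p95 {client_p95:.0f} ms, within budget: {client_p95 < CLIENT_BUDGET_MS}")
```

A protocol-level test would report only the first line, which passes. The degradation appears only in the second line.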

Why You’d Want to Run Selenium Scripts as Load Tests in the First Place

The business case is specific. SPAs and other JavaScript-heavy applications can pass every JMeter performance threshold while still delivering a degraded experience under load, because the bottleneck is client-side rendering rather than the server. This matters most for e-commerce checkout flows, real-time dashboards, and React, Angular, or Vue applications where client-side JavaScript does significant work.

How WebLOAD Executes Selenium Scripts at Scale: The Technical Approach

WebLOAD natively imports existing Selenium WebDriver scripts and executes them as load test scenarios with controlled concurrency, ramp-up patterns, and integrated performance metric collection – capturing both server response time and browser-rendered latency in the same test run. Teams that have invested weeks building Selenium functional suites can repurpose those scripts for performance validation without rewriting them in a different tool’s scripting language.

A practical example: a team with 80 Selenium scripts for functional regression configured WebLOAD to run 50 concurrent browser sessions with a 30-second ramp-up, collecting p95 and p99 browser-render latency alongside server response time. The conversion effort was measured in hours, not the weeks that rewriting from scratch in JMeter would have required. For the full technical walkthrough, see “Executing Selenium Scripts in WebLOAD” and “How QA Teams Extend Selenium for Scalable Load and Functional Testing.”

Resource Optimization: Making Browser-Level Load Testing Economically Viable

Browser-level load testing is expensive, but the cost can be managed. Practical strategies include running headless Chrome instead of a full browser (roughly 40 – 60% less RAM), using cloud-based load injectors for elastic scaling, combining browser-level scripts for critical user journeys with protocol-level simulation for background load, and managing test scope by deciding which flows genuinely need browser validation and which can be covered by JMeter.
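The economics of a hybrid approach depend on how many virtual users actually need a real browser. Below is a minimal sketch that uses per-user figures from the ranges quoted earlier: about 150MB per headless browser session and 2MB per protocol-level thread. The 5,000-user total and the 2% browser share are hypothetical.

```python
def memory_estimate_mb(total_users: int, browser_share: float,
                       browser_mb: float = 150.0, protocol_mb: float = 2.0) -> float:
    """Rough memory estimate for a mixed browser/protocol load test.

    browser_share: fraction of virtual users driven through headless browsers.
    The remaining users are lightweight protocol-level threads.
    """
    if not 0.0 <= browser_share <= 1.0:
        raise ValueError("browser_share must be between 0 and 1")
    browser_users = round(total_users * browser_share)
    protocol_users = total_users - browser_users
    return browser_users * browser_mb + protocol_users * protocol_mb


TOTAL_USERS = 5_000
all_browser_mb = memory_estimate_mb(TOTAL_USERS, browser_share=1.0)
hybrid_mb = memory_estimate_mb(TOTAL_USERS, browser_share=0.02)

print(f"All users in browsers: {all_browser_mb / 1024:,.0f} GB")
print(f"Hybrid, 2% in browsers: {hybrid_mb / 1024:,.1f} GB")
```

Under these assumptions, the hybrid mix needs roughly one-thirtieth of the memory of an all-browser test. It still exercises real rendering on the critical journeys.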

Six Mistakes Teams Make When Choosing Between JMeter and Selenium

With the framework in place, the most common mistakes become easy to recognize. They range from using Selenium for load testing to ignoring protocol-level testing opportunities entirely.

References and Authoritative Sources

- [1] ISTQB. (n.d.). ISTQB Certified Tester Foundation Level Syllabus – Glossary: Functional Testing, Non-Functional Testing. International Software Testing Qualifications Board. Retrieved from https://glossary.istqb.org/

- [2] Vocke, H. (2018). The Practical Test Pyramid. MartinFowler.com (Thoughtworks). Retrieved from https://martinfowler.com/articles/practical-test-pyramid.html

- [3] DORA / Google Cloud. (n.d.). Capabilities: Test Automation. DevOps Research and Assessment. Retrieved from https://dora.dev/capabilities/test-automation/

- [4] DORA / Google Cloud. (n.d.). Capabilities: Continuous Delivery. DevOps Research and Assessment. Retrieved from https://dora.dev/capabilities/continuous-delivery/