Shift left testing is the practice of integrating testing activities, including performance and load testing, as early as possible in the software development lifecycle, moving quality validation from late-stage environments like staging and UAT toward requirements, design, and coding phases where defects cost a fraction of their post-release price.

That fraction is not a figure of speech. According to NIST-commissioned research, a defect caught after release costs 30 times more to remediate than one caught during requirements gathering [1]. Multiply that across every slow database query, every unindexed API endpoint, and every memory leak that slips through to production, and the cumulative cost becomes one of the largest controllable expenses in an engineering budget.

If you’re a QA lead, DevOps manager, or performance engineer who has ever watched a p99 latency spike surface for the first time during a pre-launch load test, or worse, from a production incident alert at 2 a.m., you already understand the problem viscerally. The question is how to structurally prevent it.

This guide delivers the implementation specifics that most shift left resources skip. You’ll find: the CMU Software Engineering Institute’s four-type taxonomy for shift left testing, a side-by-side comparison of traditional versus shift left approaches with quantified cost differences, a six-step implementation playbook with measurable success criteria for each step, concrete CI/CD pipeline integration patterns with CLI triggers and automated regression gates, and a FAQ section that addresses the scenarios where shifting left is not the right call. Jump to the section you need, or read through for the full picture.

- Shift Left Testing, Defined: One Sentence That Changes How You Build Software

- The Late-Defect Tax: What Late-Stage Bug Discovery Is Actually Costing Your Team

- The Four Types of Shift Left Testing (CMU SEI Framework Explained)

- Type 1. Traditional Shift Left: Structured Testing Earlier in the Waterfall

- Type 2. Incremental Shift Left: Testing in Sync with Agile Sprints

- Type 3. Agile/DevOps Shift Left: Continuous Testing Baked Into the Pipeline

- Type 4. Model-Based Shift Left: Testing Against Specifications Before Code Exists

- Step-by-Step: How to Implement Shift Left Testing in Your SDLC

- How WebLOAD Enables Shift Left Performance Testing: CI/CD CLI Triggers and Regression Gates in Practice

- Frequently Asked Questions

- References and Authoritative Sources

Shift Left Testing, Defined: One Sentence That Changes How You Build Software

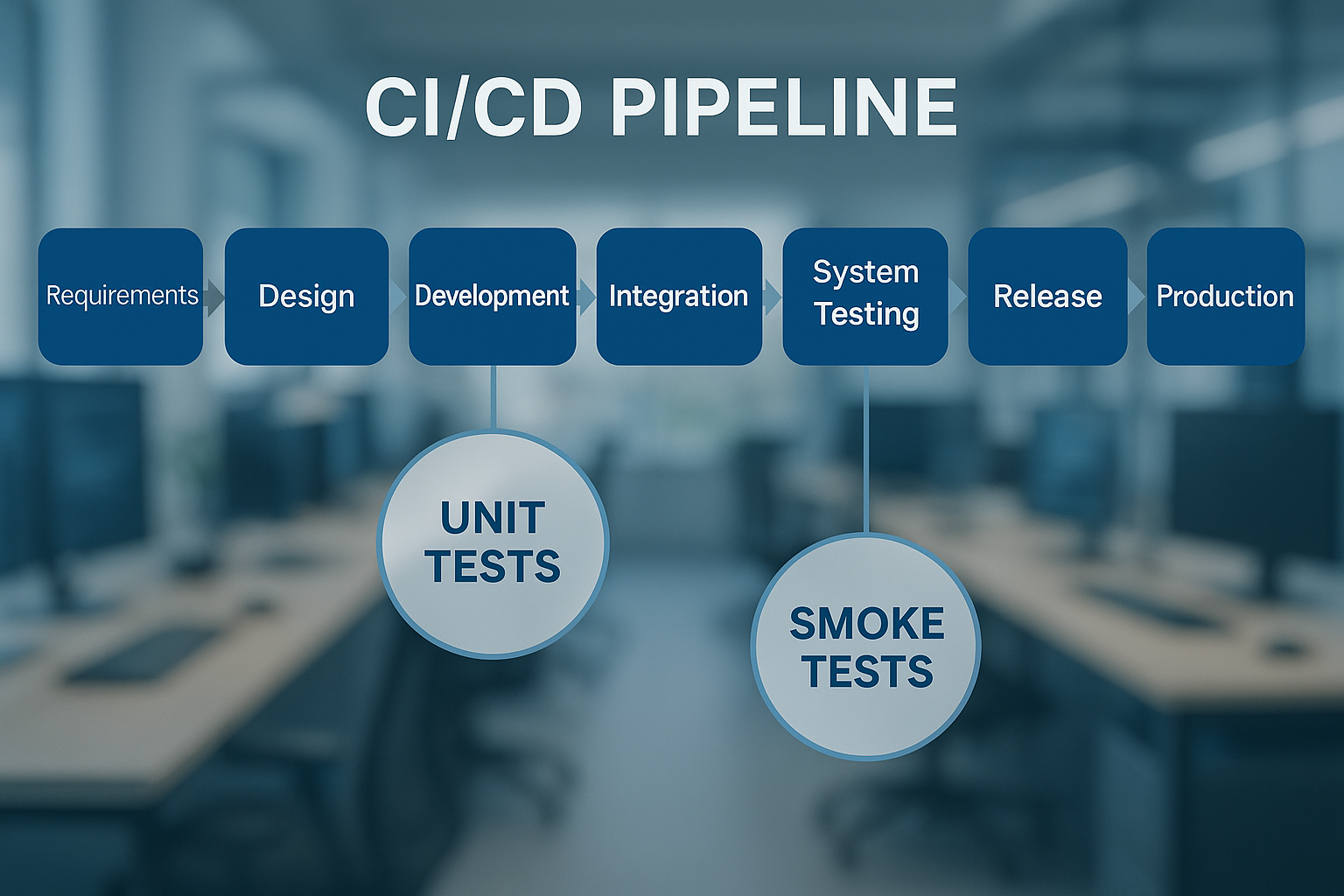

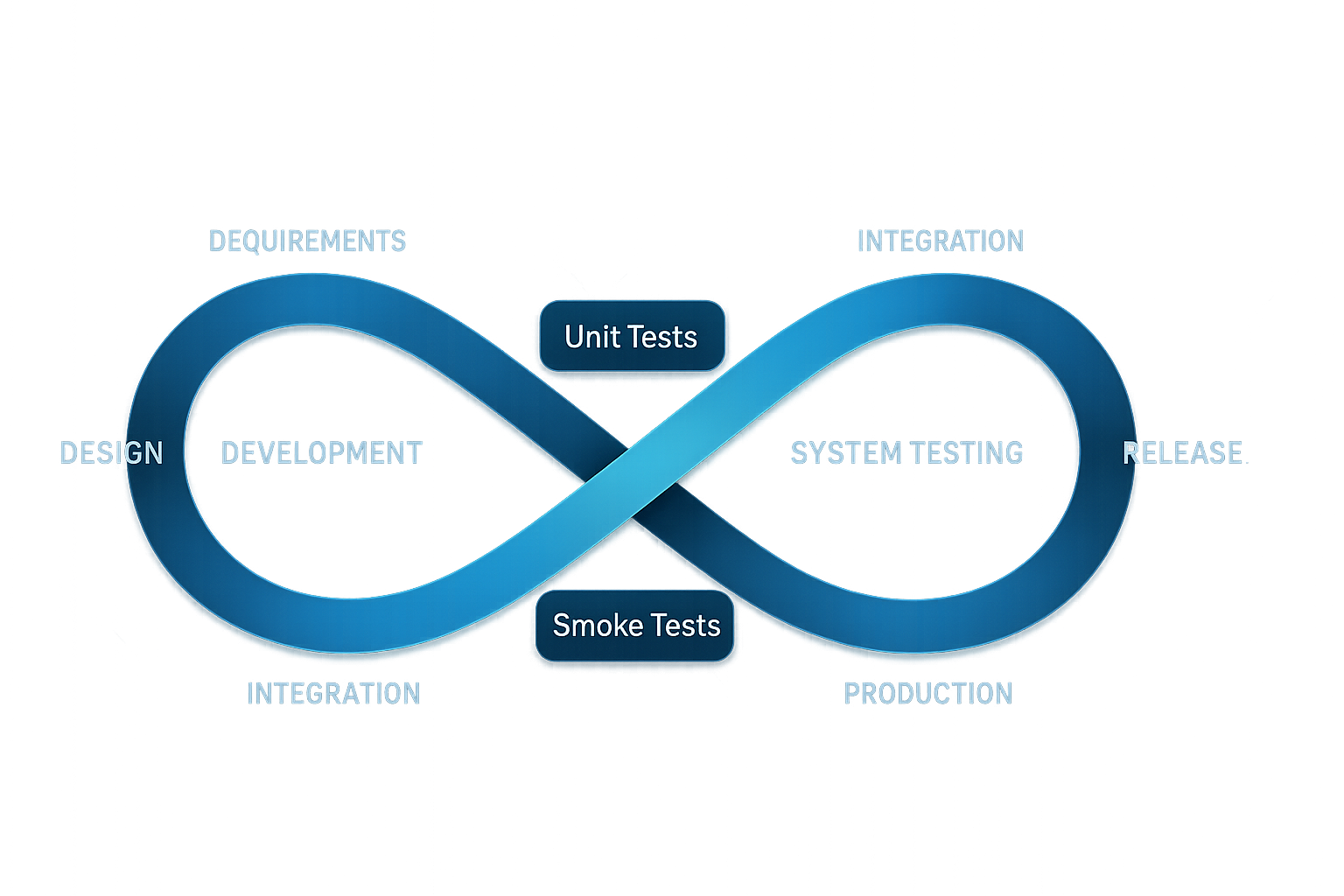

The definition above is designed to stand alone, but context matters. The term “shift left” references the conventional way teams visualize the SDLC as a left-to-right timeline: Requirements → Design → Development → Integration → System Testing → UAT → Release → Production. In this model, testing has historically clustered on the right, in the integration, system testing, and UAT phases. Shifting left means pulling specific quality activities toward the requirements and development phases on the left side of that timeline.

The Carnegie Mellon Software Engineering Institute (SEI) formalized this concept into a four-type taxonomy: Traditional, Incremental, Agile/DevOps, and Model-Based, each representing a different degree and method of moving testing earlier. We’ll unpack all four types in detail below. But first, it’s worth establishing what “left” actually means structurally and how it relates to its complement: shift right testing.

The SDLC Timeline Visualized: What ‘Left’ Actually Means

Consider the standard eight-stage pipeline that NIST SP 800-204C describes as the foundation of modern CI/CD workflows [2]:

1. Requirements (leftmost)

2. Design

3. Development / Coding

4. Integration / Build

5. System Testing

6. UAT / Staging

7. Release

8. Production (rightmost)

In a traditional model, the primary testing entry point sits at stages 5–6: system testing and UAT. That means defects introduced at stages 1–3 travel silently through the pipeline for weeks or months before detection.

In a shift left model, the testing entry point moves to stages 2–3: design reviews with testable acceptance criteria, unit and API contract tests during development, and automated performance smoke tests triggered at integration. The defect’s travel distance, and therefore its remediation cost, shrinks dramatically.

Shifting left is not about doing all testing at once. It’s about matching specific test types to the earliest stage where they can meaningfully detect the defect categories that stage introduces.

Shift Left vs. Shift Right: Two Sides of the Same Quality Coin

Shift left catches defects before they reach production. Shift right validates system behavior in production using real user traffic, canary releases, feature flags, and chaos engineering, an approach explored in more detail in Shift-Left/Right in Performance Engineering. They are complements, not competitors.

| Dimension | Shift Left | Shift Right |
|---|---|---|
| Primary goal | Prevent defects from reaching production | Detect production-only behaviors under real conditions |
| Example activity | Performance smoke test on every pull request | Canary release with p99 latency monitoring on 5% traffic |
| When it runs | Pre-merge, pre-deploy | Post-deploy, in production |

NIST SP 800-204C Section 3.3.4 explicitly covers automation of both pre-deployment testing and production-monitoring activities within CI/CD pipelines [2], validating the dual approach. The most mature engineering organizations treat both directions as pipeline-native activities, not afterthoughts.

The Late-Defect Tax: What Late-Stage Bug Discovery Is Actually Costing Your Team

The business case for shift left testing is not theoretical. NIST commissioned the Research Triangle Institute (RTI) to quantify the economic impact of inadequate software testing infrastructure. The resulting Planning Report 02-3 estimated the national annual cost at $59.5 billion, and identified the primary driver as defects discovered too late in the SDLC to be fixed cheaply [1].

The report’s Table 5-1 presents the relative cost multipliers, and these numbers are the foundation of every credible shift left ROI argument.

Defect Cost Multipliers by SDLC Stage: The Numbers You Need for Stakeholder Buy-In

| SDLC Stage When Defect Is Found | Relative Cost to Fix |
|---|---|
| Requirements | 1X |
| Coding / Unit Test | 5X |
| Integration | 10X |
| Beta / Early Customer | 15X |
| Post-Release / Production | 30X |

Source: NIST Planning Report 02-3, Table 5-1 [1]

To make this concrete: if your team’s average unit-test-stage bug fix costs $500 in developer time (investigation, fix, re-test), the same bug discovered in production costs approximately $3,000 (30X versus 5X on the scale above) when you factor in incident response, emergency patching, regression testing of the hotfix, deployment coordination, and potential SLA penalties. For performance defects specifically (slow queries, connection pool exhaustion, memory leaks under sustained load), the post-release multiplier often exceeds 30X because remediation triggers additional infrastructure scaling costs and user churn that functional bugs typically don’t.
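The arithmetic behind this kind of estimate can be sketched in a few lines. This is an illustrative calculation only: the multipliers come from the table above, while the stage keys and the `scale_cost` helper are hypothetical names introduced here. Note that the projected figure depends on which stage the known cost is anchored to.

```python
# Relative cost-to-fix multipliers, as listed in the table above.
COST_MULTIPLIER = {
    "requirements": 1,
    "coding": 5,
    "integration": 10,
    "beta": 15,
    "post_release": 30,
}


def scale_cost(known_cost: float, known_stage: str, target_stage: str) -> float:
    """Project a fix cost observed at one stage onto another stage."""
    ratio = COST_MULTIPLIER[target_stage] / COST_MULTIPLIER[known_stage]
    return known_cost * ratio


if __name__ == "__main__":
    # The same $500 fix, anchored at two different stages.
    print(scale_cost(500, "requirements", "post_release"))  # 15000.0
    print(scale_cost(500, "coding", "post_release"))        # 3000.0
```

A $500 fix at the requirements stage projects to $15,000 post-release, while a $500 fix at the coding stage projects to $3,000, so it is worth stating the anchor stage explicitly when presenting numbers to stakeholders.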

Practitioner Insight: Performance defects are uniquely punishing when caught late. A functional bug produces a wrong answer; a performance defect produces a slow answer, or no answer, for every concurrent user simultaneously. The blast radius and urgency multiplier for performance regressions make early detection disproportionately valuable compared to functional defects alone.

Traditional Testing vs. Shift Left Testing: A Side-by-Side Comparison

This table maps the structural differences across six dimensions that matter most to teams evaluating the transition:

| Dimension | Traditional Testing | Shift Left Testing |
|---|---|---|
| Testing entry point | System testing / UAT (stages 5–6) | Requirements review / Development (stages 2–3) |
| Defect discovery timing | Post-integration or later | At commit or pull request stage |
| Typical cost to fix | 10X–30X (NIST scale) | 1X–5X (NIST scale) |
| Team collaboration model | QA receives a build to test; siloed handoff | QA participates in sprint planning, design review, and code review |
| CI/CD compatibility | Low: manual test execution, batch-oriented | Native: automated triggers, pipeline-integrated gates |
| Time-to-release impact | Testing is the bottleneck; releases wait for test cycles | Testing runs in parallel with development; releases blocked only by failing gates |

NIST SP 800-204C frames the transition from large-batch release cycles to CI/CD as requiring “changes in the structure of a company’s IT department and its workflow” [2], not just tool adoption. The shift left comparison table above reflects that structural transformation.

The Four Types of Shift Left Testing (CMU SEI Framework Explained)

The Carnegie Mellon Software Engineering Institute categorizes shift left testing into four types based on how aggressively and structurally testing is moved earlier.

Type 1. Traditional Shift Left: Structured Testing Earlier in the Waterfall

Traditional shift left takes a phase-gated testing approach but moves its entry point earlier within the same model. Teams still follow a sequential lifecycle, but test planning, test case authoring, and test environment preparation begin during design rather than after coding.

Before: Integration test cases are written after the code freeze, based on whatever documentation developers produced during development.

After: Integration test cases are authored during the technical design review, based on design documents and API contracts. When code arrives, tests are already waiting.

This is the most modest shift and suits teams transitioning from classic waterfall models that cannot yet adopt continuous delivery. Even this level of shift catches defects at the 5X–10X cost point rather than 30X, a meaningful ROI without requiring full DevOps transformation [1].

Type 2. Incremental Shift Left: Testing in Sync with Agile Sprints

Incremental shift left aligns testing cadence with iterative delivery. Each sprint or iteration includes its own testing activities, so defects are caught within the sprint that introduced them rather than accumulating across multiple sprints.

A typical sprint workflow looks like:

- Sprint planning includes test scenario identification alongside feature stories

- Developers write unit tests (TDD or test-alongside) during implementation

- API contract tests validate inter-service communication for the sprint’s features

- Sprint review includes a testing summary with defect metrics and coverage data

This prevents the common failure mode where a regression introduced in Sprint 1 surfaces only during Sprint 5’s regression cycle, by which point the original developer has lost context, and the fix costs 3–5X more in investigation time alone. NIST SP 800-204C’s CI/CD model directly enables this cadence by supporting continuous integration with automated feedback mechanisms [2].

Type 3. Agile/DevOps Shift Left: Continuous Testing Baked Into the Pipeline

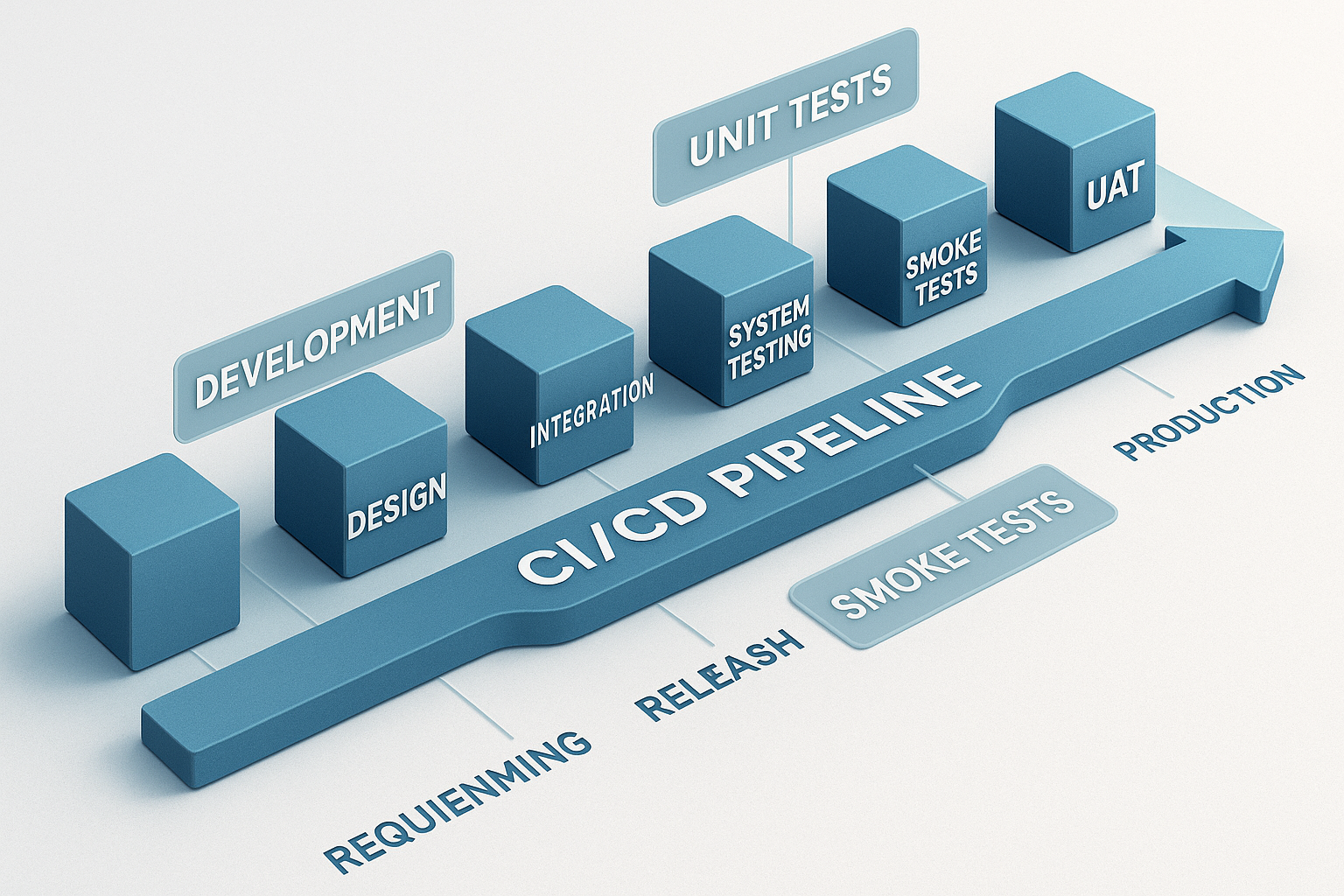

This is the operationally mature form: every code commit triggers automated test execution within the CI/CD pipeline, and quality gates prevent progression to the next stage if thresholds are not met. The pipeline sequence typically looks like:

Commit → Build → Unit Tests → API/Contract Tests → Performance Smoke Test → Quality Gate Decision → Deploy to Staging (if passed)

NIST SP 800-204C defines CI/CD pipelines as “workflows for taking the developer’s source code through various stages, such as building, testing, packaging, deployment, and operations supported by automated tools with feedback mechanisms” [2]. Section 3.3.4 of the same publication explicitly identifies “testing of services that are resource-intensive and likely to be performance bottlenecks” as a target for automation within these pipelines [2].

WebLOAD by RadView is built for this type: its CLI triggers load test scenarios directly from pipeline definitions in Jenkins, GitHub Actions, or Azure DevOps, returning exit codes that feed directly into quality gate decisions. We’ll detail the mechanics in the implementation section below.

Type 4. Model-Based Shift Left: Testing Against Specifications Before Code Exists

Model-based shift left is the most advanced type: tests are generated and executed against formal models, specifications, or contracts before implementation code is written. For API-first development, this means writing consumer-driven contract tests against an OpenAPI/Swagger specification during design, then validating that the implemented API conforms to the contract at build time.

This approach requires the highest team maturity and tooling investment, but it catches defects at the 1X cost point on the NIST multiplier scale, the requirements and design stage [1]. NIST SP 800-204C Section 4.8 covers SAST (static analysis) tools as a complementary shift-far-left technique that pairs naturally with model-based approaches: validating code against security and quality rules before it ever executes [2].

For readers exploring formal specification-driven development further, the NIST Secure Software Development Framework (SSDF) provides the federal standards context for defining testable requirements early in the development process.

Step-by-Step: How to Implement Shift Left Testing in Your SDLC

This section delivers the concrete playbook. Each step includes a specific action, the artifact or tool category involved, and a measurable success indicator.

Steps 1–3: Foundation. Team Structure, Test Strategy, and Requirements Instrumentation

Step 1: Restructure team roles so QA participates in requirements and design.

Reassign QA engineers as voting members of sprint planning. Their job at this stage is to identify untestable requirements and flag missing acceptance criteria before development begins. NIST SP 800-204C acknowledges that CI/CD adoption “requires changes in the structure of a company’s IT department and its workflow” [2]; this is the first structural change.

Success indicator: 100% of sprint planning sessions include a QA engineer; zero user stories enter development without at least one testable acceptance criterion.

Step 2: Map specific test types to specific SDLC stages.

Define which tests are blocking (must pass to proceed) versus advisory (reported but non-blocking). Example mapping:

- Commit stage: Unit tests (blocking), static analysis (advisory)

- Build stage: API contract tests (blocking), dependency vulnerability scan (advisory)

- Integration stage: Performance smoke test, 50 VUs for 3 minutes (blocking if p95 > 500ms)

Success indicator: A documented test strategy matrix exists, reviewed quarterly, and enforced in pipeline configuration.
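One way to keep such a matrix enforceable is to store it as data that the pipeline reads, rather than as a wiki page. The sketch below is a hypothetical encoding of the example mapping above; the `Check` class, the `STRATEGY` dictionary, and the check names are illustrative assumptions, not part of any specific tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Check:
    name: str
    blocking: bool  # True: a failure stops the pipeline; False: advisory only


# Hypothetical encoding of the example stage-to-test mapping.
STRATEGY = {
    "commit": [Check("unit_tests", True), Check("static_analysis", False)],
    "build": [Check("api_contract_tests", True), Check("dependency_scan", False)],
    # The smoke test is reported as failed when p95 exceeds 500 ms.
    "integration": [Check("performance_smoke", True)],
}


def evaluate_stage(stage: str, results: dict[str, bool]) -> tuple[bool, list[str]]:
    """Return (may_proceed, advisory_warnings) for one stage.

    `results` maps check name to True if the check passed. A missing result
    counts as a failure, so a skipped blocking check cannot slip through.
    """
    may_proceed = True
    warnings = []
    for check in STRATEGY[stage]:
        if results.get(check.name, False):
            continue
        if check.blocking:
            may_proceed = False
        else:
            warnings.append(check.name)
    return may_proceed, warnings
```

For example, `evaluate_stage("commit", {"unit_tests": True, "static_analysis": False})` allows the pipeline to proceed and reports `static_analysis` as a warning, while a failed or missing `unit_tests` result blocks it.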

Step 3: Instrument requirements with testable acceptance criteria.

Convert vague requirements into executable test conditions at the story level, following the principles outlined in the non-functional requirements (NFRs) guide for performance testing.

- Before: “The page should load quickly.”

- After: “The product listing page must return HTTP 200 with p95 latency ≤ 800ms under 100 concurrent users, measured against the staging environment’s baseline dataset.”

Teams that define performance acceptance criteria at the requirements stage achieve the 1X defect cost point on the NIST scale for performance regressions, the most leveraged intervention available [1].

Success indicator: ≥80% of user stories include at least one quantified, measurable acceptance criterion.
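A quantified criterion like the “after” example can be turned directly into an executable check. The following is a minimal sketch, assuming latency samples and HTTP status codes have already been collected from a run at the stated concurrency; the function names are hypothetical, and the nearest-rank percentile method used here is one of several common definitions.

```python
def percentile(samples_ms: list[float], pct: int) -> float:
    """Nearest-rank percentile of the samples (pct is an integer from 1 to 100)."""
    if not samples_ms:
        raise ValueError("no latency samples")
    ordered = sorted(samples_ms)
    # Integer ceiling of pct/100 * n gives the 1-based rank.
    rank = (pct * len(ordered) + 99) // 100
    return ordered[rank - 1]


def meets_criterion(samples_ms: list[float], statuses: list[int],
                    p95_limit_ms: float = 800.0) -> bool:
    """True when every response was HTTP 200 and p95 latency is within the limit."""
    all_ok = all(status == 200 for status in statuses)
    return all_ok and percentile(samples_ms, 95) <= p95_limit_ms
```

With 20 samples, the 95th percentile under nearest-rank is the 19th value in sorted order, so two slow outliers out of 20 are enough to fail the criterion while a single one is not.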

Steps 4–6: Pipeline Integration. Automating Tests, Gates, and Feedback Loops

Step 4: Instrument the CI/CD pipeline to trigger tests automatically on every PR.

Configure your pipeline to execute the test types defined in Step 2, triggered by pull request events, a practice covered in depth in the guide to integrating performance testing in CI/CD pipelines. For performance testing, this means invoking a load testing tool via CLI from within the pipeline definition.

Success indicator: Zero PRs merged without at least unit test and API contract test execution; performance smoke tests run on all PRs touching API or database layers.
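Deciding whether a PR “touches API or database layers” usually comes down to matching changed file paths against a list of patterns. The sketch below illustrates the idea; the directory layout and the `PERF_SENSITIVE_PATTERNS` list are hypothetical and would need to reflect your own repository. Many CI systems offer an equivalent built-in path filter, which may be preferable where available.

```python
from fnmatch import fnmatch

# Hypothetical repository layout; adjust the patterns to your own tree.
PERF_SENSITIVE_PATTERNS = ["src/api/*", "src/db/*", "migrations/*.sql"]


def needs_perf_smoke(changed_files: list[str]) -> bool:
    """True if any changed file matches a performance-sensitive pattern.

    Note: in fnmatch, '*' also matches path separators, so 'src/api/*'
    covers nested files such as 'src/api/v1/search.py'.
    """
    return any(
        fnmatch(path, pattern)
        for path in changed_files
        for pattern in PERF_SENSITIVE_PATTERNS
    )
```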

Step 5: Define automated quality gates with quantified pass/fail thresholds.

Quality gates are the enforcement mechanism. Example thresholds for a performance gate:

- p99 response time must remain below 200ms

- Error rate must stay below 0.5%

- Throughput must not degrade more than 10% from the baseline established on the previous passing build

RadView’s platform enforces these thresholds natively: the CLI returns exit code 0 for pass and non-zero for failure, enabling the pipeline to block a merge automatically when performance regresses.

Practitioner Insight: Gates that enforce zero tolerance for any p95 deviation create alert fatigue and get bypassed within weeks. Gates that enforce a regression budget (e.g., no more than 5% latency increase from the established baseline) are sustainable and respected by development teams. Tune your thresholds based on historical variance, not aspirational targets.

Success indicator: Quality gates block at least one PR per sprint that would have introduced a measurable performance regression, confirming they’re both active and calibrated correctly.
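The three example thresholds in this step can be expressed as a small evaluation function. This is a sketch of the gate logic under stated assumptions, not a description of how any particular tool implements it: the `RunMetrics` class and function names are hypothetical, and the metrics are assumed to be already extracted from the test report.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RunMetrics:
    p99_ms: float
    error_rate_pct: float
    throughput_rps: float


def gate_violations(current: RunMetrics, baseline: RunMetrics,
                    p99_limit_ms: float = 200.0,
                    error_rate_limit_pct: float = 0.5,
                    max_throughput_drop_pct: float = 10.0) -> list[str]:
    """Return a human-readable list of threshold violations (empty means pass)."""
    if baseline.throughput_rps <= 0:
        raise ValueError("baseline throughput must be positive")
    violations = []
    if current.p99_ms >= p99_limit_ms:
        violations.append(f"p99 {current.p99_ms} ms is not below {p99_limit_ms} ms")
    if current.error_rate_pct >= error_rate_limit_pct:
        violations.append(f"error rate {current.error_rate_pct}% is not below {error_rate_limit_pct}%")
    drop_pct = (baseline.throughput_rps - current.throughput_rps) / baseline.throughput_rps * 100
    if drop_pct > max_throughput_drop_pct:
        violations.append(f"throughput dropped {drop_pct:.1f}% versus baseline")
    return violations


def exit_code(violations: list[str]) -> int:
    """Map the gate result onto the 0 / non-zero convention pipelines expect."""
    return 1 if violations else 0
```

Returning the list of violations, rather than a bare boolean, makes it straightforward to post the specific breached threshold back to the PR author.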

Step 6: Design feedback loops that deliver results within minutes, not hours.

Developers must see test results fast enough to act on them while the code is still in working memory. Target: pass/fail feedback within 5–10 minutes of commit for fast-feedback stages (unit, API, performance smoke). Achieve this through test parallelization, selective test execution (only test what changed), and direct notification to the PR author via pipeline comments or chat integrations.

Success indicator: Median time from commit to test feedback ≤ 10 minutes for the fast-feedback test suite.

Practitioner Insight: The most common implementation failure is adding shift left test stages without removing equivalent late-stage redundant testing. This creates pipeline bloat and slower total cycle time, the opposite of the shift left goal. For every test you add to an early stage, audit whether the same defect category is still being caught at a later stage and consider removing or reducing the late-stage duplicate.

How WebLOAD Enables Shift Left Performance Testing: CI/CD CLI Triggers and Regression Gates in Practice

Consider this scenario: a developer opens a pull request that modifies a product search API endpoint. The CI/CD pipeline builds the branch, runs unit and contract tests, and then triggers a WebLOAD performance smoke test (50 virtual users, 3-minute duration) targeting the modified endpoint on an ephemeral test environment. WebLOAD detects that p99 latency spiked from 180ms (baseline) to 340ms, an 89% regression caused by a missing database index on the new query path. The CLI returns a non-zero exit code, the pipeline blocks the merge, and the developer receives feedback within 8 minutes of the push.

That defect was caught at the 5X cost point (coding/integration) instead of the 30X post-release point [1], a 6X cost avoidance on remediation, before any customer was affected.

Triggering Load Tests Automatically: WebLOAD CLI Integration with Jenkins, GitHub Actions, and Azure DevOps

WebLOAD’s CLI interface accepts parameters that map directly to pipeline variables:

```shell
webloadcmd -script ./tests/perf/search_api.wlp \
  -env $TARGET_URL \
  -vu 50 \
  -duration 180 \
  -thresholds ./tests/perf/thresholds.yaml
```

Key parameters: script (path to the load test scenario), env (target environment URL), vu (virtual user count), duration (test length in seconds), and thresholds (SLA definition file specifying pass/fail criteria for p95, p99, error rate, and throughput).

In a GitHub Actions workflow, this integrates as a step:

```yaml
- name: Run performance smoke test
  run: |
    webloadcmd -script ./tests/perf/search_api.wlp \
      -env ${{ env.STAGING_URL }} \
      -vu 50 -duration 180 \
      -thresholds ./tests/perf/thresholds.yaml
```

If the thresholds are breached, the step exits non-zero, and the pipeline prevents the PR from merging. The same pattern works with a Jenkins shell step or an Azure DevOps command task.
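The exit-code contract that makes this work is independent of any particular tool: the pipeline step succeeds only if the child process returns 0. The sketch below illustrates that contract with a generic wrapper. The `run_gate` helper is a hypothetical name, and the demo uses a stand-in Python child process rather than a real load test command.

```python
import subprocess
import sys


def run_gate(command: list[str]) -> int:
    """Run a gate command and return its exit code unchanged."""
    completed = subprocess.run(command, check=False)
    return completed.returncode


if __name__ == "__main__":
    # Stand-in for a load test invocation: a child process that exits with status 3.
    demo_command = [sys.executable, "-c", "import sys; sys.exit(3)"]
    code = run_gate(demo_command)
    print("merge allowed" if code == 0 else f"merge blocked (exit {code})")
    # prints: merge blocked (exit 3)
```

A wrapper like this is only needed when you want to add logic around the tool, such as posting a PR comment; otherwise the CI system’s native handling of non-zero exit codes is sufficient.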

Performance testing becomes a first-class pipeline citizen, running on every PR that touches performance-sensitive code paths, just like unit tests.

Designing Automated Regression Gates: Defining Pass/Fail Thresholds That Stick

The threshold file referenced in the CLI command above is where the performance contract lives. A practical example:

```yaml
thresholds:
  p95_response_ms: 400
  p99_response_ms: 800
  error_rate_pct: 0.5
  throughput_rps_min: 120
  baseline_deviation_pct_max: 10
```

The baseline_deviation_pct_max parameter is particularly important: it compares the current run’s metrics against the last known-good baseline, allowing the gate to detect relative regressions even when absolute thresholds are still met. A query that slows from 50ms to 150ms is still “fast” in absolute terms but represents a 200% regression that may compound under higher load.
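The relative-regression check can be illustrated with a short calculation. This sketch shows one plausible interpretation of a baseline deviation limit (percentage increase over the baseline value); the function names are hypothetical, and the actual semantics of the threshold file are defined by the tool that reads it.

```python
def deviation_pct(baseline: float, current: float) -> float:
    """Relative change versus baseline, in percent; positive means slower."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (current - baseline) / baseline * 100


def regressed(baseline_ms: float, current_ms: float,
              absolute_limit_ms: float, max_deviation_pct: float) -> bool:
    """Fail on either an absolute breach or a relative regression beyond budget."""
    over_absolute = current_ms > absolute_limit_ms
    over_relative = deviation_pct(baseline_ms, current_ms) > max_deviation_pct
    return over_absolute or over_relative


if __name__ == "__main__":
    # 50 ms -> 150 ms stays under a 400 ms absolute limit but is a 200% regression.
    print(deviation_pct(50, 150))       # 200.0
    print(regressed(50, 150, 400, 10))  # True
```

Combining both conditions means a fast endpoint cannot triple in latency unnoticed, while a slow endpoint still has a hard ceiling.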

Frequently Asked Questions

When should you NOT shift left?

Shifting left is counterproductive when: (1) your team lacks the test infrastructure to produce reliable results at early stages, because flaky tests that block PRs without real signal erode trust faster than late testing does; (2) the cost of maintaining early-stage test environments exceeds the cost of the defects they catch, which is common for full-stack end-to-end tests that require production-scale data; (3) the defect category you’re targeting is fundamentally a production-only phenomenon (e.g., behavior under real geographic traffic distribution, third-party API rate limiting under peak load). In these cases, shift right testing (canary releases, synthetic monitoring, chaos engineering) is the appropriate strategy. The goal is not maximum leftward shift; it’s optimal defect detection placement per defect category.

How do you measure shift-left effectiveness?

Track four metrics: (1) Defect escape rate: the percentage of defects found in staging or production versus total defects found; target a quarter-over-quarter decrease. (2) Mean time to detect (MTTD): the average elapsed time between defect introduction (commit) and defect detection; shift left should compress this from days/weeks to minutes/hours. (3) Pipeline gate block rate: the percentage of PRs blocked by automated quality gates; a healthy rate is 5–15% (lower means gates are too loose or test coverage is thin; higher means gates are too aggressive or code quality is systemically poor). (4) Defect cost ratio: the average remediation cost for defects caught pre-merge versus post-release; use this to quantify ROI for stakeholder reporting.
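Two of these metrics are simple ratios that can be computed from issue-tracker and pipeline counts. The sketch below assumes those counts are already available; the function names and the band labels are hypothetical, and the 5–15% band is the one suggested in this answer.

```python
def defect_escape_rate(found_late: int, found_total: int) -> float:
    """Percentage of all defects first found in staging or production."""
    if found_total <= 0:
        raise ValueError("found_total must be positive")
    return found_late / found_total * 100


def gate_block_rate(blocked_prs: int, total_prs: int) -> float:
    """Percentage of PRs blocked by automated quality gates."""
    if total_prs <= 0:
        raise ValueError("total_prs must be positive")
    return blocked_prs / total_prs * 100


def classify_block_rate(rate_pct: float) -> str:
    """Place a block rate relative to the 5-15% band."""
    if rate_pct < 5:
        return "below band"  # gates may be too loose or coverage too thin
    if rate_pct > 15:
        return "above band"  # gates may be too aggressive
    return "healthy"
```

For instance, 12 late-found defects out of 48 total gives an escape rate of 25%, and 9 blocked PRs out of 90 gives a block rate of 10%, which falls inside the band.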

What’s the minimum viable shift left for performance testing?

Start with a single automated performance smoke test that runs on every PR touching API endpoints or database queries. Configuration: 25–50 virtual users, 2–3 minute duration, targeting a single critical user flow (e.g., login → search → checkout) on a dedicated test environment. Set pass/fail thresholds at 2X your production p95 baseline to avoid false positives during initial rollout. This takes one sprint to implement and catches the highest-impact performance regressions (unindexed queries, N+1 patterns, missing cache configurations) before merge. Scale from there.

Is 100% shift left coverage worth the investment?

No. Diminishing returns set in quickly. Unit tests and API contract tests are cheap to shift left: they run in milliseconds, require no infrastructure, and catch the most common defect categories. Full end-to-end performance tests under realistic load profiles are expensive to shift left because they require production-like environments, realistic test data, and longer execution times. The practical sweet spot for most teams: shift unit, contract, and smoke-level performance tests fully left (blocking on every PR); run comprehensive load and stress tests on a nightly or per-release cadence at the integration stage; reserve production-scale capacity tests for release candidates only. Optimize for signal quality per pipeline minute, not coverage percentage.

What role does AI play in shift left testing today?

AI-assisted capabilities in modern load testing platforms, including intelligent script correlation, anomaly detection in test results, and predictive baseline adjustment, reduce the manual effort required to maintain shift left pipelines. For example, anomaly detection can automatically flag a latency distribution shape change that a static threshold would miss. However, AI does not eliminate the need for human review of test design, threshold calibration, and result interpretation. Treat AI as a toil-reduction layer, not a replacement for engineering judgment.

Performance benchmarks and defect cost multipliers cited in this article are drawn from NIST-commissioned research and industry studies; actual results will vary based on team size, SDLC maturity, application complexity, and tooling configuration. This article includes references to WebLOAD by RadView as an illustrative implementation example; it is not a substitute for evaluating tools against your organization’s specific requirements.

References and Authoritative Sources

- [1] RTI for NIST. (2002). The Economic Impacts of Inadequate Infrastructure for Software Testing (NIST Planning Report 02-3). National Institute of Standards and Technology, U.S. Department of Commerce. Retrieved from https://www.nist.gov/system/files/documents/director/planning/report02-3.pdf

- [2] Chandramouli, R. (2022). Implementation of DevSecOps for a Microservices-based Application with Service Mesh (NIST Special Publication 800-204C). National Institute of Standards and Technology, U.S. Department of Commerce. Retrieved from https://nvlpubs.nist.gov/nistpubs/SpecialPublications/NIST.SP.800-204C.pdf