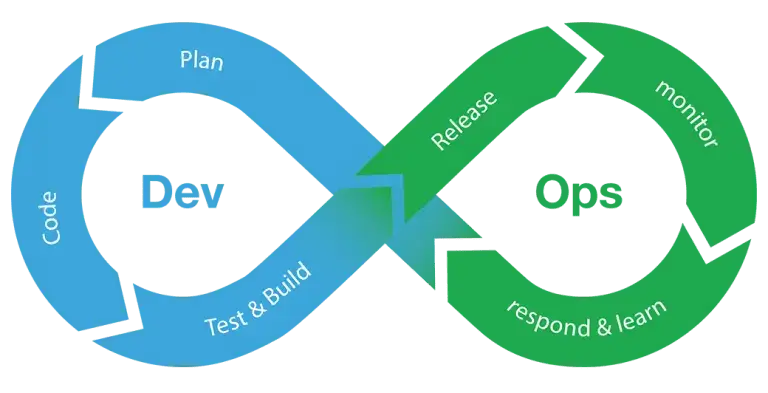

There is growing recognition that performance testing needs to be an integral part of DevOps, built into your CI/CD pipeline. This makes sense: leaving performance testing to the end of your release cycle puts you right back in the days of the waterfall approach. Isolating the root cause of a problem becomes more difficult as more changes accumulate, and any fix can trigger a chain reaction of additional QA cycles. If performance falls short at that point, it will very likely throw off the entire release schedule.

With that in mind, what does it take to make continuous performance testing part of DevOps? Here are some tips to get you started.

1. Walk before you run

Like any other tests, your performance tests will evolve throughout the development cycle. At the beginning of your project, performance testing may be limited to your APIs. As additional services are developed and your flows become functional, you can add them to the scope of your tests.

Continuously load testing all of your APIs and flows is probably an unrealistic goal, because these tests can take a long time and delay your pipeline. APIs and flows that are frequently used (e.g., login) and those that are part of critical user processes (e.g., completing a purchase) should probably be tested with each check-in, while others can be tested less frequently.
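One way to express this split is a small test catalog that the pipeline consults on each run. The following is a minimal sketch, not a WebLOAD feature: the test names, the `every_checkin` flag, and the `checkin`/`nightly` trigger names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PerfTest:
    name: str
    every_checkin: bool  # True for frequently used or business-critical flows


# Hypothetical catalog; replace with your own APIs and flows.
TESTS = [
    PerfTest("login_api", every_checkin=True),
    PerfTest("checkout_flow", every_checkin=True),
    PerfTest("profile_update", every_checkin=False),
    PerfTest("report_export", every_checkin=False),
]


def select_tests(trigger: str) -> list[str]:
    """Return the names of the tests to run for a pipeline trigger.

    'checkin' runs only the critical subset; 'nightly' runs everything.
    """
    if trigger == "checkin":
        return [t.name for t in TESTS if t.every_checkin]
    if trigger == "nightly":
        return [t.name for t in TESTS]
    raise ValueError(f"unknown trigger: {trigger}")
```

With this catalog, a check-in build would run only `login_api` and `checkout_flow`, while a nightly build would run all four tests.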

2. Redefine success criteria as you go

There is no single pass/fail rule, as each test has its own success criteria. As your project progresses, performance goals should be adjusted to reflect the evolution of the application. For example, a new activity added to a flow may require additional processing time, while improvements made to other functions may speed things up. Either kind of change calls for a reevaluation of your success criteria.
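Keeping the criteria as data, separate from the test logic, makes them easy to revise as the application changes. A minimal sketch, assuming hypothetical test names, metric names (`p95_ms`, `error_rate`), and limits:

```python
# Hypothetical per-test success criteria; every value is an upper limit.
CRITERIA = {
    "login_api": {"p95_ms": 300.0, "error_rate": 0.01},
    "checkout_flow": {"p95_ms": 1200.0, "error_rate": 0.005},
}


def evaluate(test_name: str, measured: dict) -> list[str]:
    """Return the metrics that exceed their limit (empty list means pass)."""
    limits = CRITERIA[test_name]
    return [metric for metric, limit in limits.items() if measured[metric] > limit]
```

When a new step is added to the checkout flow, only the `checkout_flow` entry needs to change; the other tests keep their own limits.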

3. Use your successful tests as a baseline

Once you have a successful continuous performance test, save the results as a baseline for future tests. You may use these results to redefine your success criteria, or just add them as additional comparative metrics.
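As an illustration, a baseline can be as simple as a JSON file of metrics from the last successful run. This sketch assumes that lower values are better for every metric; the file layout, the metric names, and the 10% tolerance are assumptions, not recommendations.

```python
import json
from pathlib import Path


def save_baseline(path, results: dict) -> None:
    """Store the metrics of a successful run as the new baseline."""
    Path(path).write_text(json.dumps(results, indent=2))


def regressions(path, current: dict, tolerance: float = 0.10) -> dict:
    """Return metrics that are worse than the baseline by more than `tolerance`.

    Each entry maps a metric name to (baseline_value, current_value).
    Metrics missing from the baseline are ignored.
    """
    baseline = json.loads(Path(path).read_text())
    return {
        metric: (baseline[metric], value)
        for metric, value in current.items()
        if metric in baseline and value > baseline[metric] * (1 + tolerance)
    }
```

A pipeline step could call `regressions()` after each run and fail the build, or merely report, when the returned dictionary is not empty.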

4. Compare apples to apples

Continuous changes to your test environment may result in performance variations. Failing to account for such changes could lead to misguided conclusions. For example, after installing a new server, your previous baseline results may no longer be relevant (which means it’s time to create a new baseline).
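One way to guard against comparing results across different environments is to store a fingerprint of the environment alongside each baseline. The sketch below is an assumption about how this could be done; the environment fields shown in the usage note are hypothetical.

```python
import hashlib
import json


def env_fingerprint(env: dict) -> str:
    """Return a short, stable hash of an environment description."""
    canonical = json.dumps(env, sort_keys=True)  # key order must not matter
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def baseline_is_comparable(baseline_env: dict, current_env: dict) -> bool:
    """True only if both runs used the same described environment."""
    return env_fingerprint(baseline_env) == env_fingerprint(current_env)
```

For example, if the baseline was recorded with `{"app_servers": 2, "db": "db-small"}` and the current environment reports `{"app_servers": 3, "db": "db-small"}`, the check returns `False`, signaling that a new baseline is needed.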

5. Don’t confuse load generation with performance measurement

When conducting a load test, you simulate hundreds or thousands of users accessing your system to create stress on the application. However, it’s essential to understand that measuring the response time or performance based solely on these simulated users won’t give you an accurate assessment of the application’s behavior.

To properly gauge the results:

- Load Generation: Use one machine solely to generate the load. This machine will simulate the massive influx of users to put pressure on the system.

- Performance Measurement: You need a second machine acting as a probing client. This client will simulate a single user under normal conditions, allowing you to measure its response times. This measurement can then be compared to predefined performance criteria, offering more precise insights into how well your application performs under load.

Using a separate probing client ensures that you are not simply measuring the performance degradation caused by the load-generating machine itself but are accurately capturing the system’s behavior from a user’s perspective.
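The probing client can be sketched as a small script that runs on the second machine while the load generator is active elsewhere. In this sketch, `request_fn` is a hypothetical callable that performs one complete user request (for example, an HTTP call to your login endpoint); the sample count and the nearest-rank percentile method are assumptions.

```python
import statistics
import time


def probe(request_fn, samples: int = 20, pause_s: float = 0.0) -> list[float]:
    """Issue requests one at a time, like a single user, and time each one.

    Returns the latency of every request in milliseconds.
    """
    latencies = []
    for _ in range(samples):
        start = time.perf_counter()
        request_fn()
        latencies.append((time.perf_counter() - start) * 1000)
        time.sleep(pause_s)
    return latencies


def summarize(latencies_ms: list[float]) -> dict:
    """Return the median and the nearest-rank 95th percentile."""
    ordered = sorted(latencies_ms)
    # Integer form of ceil(0.95 * n) - 1, which avoids float rounding issues.
    p95_index = max(0, (95 * len(ordered) + 99) // 100 - 1)
    return {"median_ms": statistics.median(ordered), "p95_ms": ordered[p95_index]}
```

The summary from the probe, rather than the timings collected by the load generator, is what you would compare against your success criteria.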

6. Test concurrently, but carefully

Running multiple tests in parallel can save time and help you accelerate your release cycles. However, concurrently running tests may affect each other and therefore skew the results. When running concurrent tests, make sure that they have no dependencies on one another: they should use separate machines and load generators, and they should not call the same APIs.
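A quick check before scheduling tests in parallel can catch such overlaps. This is a minimal sketch; the test names, API paths, and generator names in the usage note are hypothetical.

```python
from itertools import combinations


def conflicts(tests: dict) -> list[tuple[str, str]]:
    """Return pairs of tests that should not run at the same time.

    `tests` maps a test name to {"apis": set of API paths, "generator": str}.
    Two tests conflict if they share an API or a load generator.
    """
    found = []
    for (name_a, a), (name_b, b) in combinations(tests.items(), 2):
        if a["apis"] & b["apis"] or a["generator"] == b["generator"]:
            found.append((name_a, name_b))
    return found
```

For example, a `login` test and a `checkout` test that both call `/api/session` would be reported as a conflicting pair, even if they use different load generators.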

Closing thoughts

Treating your performance tests as an afterthought can be detrimental to your DevOps success. It will end up slowing down your release cycle or, even worse, leave you with a sub-par user experience that doesn’t meet market expectations and business requirements.

Integrating load testing into your DevOps process doesn’t have to be difficult if you treat it like any other part of your continuous development process: test often, fail fast, and iterate until you reach the desired results. WebLOAD offers a Continuous Integration Performance Testing Solution. To learn more, contact RadView to book a demo or start your free trial.