It’s 11pm. Your checkout API just collapsed under a flash-sale surge that your load test never predicted. The dashboard is a wall of 503s, the on-call channel is on fire, and the post-mortem will eventually reveal the culprit: a load test that validated a fiction — smooth traffic, single protocol, static tokens — instead of the bursty, multi-protocol, auth-stateful reality your API actually faces.

This guide exists so that post-mortem never happens on your watch.

What follows isn’t a tool-comparison listicle or a glossary of testing types you already know. It’s a protocol-level engineering playbook covering the four dominant API paradigms — REST, GraphQL, gRPC, and WebSocket — with distinct load testing strategies for each. More importantly, it tackles the operational hard problems most guides skip entirely: OAuth token behavior when hundreds of virtual users run concurrently, rate limiting validation under bursty traffic, streaming connection durability, and a root-cause diagnostic framework for when your load test reveals degradation but not where. The article maps across six areas: why conventional load tests produce false confidence, protocol-specific testing strategies with concrete thresholds, auth token management under concurrency, rate limit validation patterns that prevent cascading failures, a bottleneck diagnosis framework, and a CI/CD integration playbook.

- Why Most API Load Tests Lie to You (And What to Do Instead)

- Protocol-by-Protocol: How to Load Test REST, GraphQL, gRPC, and WebSocket the Right Way

- REST API Load Testing: Baselines, Thresholds, and the Scenarios That Matter

- GraphQL Under Load: Query Complexity, the N+1 Problem, and Demand Control Testing

- gRPC Performance Testing: Low-Latency Validation, Protobuf Serialization, and Streaming Channels

- WebSocket and Streaming API Testing: Persistent Connections, Message Frame Rates, and SSE Validation

- Auth Token Management Under Load: The Security Problem Your Load Test Is Probably Ignoring

- Rate Limiting Under Load: Testing Patterns, Adaptive Strategies, and Avoiding Cascading Failures

- Diagnosing API Performance Bottlenecks: A Root-Cause Framework

- Embedding API Performance Testing in CI/CD Pipelines: The Shift-Left Playbook

- Frequently Asked Questions

- References and Authoritative Sources

Why Most API Load Tests Lie to You (And What to Do Instead)

The uncomfortable truth about most API load tests is that they validate an artifact that bears only a passing resemblance to production traffic. Teams configure a linear ramp-up of virtual users against a single REST endpoint, observe a p95 under their SLA, and declare the API production-ready. Then real traffic arrives — bursty, authenticated, multi-protocol, and deeply stateful — and the API folds.

This isn’t a tooling problem. It’s a test design problem. Netflix shows the stakes: their migration to edge computing microservices reportedly delivered a roughly 70% improvement in API performance [1], and a gain like that can only be verified when the testing methodology mirrors actual traffic topology. A senior SRE will tell you the first question isn’t which tool to use; it’s whether your test scenario mirrors your actual traffic shape. If your production traffic is 80% cached reads with 20% write bursts, your test should be too.

When your load test omits protocol diversity, ignores auth state, and uses uniform ramp-ups, it tells you what your API does under conditions that will never exist. The rest of this guide fixes that.

The Uniform Traffic Trap: Why Smooth Ramp-Ups Miss Real-World Failures

A linear ramp to 1,000 virtual users over 10 minutes looks nothing like 800 users hitting your checkout API simultaneously at 9:01am when a flash sale goes live. The peak instantaneous requests-per-second in the flash-sale scenario can be 6–8x higher than the ramp-up equivalent, because real traffic correlates — users arrive in waves triggered by external events, not in the orderly queue your load profile assumes.

The standard load test taxonomy (baseline, stress, soak, peak, and spike) exists precisely to address this, yet most implementations default to baseline or stress profiles and never exercise the spike scenario. For a deeper look at each type, see this overview of the different types of performance testing. Uniform ramp-up patterns miss the thundering-herd effect, where 2,000 concurrent users all request a fresh OAuth token within the same 500ms window, spiking your auth server to 400% CPU before your API server even sees application load.

Traffic shape is a first-class test design concern. If your production analytics show that 60% of daily traffic arrives in two 45-minute windows, your load profile needs to replicate those two peaks with realistic inter-arrival distributions — not a smooth sine wave.
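As a rough illustration of turning that observation into a load profile, the sketch below spreads a daily request budget across two peak windows and an even off-peak floor. The function name, the window start times (09:00 and 20:00), and the daily volume are all assumptions made for the example, not values from any analytics system.

```python
def two_peak_profile(total_requests, peak_share=0.60, peak_minutes=45,
                     day_minutes=1440, peak_starts=(9 * 60, 20 * 60)):
    """Return a requests-per-minute target for every minute of one day.

    peak_share of the traffic lands in the peak windows (peak_minutes each);
    the remainder is spread evenly over all other minutes.
    """
    peak_total_minutes = peak_minutes * len(peak_starts)
    peak_rate = total_requests * peak_share / peak_total_minutes
    off_rate = total_requests * (1 - peak_share) / (day_minutes - peak_total_minutes)

    in_peak = set()
    for start in peak_starts:
        in_peak.update(range(start, start + peak_minutes))

    return [peak_rate if minute in in_peak else off_rate
            for minute in range(day_minutes)]


# Hypothetical daily volume of one million requests.
profile = two_peak_profile(1_000_000)
peak_to_offpeak = max(profile) / min(profile)
```

With these assumed inputs the peak minutes run at more than twenty times the off-peak rate, which a single smooth ramp cannot reproduce. Within each minute, arrivals could additionally be drawn from an exponential inter-arrival distribution (for example with `random.expovariate`) rather than spaced evenly.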

Protocol Blind Spots: When Your REST Test Tells You Nothing About Your gRPC Service

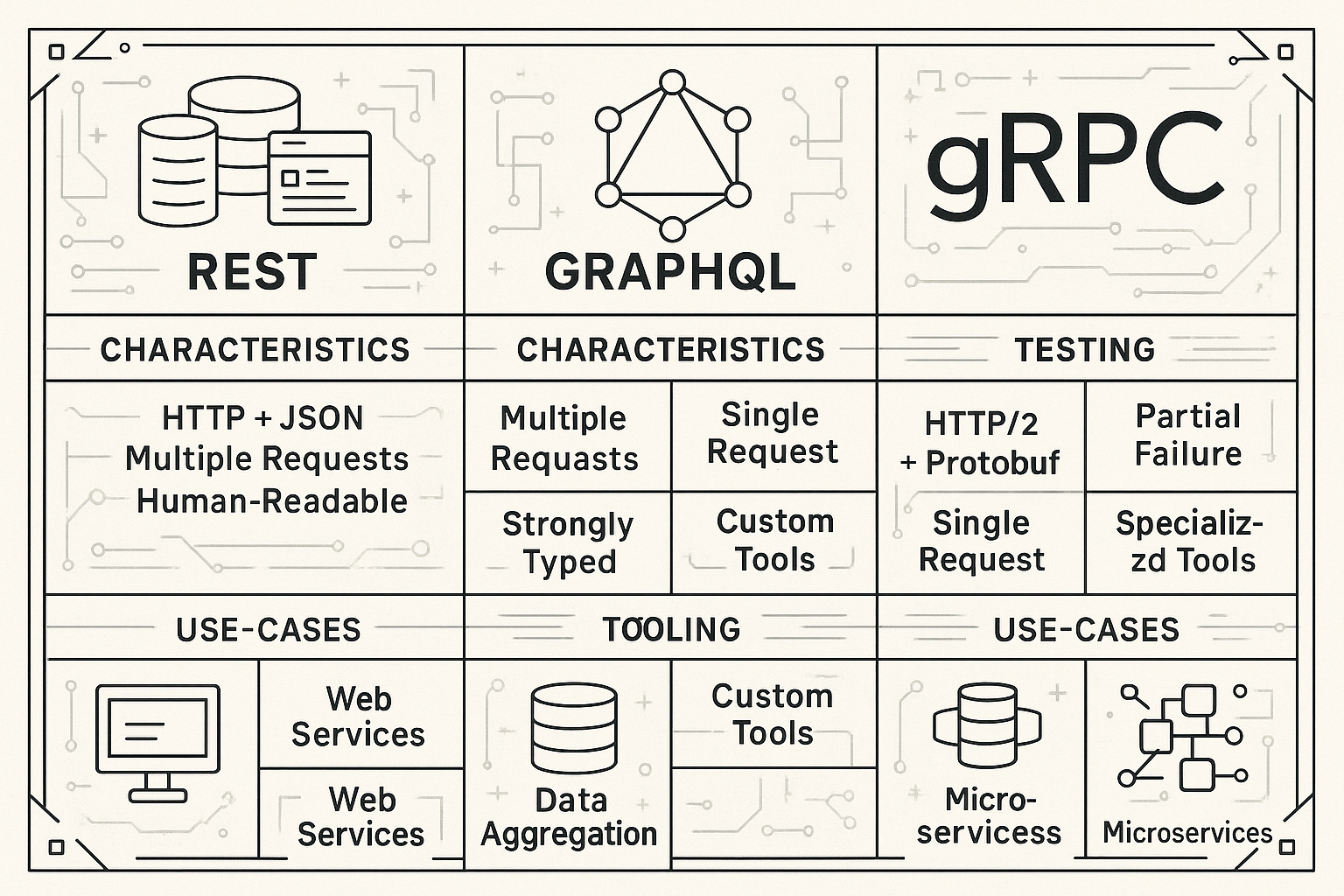

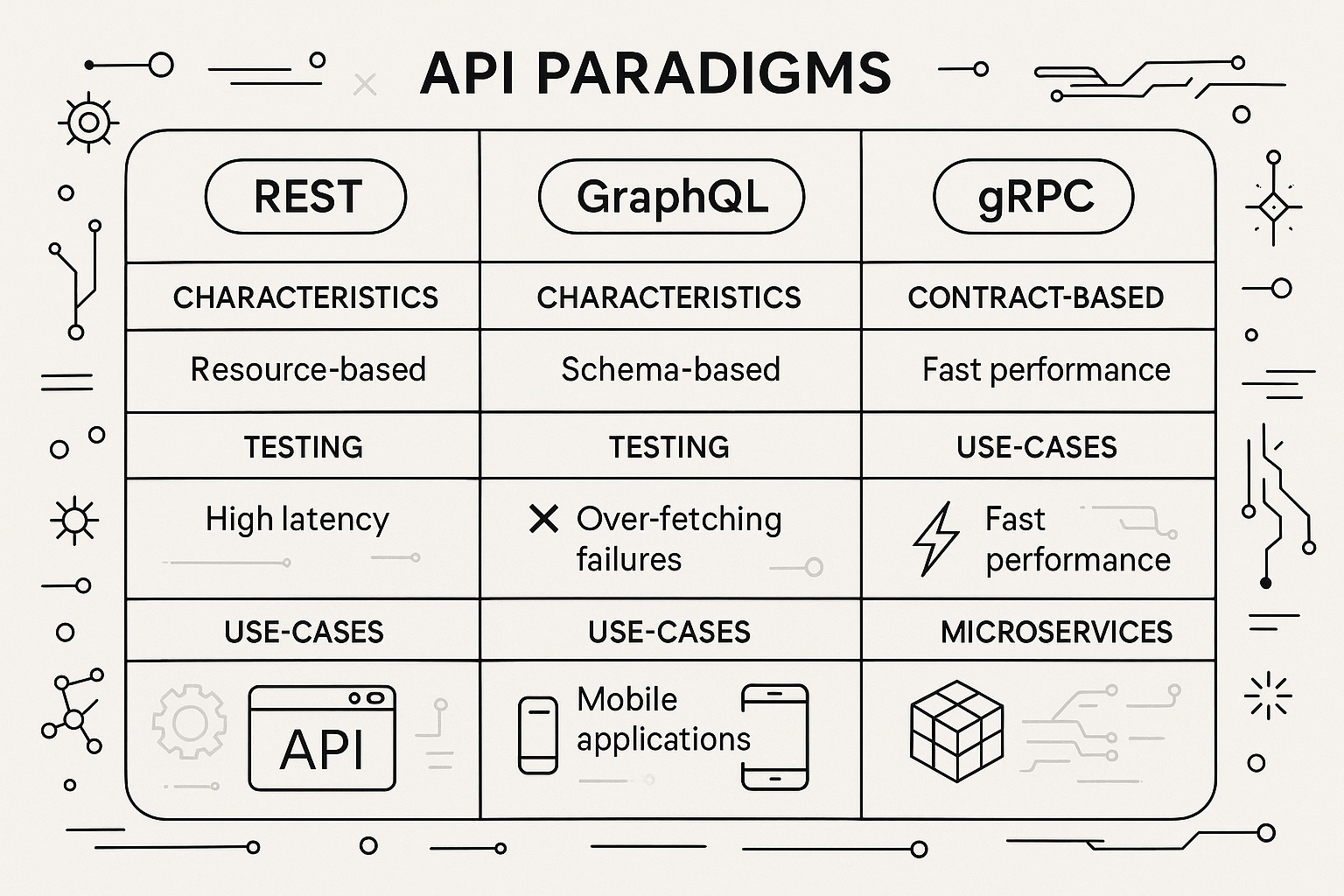

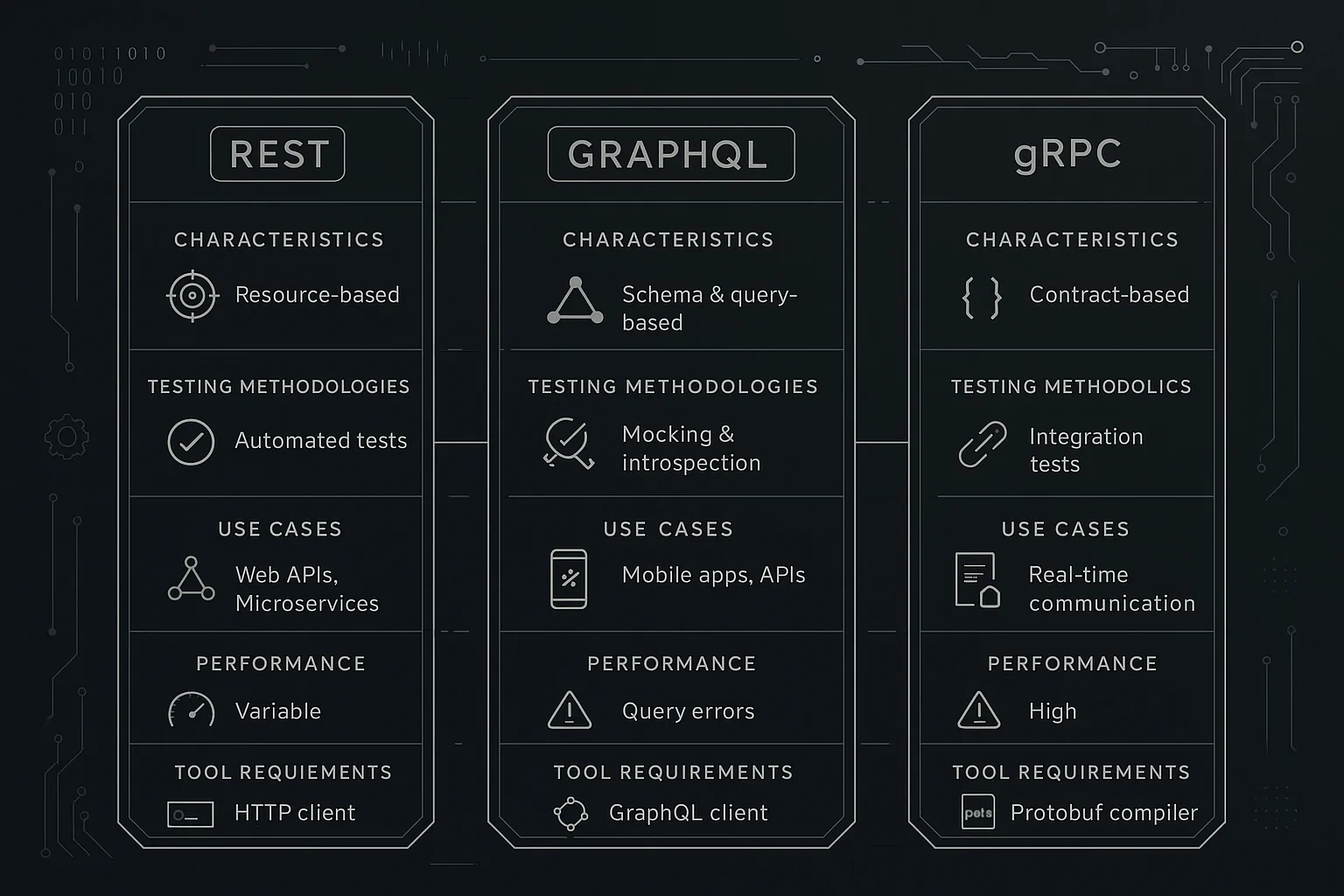

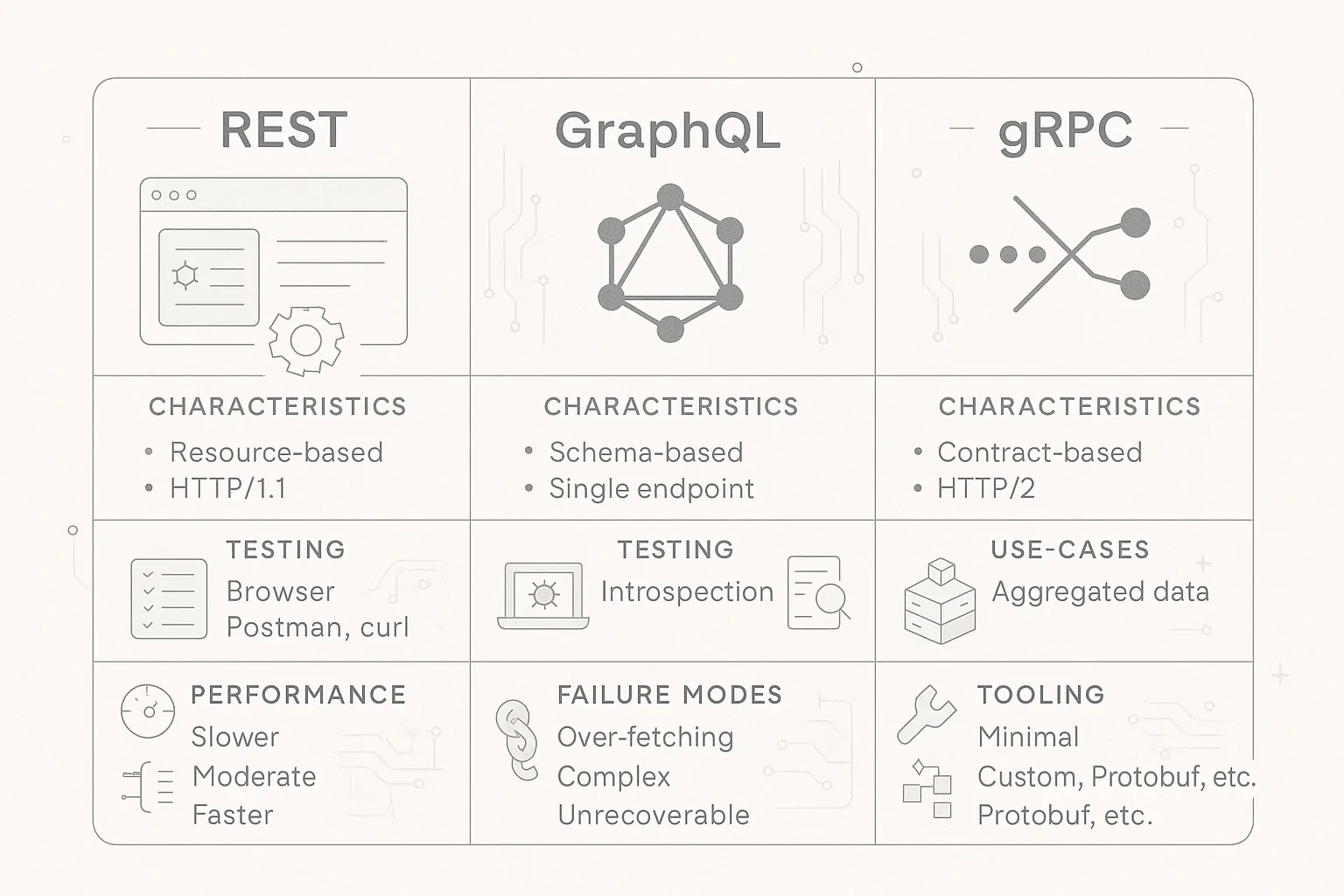

Most load testing guidance treats the practice as protocol-agnostic. That assumption is dangerously wrong. REST, GraphQL, and gRPC have fundamentally different performance characteristics, failure modes, and measurement requirements.

A GraphQL endpoint that responds in 45ms for a simple query can balloon to 2,800ms under load when a client sends a deeply nested query that triggers the N+1 problem across 12 database calls — a failure mode that a REST-centric load test designed for flat resource endpoints would never surface. The GraphQL Foundation’s own documentation identifies this directly: “Without additional consideration, a naive GraphQL service could be very ‘chatty’ or repeatedly load data from your databases” [2].

Meanwhile, gRPC’s architecture diverges from REST at the transport level. As the Official gRPC Technical Guides (CNCF) documentation explains: “gRPC largely follows HTTP semantics over HTTP/2 but we explicitly allow for full-duplex streaming. We diverge from typical REST conventions as we use static paths for performance reasons during call dispatch as parsing call parameters from paths, query parameters and payload body adds latency and complexity” [3]. If your load testing tool can’t natively serialize Protobuf and manage HTTP/2 multiplexed streams, you’re benchmarking HTTP overhead, not gRPC performance.

Protocol-by-Protocol: How to Load Test REST, GraphQL, gRPC, and WebSocket the Right Way

This is the core of the playbook. Each protocol demands a different test design philosophy, different tooling requirements, and different benchmark expectations. Choosing a protocol isn’t just an architecture decision; it’s a testability decision.

REST API Load Testing: Baselines, Thresholds, and the Scenarios That Matter

REST remains the most common API paradigm, and paradoxically, the one teams most often misjudge as “covered.” The reality is that REST load testing done well requires specific threshold definitions, scenario selection aligned to engineering questions, and tooling that handles session correlation dynamically.

For a REST API serving a consumer-facing application, a practical starting threshold is p95 < 300ms and p99 < 800ms for read endpoints (GET), with error rates below 0.1% at target concurrency. Write endpoints (POST/PUT) may tolerate p95 up to 500ms depending on backend complexity. These aren’t arbitrary numbers — they reflect the point at which user-perceived latency begins to degrade conversion and engagement metrics measurably. For a comprehensive look at which numbers to track and why, see this guide to the performance metrics that matter in performance engineering.

When interpreting results, HTTP status codes are diagnostic signals with specific meanings under load. A 429 (Too Many Requests) indicates your rate limiter is functioning; a 503 (Service Unavailable) indicates resource exhaustion. Conflating them in your error rate metric — as many dashboards do by default — masks the root cause entirely. RFC 9110: HTTP Semantics (IETF Standard) defines the precise semantics for each status code, and your test assertions should distinguish between them.
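A minimal sketch of keeping those signals apart when aggregating results is shown below. The bucket names and the `summarize` helper are illustrative assumptions; the only point is that 429 and 503 never share a counter.

```python
from collections import Counter


def classify_status(status):
    """Map an HTTP status code to a diagnostic bucket."""
    if 200 <= status < 400:
        return "ok"
    if status == 429:
        return "rate_limited"      # the limiter is doing its job
    if status == 503:
        return "unavailable"       # resource exhaustion
    if status in (401, 403):
        return "auth"
    if 400 <= status < 500:
        return "client_error"
    if status >= 500:
        return "server_error"
    return "other"


def summarize(statuses):
    """Return the share of responses per bucket (empty input gives {})."""
    total = len(statuses)
    if total == 0:
        return {}
    counts = Counter(classify_status(s) for s in statuses)
    return {bucket: n / total for bucket, n in counts.items()}
```

Reporting `rate_limited` and `unavailable` as separate series makes it possible to assert on each one independently instead of on a single blended error rate.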

Choosing the Right Load Profile: When to Use Soak vs. Spike vs. Stress Testing

Selecting a load profile isn’t about running “all the tests.” It’s about matching each scenario type to the specific engineering question you need answered:

- Spike test → Will our API survive a 10x traffic surge lasting 60 seconds? Configure 100 VUs ramping to 1,000 VUs in under 5 seconds, sustained for 60 seconds, then immediate drop.

- Stress test → What is our actual maximum RPS before error rate exceeds 1%? Incrementally increase VUs in 5-minute steps until the error threshold is breached.

- Soak test → Does memory usage grow unbounded over a 4-hour sustained load? Maintain steady-state concurrency at 70% of peak capacity for 4+ hours, monitoring heap size and GC frequency.

- Baseline test → What does “normal” look like for this API? Run at average expected concurrency for 30 minutes and record p50/p95/p99 latency, throughput, and error rates as your regression benchmark.

The scenario you choose determines the failure mode you’ll discover. Running only baseline tests is like checking your car’s oil level and declaring it highway-ready without ever testing the brakes.
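The profiles above can be written down as staged VU schedules. The sketch below is tool-agnostic: `Stage`, `vus_at`, and `stress_steps` are hypothetical names, and the soak figure assumes a peak capacity of 1,000 VUs purely for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    duration_s: int   # how long the stage lasts
    target_vus: int   # VU count reached at the end of the stage


def vus_at(stages, t, start_vus=0):
    """Linearly interpolate the VU target at elapsed second t."""
    current = start_vus
    elapsed = 0
    for stage in stages:
        if t <= elapsed + stage.duration_s:
            fraction = (t - elapsed) / stage.duration_s
            return round(current + (stage.target_vus - current) * fraction)
        elapsed += stage.duration_s
        current = stage.target_vus
    return current


def stress_steps(step_vus, steps, step_s=300):
    """Staircase profile: step up quickly, then hold for the rest of each step."""
    stages = []
    for i in range(1, steps + 1):
        stages.append(Stage(1, i * step_vus))
        stages.append(Stage(step_s - 1, i * step_vus))
    return stages


# Spike: 100 -> 1,000 VUs in 5 s, hold 60 s, then drop back within 1 s.
SPIKE = [Stage(5, 1000), Stage(60, 1000), Stage(1, 100)]
# Soak: 70% of an assumed 1,000-VU peak, held for 4 hours after a 5-minute ramp.
SOAK = [Stage(300, 700), Stage(4 * 3600, 700)]
```

Calling `vus_at(SPIKE, t, start_vus=100)` once per second gives the target a load generator would need to track. Most tools accept an equivalent stage list in their own configuration format.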

Configuring WebLOAD for Realistic REST Load: VU Modeling and Correlation

Effective REST load testing requires virtual user models that behave like actual users, not request cannons. In WebLOAD, set your think-time distribution to a Gaussian model with a mean of 1.5 seconds and standard deviation of 0.5 seconds rather than a fixed delay — this replicates the variance in real user behavior between page actions. For more guidance on building test scenarios that mirror real-world usage, see this guide on creating realistic load testing scenarios.
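To make the think-time model concrete outside any particular tool, here is a small sketch of Gaussian think-time sampling. The 0.1-second floor is an assumption added so that a rare negative draw never becomes a negative sleep; it is not a WebLOAD setting.

```python
import random


def think_time(rng, mean_s=1.5, stddev_s=0.5, floor_s=0.1):
    """Draw one think time in seconds from a Gaussian, clamped to a floor."""
    return max(floor_s, rng.gauss(mean_s, stddev_s))


rng = random.Random(42)  # seeded so a test run is reproducible
samples = [think_time(rng) for _ in range(10_000)]
mean_observed = sum(samples) / len(samples)
```

Seeding the generator per virtual user keeps runs comparable across builds while still giving each user a different pause sequence.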

For session correlation, dynamic values like CSRF tokens, session IDs, and pagination cursors must be extracted from responses and injected into subsequent requests. WebLOAD’s AI-accelerated correlation engine auto-detects these dynamic parameters and parameterizes them across the virtual user session, eliminating the most time-consuming part of script maintenance. When an API response structure changes — a field name update, a new header — the self-healing scripting capability adapts without requiring a full script rewrite, which matters significantly in CI/CD environments where API contracts evolve weekly.

GraphQL Under Load: Query Complexity, the N+1 Problem, and Demand Control Testing

GraphQL’s single-endpoint architecture means your load test can’t rely on per-URL metrics. The same /graphql endpoint can serve a 2ms introspection query or a 3-second deeply nested query — and your load test must exercise both.

The GraphQL Foundation’s official performance documentation identifies the core risk: “Depending on how a GraphQL schema has been designed, it may be possible for clients to request highly complex operations that place excessive load on the underlying data sources during execution… Certain demand control mechanisms can help guard a GraphQL API against these operations, such as paginating list fields, limiting operation depth and breadth, and query complexity analysis” [2].

Here’s what that looks like in practice: a query fetching 100 users and their associated orders, without DataLoader batching, generates 101 database calls per request. At 50 concurrent virtual users, that’s 5,050 simultaneous DB queries — enough to saturate a typical connection pool at 100 connections within milliseconds, producing a cascade of 503s that looks like an API failure but is actually a data layer failure.
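The arithmetic can be reproduced with an in-memory stand-in for the database. `CountingDB` and the two resolver functions below are hypothetical and only count calls; they are not the DataLoader library, just the batching idea it implements.

```python
class CountingDB:
    """Fake data source that counts how many calls it receives."""

    def __init__(self, orders_by_user):
        self.orders_by_user = orders_by_user
        self.calls = 0

    def fetch_user_ids(self, limit):
        self.calls += 1
        return sorted(self.orders_by_user)[:limit]

    def fetch_orders(self, user_id):
        self.calls += 1
        return self.orders_by_user[user_id]

    def fetch_orders_batch(self, user_ids):
        self.calls += 1
        return {uid: self.orders_by_user[uid] for uid in user_ids}


def resolve_naive(db, limit=100):
    # One call for the user list, then one call per user: the N+1 pattern.
    return {uid: db.fetch_orders(uid) for uid in db.fetch_user_ids(limit)}


def resolve_batched(db, limit=100):
    # One call for the user list, one batched call for all orders.
    return db.fetch_orders_batch(db.fetch_user_ids(limit))


data = {uid: [f"order-{uid}"] for uid in range(100)}
naive_db, batched_db = CountingDB(data), CountingDB(data)
naive_result = resolve_naive(naive_db)
batched_result = resolve_batched(batched_db)
queries_at_50_vus = 50 * naive_db.calls
```

Both resolvers return the same data, but the naive one issues 101 calls per request against 2 for the batched one, which is the difference a load test at moderate concurrency should expose.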

Your GraphQL load test suite should include: (1) a simple, shallow query at high concurrency to measure baseline throughput; (2) a deeply nested query at moderate concurrency to validate demand control limits; (3) a mutation-heavy workload to test write-path performance; and (4) a mixed workload reflecting actual client query distribution from production logs. The Official GraphQL Specification (GraphQL Foundation) provides the normative reference for query execution semantics.

gRPC Performance Testing: Low-Latency Validation, Protobuf Serialization, and Streaming Channels

gRPC operates in a fundamentally different performance regime. Protobuf binary encoding typically reduces payload size by 60–80% compared to equivalent JSON payloads, and HTTP/2 multiplexing removes HTTP-level head-of-line blocking across concurrent streams (TCP-level head-of-line blocking under packet loss remains). A well-configured gRPC service should achieve p99 latency under 50ms for simple unary RPC calls on well-provisioned infrastructure, compared to p99 of 100–150ms for equivalent REST/JSON endpoints.

The gRPC project documentation notes that the protocol is purpose-built for “low latency, highly scalable, distributed systems” with production deployment at Google, Square, Netflix, CoreOS, Docker, and CockroachDB [3]. This isn’t a niche protocol — it’s the backbone of inter-service communication in many of the world’s largest distributed systems.

Load testing gRPC requires three capabilities your tool must support natively: (1) Protobuf message serialization and deserialization — sending JSON to a gRPC endpoint and measuring the response time is measuring transcoding overhead, not gRPC performance; (2) HTTP/2 stream-level concurrency modeling — gRPC multiplexes multiple RPCs over a single TCP connection, so your VU model must track concurrent streams per connection, not just concurrent connections; (3) bidirectional streaming validation — for streaming RPCs, you need to measure message delivery latency within an open stream, not just connection establishment time.

RadView’s platform provides native Protobuf support and HTTP/2 stream management, enabling gRPC load tests that measure actual protocol-level performance rather than protocol-translation artifacts.

WebSocket and Streaming API Testing: Persistent Connections, Message Frame Rates, and SSE Validation

WebSocket and Server-Sent Events (SSE) testing is a fundamentally different discipline from request/response load testing. Ten thousand simultaneous WebSocket connections, each sending 1 message per second, generate 10,000 msg/s of sustained throughput over long-lived connections: a load profile where connection teardown and re-establishment overhead is zero, but memory consumption per connection is constant and cumulative. For a deeper dive into WebSocket testing tools and techniques, see Essential Tools for Testing WebSocket Applications.

Per RFC 6455 (The WebSocket Protocol), the HTTP upgrade handshake happens once per connection, so its overhead is paid only once per connection lifecycle. Your load test must separately measure upgrade handshake time (typically 50–150ms) and sustained message delivery latency (target: under 50ms per frame for real-time applications).

For SSE validation, test reconnection behavior explicitly: after a 30-second server-side stream interruption, the client should reconnect within 5 seconds with the correct Last-Event-ID header and resume from the correct position in the event stream. WebSocket and SSE performance testing methodology is rarely covered in load testing guides, yet engineering teams run into this gap daily when building real-time features.
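To assert on the reconnection header, a test client has to track the last event ID the way a browser would. The sketch below is a simplified line-level parser written for illustration; `SSEClientState` is a hypothetical name, and it only handles the `id` and `data` fields plus comment lines.

```python
class SSEClientState:
    """Tracks the last event ID across a stream of already-split SSE lines."""

    def __init__(self):
        self.last_event_id = ""
        self.events = []          # (event_id, data) pairs in arrival order
        self._id_buffer = ""
        self._data = []

    def feed_line(self, line):
        if line == "":            # blank line dispatches the pending event
            self.last_event_id = self._id_buffer
            if self._data:
                self.events.append((self.last_event_id, "\n".join(self._data)))
            self._data = []
            return
        if line.startswith(":"):  # comment, often used as a keepalive
            return
        field, _, value = line.partition(":")
        if value.startswith(" "):
            value = value[1:]
        if field == "data":
            self._data.append(value)
        elif field == "id" and "\0" not in value:
            self._id_buffer = value

    def reconnect_headers(self):
        """Headers the client should send when it reconnects."""
        if self.last_event_id:
            return {"Last-Event-ID": self.last_event_id}
        return {}
```

After the simulated interruption, the test can compare `reconnect_headers()` with what the client actually sent and check that the first event received after reconnecting follows the recorded ID.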

Auth Token Management Under Load: The Security Problem Your Load Test Is Probably Ignoring

OAuth 2.0 token handling is the single most common source of false failures in API load tests, yet it receives almost zero coverage in performance testing literature. RFC 6749 (the OAuth 2.0 Authorization Framework) specifies: “Refresh tokens are credentials used to obtain access tokens… Refresh tokens MUST be kept confidential in transit and storage, and shared only among the authorization server and the client to whom the refresh tokens were issued” [4]. That MUST-level security requirement doesn’t pause during load tests.

When 500 virtual users all start a 30-minute soak test with tokens issued at test start that expire after 1 hour, and your test has a 45-minute ramp-down phase, the run lasts 75 minutes and every VU still active at the 60-minute mark hits token expiration mid-test. With a linear ramp-down, that is roughly a third of the VUs. Without proactive refresh logic, those VUs begin returning 401s, which your results dashboard reports as API failures, inflating your error rate metric and masking real performance data.

The Token Expiration Cascade: Why Your 401 Errors Aren’t What You Think

A token expiration cascade has a distinctive diagnostic signature: a sudden step-change in 401 error rate at a specific elapsed time — not a gradual increase correlated with VU ramp-up. If your 401 spike happens at T+55 minutes and your tokens have a 1-hour TTL, that’s your signal. A genuine API failure under load would show error rates growing proportionally with concurrent users.

In WebLOAD’s analytics dashboard, you can isolate HTTP 401 responses by elapsed time and overlay them against VU count. If the 401 curve is flat during ramp-up and then steps up vertically at a fixed time offset, you’re looking at token expiration, not API degradation. This distinction matters: one requires a script fix, the other requires an infrastructure investigation.
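The same check can be scripted against exported per-minute 401 counts. The thresholds below (a five-minute lookback, a 5x jump, at least 10 errors, a 10-minute tolerance before the TTL) are illustrative assumptions and would need tuning against real runs.

```python
def find_401_step(counts_per_minute, window=5, factor=5.0, min_count=10):
    """Return the first minute index where 401s jump sharply, else None.

    A step is flagged when a minute's count is at least min_count and at
    least `factor` times the mean of the preceding `window` minutes.
    """
    for i in range(window, len(counts_per_minute)):
        before = counts_per_minute[i - window:i]
        baseline = sum(before) / window
        current = counts_per_minute[i]
        if current >= min_count and current >= factor * max(baseline, 1.0):
            return i
    return None


def looks_like_token_expiry(step_minute, token_ttl_minutes, tolerance_minutes=10):
    """True when the step lands at, or shortly before, the token TTL."""
    if step_minute is None:
        return False
    return 0 <= token_ttl_minutes - step_minute <= tolerance_minutes
```

A flat series followed by a jump at minute 55 is flagged, while a 401 count that creeps up by one per minute is not, which matches the step-versus-slope distinction described above.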

Proactive Refresh Patterns and the Mutex Lock: Preventing Token Refresh Storms

Set your proactive refresh trigger at 80% of the token’s TTL. For a 3,600-second (1-hour) access token, trigger refresh at T+2,880 seconds, leaving a 720-second safety window. RFC 6749 Section 1.5 describes the refresh token flow but leaves the timing to the client; refreshing before expiry, rather than after a 401, is the design choice that keeps expired-token errors out of your results [4].

For high-concurrency tests with 1,000+ VUs, implement a token pool of 10–20 pre-refreshed tokens and distribute them across VU groups. The critical implementation detail: use a mutex lock around the refresh call so that when the first VU triggers a refresh, subsequent VUs wait for the new token rather than each independently hitting the authorization server. Without this lock, 1,000 VUs simultaneously requesting fresh tokens creates a thundering-herd condition on your auth server — the exact production failure you’re trying to prevent. For strategies on managing high virtual user counts effectively, see this guide on how to load test concurrent users.
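A minimal sketch of the lock-guarded refresh follows, assuming one shared token per VU group. `SharedToken` and the `fetch_token` callable are hypothetical; `fetch_token` stands in for whatever call your script makes to the authorization server.

```python
import threading
import time


class SharedToken:
    """One token shared by many VUs, refreshed by a single caller at a time."""

    def __init__(self, fetch_token, ttl_s=3600, refresh_fraction=0.8,
                 clock=time.monotonic):
        self._fetch_token = fetch_token              # hypothetical auth call
        self._refresh_after_s = ttl_s * refresh_fraction
        self._clock = clock
        self._lock = threading.Lock()
        self._state = None                           # (token, issued_at) or None

    def _fresh_token(self):
        state = self._state
        if state is None:
            return None
        token, issued_at = state
        if self._clock() - issued_at >= self._refresh_after_s:
            return None                              # past 80% of TTL
        return token

    def get(self):
        token = self._fresh_token()
        if token is not None:                        # fast path, no lock taken
            return token
        with self._lock:
            token = self._fresh_token()              # another VU may have refreshed
            if token is None:
                token = self._fetch_token()
                self._state = (token, self._clock())
            return token
```

The second freshness check inside the lock is what prevents the storm: VUs that were waiting on the lock find the new token and return without calling the authorization server. A pool of 10 to 20 tokens would simply be a list of `SharedToken` instances indexed by VU group.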

WebLOAD’s JavaScript scripting engine supports this pattern natively through shared variables and synchronization primitives, enabling token pool management that preserves the client-token binding integrity RFC 6749 requires.

Secure Token Storage in Test Scripts: What Not to Do (And the Right Approach)

Hardcoding a bearer token directly in your test script (Authorization: Bearer eyJhbGciOiJSUzI1NiJ9...) and committing it to your Git repository exposes a valid credential to anyone with repo access. If that token has a 24-hour TTL and your repo is even semi-public, you have a real security incident window. RFC 6749 Section 10.4 is explicit: “Refresh tokens MUST be kept confidential in transit and storage” [4], and Section 10.3 places the same requirement on access token credentials. NIST SP 800-204: Security Strategies for Microservices and API Systems extends this principle to the broader API infrastructure context.

The correct pattern: inject tokens at runtime via environment variables, sourced from your secrets manager (HashiCorp Vault, AWS Secrets Manager, or equivalent). Your test script should reference ${AUTH_TOKEN} as a variable, resolved at execution time, never stored in the script file itself. In CI/CD pipelines, configure your pipeline’s secrets management to inject the token into the test runner’s environment at execution time and revoke it after the run completes.
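In a script, the runtime lookup can be as small as the sketch below. The `auth_header` helper is an illustrative assumption; the variable name `AUTH_TOKEN` mirrors the one used above, and the failure is deliberately loud so a missing secret stops the run instead of producing a wall of 401s.

```python
import os


def auth_header(env=os.environ, var_name="AUTH_TOKEN"):
    """Build the Authorization header from a token injected at runtime."""
    token = env.get(var_name, "").strip()
    if not token:
        raise RuntimeError(
            f"{var_name} is not set; inject it from your secrets manager"
        )
    return {"Authorization": f"Bearer {token}"}
```

Because the token never appears in the script file, the script can be committed safely and the same file works unchanged across local, staging, and pipeline runs.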

Rate Limiting Under Load: Testing Patterns, Adaptive Strategies, and Avoiding Cascading Failures

Rate limiting is the most misunderstood component in API load testing. Teams set a rate limit configuration value, add it to a wiki page, and never validate that it actually behaves correctly under bursty traffic. Rate limiting tests are counterintuitive: you’re deliberately trying to trigger 429s, not avoid them. The question isn’t whether your API returns 429 once the limit is exceeded; it’s whether clients handle 429 gracefully and implement backoff correctly, and whether cascading 429s from upstream services trigger downstream failures in your microservices mesh. Embedding these validations into your delivery pipeline is essential; learn more about integrating performance testing in CI/CD pipelines.

Zoom’s developer community documented this challenge extensively: rate limiting under data-intensive workloads creates consistency issues when retry logic and rate limit windows interact unpredictably [5].

Four Rate Limiting Algorithms: Which One Are You Actually Testing?

Your rate limit test design depends on which algorithm your API gateway implements. Each has distinct boundary conditions:

| Algorithm | Burst Behavior | Boundary Risk | Key Test Case |
|---|---|---|---|
| Fixed Window | Allows 2x burst at window boundary | Two N-request bursts straddling the minute mark both succeed | Send N requests at T-1s and N requests at T+1s |
| Sliding Window | Smoother; averages across window | Higher computational overhead under high RPS | Sustained high-RPS for 5 minutes to validate window slides correctly |
| Token Bucket | Allows burst up to bucket capacity | Refill rate determines sustained throughput | Send N+1 simultaneous requests where N = bucket size; request N+1 must receive 429 |
| Leaky Bucket | Enforces constant output rate | Queued requests can timeout under sustained burst | Send 3x capacity burst and measure queue drain time and timeout rate |

A fixed-window rate limiter set to 1,000 requests/minute will allow a burst of 2,000 requests if they straddle a window boundary: 1,000 at 11:59:59 and 1,000 at 12:00:00. A token bucket limiter with a 1,000 token capacity and 16.7 token/second refill rate, drained by the first burst, will reject nearly all of the second: only the tokens refilled during the intervening second are available. Your load test must exercise the boundary condition to validate which behavior your implementation actually delivers.
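The boundary behavior is easy to check in a small simulation before pointing real traffic at a gateway. The two limiter classes below are simplified textbook versions written for illustration, not the implementation of any particular gateway; timestamps are seconds, with 59.0 and 60.0 standing in for 11:59:59 and 12:00:00.

```python
class FixedWindowLimiter:
    def __init__(self, limit, window_s):
        self.limit, self.window_s = limit, window_s
        self.window_index = None
        self.count = 0

    def allow(self, now_s):
        index = int(now_s // self.window_s)
        if index != self.window_index:       # new window: reset the counter
            self.window_index, self.count = index, 0
        if self.count < self.limit:
            self.count += 1
            return True
        return False


class TokenBucketLimiter:
    def __init__(self, capacity, refill_per_s):
        self.capacity, self.refill_per_s = capacity, refill_per_s
        self.tokens = float(capacity)        # bucket starts full
        self.last_s = None

    def allow(self, now_s):
        if self.last_s is not None:
            elapsed = now_s - self.last_s
            self.tokens = min(self.capacity,
                              self.tokens + elapsed * self.refill_per_s)
        self.last_s = now_s
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


def accepted(limiter, bursts):
    """Count accepted requests for each (timestamp, request_count) burst."""
    return [sum(limiter.allow(t) for _ in range(n)) for t, n in bursts]


bursts = [(59.0, 1000), (60.0, 1000)]
fixed_result = accepted(FixedWindowLimiter(1000, 60), bursts)
bucket_result = accepted(TokenBucketLimiter(1000, 1000 / 60), bursts)
```

In this simulation the fixed window accepts both bursts in full, while the token bucket accepts the first burst and only the 16 requests covered by one second of refill from the second.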

Validating Rate Limit Behavior in Your Load Test Suite

Rate limit validation requires specific assertions that go beyond simple pass/fail:

- HTTP 429 response rate equals the expected reject percentage at the configured limit ± 2%

- Retry-After header is present in all 429 responses with a valid value

- After the Retry-After window expires, retry requests succeed with HTTP 200

- X-RateLimit-Remaining header accurately reflects remaining quota

- No 503 errors appear during rate-limited conditions (a 503 during rate limiting indicates the limiter itself is failing, not that traffic exceeded limits)

A 429 response WITHOUT a Retry-After header is a bug, not a feature — and your load test should catch it. Include rate limit validation as a mandatory CI/CD quality gate: any API gateway configuration change that modifies rate limit parameters should trigger the rate limit test suite automatically. This prevents the silent regression that occurs when a configuration change alters rate limit behavior without anyone re-validating the boundary conditions.
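Several of these assertions can run as a post-processing step over recorded responses. The sketch below assumes each response was captured as a dict with `status` and `headers` keys, which is a made-up shape for the example; it also accepts only the delay-seconds form of Retry-After, although the header may legitimately carry an HTTP date instead.

```python
def check_rate_limit_run(responses, expected_reject_ratio, tolerance=0.02):
    """Return a list of failure messages; an empty list means the run passed."""
    if not responses:
        return ["no responses recorded"]

    failures = []
    rejected = [r for r in responses if r["status"] == 429]
    ratio = len(rejected) / len(responses)
    if abs(ratio - expected_reject_ratio) > tolerance:
        failures.append(
            f"429 ratio {ratio:.3f} outside {expected_reject_ratio:.3f} +/- {tolerance}"
        )

    for r in rejected:
        # Header names are case-insensitive, so normalise before lookup.
        headers = {k.lower(): v for k, v in r["headers"].items()}
        if not headers.get("retry-after", "").isdigit():
            failures.append("429 without a valid Retry-After header")
            break

    if any(r["status"] == 503 for r in responses):
        failures.append("503 seen during rate limiting")
    return failures
```

Returning messages rather than raising on the first problem lets a pipeline report every broken assertion from a single run.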

Diagnosing API Performance Bottlenecks: A Root-Cause Framework

When a load test reveals degradation, the question isn’t “is it slow?” — it’s “where is the constraint, and what’s the first remediation action?” Most teams resort to guesswork. This framework eliminates it. For a structured methodology on identifying and resolving performance constraints, see Test & Identify Bottlenecks in Performance Testing.

The Six Bottleneck Categories: How to Read Your Load Test Results Like a Performance Engineer

Each of the six bottleneck categories has its own metric signature and diagnostic starting point:

- Network layer: If TTFB is high (>200ms) but server processing time is low (<50ms), and DNS resolution accounts for >15% of total response time at high concurrency, the bottleneck is infrastructure — not application code. First action: check DNS caching, CDN routing, and geographic latency between load generators and target servers.

- Connection pool exhaustion: If p99 latency exceeds 2,000ms AND error rate stays below 0.5% AND CPU is under 40%, suspect connection pool starvation. Requests are queuing for available connections, not failing. First action: check connection pool queue depth and increase pool size.

- Backend logic inefficiency: If p95 latency increases linearly with VU count AND database CPU spikes proportionally, suspect N+1 queries or synchronous blocking calls. First action: profile database query counts per API call at 1 VU vs. 100 VUs.

- Authentication overhead: If latency spikes correlate with token refresh intervals (visible as periodic throughput dips every 60–120 seconds), the auth server is the constraint. First action: test with pre-authenticated tokens to isolate auth overhead from application logic.

- Payload/serialization cost: If throughput scales inversely with response body size AND network bandwidth utilization exceeds 80%, serialization and transfer cost dominate. First action: compare response body sizes across endpoints and evaluate compression or a binary encoding; Protobuf’s typical 60–80% payload reduction over JSON directly reduces this bottleneck.

- Infrastructure ceiling: If CPU exceeds 85% AND all latency percentiles degrade uniformly, you’ve hit hardware limits. First action: validate whether horizontal scaling (more instances) or vertical scaling (bigger instances) is the correct investment.
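The threshold-based signatures in this list can be encoded as a first-pass triage function. The sketch below covers only the four categories that reduce to single-run thresholds; the backend-logic and authentication signatures depend on correlations across runs and are left out. `RunMetrics` and its field names are assumptions for the example.

```python
from dataclasses import dataclass


@dataclass
class RunMetrics:
    ttfb_ms: float
    server_ms: float
    dns_share: float           # DNS time / total response time, 0..1
    p99_ms: float
    error_rate: float          # 0..1
    cpu: float                 # 0..1
    bandwidth_util: float      # 0..1
    uniform_degradation: bool  # all latency percentiles degraded together


def suspect_bottlenecks(m):
    """Return the bottleneck categories whose signature matches the run."""
    suspects = []
    if m.ttfb_ms > 200 and m.server_ms < 50 and m.dns_share > 0.15:
        suspects.append("network")
    if m.p99_ms > 2000 and m.error_rate < 0.005 and m.cpu < 0.40:
        suspects.append("connection_pool")
    # Simplification: checks bandwidth only, not the body-size correlation.
    if m.bandwidth_util > 0.80:
        suspects.append("payload")
    if m.cpu > 0.85 and m.uniform_degradation:
        suspects.append("infrastructure")
    return suspects
```

The output is a list of places to look first, not a verdict; more than one category can match, and an empty list means none of the simple signatures applies.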

From Diagnosis to Fix: Remediation Patterns for the Most Common API Performance Failures

Identification without resolution is just expensive observation. For each bottleneck category, here’s the first-order fix and expected impact:

- Connection pool exhaustion: Increasing pool size from 50 to 200 on a Node.js API server eliminated connection queue wait time and reduced p99 latency from 3,200ms to 380ms at 500 concurrent users. The fix takes 5 minutes to implement — validation via a follow-up load test using the identical scenario parameters confirms the improvement is real, not theoretical.

- Backend logic inefficiency: Adding database query batching to eliminate an N+1 pattern on a user profile endpoint reduced database calls from 47 per request to 3, dropping p95 from 1,800ms to 210ms.

- Authentication overhead: Extending JWT expiry from 60 seconds to 300 seconds (where security policy permits) reduced token refresh storms by 80% — trading a marginal security window extension for a 4x throughput improvement on the auth endpoint.

Edge Computing and Distributed Architecture: When the Bottleneck Isn’t Your API

Sometimes your API code performs perfectly in isolation — response times under 100ms, clean resource utilization — but end-user experience still degrades. The bottleneck lives in the infrastructure layer: CDN routing inefficiency adding 80–200ms of unnecessary latency, geographic distance between load generators and origin servers skewing results, load balancer misconfiguration distributing traffic unevenly across instances, or upstream third-party API dependencies (payment processors, identity providers) failing under load that your API can’t control.

Designing load tests that distinguish application-layer failures from infrastructure-layer failures requires isolating variables. Configure WebLOAD to record DNS resolution time, TCP connection time, and TTFB as separate metrics. If DNS resolution accounts for more than 15% of total response time at high concurrency, the bottleneck is infrastructure, not your application code. Netflix reportedly achieved a roughly 70% API performance improvement by moving compute to edge locations closer to end users [1], eliminating geographic round-trip overhead that no amount of backend optimization could address.

The diagnostic rule: if latency increases with geographic distance from your origin but not with VU count, the problem is topology. If latency increases with VU count regardless of geography, the problem is capacity.

Embedding API Performance Testing in CI/CD Pipelines: The Shift-Left Playbook

Performance regressions caught in staging cost hours to fix. The same regressions caught in production cost outages, revenue, and customer trust. DORA’s multi-year research program — now housed within Google — consistently finds that teams with automated testing integrated into their deployment pipelines deploy more frequently with lower change failure rates [6]. Shifting performance testing left — into the CI/CD pipeline itself — converts a reactive debugging exercise into an automated quality gate.

The integration pattern follows three pipeline stages, each with a distinct test profile and pass/fail threshold. After unit tests pass, a lightweight smoke test with 50 VUs hits critical endpoints to ensure no catastrophic regressions — this runs in under 2 minutes and catches the obvious breakages. Pre-merge to main triggers a full scenario load test at 500 VUs against a staging environment that mirrors production topology at a minimum 1:4 capacity ratio. Any regression fails the build. Post-deploy to staging runs extended soak tests holding the target VU count for 2–4 hours to catch memory leaks and connection pool drift.

The pass/fail gate that matters: p95 response time must remain within 20% of the established baseline AND error rate must not exceed 0.5% at the target VU count. Hard numbers, automated enforcement, no human judgment required at 2 AM. For teams already running WebLOAD, the CLI integration enables triggering load test scenarios directly from Jenkins, GitLab CI, GitHub Actions, or Azure DevOps — with results piped back to the pipeline as structured JSON for automated gate evaluation.
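The gate itself is a few lines once latencies and error counts are available from the results file. The sketch below uses a nearest-rank percentile and hypothetical function names; it is independent of any tool's output format.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list; pct is an integer 1..100."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def performance_gate(latencies_ms, error_count, baseline_p95_ms,
                     max_regression=0.20, max_error_rate=0.005):
    """Return (passed, p95_ms, error_rate) for one test run."""
    p95 = percentile(latencies_ms, 95)
    error_rate = error_count / len(latencies_ms)
    passed = (p95 <= baseline_p95_ms * (1 + max_regression)
              and error_rate <= max_error_rate)
    return passed, p95, error_rate
```

In a pipeline step, a `False` result would translate into a non-zero exit code so the build fails without anyone reading a dashboard.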

The most common failure in CI/CD performance testing isn’t tooling — it’s environment parity. A staging environment with half the CPU, a quarter of the database connections, and no CDN layer in front of it will produce performance data that is directionally useful but numerically meaningless for SLA validation. If you can’t match production infrastructure, at minimum match the architecture: same number of service instances (even if smaller), same database connection pool configuration, and same load balancer algorithm. The goal is to catch regressions relative to your last baseline, not to predict absolute production performance numbers.

Frequently Asked Questions

Does my load testing tool need native protocol support for gRPC, or can I use a REST-to-gRPC proxy?

A proxy introduces serialization overhead, additional network hops, and connection management artifacts that contaminate your measurements. If your tool sends JSON to a transcoding proxy that converts to Protobuf before forwarding to the gRPC service, you’re measuring proxy performance plus gRPC performance. For meaningful results, your tool must serialize Protobuf natively and manage HTTP/2 streams directly. Otherwise, your p99 latency numbers include 15–40ms of proxy overhead that doesn’t exist in production client-to-service communication.

Is 100% endpoint coverage in load tests worth the investment?

Not always. The Pareto principle applies aggressively to API load testing: typically 10–15% of your endpoints handle 80%+ of production traffic and revenue-critical transactions. Prioritize load testing coverage based on traffic volume, business impact, and architectural complexity (e.g., endpoints that fan out to multiple downstream services). A focused test suite covering your top 15 endpoints with realistic traffic profiles will reveal more actionable bottlenecks than a shallow test across 200 endpoints at unrealistic concurrency levels.

How do I distinguish a token expiration failure from an actual authentication service degradation during a load test?

Correlate the timing of 401 errors against your token TTL and test elapsed time. Token expiration cascades produce a step-function error pattern at a predictable offset (e.g., T+55 minutes for 60-minute tokens). Auth service degradation produces 401 rates that increase proportionally with VU count, often accompanied by rising latency on the auth endpoint itself. Monitor your authorization server’s response time as a separate metric during load tests — if auth response time degrades from 15ms to 800ms as VU count increases, you have an auth infrastructure bottleneck, not a token lifecycle issue.

Should GraphQL load tests use the same concurrency model as REST load tests?

No. REST load tests model concurrency as parallel requests across multiple endpoints. GraphQL concurrency is better modeled as parallel queries of varying complexity against a single endpoint. Your VU mix should include lightweight queries (simulating list views) and heavyweight queries (simulating detail pages with nested relationships) in proportions that match your production query log distribution. Without this mix, you’ll either overestimate throughput (all lightweight queries) or overestimate latency (all heavyweight queries).

What’s the minimum viable rate limit test that catches the most common deployment regressions?

A boundary-burst test combined with a header-validation assertion. Send two request bursts at the window boundary (for fixed-window) or N+1 simultaneous requests (for token bucket), and assert: (1) the expected number of 429s are returned, (2) Retry-After is present, and (3) post-wait retries succeed. This three-assertion test catches the three most common regressions: limit misconfiguration, missing response headers, and broken recovery behavior. Run it on every API gateway config change.

What tool can I use to soak test APIs?

Use a load testing tool that supports long-duration sustained execution with memory-stable load generators, such as WebLOAD, JMeter, k6, or Gatling. The key requirements for soak testing APIs are stable memory footprint over 4–24 hour runs, native HTTP/REST and gRPC protocol support, and the ability to monitor server-side resource drift (heap growth, connection pool exhaustion) alongside client-side latency.

Soak testing APIs reveals failure classes that shorter load tests miss entirely: gradual memory leaks, connection pool saturation, disk fill from excessive logging, and database lock accumulation. Configure the test at 60–70% of peak capacity for at least 4 hours and track memory growth rate, p99 latency drift, and error rate per hour. JMeter’s GUI mode carries significant memory overhead and is not intended for load generation, so run it in non-GUI (CLI) mode for long soaks. k6 and Gatling have lower memory footprints but narrower protocol coverage; enterprise tools like WebLOAD add native server-side monitoring integration that makes drift detection easier.
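Memory growth rate and latency drift both come down to fitting a slope to hourly samples. The sketch below uses an ordinary least-squares slope; the heap readings are invented for the example, and what counts as an acceptable slope is a threshold you would set per service.

```python
def slope_per_hour(samples):
    """Least-squares slope of (elapsed_hours, value) samples."""
    n = len(samples)
    mean_x = sum(x for x, _ in samples) / n
    mean_y = sum(y for _, y in samples) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in samples)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in samples)
    return sxy / sxx


# Hypothetical heap readings in MB, one per hour of a 4-hour soak.
heap_mb = [(0, 512), (1, 530), (2, 548), (3, 566), (4, 584)]
heap_growth_mb_per_hour = slope_per_hour(heap_mb)
```

A slope that stays positive after the warm-up period is the signal to investigate; a heap that rises and then plateaus produces a slope near zero once the early samples are excluded.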

References and Authoritative Sources

1. Zuplo. (n.d.). Solving Poor API Performance: Tips. Zuplo Learning Center. Retrieved from https://zuplo.com/learning-center/solving-poor-api-performance-tips (cited for Netflix’s edge computing microservices architecture achieving approximately 70% API performance improvement).

2. GraphQL Foundation. (n.d.). Performance. GraphQL Official Documentation. Retrieved from https://graphql.org/learn/performance/

3. gRPC Authors / Cloud Native Computing Foundation. (n.d.). FAQ | gRPC. gRPC Official Documentation. Retrieved from https://grpc.io/docs/what-is-grpc/faq/

4. Hardt, D., Ed. (2012). RFC 6749: The OAuth 2.0 Authorization Framework. Internet Engineering Task Force (IETF). Retrieved from https://www.rfc-editor.org/rfc/rfc6749.txt

5. Zoom Developer Forum. (n.d.). Challenges with API Rate Limiting and Data Consistency. Zoom Developer Forum. Retrieved from https://devforum.zoom.us/t/challenges-with-api-rate-limiting-and-data-consistency/101401

6. DORA (DevOps Research and Assessment). (n.d.). Test Automation. DORA / Google. Retrieved from https://dora.dev/capabilities/test-automation/