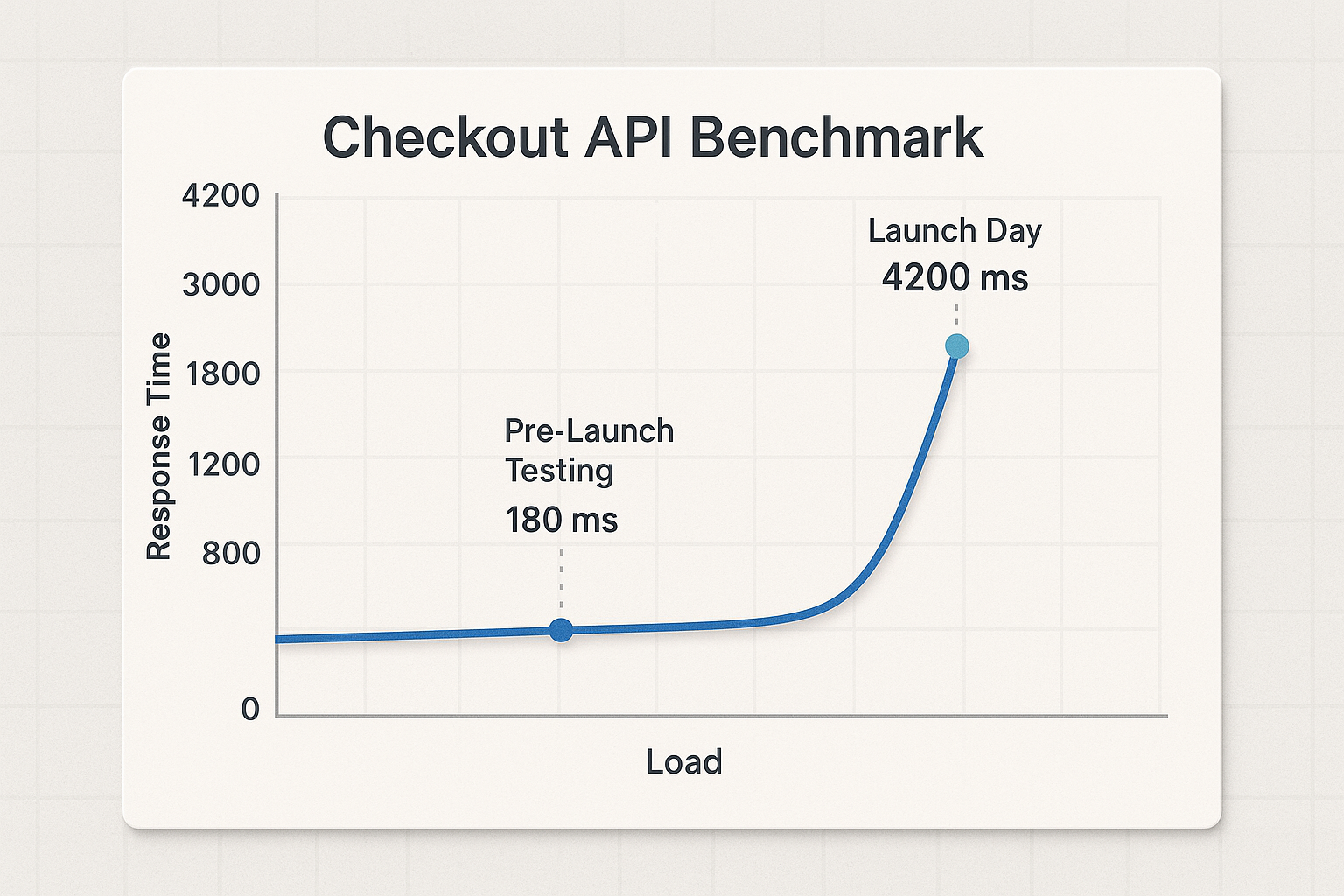

Picture this: a checkout API that cruised at 180ms response times during QA sign-off buckles to 4,200ms under 3,000 concurrent users on launch day. Transactions fail. Revenue evaporates. The post-mortem reveals a database query that ran fine at low concurrency but triggered lock contention at scale – an issue a single well-designed load test would have caught weeks earlier.

Most engineering teams understand they need performance testing. The confusion lies in which methodology to apply, how to interpret the results, and – most critically – what to do once a bottleneck surfaces. Most guides stop at definitions; this one carries through to the fix list.

What you’ll walk away with: a practical framework for selecting the right test type based on your specific risk scenario, a real-world walkthrough from test plan to prioritized fix list, a bottleneck diagnosis playbook covering five detection signatures and three common bottleneck categories, and the evidence-backed business case that gets leadership to fund continuous performance testing. Let’s get into it.

- What Is Performance Testing — And Why Your Team Can’t Afford to Skip It

- The Complete Performance Testing Methodology Map: Which Test Type Do You Actually Need?

- A Real-World Performance Testing Walkthrough: From Test Plan to Actionable Results

- Identifying and Resolving Software Bottlenecks: The Diagnosis-to-Fix Playbook

- Frequently Asked Questions

- Conclusion

- References and Authoritative Sources

What Is Performance Testing — And Why Your Team Can’t Afford to Skip It

Performance testing is the disciplined practice of measuring how a system behaves under realistic load conditions (response time, throughput, resource consumption, and stability) and using those measurements to prevent failures before users encounter them. For a deeper dive into choosing the right testing strategy, check our Complete Guide to Capacity Testing.

The Google SRE Book puts it precisely: “A performance test ensures that over time, a system doesn’t degrade or become too expensive” [1]. That framing matters because it repositions performance testing from a pre-launch gate to a continuous lifecycle signal. A system that needed 8 GB of memory six months ago may now need 32 GB. A 10ms response time can silently creep to 50ms, then 100ms, without anyone noticing until users start abandoning the service.

The reliability math reinforces the investment. Google’s SRE team demonstrates that “it’s possible for a testing system to identify a bug with zero MTTR… The more bugs you can find with zero MTTR, the higher the Mean Time Between Failures (MTBF) experienced by your users” [1]. Catching a performance regression in CI before it reaches production means the outage never happens: a measurable MTBF improvement with zero recovery cost.

For further depth on the SRE reliability framework, Google’s Site Reliability Engineering (SRE) Book is the canonical reference.

The Four Pillars: What Performance Testing Actually Measures

Four dimensions define system performance under load:

Response time — how long individual requests take. Averages are misleading. The SRE Workbook recommends multi-threshold latency measurement: “90% of requests are faster than 100 ms, and 99% of requests are faster than 400 ms” [3]. If your p90 is 95ms but your p99 is 6,000ms, at least 1 in 100 requests takes 6 seconds or longer – and your average will largely hide it. For an in-depth guide on effective performance metrics, see The Performance Metrics That Matter in Performance Engineering.
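To see how an average hides the tail, here is a small Python sketch using a hypothetical sample of 1,000 request latencies (98.5% at 95ms, 1.5% at 6,000ms). The `percentile` helper uses the nearest-rank method; monitoring tools may interpolate differently, so treat the exact values as illustrative.

```python
import math
import statistics


def percentile(samples, p):
    """Nearest-rank percentile: smallest value with at least p% of samples at or below it."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


# Hypothetical sample: most requests are fast, a small tail waits on a contended resource.
latencies_ms = [95] * 985 + [6000] * 15

print("mean:", round(statistics.mean(latencies_ms), 1), "ms")
print("p90: ", percentile(latencies_ms, 90), "ms")
print("p99: ", percentile(latencies_ms, 99), "ms")
```

Here the mean comes out around 184ms, comfortably inside a 200ms target, while p99 is 6,000ms. Only the percentile view exposes the tail.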

Throughput — requests processed per unit time. A system handling 1,200 requests/second at 100 concurrent users that drops to 400 req/s at 500 users has a throughput degradation curve that signals resource contention.

Resource utilization — CPU, memory, disk I/O, network bandwidth, and connection pools on the server side. When CPU exceeds 80% sustained, response time typically becomes non-linear. When connection pools exhaust, requests queue and timeout.
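One way to see why response time turns non-linear at high utilization is the textbook M/M/1 queue, where mean response time is R = S / (1 - ρ) for service time S and utilization ρ. This is a simplifying model (single server, random arrivals, exponential service times), not a prediction for any specific system. The sketch below only shows the shape of the curve, with an assumed 10ms service time.

```python
def mm1_response_time(service_time_ms, utilization):
    """Mean response time of an M/M/1 queue: R = S / (1 - utilization).

    Simplifying assumption: one server, Poisson arrivals, exponential service times.
    """
    if not 0 <= utilization < 1:
        raise ValueError("utilization must be in [0, 1)")
    return service_time_ms / (1 - utilization)


# Assumed 10ms of pure service time per request.
for rho in (0.50, 0.70, 0.80, 0.90, 0.95):
    print(f"utilization {rho:.0%}: mean response {mm1_response_time(10, rho):.0f} ms")
```

Under this model, moving from 80% to 90% utilization doubles mean response time (50ms to 100ms), and 95% doubles it again (200ms).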

Stability — the system’s ability to maintain consistent performance over time without crashes, memory leaks, or degraded throughput. A system that holds steady for 10 minutes but degrades after 4 hours has a stability defect no short test will reveal.

The Business Case: Translating Performance Failures Into Dollars and Decisions

Engineering teams struggle to justify performance testing investment because the cost of not testing is invisible – until it isn’t. Google SRE Chapter 3 provides a concrete calculation framework [2]:

“Proposed improvement in availability target: 99.9% → 99.99% / Proposed increase in availability: 0.09% / Service revenue: $1M / Value of improved availability: $1M × 0.0009 = $900.”

Scale that to a $10M-revenue service, and the same 0.09% improvement is worth $9,000. For a $100M service, common in e-commerce and SaaS, it’s $90,000. That’s the value of a single nine of availability, and performance testing is how you verify you’re achieving it.
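The arithmetic is simple enough to script for your own revenue figures. A minimal sketch of the SRE Book’s formula [2], using `Decimal` so that 99.99% minus 99.9% comes out as exactly 0.0009 rather than a floating-point approximation:

```python
from decimal import Decimal


def value_of_availability_gain(revenue, current, target):
    """Revenue x (target - current), as in the SRE Book Chapter 3 worked example.

    Pass availabilities as strings so Decimal represents them exactly.
    """
    return Decimal(revenue) * (Decimal(target) - Decimal(current))


for revenue in (1_000_000, 10_000_000, 100_000_000):
    gain = value_of_availability_gain(revenue, "0.999", "0.9999")
    print(f"${revenue:,} service: ${gain:,.0f}")
```

This prints $900, $9,000, and $90,000 for the three revenue levels above.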

The error budget model sharpens this further: “If SLO violations occur frequently enough to expend the error budget, releases are temporarily halted while additional resources are invested in system testing” [2]. Performance test results directly govern release velocity. The SRE Workbook adds that “bad pushes cost twice as much error budget as database failures” [3] – suggesting that performance regression testing in CI/CD pipelines can deliver higher ROI than many infrastructure investments. Explore effective methods to integrate performance testing in development pipelines in Integrating Performance Testing in CI/CD Pipelines.
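To make the error budget concrete: an availability SLO of 99.9% leaves 0.1% of the measurement window as budget. The sketch below assumes a 30-day window and time-based accounting (some teams count failed requests instead). `release_allowed` is a hypothetical gate illustrating the halt-releases policy quoted above, not a feature of any particular tool.

```python
from datetime import timedelta


def error_budget(slo, window=timedelta(days=30)):
    """Time the service may be out of SLO during the window: window x (1 - SLO)."""
    return window * (1 - slo)


def release_allowed(slo, bad_minutes_so_far, window=timedelta(days=30)):
    """Hypothetical release gate: allow pushes only while error budget remains."""
    return timedelta(minutes=bad_minutes_so_far) < error_budget(slo, window)


print(error_budget(0.999))  # 30-day budget at a 99.9% SLO
print(release_allowed(0.999, bad_minutes_so_far=30))
print(release_allowed(0.999, bad_minutes_so_far=50))
```

A 99.9% SLO over 30 days yields 43 minutes 12 seconds of budget; after 50 bad minutes the gate returns `False` and releases pause.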

Performance Testing vs. Functional Testing: Where the Lines Are (and Why Both Teams Need to Be in the Room)

| Criterion | Functional Testing | Performance Testing |
|---|---|---|
| Core question | Does the feature work correctly? | Does it hold up under load? |
| Primary owner | QA engineers, developers | Performance engineers, SREs, DevOps |
| When it runs | Every commit (unit/integration) | Pre-release gates + continuous in CI/CD |
| Failure signal | Incorrect output, exception | Latency breach, throughput drop, resource exhaustion |
| Typical duration | Seconds to minutes | Minutes to hours (soak tests: 4–24 hours) |

The Google SRE Book organizes tests into a layered hierarchy of unit, integration, and system tests, with smoke, performance, and regression tests all treated as forms of system test [1]. In mature organizations, these layers aren’t run sequentially. Performance tests run in parallel with functional tests, and results feed directly into release decisions. When QA owns performance testing in isolation and developers don’t see results until release week, the feedback loop is too slow to be actionable.

The Complete Performance Testing Methodology Map: Which Test Type Do You Actually Need?

Before memorizing test type definitions, start with the engineering question you need answered. As the Google SRE Book states, “Engineers use stress tests to find the limits on a web service” [1] – each methodology targets a specific class of risk. Choosing the wrong test type wastes cycles and produces data that doesn’t represent your actual exposure.

Load Testing: Validating Normal and Peak Capacity Before It Becomes a Crisis

Engineering question: Can the system handle expected and peak user volumes within SLO thresholds?

Scenario: An e-commerce checkout API expecting 3,000 concurrent users during a holiday sale. Test configuration: ramp from 0 to 3,000 users over 15 minutes, sustain for 60 minutes. Pass criteria drawn from SLO definitions: p90 response time < 200ms, p99 < 500ms, error rate < 0.1%, server CPU < 75%.

If the test shows p99 at 480ms and CPU at 72%, the system passes. If p99 hits 1,100ms at 2,400 users, you’ve identified your capacity ceiling – and you have time to fix it before users find it for you. For the full SLI/SLO definition framework, see Google SRE Workbook Chapter 2 on Implementing SLOs.
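The pass/fail logic is worth encoding so a pipeline applies it the same way on every run. A minimal Python sketch under stated assumptions: latency samples, error counts, and peak CPU have already been exported from your load tool (the `LoadTestResult` fields are hypothetical names), and percentiles use the nearest-rank method.

```python
import math
from dataclasses import dataclass


@dataclass
class LoadTestResult:
    latencies_ms: list    # one latency per completed request
    errors: int           # failed requests during the steady-state window
    total_requests: int
    peak_cpu_pct: float   # application-server CPU at peak load


def pct(samples, p):
    """Nearest-rank percentile."""
    ordered = sorted(samples)
    return ordered[max(math.ceil(p * len(ordered) / 100), 1) - 1]


def evaluate(result, p90_ms=200, p99_ms=500, max_error_rate=0.001, max_cpu_pct=75):
    """Map each SLO check to True (pass) or False (fail); defaults mirror the scenario above."""
    return {
        "p90": pct(result.latencies_ms, 90) < p90_ms,
        "p99": pct(result.latencies_ms, 99) < p99_ms,
        "error_rate": result.errors / result.total_requests < max_error_rate,
        "cpu": result.peak_cpu_pct < max_cpu_pct,
    }


# Hypothetical runs: same median behavior, different tails.
passing = LoadTestResult([150] * 950 + [480] * 50, errors=0, total_requests=1000, peak_cpu_pct=72)
failing = LoadTestResult([150] * 950 + [1100] * 50, errors=0, total_requests=1000, peak_cpu_pct=72)
print(evaluate(passing))
print(evaluate(failing))
```

A CI step could fail the build whenever any value in the returned dict is `False`.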

Stress Testing: Finding the Breaking Point So Your Users Never Do

Engineering question: At what load level does the system fail, and does it recover gracefully?

Scenario: Start at 100 concurrent users and add 100 every 60 seconds, monitoring continuously. At 800 users, CPU hits 95% on the application server, error rate exceeds 5%, and p99 latency spikes to 8,000ms. As the test continues to 1,000 users, the system starts returning 503s. Load is then reduced to 200 users. Recovery time: 45 seconds before response times normalize.

The critical output isn’t just the breaking point (800 users) – it’s the recovery behavior. A system that requires a manual restart after overload is a fundamentally different risk than one that self-recovers in under a minute. Always document both.
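Both outputs can be computed from step-by-step observations. The sketch below assumes you have exported one (users, p99, error rate) row per step plus p99 readings taken after the load drop. The failure limits (5% errors, 5,000ms p99) and the "three consecutive healthy samples" recovery rule are illustrative choices, not standards, and the sample data is hypothetical, shaped to match the scenario above.

```python
def step_profile(start=100, step=100, interval_s=60, max_users=1000):
    """Yield (elapsed_seconds, target_users) for a stepped stress ramp."""
    users, t = start, 0
    while users <= max_users:
        yield t, users
        users += step
        t += interval_s


def breaking_point(steps, p99_limit_ms=5000, error_limit=0.05):
    """Return the first user count whose p99 or error rate exceeds the limits, else None."""
    for users, p99_ms, error_rate in steps:
        if p99_ms > p99_limit_ms or error_rate > error_limit:
            return users
    return None


def recovery_time_s(samples, p99_slo_ms=500, required_consecutive=3):
    """Seconds after load reduction until p99 stays within SLO for N consecutive samples."""
    streak_start, streak = None, 0
    for t, p99_ms in samples:
        if p99_ms <= p99_slo_ms:
            if streak == 0:
                streak_start = t
            streak += 1
            if streak >= required_consecutive:
                return streak_start
        else:
            streak = 0
    return None


# Hypothetical observations: (users, p99_ms, error_rate) per step.
steps = [(100, 150, 0.0), (200, 155, 0.0), (300, 170, 0.0), (400, 190, 0.0),
         (500, 240, 0.001), (600, 420, 0.004), (700, 1200, 0.01), (800, 8000, 0.06)]
# Hypothetical (seconds since load dropped to 200 users, p99_ms) readings.
after_drop = [(0, 8000), (15, 3000), (30, 900), (45, 420), (60, 400), (75, 390)]

print("schedule:", list(step_profile())[:3], "...")
print("breaking point:", breaking_point(steps), "users")
print("recovery:", recovery_time_s(after_drop), "s")
```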

Spike, Soak, and Scalability Testing: The Tests Most Teams Skip (and Regret)

Spike testing answers: Can the system survive sudden, extreme load jumps? Scenario: 200 concurrent users jump to 2,000 in 30 seconds – simulating a flash sale or viral social post. If the auto-scaler takes 90 seconds to provision new instances but traffic arrives in 30, the gap is your exposure window.
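A small sketch of the spike profile and its exposure window, assuming a linear jump from 200 to 2,000 users over 30 seconds and that scale-out is triggered the instant the spike begins. Real autoscalers react to lagging metrics, so the true window is usually longer than this estimate.

```python
def spike_users(t_s, base=200, peak=2000, ramp_s=30):
    """Target virtual users t_s seconds after the spike starts (linear ramp, then hold)."""
    if t_s <= 0:
        return base
    if t_s >= ramp_s:
        return peak
    return base + (peak - base) * t_s / ramp_s


def exposure_window_s(ramp_s, provision_s):
    """Seconds at full spike load before new instances are ready (trigger assumed at t=0)."""
    return max(provision_s - ramp_s, 0)


print([spike_users(t) for t in (0, 15, 30, 60)])
print("exposure window:", exposure_window_s(ramp_s=30, provision_s=90), "s")
```

With the figures above, the system carries full spike load for roughly 60 seconds on its pre-spike capacity.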

Soak/endurance testing answers: Does the system degrade over extended operation? Scenario: 500 steady-state users for 8 hours. Monitor memory usage per hour. If memory grows from 2.1 GB to 6.8 GB over 8 hours without releasing, you have a leak that no 10-minute load test will ever reveal. The Google SRE Book’s warning about systems becoming “incrementally slower without anyone noticing” [1] describes exactly the failure class soak tests are designed to catch. Learn more about soak testing in How Soak Testing Can Reduce Your Risk.
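Eyeballing a memory graph works for dramatic leaks; a fitted growth rate is easier to automate. The sketch below fits a least-squares slope to (hours, MB) readings and applies the "more than 5% of baseline per hour" heuristic from the FAQ later in this article. The hourly readings are hypothetical, interpolated linearly between the 2.1 GB and 6.8 GB figures above.

```python
def growth_rate_per_hour(samples):
    """Least-squares slope of memory (MB) over time (hours).

    `samples` is a list of (hours_elapsed, memory_mb) readings.
    """
    n = len(samples)
    mean_t = sum(t for t, _ in samples) / n
    mean_m = sum(m for _, m in samples) / n
    cov = sum((t - mean_t) * (m - mean_m) for t, m in samples)
    var = sum((t - mean_t) ** 2 for t, _ in samples)
    return cov / var


def looks_like_leak(samples, max_pct_of_baseline_per_hour=5.0):
    """Flag a likely leak when memory grows faster than N% of the first reading per hour."""
    baseline_mb = samples[0][1]
    return growth_rate_per_hour(samples) > baseline_mb * max_pct_of_baseline_per_hour / 100


# Hypothetical hourly readings over an 8-hour soak.
leaking = [(h, 2100 + 587.5 * h) for h in range(9)]          # 2,100 MB -> 6,800 MB
plateau = [(h, 2100 if h == 0 else 2150) for h in range(9)]  # warms up, then flat

print(f"leaking: {growth_rate_per_hour(leaking):.1f} MB/h, leak={looks_like_leak(leaking)}")
print(f"plateau: {growth_rate_per_hour(plateau):.1f} MB/h, leak={looks_like_leak(plateau)}")
```

Sampling at 1-minute intervals, as the FAQ recommends, gives a better fit than hourly readings; the hourly data here just keeps the example short.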

Scalability testing answers: Does adding infrastructure actually improve performance proportionally? Scenario: measure throughput on 2 application server instances (baseline: 1,800 req/s), then scale to 4 instances. If throughput only reaches 2,400 req/s instead of the expected ~3,600, something – a shared database, a load balancer configuration, a single-threaded service – is bottlenecking horizontal scaling.
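A quick way to quantify "proportionally" is scaling efficiency: observed throughput divided by what perfectly linear scaling would predict. A minimal sketch using the figures above:

```python
def scaling_efficiency(base_nodes, base_rps, scaled_nodes, scaled_rps):
    """Observed throughput as a fraction of ideal linear scaling (1.0 = perfectly linear)."""
    ideal_rps = base_rps * scaled_nodes / base_nodes
    return scaled_rps / ideal_rps


def marginal_rps_per_added_node(base_nodes, base_rps, scaled_nodes, scaled_rps):
    """Throughput gained per node added, to compare against the per-node baseline."""
    return (scaled_rps - base_rps) / (scaled_nodes - base_nodes)


print(f"efficiency: {scaling_efficiency(2, 1800, 4, 2400):.0%}")
print("baseline per node:", 1800 / 2, "req/s")
print("gain per added node:", marginal_rps_per_added_node(2, 1800, 4, 2400), "req/s")
```

The two extra nodes add only 300 req/s each versus 900 req/s per node at baseline, an efficiency of about 67%. Where to draw the line for "near-linear" is a judgment call this sketch does not prescribe.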

Performance Engineer’s Perspective: Soak tests get cut most often because they take time. But a 4-hour soak test that reveals a memory leak is worth more than a dozen 10-minute load tests that miss it. Budget the time.

Choosing the Right Test: A Practical Decision Framework for Your Scenario

| Your Scenario | Test Type | Primary Metric to Watch | Pass/Fail Threshold Example |
|---|---|---|---|
| Validating launch readiness | Load test | p99 latency, error rate | p99 < 500ms, errors < 0.1% |
| Preparing for seasonal peak (Black Friday) | Load + spike test | Throughput under peak, recovery time | Sustain 5,000 users, spike to 8,000, recover < 60s |
| Diagnosing a slow API endpoint | Stress test | Response time vs. concurrency curve | Identify concurrency threshold where p99 exceeds SLO |
| Investigating suspected memory leak | Soak test | Memory growth rate over hours | Memory delta < 5% over 8-hour run |
| Planning infrastructure scaling | Scalability test | Throughput per added instance | Linear or near-linear throughput gain per node |
| Hardening a new microservice | Stress + soak | Breaking point + stability over time | Graceful degradation at 150% capacity, stable for 4+ hours at 100% |

Select your test type based on which SLI – latency, availability, or throughput – you need to validate against your defined SLO threshold. The most effective testing programs also combine types: a load test followed by a spike test on the same release gives a far more complete risk picture than either alone.

A Real-World Performance Testing Walkthrough: From Test Plan to Actionable Results

Let’s walk through a complete engagement: testing a checkout API for an e-commerce platform expecting 3,000 concurrent users during a promotional event. Test window: 90 minutes. The goal isn’t just to run a test – it’s to produce a prioritized list of engineering actions.

Step 1–2: Define Your SLAs and Design a Load Model That Reflects Reality

The SRE Workbook states: “An SLO sets a target level of reliability for the service’s customers. Above this threshold, almost all users should be happy with your service” [3]. Start there.

SLO definitions for this test:

- Checkout API: p90 < 300ms, p99 < 800ms

- Availability: > 99.9% (no more than 3 failed requests per 3,000)

- Error rate: < 0.1%

- Server CPU at peak: < 75%

Load model design – traffic mix based on production analytics:

| User Journey | % of Traffic | Think Time | Key Transactions |
|---|---|---|---|
| Browse catalog | 50% | 8–12s between pages | Search, filter, product view |
| Add to cart | 30% | 5–8s | Cart add, cart view, update quantity |
| Checkout + payment | 20% | 10–15s | Address entry, payment submit, confirmation |

SLI selection should match the journey: the checkout flow is latency-dominated (users abandon if payment confirmation takes too long), while the catalog browse is throughput-dominated (the system must serve high request volume without queuing).
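Most load tools let you express this mix directly; the tool-agnostic Python sketch below shows the underlying logic. It assigns a journey to each virtual user by traffic share, draws think times uniformly from each range (the uniform distribution is an assumption), and applies Little’s Law for a closed workload, X = N / (R + Z), to estimate the request rate 3,000 users generate. The 0.3s mean response time is an assumption borrowed from the p90 target, not a measured value.

```python
import random
from collections import Counter

# Traffic share and think-time range (seconds) per journey, from the load model table.
JOURNEYS = {
    "browse_catalog": (0.50, (8, 12)),
    "add_to_cart": (0.30, (5, 8)),
    "checkout_payment": (0.20, (10, 15)),
}


def pick_journey(rng):
    """Assign a journey to a new virtual user, weighted by traffic share."""
    names = list(JOURNEYS)
    weights = [JOURNEYS[name][0] for name in names]
    return rng.choices(names, weights=weights, k=1)[0]


def think_time_s(journey, rng):
    """Pause between requests, drawn uniformly from the journey's range."""
    low, high = JOURNEYS[journey][1]
    return rng.uniform(low, high)


def mean_think_s():
    """Traffic-weighted mean think time, using each range's midpoint."""
    return sum(share * (low + high) / 2 for share, (low, high) in JOURNEYS.values())


def expected_rps(users, mean_response_s, think_s):
    """Little's Law for a closed workload: throughput X = N / (R + Z)."""
    return users / (mean_response_s + think_s)


rng = random.Random(42)
mix = Counter(pick_journey(rng) for _ in range(10_000))
print({name: round(count / 10_000, 3) for name, count in mix.items()})
print("mean think time:", round(mean_think_s(), 2), "s")
print("estimated load:", round(expected_rps(3000, 0.3, mean_think_s())), "req/s")
```

The estimate treats each think time as applying per request; if your tool pauses per page and each page issues several requests, the request rate is proportionally higher.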

Step 3–4: Environment Setup, Test Execution, and What to Watch in Real Time

Environment parity is non-negotiable. A test run against a 2-node environment when production runs on 8 nodes produces confidence you haven’t earned. Match instance types, database configurations, CDN settings, and network topology as closely as possible. Where full parity isn’t achievable, document the delta and account for it in analysis. For practical insights on preparing your environment, explore 6 Tips for Building a Better Load Testing Environment.

WebLOAD’s integrated analytics dashboard consolidates the monitoring stack – reducing the toolchain complexity of assembling separate load generators, time-series databases, and visualization layers – while supporting protocol-level scripting for multi-step user journeys with session parameterization.

Five real-time metrics to watch during execution (and their investigation thresholds):

- p99 response time — investigate if exceeding SLO by >20% (e.g., >960ms against an 800ms target)

- Active virtual users — confirm ramp profile is executing as designed

- HTTP error rate % — investigate immediately if >0.5%

- Application server CPU % — investigate if sustained >80%

- Memory utilization (MB) — investigate if growth rate exceeds 100MB/hour during sustained load
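These thresholds are easy to codify so alerts fire consistently during a run. A minimal sketch, assuming a metrics snapshot with hypothetical field names. "Sustained" CPU is interpreted here as every recent reading above 80%, and the 5% tolerance on the ramp check is an illustrative choice.

```python
def investigation_alerts(snapshot, p99_slo_ms=800):
    """Return which real-time metrics cross the investigation thresholds."""
    alerts = []
    if snapshot["p99_ms"] > p99_slo_ms * 1.2:  # more than 20% over the SLO
        alerts.append("p99")
    planned = snapshot["planned_users"]
    if abs(snapshot["active_users"] - planned) > 0.05 * planned:  # ramp off-profile
        alerts.append("ramp")
    if snapshot["error_rate_pct"] > 0.5:
        alerts.append("errors")
    cpu = snapshot["recent_cpu_pct"]
    if cpu and min(cpu) > 80:  # sustained: every recent reading above 80%
        alerts.append("cpu")
    if snapshot["memory_growth_mb_per_hour"] > 100:
        alerts.append("memory")
    return alerts


# Hypothetical snapshot mid-run.
snapshot = {
    "p99_ms": 1000, "active_users": 2000, "planned_users": 2000,
    "error_rate_pct": 0.2, "recent_cpu_pct": [82, 85, 79], "memory_growth_mb_per_hour": 40,
}
print(investigation_alerts(snapshot))
```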

When p99 starts climbing while p50 remains flat, you’re watching a bottleneck form in real time – the majority of requests are fine, but a growing tail is hitting a contended resource.

Step 5: Interpreting Results and Turning Data Into Prioritized Engineering Actions

This is the step most guides omit. Three questions structure your analysis:

Where did the system degrade? At 2,000 concurrent users, p99 latency spiked from 320ms to 1,200ms – exceeding the 800ms SLO. Error rate remained below 0.1%.

Why did it degrade? Correlating request-level latency with server metrics: database node CPU hit 98%, and query wait time accounted for 82% of total response time. The application server CPU remained at 45%, so the app layer wasn’t the constraint.

What’s the highest-priority fix? The database bottleneck. Profiling revealed a missing index on the orders table’s user_id column, causing full table scans under concurrent checkout load. After adding the index and rerunning the test: p99 dropped to 340ms at 3,000 concurrent users. SLO met. Ship it.
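The article doesn’t name the database engine, so the sketch below uses Python’s built-in `sqlite3` module as a stand-in to show how you might confirm this kind of diagnosis: inspect the query plan before and after adding the index. The `orders` columns other than `user_id`, the row counts, and the index name `idx_orders_user_id` are hypothetical; PostgreSQL and MySQL have their own `EXPLAIN` output formats.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical schema: only orders.user_id comes from the scenario above.
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, user_id INTEGER, total_cents INTEGER)")
conn.executemany(
    "INSERT INTO orders (user_id, total_cents) VALUES (?, ?)",
    [(i % 5000, 1999) for i in range(50_000)],
)


def plan(sql, params=()):
    """Return the human-readable detail column of SQLite's EXPLAIN QUERY PLAN."""
    return [row[-1] for row in conn.execute("EXPLAIN QUERY PLAN " + sql, params)]


query = "SELECT id, total_cents FROM orders WHERE user_id = ?"
before = plan(query, (42,))
conn.execute("CREATE INDEX idx_orders_user_id ON orders (user_id)")
after = plan(query, (42,))

print("before:", before)  # full table scan
print("after: ", after)   # index search
```

Before the index, SQLite reports a `SCAN` of `orders`; afterward it reports a `SEARCH` using the new index. Rerunning the load test at the same concurrency remains the real verification.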

The SRE Book’s error budget model governs what happens next: if SLO violations have already consumed the error budget, “releases are temporarily halted while additional resources are invested in system testing” [2]. Results drive release decisions – not opinions.

Performance Engineer’s Perspective: The most common mistake is treating performance test output as a report to file. Results only have value when they produce a specific, prioritized action – “add index to orders.user_id” beats “investigate database performance” every time.

Identifying and Resolving Software Bottlenecks: The Diagnosis-to-Fix Playbook

Software bottlenecks restrict throughput by creating resource contention at a single point in the processing chain. A database query that runs in 5ms for 10 users can take 2,000ms for 1,000 users due to lock contention – and this class of issue only surfaces under load. No amount of functional testing will find it.

The playbook below starts with five detection signatures that show a bottleneck forming, then covers three common bottleneck categories and how to resolve them.

Phase 1 — Detection: The Metric Signatures That Tell You a Bottleneck Is Forming

Stop watching averages. The SRE Workbook makes the case definitively: “If 90% of users’ requests return within 100 ms, but the remaining 10% take 10 seconds, many users will be unhappy” [3]. Percentile divergence – p99 spiking while p50 stays flat – is the earliest bottleneck signal.

Five detection signatures to configure before every test run:

| Signature | What It Indicates | Instrumentation |
|---|---|---|
| p99 rising, p50 stable | Resource contention affecting tail requests | Application-level latency histograms |
| Error rate spike at specific concurrency | Capacity ceiling reached | HTTP status code monitoring |
| Memory growth without plateau | Memory leak (object retention, unclosed connections) | Heap/RSS monitoring at 1-minute intervals |
| Queue depth increasing linearly | Thread pool or connection pool saturation | Middleware/app server metrics |
| CPU >90% on one node, <40% on others | Unbalanced load distribution or single-point bottleneck | Per-node infrastructure monitoring |
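The first signature, p99 rising while p50 holds steady, can be checked automatically per monitoring interval. A minimal sketch, assuming you have one (p50, p99) pair per interval with the first interval as the baseline; the 2x p99 growth and ±20% p50 tolerance are illustrative thresholds, not standard values.

```python
def tail_divergence(windows, p99_growth=2.0, p50_tolerance=0.2):
    """Return indexes of intervals where p99 grew past the threshold while p50 stayed flat.

    `windows` is a list of (p50_ms, p99_ms); windows[0] is the baseline.
    """
    base_p50, base_p99 = windows[0]
    flagged = []
    for i, (p50, p99) in enumerate(windows[1:], start=1):
        p50_flat = abs(p50 - base_p50) <= base_p50 * p50_tolerance
        if p50_flat and p99 >= base_p99 * p99_growth:
            flagged.append(i)
    return flagged


# Hypothetical intervals: the tail degrades at indexes 2 and 3; at index 4 the median moves too.
windows = [(80, 300), (82, 320), (85, 700), (84, 1200), (150, 1500)]
print(tail_divergence(windows))
```

Index 4 is not flagged because the median also shifted, which is a different pattern (the whole distribution moving) from the tail-only signature.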

Three common bottleneck categories with resolution patterns:

Database bottlenecks: The most frequent offender. Detection: query wait time dominates response time; database CPU spikes while app CPU idles. Root cause examples: missing indexes, N+1 query patterns, lock contention on hot tables. Resolution: query optimization, index creation, read replica routing. Verification: retest at the same concurrency and confirm p99 improvement.

Thread/connection pool exhaustion: Detection: response times plateau, then fall off a cliff, with all requests slowing simultaneously rather than only a tail. Root cause: pool size undersized for concurrency, or slow downstream calls holding connections too long. Resolution: increase pool size (with monitoring for memory impact), add circuit breakers, optimize downstream call latency.
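Little’s Law gives a first-cut check on pool sizing: connections in use equal the request arrival rate times how long each request holds a connection. A minimal sketch; the 25% headroom factor and the traffic figures are assumptions for illustration.

```python
import math


def min_pool_size(arrival_rps, hold_time_s, headroom=1.25):
    """Connections needed = arrival rate x hold time (Little's Law), plus headroom."""
    return math.ceil(arrival_rps * hold_time_s * headroom)


def pool_ceiling_rps(pool_size, hold_time_s):
    """Maximum requests per second the pool can complete once every connection is busy."""
    return pool_size / hold_time_s


print("pool needed at 300 req/s, 50 ms hold:", min_pool_size(300, 0.05))
print("ceiling of a 20-connection pool at 400 ms hold:", pool_ceiling_rps(20, 0.4), "req/s")
```

If a downstream dependency slows from 50ms to 400ms, a 20-connection pool that comfortably served 300 req/s can complete at most about 50 req/s, and every other request waits in the queue. That is the plateau-then-cliff pattern, and it is why fixing downstream latency or adding a circuit breaker often matters more than simply growing the pool.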

Memory leaks: Detection: memory grows linearly during a soak test without reaching a plateau. Root cause: unclosed database connections, cached objects growing unbounded, event listener accumulation. Resolution: heap analysis to identify retaining references, connection pool lifecycle fixes, bounded cache configuration.
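For the "cached objects growing unbounded" case, the usual fix is a size limit with an eviction policy. A minimal LRU sketch using `collections.OrderedDict`; for memoizing pure functions, the standard library’s `functools.lru_cache(maxsize=...)` does the same job. The cache size and cached values here are arbitrary.

```python
from collections import OrderedDict


class BoundedCache:
    """LRU cache that evicts the least recently used entry once max_entries is exceeded."""

    def __init__(self, max_entries=1000):
        self.max_entries = max_entries
        self._data = OrderedDict()

    def get(self, key, default=None):
        if key not in self._data:
            return default
        self._data.move_to_end(key)  # mark as most recently used
        return self._data[key]

    def put(self, key, value):
        self._data[key] = value
        self._data.move_to_end(key)
        if len(self._data) > self.max_entries:
            self._data.popitem(last=False)  # evict the least recently used entry

    def __len__(self):
        return len(self._data)


cache = BoundedCache(max_entries=3)
for user_id in range(10):
    cache.put(user_id, {"user_id": user_id})
print(len(cache))  # stays at max_entries no matter how many keys arrive
```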

RadView’s platform accelerates the diagnosis phase through correlation capabilities that link request-level latency degradation directly to server-side resource metrics, helping teams identify which resource is contended without manually cross-referencing dashboards. For more on identifying bottlenecks effectively, see Test & Identify Bottlenecks in Performance Testing.

For peer-reviewed research on performance analysis methodologies, Carnegie Mellon SEI’s Software Engineering Research provides deep foundational context.

Frequently Asked Questions

Is 100% performance test coverage worth the investment?

Not always. Covering every endpoint and user journey with dedicated performance tests produces diminishing returns. Focus on the 20% of transactions that drive 80% of business value and risk, typically authentication, checkout/payment flows, search, and any endpoint with database write operations. A targeted load test on 5 critical API endpoints at realistic concurrency delivers more actionable data than a broad test across 50 endpoints at unrealistic volumes.

How do I convince leadership to fund continuous performance testing when nothing is broken yet?

Use the SRE cost-per-nine framework [2]. Calculate your service’s revenue, multiply by the availability improvement you’re targeting, and compare against the cost of the testing program. For a $10M-revenue service, improving from 99.9% to 99.99% is worth $9,000 per year in prevented downtime value. Frame it as insurance with measurable ROI, not QA overhead.

Should performance tests run in production or a staging environment?

Both, for different purposes. Staging environments (matched to production topology) are where you run destructive tests – stress tests, spike tests, and failure-mode exploration. Production is where you run synthetic monitoring and lightweight canary tests to validate that deployed performance matches pre-release benchmarks. Never run stress tests in production unless you have traffic isolation and circuit-breaking in place.

What’s the minimum soak test duration to catch memory leaks reliably?

Four hours is the practical minimum for most applications; 8–12 hours catches slower leaks. Monitor memory at 1-minute intervals and calculate the growth rate per hour. If memory grows by more than 5% of baseline per hour without plateauing, you likely have a leak. Some leaks only manifest after garbage collection cycles stabilize, which in JVM-based applications can take 60–90 minutes.

Can AI replace human judgment in performance test analysis?

Not today, but it’s reducing toil significantly. AI-assisted analysis can flag anomalous metric patterns, correlate latency spikes with resource contention, and suggest probable root causes faster than manual dashboard review. The human role shifts from “find the problem” to “validate the finding and decide the fix.” WebLOAD incorporates AI-assisted correlation to accelerate this workflow, but the final prioritization – which fix ships first, which risk is acceptable – remains an engineering decision.

Conclusion

Performance testing succeeds when it produces a prioritized action list, not a dashboard screenshot. The methodology you choose – load, stress, spike, soak, or scalability – should be driven by the specific risk you’re mitigating, validated against SLO thresholds drawn from real business requirements, and followed by a diagnosis-to-fix workflow that turns data into deployed improvements. The teams that treat performance testing as a continuous engineering signal rather than a pre-launch checkbox are the ones shipping reliably at scale.

Performance benchmarks, latency thresholds, and cost-benefit figures cited in this article are illustrative examples based on documented engineering principles and publicly available research. Actual results will vary based on application architecture, infrastructure configuration, and workload characteristics. All tool-specific guidance reflects capabilities at time of publication; consult current product documentation for the latest features.

References and Authoritative Sources

- Perry, A., & Luebbe, M. (2016). Chapter 17 – Testing for Reliability. In B. Beyer, C. Jones, J. Petoff, & N. R. Murphy (Eds.), Site Reliability Engineering: How Google Runs Production Systems. Google/O’Reilly Media. Retrieved from https://sre.google/sre-book/testing-reliability/

- Alvidrez, M., & Roth, M. (2016). Chapter 3 – Embracing Risk. In B. Beyer, C. Jones, J. Petoff, & N. R. Murphy (Eds.), Site Reliability Engineering: How Google Runs Production Systems. Google/O’Reilly Media. Retrieved from https://sre.google/sre-book/embracing-risk/

- Thurgood, S., Ferguson, D., Hidalgo, A., & Beyer, B. (2018). Chapter 2 – Implementing SLOs. In B. Beyer, N. R. Murphy, D. K. Rensin, K. Kawahara, & S. Thorne (Eds.), The Site Reliability Workbook. Google/O’Reilly Media. Retrieved from https://sre.google/workbook/implementing-slos/