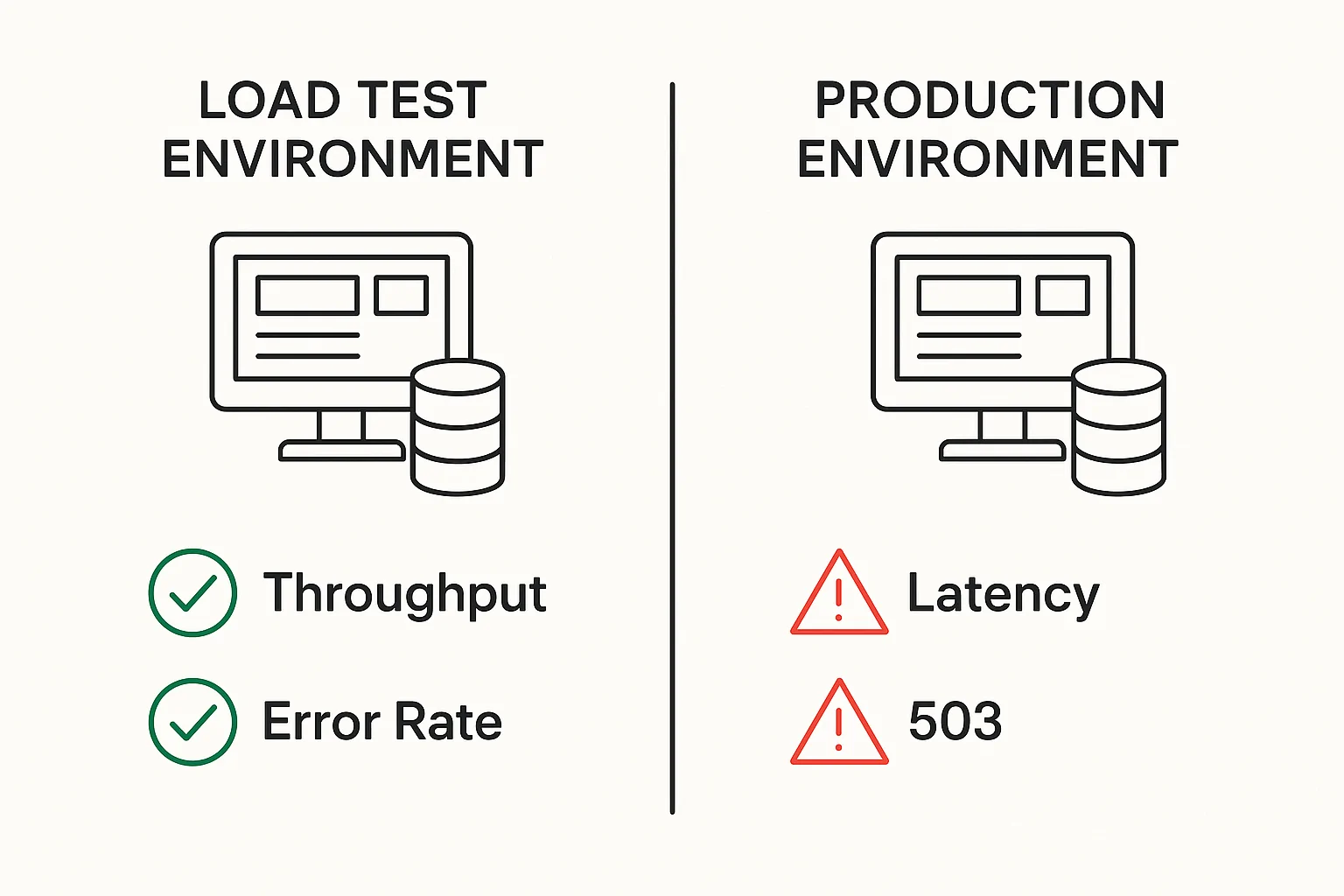

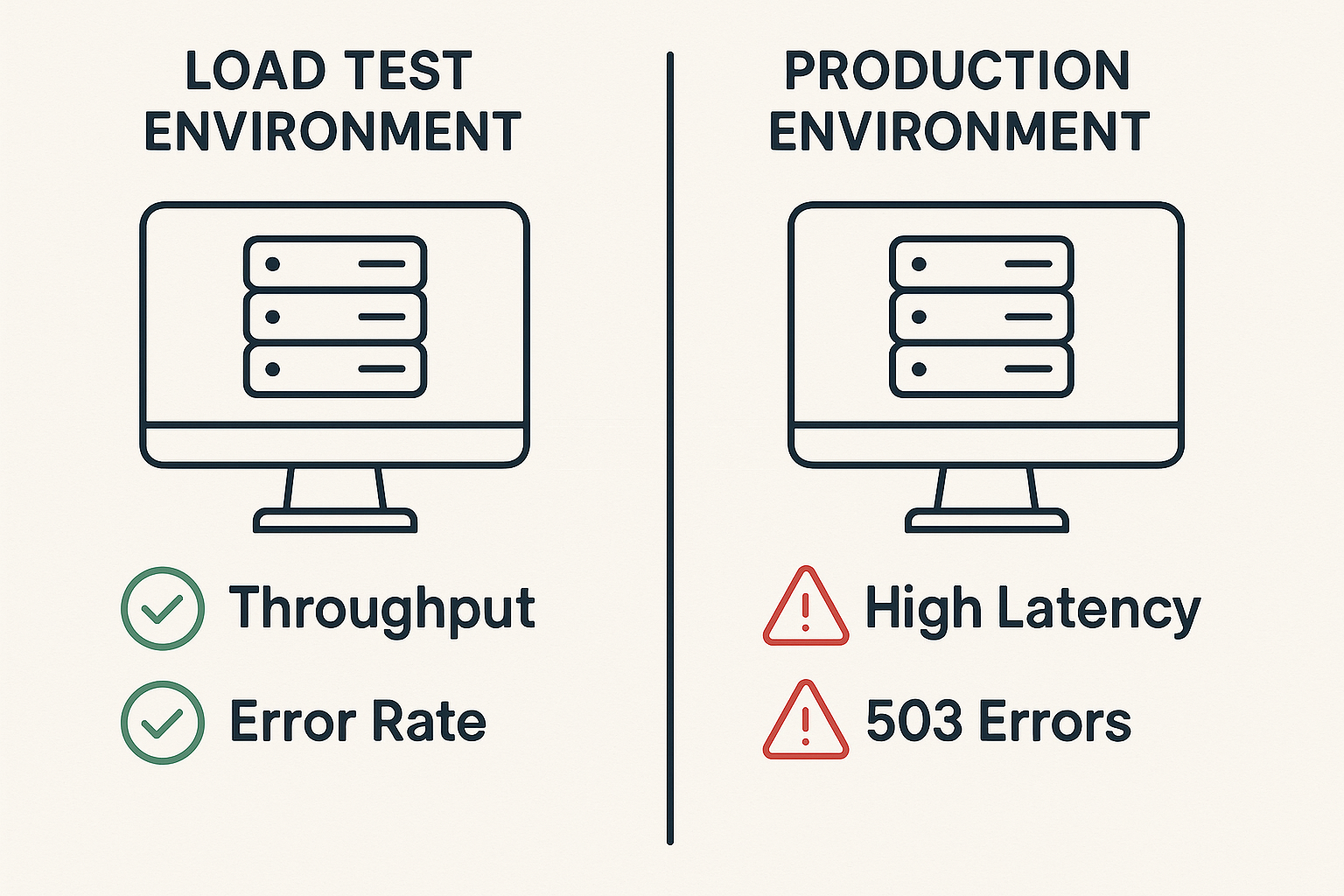

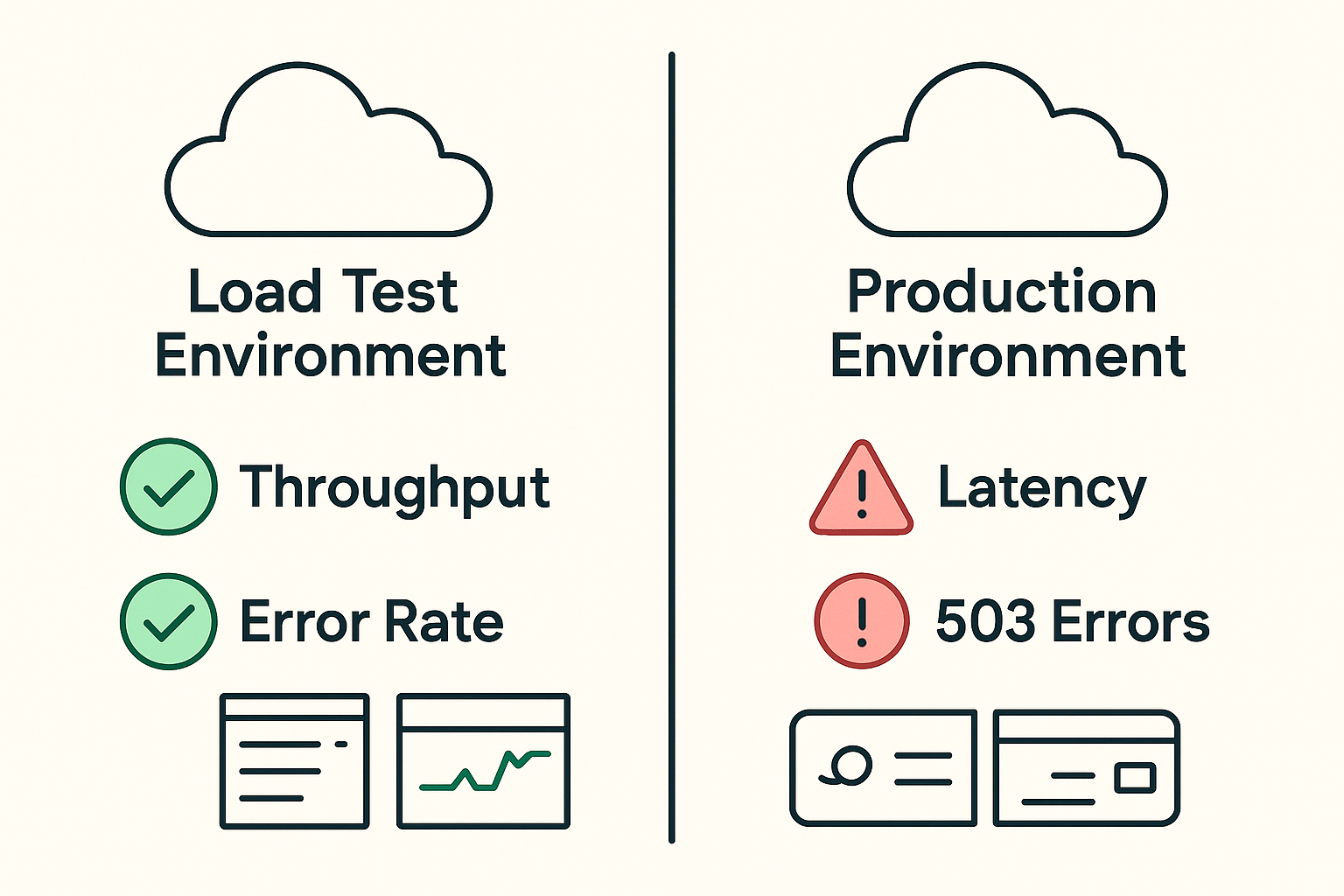

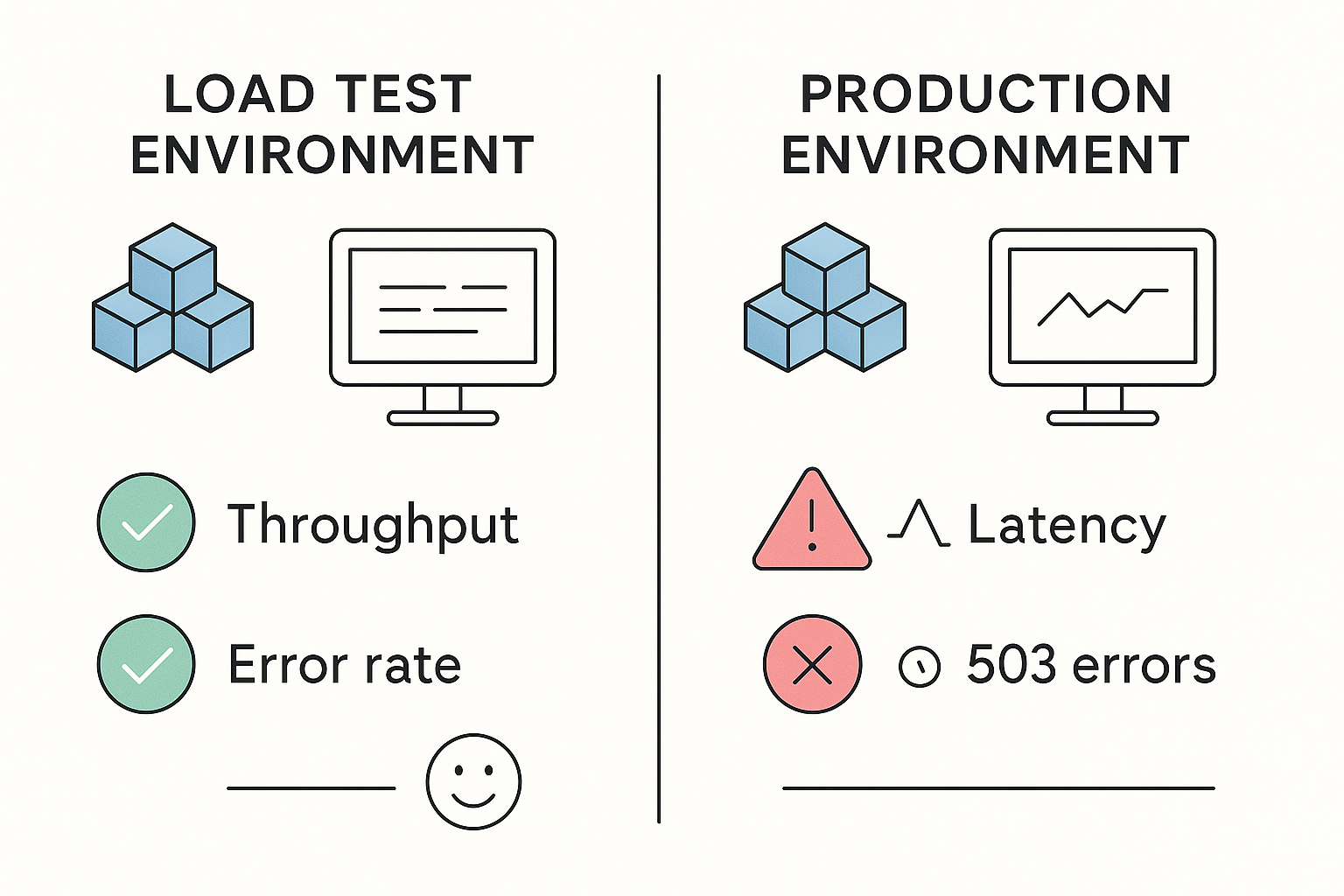

You’ve seen it happen. The load test dashboard is green across the board — throughput looks healthy, error rates are near zero, response times are well within SLA. The team ships with confidence. Then real users arrive, and within 90 minutes, p99 latency climbs past 4 seconds, the checkout service starts throwing 503s, and an engineer is pulling an emergency rollback at 2 AM.

The test passed. Production didn’t.

This gap between what load tests reveal and what production actually experiences is not a tooling problem; it’s a methodology problem. A landmark 2002 NIST study estimated that inadequate software testing infrastructure costs the U.S. economy approximately $59.5 billion annually, with over half of all software defects going undetected until they reach downstream production environments [1]. A peer-reviewed survey of 252 software testing professionals found that 89.3% of organizations skip critical pre-production testing combinations entirely [3]. These are not edge cases. They are the industry default.

If you’re a QA lead, performance engineer, SRE, or DevOps manager who has lived through the scenario above, this article is your remediation playbook. We’re not going to simply list mistakes — you can find shallow listicles anywhere. Instead, we’ll break down the five most costly load testing errors with root-cause analysis, quantified business impact, and step-by-step fixes you can implement in your next sprint. Where applicable, we’ll show how modern tooling — specifically WebLOAD by RadView — operationalizes each fix at the platform level.

For those looking for guidance on how to conduct a load test effectively, understanding the fundamentals is crucial. Here’s what we’ll cover: unrealistic workload models, environment misconfiguration, missing correlation and hardcoded data, tests that are too short to surface real failures, and skipping baseline comparisons. Let’s get into it.

- Why Load Testing Mistakes Are So Costly (And So Common)

- Mistake #1: Using an Unrealistic Workload Model That Doesn’t Reflect Production Traffic

- Mistake #2: Running Tests in a Poorly Configured or Non-Production-Like Environment

- Mistake #3: Ignoring Correlation and Hardcoded Data in Test Scripts

- Mistake #4: Running Tests That Are Too Short and Missing Steady-State Behavior

- Mistake #5: Skipping Baseline Tests and Running Comparisons Without a Control

- Frequently Asked Questions

- Conclusion

- References and Authoritative Sources

Why Load Testing Mistakes Are So Costly (And So Common)

Before we walk through specific mistakes, it’s worth understanding why flawed load tests are uniquely expensive compared to other testing failures — and why even experienced teams keep making them.

The Hidden Price Tag: What a Single Bad Test Run Costs Your Team

A load test that produces misleading results doesn’t just waste the hours spent running it. It creates a false confidence signal that propagates through the entire release pipeline. Engineering signs off. Product ships. And when production degrades, the cost multiplies: emergency hotfixes consume senior engineer time at 3–5x the cost of planned work, SLA breach penalties kick in for enterprise contracts, and user trust erodes in ways that don’t recover quickly.

The NIST study quantified this multiplier effect directly: identifying and correcting defects during software development accounts for approximately 80% of total development costs, and that percentage climbs steeply when defects escape to production [1]. At hyperscale, Meta’s ServiceLab team reported that performance regressions caught through pre-production testing each year amount to the capacity of more than one million machines [2]. Even a 0.01% regression on half a million machines equates to 50 lost machine-equivalents (500,000 × 0.0001 = 50). Translate that to a mid-size enterprise running a few hundred cloud instances, and a single missed bottleneck can represent tens of thousands of dollars in wasted infrastructure per month.

Why These Mistakes Keep Happening: The Systemic Root Causes

Load testing mistakes persist not because engineers are careless, but because of structural forces working against test fidelity. The Sophocleous & Kapitsaki survey found that 39.7% of participants use only half of their system requirements to design test cases [3], meaning a large share of organizations are systematically under-testing before they even begin execution. Combine this with rushed sprint timelines that compress test windows, over-reliance on tool defaults that don’t match production reality, and the absence of a documented, repeatable testing process, and you get a recipe for recurring failures.

For those exploring different performance testing methodologies, it’s beneficial to understand the 4 types of load testing and when each should be used. The CMU Software Engineering Institute’s testing recommendations for reliability and performance specifically address why non-deterministic environments produce unreliable results. The root causes are systemic, which means the fixes must be systemic too.

Mistake #1: Using an Unrealistic Workload Model That Doesn’t Reflect Production Traffic

This is the single most consequential load testing mistake, and it’s the one most likely to produce a test that “passes” while hiding real capacity problems. An unrealistic workload model means your virtual users don’t behave like real users — they hit endpoints too uniformly, skip branching logic, ignore geographic latency, and execute transactions at machine speed rather than human speed.

Those aiming to avoid these pitfalls might benefit from exploring best practices for testing web applications to ensure they reflect real-world scenarios. The Meta ServiceLab team — publishing peer-reviewed research at USENIX OSDI 2024 — stated it directly: “Performance data from Google’s Gmail shows that workloads change constantly, both QPS and response size. Hence we need to do real production traffic record and replay” [2]. They also found that “the top reason for false negatives is that a newly introduced feature is not tested since the requests for replaying were recorded when this feature does not exist” [2]. Static workload models create systematic blind spots.

What ‘Realistic’ Actually Means: The 4 Dimensions of a Production-Accurate Workload Model

A workload model that reflects production reality must account for four dimensions simultaneously:

- Concurrency levels matching real peak traffic. Model peak concurrency at 120–150% of your observed production peak to account for organic traffic spikes. If your analytics show 2,000 concurrent sessions during peak hours, your test should ramp to at least 2,400–3,000.

- Representative user journeys. Your transaction mix should mirror actual navigation paths. If 60% of production traffic hits the product catalog, 25% reaches the cart, and only 8% completes checkout, your virtual user mix must reflect those ratios — not a uniform distribution across all endpoints.

- Realistic think time distributions. Real users pause between actions. A product browsing phase typically takes 5–20 seconds; cart review takes 10–30 seconds. A flat 1-second think time — or worse, zero think time — inflates effective VU load by an order of magnitude and overwhelms server-side resources in ways production traffic never would.

- Geographic and device-mix distribution. If 40% of your users are on mobile connections from Southeast Asia and 30% are on desktop from North America, your test should replicate those latency profiles and connection characteristics.
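As a rough illustration of the first two dimensions, the sketch below derives a target concurrency from an observed peak and assigns one journey per virtual user according to the production mix. The journey names, the `other` bucket that absorbs the 7% of traffic the example ratios leave unaccounted for, and both function names are assumptions made for this example; they are not part of any tool’s API.

```python
import random

# Transaction mix from the example above; "other" is an assumed bucket
# for the 7% of traffic that the three named journeys do not cover.
JOURNEY_MIX = {"catalog": 0.60, "cart": 0.25, "checkout": 0.08, "other": 0.07}


def target_concurrency(observed_peak: int, headroom: float = 1.2) -> int:
    """Scale the observed production peak by a headroom factor (1.2 to 1.5)."""
    return round(observed_peak * headroom)


def assign_journeys(vu_count: int, mix: dict[str, float], seed: int = 0) -> list[str]:
    """Pick one journey per virtual user, weighted by the production mix."""
    rng = random.Random(seed)
    names = list(mix)
    weights = [mix[name] for name in names]
    return rng.choices(names, weights=weights, k=vu_count)


if __name__ == "__main__":
    vus = target_concurrency(2000, headroom=1.2)
    journeys = assign_journeys(vus, JOURNEY_MIX)
    print(vus, {name: journeys.count(name) for name in JOURNEY_MIX})
```

Weighted sampling keeps the mix close to the target ratios without forcing exact counts, which is usually closer to how real traffic behaves than a fixed allocation.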

WebLOAD by RadView operationalizes all four dimensions through real browser-based virtual users that execute JavaScript, handle dynamic content rendering, and support parameterized user journey scripting with variable think time distributions.

Think Time and Pacing: The Two Knobs Most Teams Set Wrong

Think time (the delay between a user’s page interactions) and pacing (the interval between a virtual user’s transaction repetitions) are the two most frequently misconfigured scripting parameters. Teams either omit think time entirely — producing a stress test when they intended a load test — or use a single flat value that doesn’t reflect the variance in real user behavior.

Consider a login-to-checkout flow: a realistic model includes 5–8 seconds on product search, 15–20 seconds reviewing product details, and 3–5 seconds confirming the cart, or 23–33 seconds of think time per journey. Replace all of these with a flat 1-second delay (3 seconds in total), and each virtual user cycles through the journey roughly 8–11x as often, before response time is counted. Your 500-VU test is now hammering the application like roughly 3,800–5,500 users would. The bottlenecks you find won’t match production, and the bottlenecks you miss will.

A zero-second think time on a journey that normally includes 60 seconds of pauses multiplies each VU’s request rate by roughly 60x if the requests themselves take about a second in total. That’s not a load test; it’s a denial-of-service simulation.
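The multiplier can be estimated with a few lines of arithmetic. The sketch below assumes a closed workload in which each virtual user completes one journey every (think time + response time) seconds, so the ratio of the two cycle times is the ratio of the request rates. The function name and the response-time values are assumptions chosen for illustration.

```python
def load_multiplier(realistic_think_s: float, test_think_s: float, response_s: float) -> float:
    """How much harder one VU drives the system when think time is shortened.

    A VU completes one journey every (think + response) seconds, so the
    ratio of the two cycle times is the ratio of the request rates.
    """
    realistic_cycle = realistic_think_s + response_s
    test_cycle = test_think_s + response_s
    if test_cycle <= 0:
        raise ValueError("cycle time must be positive")
    return realistic_cycle / test_cycle


if __name__ == "__main__":
    # Three steps with 5-8 s, 15-20 s and 3-5 s of think time, versus a flat 1 s each.
    low = load_multiplier(5 + 15 + 3, 3, response_s=0.0)
    high = load_multiplier(8 + 20 + 5, 3, response_s=0.0)
    print(f"{low:.1f}x to {high:.1f}x")
    # 60 s of think time removed entirely, with an assumed 1 s of total response time.
    print(f"{load_multiplier(60, 0, response_s=1.0):.0f}x")
```

Including a realistic response time lowers the multiplier, which is why the figure should be recomputed from your own measurements rather than taken as a constant.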

How to Build a Production-Accurate Workload Model: A Step-by-Step Framework

- Extract traffic data from production logs or APM tools. Pull at least two weeks of access logs (Apache, NGINX, or your CDN’s analytics) to identify peak concurrency, request distribution by endpoint, and diurnal traffic patterns.

- Identify top user journeys by transaction volume. Rank the 5–8 most common navigation paths and their relative frequency. Map each path to a scripted virtual user scenario with the correct transaction mix ratio.

- Parameterize think times from session recordings. Use real user monitoring (RUM) data or session replay tools to extract actual inter-action delays. Apply these as randomized distributions (e.g., a normal distribution with a mean of 12 seconds and a standard deviation of 4 seconds for product browsing) rather than fixed values.

- Model geographic and device distribution. Configure load generators in regions matching your user base. WebLOAD by RadView supports distributed load generation across cloud regions, allowing you to replicate geographic latency profiles without deploying dedicated infrastructure in each location.

- Validate with a baseline comparison run. Before full-scale execution, run a small-scale validation test (50–100 VUs) and compare response time distributions against production APM data. If the median and p95 don’t align within 15–20%, your model needs recalibration.
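The first and third steps above can be sketched in a few lines of Python. This is a minimal sketch, assuming access logs in the common or combined log format and the normal think-time distribution from the example (mean 12 seconds, standard deviation 4 seconds); the function names and the 1-second floor are hypothetical choices, not something the framework prescribes.

```python
import random
import re
from collections import Counter

# Matches the request part of a common/combined log line, e.g. "GET /cart HTTP/1.1".
REQUEST_RE = re.compile(r'"(?:GET|POST|PUT|DELETE|PATCH|HEAD) (\S+) HTTP/[\d.]+"')


def endpoint_mix(log_lines):
    """Return each path's share of total requests, with query strings stripped."""
    counts = Counter()
    for line in log_lines:
        match = REQUEST_RE.search(line)
        if match:
            counts[match.group(1).split("?", 1)[0]] += 1
    total = sum(counts.values())
    return {path: n / total for path, n in counts.items()} if total else {}


def sample_think_time(rng, mean_s=12.0, stdev_s=4.0, floor_s=1.0):
    """Draw a think time from a normal distribution, clamped to an assumed floor."""
    return max(floor_s, rng.gauss(mean_s, stdev_s))


if __name__ == "__main__":
    sample = [
        '203.0.113.5 - - [10/Oct/2024:13:55:36 +0000] "GET /catalog?page=2 HTTP/1.1" 200 2326',
        '203.0.113.9 - - [10/Oct/2024:13:55:37 +0000] "POST /cart HTTP/1.1" 200 512',
    ]
    print(endpoint_mix(sample))
    print(round(sample_think_time(random.Random(42)), 1))
```

The clamp matters because a normal distribution can produce negative or near-zero values, which would reintroduce the zero-think-time problem for a small fraction of iterations.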

Meta’s ServiceLab team follows a production traffic record-and-replay methodology at hyperscale [2]. Enterprise teams can approximate this approach using log-based workload extraction and parameterized scripting — the key is grounding every model assumption in observed data, not engineering intuition.

Mistake #2: Running Tests in a Poorly Configured or Non-Production-Like Environment

Environment misconfiguration is insidious because it produces results that look valid. Response times are consistent, error rates are low, throughput curves are smooth. The problem is that the environment itself is distorting every measurement — and you won’t discover this until production reveals what the test environment was hiding.

For further insights on building reliable environments, RadView provides 6 tips for building a better load testing environment. The Meta ServiceLab research documented that uncontrolled test environment variance — CPU architecture, kernel version, datacenter region — introduces noise that masks regressions [2]. If your test environment doesn’t match production topology, your results measure the test environment’s behavior, not your application’s.

The Cloud Environment Trap: Why Burst Credits and Shared Resources Corrupt Your Results

Cloud infrastructure introduces environment pitfalls that don’t exist on bare metal. AWS T2 and T3 instances use a CPU burst credit mechanism: they accumulate credits during idle periods and spend them during high-utilization phases. A 10-minute load test may run entirely on burst credits, showing stable response times. A 45-minute test on the same instance can exhaust those credits, at which point an instance running in standard mode is throttled to its baseline, which ranges from 5% to 40% of a vCPU depending on instance size.

This is a well-documented failure pattern: during pre-Black Friday load testing, a test appeared stable until burst credits depleted mid-run, masking a real capacity constraint that would have surfaced under sustained production traffic. EBS I/O credits exhibit the same pattern, and RDS instances don’t always expose their remaining I/O credit balance in monitoring dashboards.

Mitigation: Use compute-optimized, fixed-performance instances (e.g., C-family on AWS) for load generators. If you must use burstable instances, pre-warm them under sustained load for at least 30 minutes before capturing any measurement data.
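To judge whether a planned test is long enough to outlast the burst window, a back-of-the-envelope estimate helps. The sketch below relies on the AWS definition that one CPU credit equals one vCPU running at 100% for one minute. The function name and the example figures (a starting balance of 48 credits and an earn rate of 24 credits per hour) are illustrative assumptions; check the actual balance and earn rate for your instance type before relying on the result.

```python
def minutes_until_credits_depleted(
    credit_balance: float,
    vcpus: int,
    utilization: float,
    credits_earned_per_hour: float,
) -> float:
    """Estimate how long a burstable instance sustains a given CPU utilization.

    One CPU credit is one vCPU running at 100% for one minute, so an
    instance spends vcpus * utilization * 60 credits per hour.
    Returns float("inf") when the instance earns at least as much as it spends.
    """
    spent_per_hour = vcpus * utilization * 60
    net_per_hour = spent_per_hour - credits_earned_per_hour
    if net_per_hour <= 0:
        return float("inf")
    return credit_balance / net_per_hour * 60


if __name__ == "__main__":
    # Assumed figures: 48 credits banked, 2 vCPUs pinned at 100%, 24 credits earned per hour.
    print(minutes_until_credits_depleted(48, 2, 1.0, 24))
```

If the estimate exceeds your planned steady-state window, the test will never observe post-depletion behavior, so either extend the run or start it with the balance already drained.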

Environment Standardization Checklist: 8 Checks Before Every Test Run

Use this checklist before every load test execution. Each item has a measurable pass/fail criterion:

- Load generator CPU headroom: Generator CPU utilization must stay below 70% during peak test phase. FAIL if sustained above 70% for more than 60 seconds — generator saturation contaminates response time measurements.

- Network topology parity: Load generators must connect to the application under test (AUT) through the same network hops (load balancer, WAF, CDN) that production traffic traverses. FAIL if any production network layer is bypassed.

- OS and runtime version match: AUT server OS, JVM/runtime version, and web server configuration must match production within one minor version. FAIL on major version mismatch.

- Shared infrastructure isolation: No other teams, CI pipelines, or batch jobs should run against the test environment during execution. FAIL if any non-test workload consumes more than 5% of shared database or compute resources.

- Data volume consistency: Test database must contain a representative data volume (minimum 80% of production row counts for primary tables). FAIL if data volume is below 50% — query execution plans differ dramatically on small datasets.

- Monitoring agent overhead: Verify that APM agents and logging collectors consume less than 3% of CPU and 2% of memory on the AUT. FAIL if monitoring overhead exceeds these thresholds.

- Geographic proximity of generators to AUT: Load generators should be deployed in the same region as the AUT, or in regions matching real user traffic distribution. WebLOAD by RadView’s distributed generation architecture supports multi-region deployments for this purpose. FAIL if generator-to-AUT round-trip time exceeds production user latency by more than 20%.

- Third-party dependency behavior: External APIs, payment gateways, and CDNs must be either real (with permission) or virtualized with realistic latency. FAIL if third-party calls are mocked with zero latency.
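Because every item above has a numeric or boolean fail criterion, the checklist lends itself to an automated pre-flight gate. The following is a minimal sketch; the `EnvironmentSnapshot` fields and the `failed_checks` function are hypothetical names, and collecting the measurements themselves (from your monitoring stack) is left out.

```python
from dataclasses import dataclass


@dataclass
class EnvironmentSnapshot:
    generator_cpu_pct: float        # sustained load generator CPU at peak
    bypassed_network_layers: int    # production hops (LB, WAF, CDN) skipped
    major_version_mismatch: bool    # OS or runtime major version differs
    foreign_workload_pct: float     # shared resources used by non-test workloads
    data_volume_ratio: float        # test rows / production rows, primary tables
    monitoring_cpu_pct: float       # APM and logging agent CPU on the AUT
    monitoring_memory_pct: float    # APM and logging agent memory on the AUT
    rtt_excess_pct: float           # generator RTT above production user latency
    zero_latency_mocks: bool        # third-party calls mocked with no delay


def failed_checks(env: EnvironmentSnapshot) -> list[str]:
    """Return the names of checklist items that fail, in checklist order."""
    checks = [
        ("load generator CPU headroom", env.generator_cpu_pct > 70),
        ("network topology parity", env.bypassed_network_layers > 0),
        ("OS and runtime version match", env.major_version_mismatch),
        ("shared infrastructure isolation", env.foreign_workload_pct > 5),
        ("data volume consistency", env.data_volume_ratio < 0.5),
        ("monitoring agent overhead",
         env.monitoring_cpu_pct > 3 or env.monitoring_memory_pct > 2),
        ("generator proximity", env.rtt_excess_pct > 20),
        ("third-party dependency behavior", env.zero_latency_mocks),
    ]
    return [name for name, failed in checks if failed]


if __name__ == "__main__":
    snapshot = EnvironmentSnapshot(85, 0, False, 1.0, 0.4, 1.5, 1.0, 5.0, False)
    print(failed_checks(snapshot))
```

Running a gate like this before each execution and aborting on any failure keeps a misconfigured environment from producing a result that looks valid.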

The Meta ServiceLab team validates environment consistency using A/A testing (running the same code version against two identical environments to detect baseline drift) [2]. For enterprise teams, running a 10-minute A/A comparison before each major test cycle catches environment anomalies before they contaminate results.

Isolating the Application Under Test: Why Shared Environments Destroy Test Accuracy

Shared staging environments are a persistent source of unreliable results. When a CI pipeline runs database schema migrations concurrently with your load test, the migration’s table locks can spike p99 latency by 300–500ms — creating a false bottleneck signal that doesn’t exist in isolated production conditions. Similarly, another team’s QA regression suite consuming database connection pool slots can starve your test’s virtual users of connections, producing timeout errors that reflect environment contention rather than application capacity limits.

Remediation strategies:

- Reserve dedicated test windows with infrastructure access controls that prevent concurrent workloads.

- Use infrastructure-as-code to spin up ephemeral, isolated test environments on demand — tear them down after each test cycle.

- If full isolation isn’t possible, instrument your monitoring to tag and filter resource consumption by workload source, so you can identify and discount interference in post-run analysis.

Mistake #3: Ignoring Correlation and Hardcoded Data in Test Scripts

When a test script replays recorded HTTP requests with hardcoded session tokens, CSRF tokens, ViewState values, or order confirmation IDs, every request after the first iteration uses an expired or invalid identifier. The server rejects the request — but the failure mode is often subtle: an HTTP 403 here, a redirect to a login page there, a silent 200 response that returns an error page instead of the expected content.

The result? Your test reports a 95% success rate, but 80% of those “successful” responses are actually error pages that your script isn’t validating. The real success rate is closer to 20%.
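The gap between the reported and the real success rate comes from counting on status codes alone. A minimal sketch of content validation follows, assuming each response is available as a `(status, body)` pair and that a successful page contains a known marker string; both function names and the marker text are hypothetical.

```python
def is_real_success(status: int, body: str, expected_marker: str) -> bool:
    """Count a response as successful only if it is 2xx AND contains the expected content."""
    return 200 <= status < 300 and expected_marker in body


def success_rates(responses, expected_marker):
    """Return (status-only rate, content-validated rate) for a list of (status, body) pairs."""
    total = len(responses)
    by_status = sum(1 for status, _ in responses if 200 <= status < 300)
    validated = sum(
        1 for status, body in responses if is_real_success(status, body, expected_marker)
    )
    return by_status / total, validated / total


if __name__ == "__main__":
    responses = [
        (200, "<h1>Order confirmed</h1>"),
        (200, "<h1>Session expired</h1>"),
        (200, "<h1>Session expired</h1>"),
        (403, "Forbidden"),
    ]
    print(success_rates(responses, "Order confirmed"))
```

A large difference between the two rates is a strong hint that the script is replaying stale dynamic values.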

What Is Correlation and Why Auto-Correlation Isn’t Enough

Correlation means capturing a dynamic, server-generated value from one response (e.g., a CSRF token embedded in a hidden form field) and inserting it into subsequent requests that require it. Every modern web application generates session tokens, anti-forgery tokens, transaction IDs, and timestamps that change per session or per request. If your script doesn’t extract and replay these dynamically, it’s submitting stale credentials on every iteration.
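In tool-independent terms, correlation is an extract-then-inject step. The sketch below assumes the token sits in a hidden input named `csrf_token` with the `name` attribute before `value`; the field name, the regular expression, and both function names are assumptions for illustration, and real applications will differ.

```python
import re

# Assumed markup: <input type="hidden" name="csrf_token" value="...">
CSRF_RE = re.compile(r'<input[^>]*name="csrf_token"[^>]*value="([^"]+)"')


def extract_csrf_token(html: str) -> str:
    """Capture the server-generated token from the previous response."""
    match = CSRF_RE.search(html)
    if match is None:
        raise ValueError("csrf_token not found in response")
    return match.group(1)


def build_form(token: str, fields: dict[str, str]) -> dict[str, str]:
    """Inject the freshly captured token into the next request's form data."""
    return {**fields, "csrf_token": token}


if __name__ == "__main__":
    page = '<form><input type="hidden" name="csrf_token" value="abc123"></form>'
    print(build_form(extract_csrf_token(page), {"sku": "SKU-1", "qty": "2"}))
```

Raising an error when the token is missing, rather than sending the request without it, makes a broken extraction rule visible immediately instead of surfacing later as a 403.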

Auto-correlation features in testing tools scan responses for known patterns and attempt to create extraction rules automatically. This works well for standard frameworks — ASP.NET ViewState, common CSRF meta tags — but fails silently for custom parameter names, JSON Web Tokens embedded in API response bodies, or application-specific correlation IDs in non-standard locations.

WebLOAD by RadView provides both automatic correlation rule suggestions for recognized patterns and a manual correlation rule editor for custom parameters, with a request/response log viewer that highlights dynamic values changing between iterations.

The Correlation Audit: How to Find and Fix Hardcoded Values in Existing Scripts

If you have existing test scripts that may contain correlation gaps, use this audit process:

- Run a two-iteration test with full request/response logging enabled. Execute the script with a single virtual user for exactly two complete iterations, capturing every request header, body, and response.

- Diff the requests between iterations. Compare outgoing request bodies and headers between iteration 1 and iteration 2. Any value that should have changed (session IDs, tokens, timestamps) but didn’t is a hardcoded correlation candidate.

- Apply tool-level correlation rules for known patterns. Use your testing tool’s correlation library to auto-extract standard parameters (CSRF tokens, ASP.NET ViewState, JSESSIONID). In WebLOAD, the correlation engine flags these automatically during script recording.

- Create manual extraction rules for custom parameters. For application-specific dynamic values (e.g., a JSON field like “orderId”: “ORD-7821943”), create a regex or boundary-based extraction rule that captures the value from the response and injects it into subsequent requests.

- Validate with a multi-user smoke test. Run 10–20 VUs for 5 minutes and verify that the error rate stays below 1%. Before correlation fixes, a typical symptom is the large majority of VUs (for example, 87%) failing with HTTP 403 after iteration 1. With CSRF token correlation in place, the success rate should also hold at 99% or higher when you scale up, for example to 500 VUs over 30 minutes.
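The diff step of the audit can be automated once the two iterations are captured. The sketch below assumes each iteration has been exported as a list of requests, with each request represented as a dictionary of field names to values; the list of suspect name fragments and the function name are hypothetical and should be adapted to your application.

```python
# Name fragments that usually indicate a server-generated, per-session value (assumed list).
SUSPECT_FIELDS = ("token", "session", "csrf", "viewstate", "timestamp", "nonce")


def hardcoded_candidates(iteration_1, iteration_2):
    """Flag dynamic-looking fields whose value did not change between iterations.

    Each iteration is a list of requests in order; each request is a dict
    mapping a header or body field name to the value that was sent.
    Returns (request index, field name, value) tuples.
    """
    findings = []
    for index, (first, second) in enumerate(zip(iteration_1, iteration_2)):
        for name, value in first.items():
            looks_dynamic = any(part in name.lower() for part in SUSPECT_FIELDS)
            if looks_dynamic and second.get(name) == value:
                findings.append((index, name, value))
    return findings


if __name__ == "__main__":
    run_1 = [{"username": "user0001"}, {"csrf_token": "abc123", "sku": "SKU-1"}]
    run_2 = [{"username": "user0001"}, {"csrf_token": "abc123", "sku": "SKU-1"}]
    print(hardcoded_candidates(run_1, run_2))
```

A name-based filter will miss custom parameters, so treat an empty result as a starting point and still review the raw diff for values that should have changed.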

Test Data Management: Why Using the Same User Record for Every Virtual User Breaks Your Test

Even with perfect correlation, using a single shared username/password or a single product SKU across all virtual users creates a fundamentally distorted test. When 500 virtual users all log in as user ID 1001, the database caches that user’s record in memory after the first query. Every subsequent lookup is a hot-cache hit — response times appear 40–60% faster than production reality, where thousands of distinct users force cold-cache lookups across a distributed dataset.

Shared test data also creates artificial contention: all VUs updating the same order record can trigger row-level locks and deadlocks that wouldn’t occur with distributed data access patterns.

Fix: Create a test data pool with at least one unique record per virtual user. For a 1,000-VU test, seed 1,000+ unique user accounts, product SKUs, and transaction records. Use parameterized data feeds in WebLOAD to assign each VU a unique data slice from a CSV or database source.
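Independent of any particular tool, the one-record-per-VU rule can be sketched as follows. The CSV column names, the function names, and the choice to fail fast when the pool is too small are assumptions for this example.

```python
import csv
import io


def load_pool(csv_text: str, key: str) -> list[dict[str, str]]:
    """Parse the data pool and reject it if the key column contains duplicates."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    keys = [row[key] for row in rows]
    if len(set(keys)) != len(keys):
        raise ValueError(f"duplicate {key} values in test data pool")
    return rows


def record_for_vu(pool: list[dict[str, str]], vu_index: int) -> dict[str, str]:
    """Give each virtual user its own row; fail fast if the pool is too small."""
    if not 0 <= vu_index < len(pool):
        raise ValueError(f"pool has {len(pool)} records, VU index {vu_index} needs its own")
    return pool[vu_index]


if __name__ == "__main__":
    data = "username,sku\nuser0001,SKU-1\nuser0002,SKU-2\n"
    pool = load_pool(data, key="username")
    print(record_for_vu(pool, 1))
```

Failing when the pool runs out, instead of wrapping around to the first record, prevents the shared-record distortion from creeping back in when someone raises the VU count.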

Mistake #4: Running Tests That Are Too Short and Missing Steady-State Behavior

A 5-minute load test is a snapshot. It captures initial system behavior — warm caches, fresh connection pools, empty garbage collection queues. It misses everything that matters for sustained production reliability: memory leaks that surface after 30 minutes, connection pool exhaustion that begins at the 20-minute mark, thread buildup from slow third-party integrations, and garbage collection pauses that spike latency every 15 minutes under sustained allocation pressure.

The burst-credit failure pattern described under Mistake #2 illustrates this directly: short tests ran entirely within AWS burst credit windows, producing stable metrics that collapsed once credits depleted under sustained load. Performance engineering practitioners from HP’s R&D team have emphasized that ramp-up and ramp-down phases must be isolated from steady-state measurement to avoid contaminating results with transient system behavior.

Minimum duration guidance:

| Application Type | Minimum Steady-State Duration | Why |
|---|---|---|
| Stateless API | 15–20 minutes | Connection pool cycling, GC behavior |
| Stateful web application | 30–45 minutes | Session accumulation, memory leaks |
| Database-intensive workflow | 45–60 minutes | Query plan cache invalidation, I/O saturation |
| Soak/endurance validation | 4–8 hours | Slow leaks, log rotation, cert renewal |

For more in-depth coverage on long-duration tests, read about endurance testing in software testing. The CMU Software Engineering Institute’s testing recommendations for reliability and performance explicitly recommend soak testing under sustained load as a prerequisite for production readiness.

Structure every test with three phases:

- Ramp-up (5–10 minutes): Gradually increase VU count to target concurrency. Do not include this phase in performance measurements.

- Steady-state (minimum durations per table above): Hold target concurrency constant. This is your measurement window.

- Ramp-down (5 minutes): Gradually reduce VUs. Monitor for resource release patterns (connection pool cleanup, memory deallocation).
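The three phases translate into a simple load profile plus a filter that keeps only steady-state samples. This is a minimal sketch with linear ramps; the function names and the `(timestamp, latency)` sample format are assumptions for illustration.

```python
def target_vus(t_s: float, peak_vus: int, ramp_up_s: float, steady_s: float,
               ramp_down_s: float) -> int:
    """Target VU count at t_s seconds into the test, using linear ramps."""
    if t_s < 0:
        return 0
    if t_s < ramp_up_s:
        return round(peak_vus * t_s / ramp_up_s)
    if t_s < ramp_up_s + steady_s:
        return peak_vus
    end_s = ramp_up_s + steady_s + ramp_down_s
    if t_s < end_s:
        return round(peak_vus * (end_s - t_s) / ramp_down_s)
    return 0


def steady_state_latencies(samples, ramp_up_s: float, steady_s: float):
    """Keep only latencies recorded inside the steady-state measurement window.

    samples is an iterable of (timestamp_s, latency_ms) pairs.
    """
    window_end = ramp_up_s + steady_s
    return [latency for ts, latency in samples if ramp_up_s <= ts < window_end]


if __name__ == "__main__":
    # 10-minute ramp-up, 30-minute steady state, 5-minute ramp-down at 500 VUs.
    for t in (0, 300, 600, 2000, 2550, 2700):
        print(t, target_vus(t, 500, 600, 1800, 300))
```

Filtering on timestamps rather than on VU count keeps the measurement window well defined even if the tool overshoots or lags slightly while ramping.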

Mistake #5: Skipping Baseline Tests and Running Comparisons Without a Control

Without a baseline, you have no way to determine whether your test results represent acceptable performance, a regression, or an improvement. Teams that skip baseline testing end up comparing results against intuition (“that feels slow”) or against arbitrary thresholds disconnected from actual system behavior.

A proper baseline test establishes your application’s performance fingerprint under a known, controlled load — median response time, p95/p99 latency, error rate, throughput, and resource utilization (CPU, memory, disk I/O, network) — against a specific code version, environment configuration, and data state.

For further insights about performance metrics, be sure to study the performance metrics that matter in performance engineering.

How to establish and maintain a baseline:

- Run a baseline test against a stable, released code version with the same workload model, environment, and data state you’ll use for subsequent tests. Record all key metrics.

- Store baseline results in version control alongside the code commit hash, environment spec, and test configuration.

- Before every subsequent test, run a short (10-minute) comparison at baseline load levels. If p95 latency deviates more than 10% from the stored baseline without a code change, investigate environment drift before proceeding.

- Update the baseline deliberately when you ship a major architectural change, not after every minor release.
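The baseline steps above can be sketched with the Python standard library. The record layout, the function names, and the placeholder commit hash are assumptions for illustration; the 10% tolerance is the threshold from the steps above.

```python
import json
import statistics


def p95(latencies_ms):
    """95th percentile using the standard library's quantile cut points."""
    return statistics.quantiles(latencies_ms, n=100)[94]


def baseline_record(commit: str, environment: str, latencies_ms) -> dict:
    """Bundle the metrics with the code and environment they were measured on."""
    return {
        "commit": commit,
        "environment": environment,
        "median_ms": statistics.median(latencies_ms),
        "p95_ms": p95(latencies_ms),
    }


def deviates(baseline_p95_ms: float, current_p95_ms: float, tolerance: float = 0.10) -> bool:
    """True when p95 moved more than the tolerance in either direction."""
    return abs(current_p95_ms - baseline_p95_ms) / baseline_p95_ms > tolerance


if __name__ == "__main__":
    # "abc1234" and "staging-v3" are placeholder identifiers.
    record = baseline_record("abc1234", "staging-v3", [float(x) for x in range(100, 300)])
    print(json.dumps(record, indent=2))  # suitable for committing next to the test config
    print(deviates(record["p95_ms"], record["p95_ms"] * 1.15))
```

Checking deviation in both directions is deliberate: a p95 that is suddenly much faster without a code change points to environment drift just as much as one that is slower.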

Meta’s ServiceLab team uses A/A testing — running identical code versions against controlled environments — to detect measurement system drift before attributing any change to a code regression [2]. You don’t need hyperscale infrastructure to adopt this principle: a 10-minute A/A comparison before each test cycle catches environment anomalies that would otherwise contaminate your results.

Frequently Asked Questions

How long should a load test run to produce reliable results?

At minimum, maintain steady-state load for 15–20 minutes for stateless APIs and 30–45 minutes for stateful web applications. Soak tests for memory leak and resource exhaustion detection should run 4–8 hours. Always exclude ramp-up and ramp-down phases from your measurement window.

What’s the difference between think time and pacing in load test scripts?

Think time simulates the delay between a user’s interactions within a single transaction (e.g., browsing a product page for 15 seconds before clicking “Add to Cart”). Pacing controls the interval between a virtual user completing one full transaction and starting the next. Both must be set from real user behavior data, not tool defaults.

How do I know if my test environment is distorting results?

Run an A/A test: execute the same test twice against the same code version and environment within a short window. If p95 latency varies by more than 10% between runs, your environment has uncontrolled variance; investigate resource contention, burst credit depletion, or shared infrastructure interference before trusting any subsequent test results.

Can AI help reduce load testing errors?

AI-assisted workflows can automate correlation detection (flagging dynamic parameters that scripts miss), predict resource exhaustion patterns before they surface in test results, and recommend workload model adjustments based on production traffic drift. WebLOAD by RadView integrates AI-assisted analysis capabilities that reduce manual scripting effort and catch anomalies that human review might miss. That said, human review remains necessary for interpreting results in business context and validating that test scenarios match evolving user behavior.

What metrics should I prioritize when analyzing load test results?

Focus on p95 and p99 response times (not averages, which hide tail latency problems), error rate by error type (distinguish HTTP 4xx from 5xx), throughput under sustained load, and server-side resource utilization (CPU, memory, active connections, disk I/O). Apdex scores provide a useful single-number summary but should always be investigated alongside the underlying distribution.
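For the metrics named in the last answer, the sketch below computes an Apdex score using the standard formula (satisfied requests plus half of the tolerating requests, divided by the total, where tolerating means between T and 4T) and splits errors into 4xx and 5xx classes. The function names and the 0.5-second threshold are assumptions for illustration.

```python
from collections import Counter


def apdex(latencies_s, threshold_s: float) -> float:
    """Apdex = (satisfied + tolerating / 2) / total, with tolerating up to 4x the threshold."""
    satisfied = sum(1 for x in latencies_s if x <= threshold_s)
    tolerating = sum(1 for x in latencies_s if threshold_s < x <= 4 * threshold_s)
    return (satisfied + tolerating / 2) / len(latencies_s)


def errors_by_class(status_codes) -> Counter:
    """Count client (4xx) and server (5xx) errors separately."""
    counts = Counter()
    for status in status_codes:
        if 400 <= status < 500:
            counts["4xx"] += 1
        elif 500 <= status < 600:
            counts["5xx"] += 1
    return counts


if __name__ == "__main__":
    print(apdex([0.2, 0.4, 0.6, 3.0], threshold_s=0.5))
    print(errors_by_class([200, 200, 403, 503, 503]))
```

Two very different latency distributions can share the same Apdex score, which is why the score is best read next to the p95 and p99 values rather than instead of them.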

Conclusion

Every mistake covered here — unrealistic workload models, environment misconfiguration, missing correlation, tests that are too short, and absent baselines — shares a common trait: the test appears to work. Dashboard metrics look reasonable. The green light gets given. And then production tells the real story.

The fix isn’t more tools or longer test runs in isolation. It’s a systematic approach: ground your workload models in production data, standardize and isolate your test environments, audit scripts for correlation gaps, run tests long enough to surface steady-state degradation, and always measure against a controlled baseline.

Use the environment standardization checklist and correlation audit process from this article as starting points for your next test cycle. If you’re working with WebLOAD by RadView, many of these practices — distributed load generation, real browser-based virtual users, automatic correlation detection, and AI-assisted analysis — are built into the platform’s workflow. But regardless of tooling, the methodology matters more than the product. Get the process right, and your load tests will finally tell you what production is actually going to do.

- Results from load testing practices described herein will vary based on system architecture, team maturity, tooling configuration, and organizational context. The statistics cited (e.g., NIST cost figures, Meta ServiceLab regression data) are sourced from their respective publications and should be interpreted within their original research contexts. No guarantee of specific performance outcomes is implied.

References and Authoritative Sources

[1] Tassey, G. (2002). The Economic Impacts of Inadequate Infrastructure for Software Testing (NIST Planning Report 02-3). National Institute of Standards and Technology / Research Triangle Institute. Retrieved from https://www.nist.gov/document/samate-document-greg-tasseys-summary-pdf-nists-2002-report-economic-impacts-inadequate

[2] Chow, M., Wang, Y., Tang, C., et al. (2024). ServiceLab: Preventing Tiny Performance Regressions at Hyperscale through Pre-Production Testing. Proceedings of the 18th USENIX Symposium on Operating Systems Design and Implementation (OSDI ’24). Retrieved from https://www.usenix.org/system/files/osdi24-chow.pdf

[3] Sophocleous, R. & Kapitsaki, G.M. (2020). Examining the Current State of System Testing Methodologies in Quality Assurance. Agile Processes in Software Engineering and Extreme Programming (XP 2020), Springer. Retrieved from https://pmc.ncbi.nlm.nih.gov/articles/PMC7251618/