At 2:14 AM on a Tuesday, a payment processing platform started dropping transactions. Not all at once – just a trickle at first. Error rates crept from 0.3% to 1.2% over four hours, then spiked to 18% by morning. The root cause? A memory leak that only surfaced after 14 hours of sustained traffic. A 30-minute load test had given the team a green light a week earlier. The system passed every check at expected concurrency. What nobody tested was what happened when that concurrency ran continuously for half a day.

This scenario illustrates why understanding the different types of load testing isn’t academic – it’s the difference between shipping with confidence and shipping with crossed fingers. Most teams know they should be testing under load. Fewer teams understand that “load testing” is actually four distinct practices, each answering a fundamentally different engineering question about system behavior.

This guide breaks down all four types – load testing, stress testing, capacity testing, and soak (endurance) testing – with the specific metrics, load profiles, duration guidelines, and real-world scenarios you need to choose the right test for the right moment. You’ll also find a side-by-side comparison table and practical configuration guidance for running each type.

- What Is Load Testing (and Where Do the 4 Types Come From)?

- Type 1: Load Testing – Validating Normal-Day Performance

- Type 2: Stress Testing – Finding the Breaking Point

- Type 3: Capacity Testing – Planning for Growth

- Type 4: Soak Testing (Endurance Testing) – Catching What Time Reveals

- All 4 Types at a Glance: Comparison Table

- Frequently Asked Questions

- References and Authoritative Sources

What Is Load Testing (and Where Do the 4 Types Come From)?

The confusion starts with the term itself. “Load testing” functions as both a specific test type AND a loose umbrella term that people use interchangeably with “performance testing.” This ambiguity leads teams to run one kind of test while thinking they’ve covered all their bases.

Performance testing is the parent category – any test that measures how a system behaves under specified conditions. Load testing, stress testing, capacity testing, and soak testing are all children of that category, each probing a different dimension of system behavior.

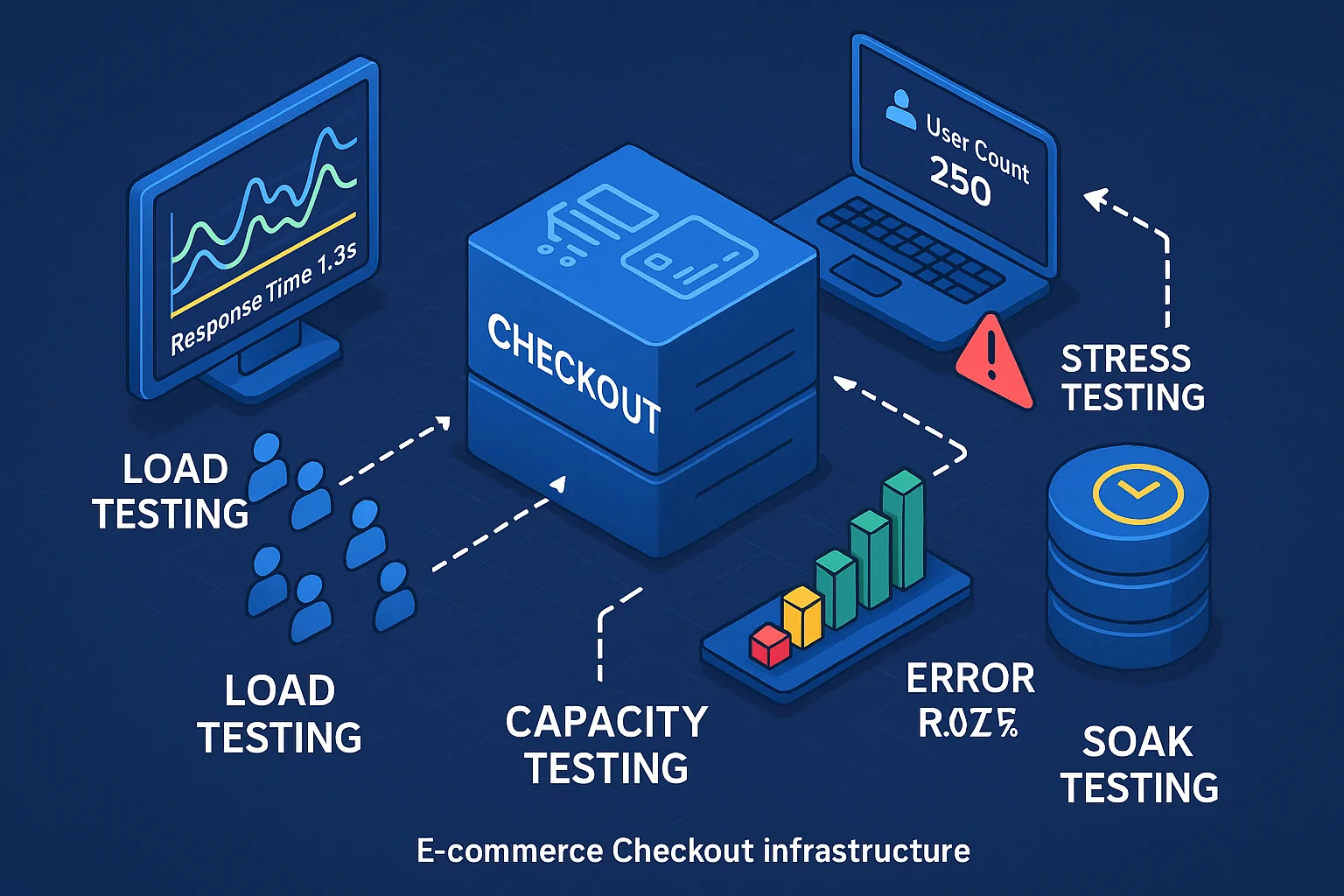

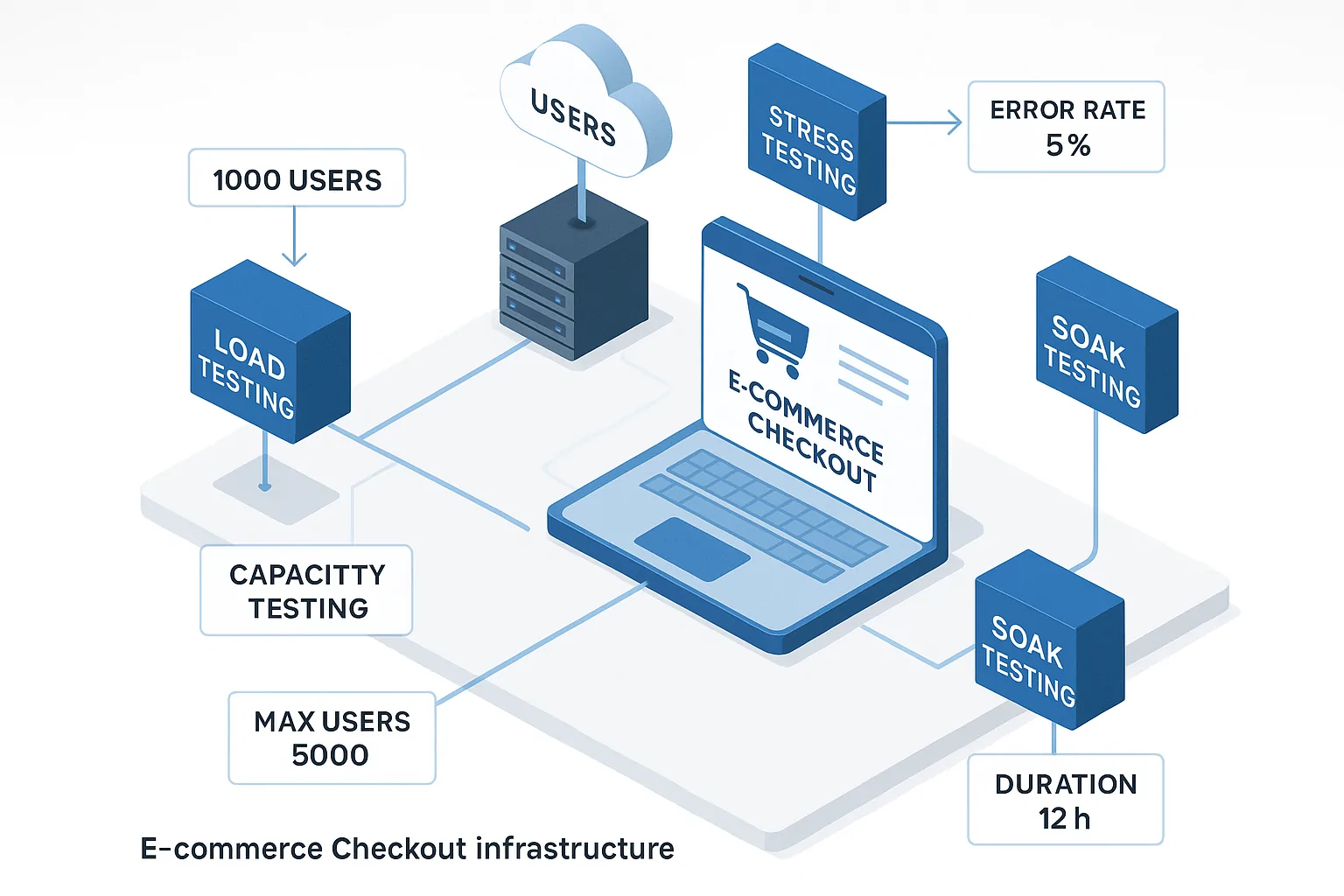

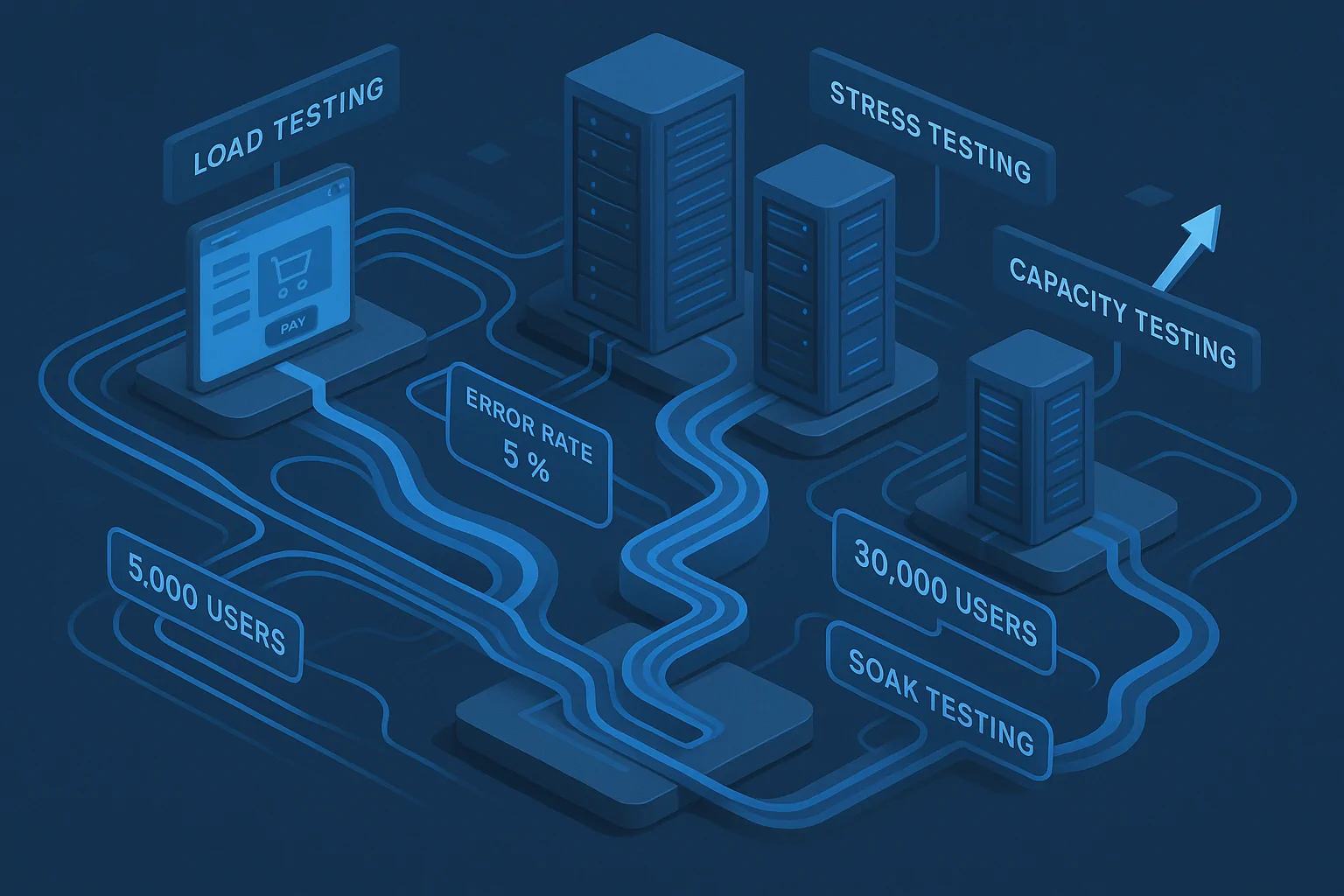

Consider a single e-commerce checkout flow. That one system component could require all four test types: a load test confirming it handles the expected 2,000 concurrent holiday shoppers, a stress test finding that it crashes at 4,500, a capacity test determining the maximum number of concurrent users it can serve before checkout latency exceeds the 3-second SLA, and a soak test validating that it survives a 72-hour flash sale without degradation.

For a broader overview of how these types fit together, see this explanation of the different types of performance testing.

Load Testing vs. Performance Testing: Clearing Up the Terminology

Think of performance testing as the folder and load, stress, soak, and capacity as the files inside it. Performance testing might measure a single API endpoint’s response time in isolation (unit level), while load testing simulates 500 concurrent users hitting that endpoint to observe system-wide behavior under realistic demand. The Google SRE Book reinforces this layered hierarchy – unit tests, integration tests, system-level tests, and stress tests each operate at different scales and answer different questions.

For standards-level definitions of the timing and resource metrics that underpin all performance testing, the W3C Web Performance Working Group maintains the canonical specifications (Navigation Timing, Resource Timing, User Timing) that modern testing tools use to measure and report results.

The Four Questions That Define the Four Test Types

Every test type maps to a single engineering question:

| Question | Test Type | Trigger Scenario |
|---|---|---|
| Can my system handle expected traffic? | Load Test | Pre-release validation before a weekly sale |
| Where does my system break? | Stress Test | Preparing for an unpredictable viral event |
| How many users can I serve before needing more infrastructure? | Capacity Test | Planning next quarter’s cloud budget |
| Does my system degrade after running for hours or days? | Soak Test | Launching a 48-hour live streaming event |

Type 1: Load Testing – Validating Normal-Day Performance

Load testing in its specific sense validates that your system meets its SLAs under expected, realistic traffic volumes. You’re not trying to break anything – you’re confirming that everything works as promised when the anticipated number of users shows up. For a foundational walkthrough of the process, our beginner’s guide to load testing covers the core concepts and step-by-step setup.

A common SLA target might be p95 response time under 500ms and p99 under 1,000ms at peak expected concurrency. A passing load test means these thresholds hold steady across the test duration. The AWS Well-Architected Framework advises teams to “automatically carry out load tests as part of your delivery pipeline, and compare the results against pre-defined KPIs and thresholds.” That guidance makes load testing a continuous practice, not a one-time event.
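The pass/fail logic behind those targets is simple enough to sketch. The Python below is a minimal, tool-agnostic example that computes nearest-rank percentiles from raw response times and checks them against the example 500ms/1,000ms targets. The function names and sample latencies are illustrative assumptions, and real load testing tools may use interpolated percentile methods that report slightly different values.

```python
import math

def percentile(samples_ms: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not samples_ms:
        raise ValueError("no samples")
    ordered = sorted(samples_ms)
    # Multiply before dividing so ranks like 95 * 100 / 100 stay exact
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]

def meets_sla(samples_ms: list[float], p95_limit_ms: float = 500,
              p99_limit_ms: float = 1000) -> tuple[bool, float, float]:
    """Return (passed, p95, p99) for the example SLA: p95 < 500ms and p99 < 1,000ms."""
    p95 = percentile(samples_ms, 95)
    p99 = percentile(samples_ms, 99)
    return p95 < p95_limit_ms and p99 < p99_limit_ms, p95, p99

# Hypothetical run: 100 requests, mostly fast, with a slow tail
latencies = [120] * 90 + [450] * 8 + [900, 1500]
print(meets_sla(latencies))  # (True, 450, 900)
```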

What Load Testing Detects (and What It Misses)

Load testing surfaces response time degradation at expected concurrency, connection pool contention under simultaneous user sessions, inefficient database queries that only manifest when multiple users hit the same table, memory consumption trends under realistic call patterns, and third-party API latency when downstream services receive production-level request volumes.

Key metrics to monitor during a load test:

- Transactions per second (TPS): Should remain stable as concurrency ramps to expected levels

- Mean, p95, and p99 response time: Targets vary by system, but p99 > 2× p95 signals outlier problems

- Error rate: Target below 1% for most web applications; below 0.1% for payment flows

- Server CPU utilization: Investigate at sustained >70%; critical at >85%

- Active database connections vs. pool limit: If you’re at 80% of pool capacity under normal load, you have no headroom
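Those rules of thumb can be encoded as an automated review of a single metrics snapshot. This is a hypothetical sketch: the `LoadSnapshot` fields and the `review` function are not part of any tool, and the thresholds are simply the ones listed above.

```python
from dataclasses import dataclass

@dataclass
class LoadSnapshot:
    p95_ms: float
    p99_ms: float
    error_rate: float   # fraction of failed requests, e.g. 0.004 = 0.4%
    cpu_pct: float      # sustained server CPU utilization, 0-100
    db_active: int      # active database connections
    db_pool_max: int    # configured connection pool limit

def review(s: LoadSnapshot, payment_flow: bool = False) -> list[str]:
    """Return human-readable findings for one snapshot, using the thresholds listed above."""
    findings = []
    if s.p99_ms > 2 * s.p95_ms:
        findings.append("p99 > 2x p95: investigate outliers")
    error_target = 0.001 if payment_flow else 0.01  # 0.1% for payments, 1% otherwise
    if s.error_rate >= error_target:
        findings.append(f"error rate {s.error_rate:.2%} is not below {error_target:.1%}")
    if s.cpu_pct > 85:
        findings.append("CPU critical (>85%)")
    elif s.cpu_pct > 70:
        findings.append("CPU worth investigating (>70%)")
    if s.db_active >= 0.8 * s.db_pool_max:
        findings.append("DB pool at or above 80% of limit: no headroom")
    return findings

snapshot = LoadSnapshot(p95_ms=420, p99_ms=950, error_rate=0.004,
                        cpu_pct=74, db_active=85, db_pool_max=100)
for finding in review(snapshot):
    print(finding)
```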

The Google SRE Book notes that performance tests detect incremental system degradation “without anyone noticing (before it gets released to users)” – which is exactly why these tests belong in CI/CD pipelines as regression guards, not just pre-launch rituals.

When to Run a Load Test: Triggers and Timing

Here’s a concrete decision checklist – run a load test when:

- Pre-release gate: Every release candidate build before production deployment

- Infrastructure change: After scaling instances, migrating databases, or changing CDN configurations

- Significant code refactor: Any change touching >15% of the codebase or modifying data access patterns

- Anticipated traffic event: Product launches, marketing campaigns, seasonal peaks – at least 2 weeks before the event

- CI/CD smoke check: A 15-minute baseline load test on every merge to main; full-duration test on release candidates

Distinguish between a baseline load test (establishing normal behavior for future comparison) and a regression load test (confirming a specific change didn’t degrade performance). The DORA 2023 Accelerate State of DevOps Report found that “teams that improve their software delivery performance without corresponding levels of operational performance end up having worse organizational outcomes”. Embedding load tests in CI/CD is how you prevent that disconnect – our guide on integrating performance testing in CI/CD pipelines covers the practical implementation details.
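A regression load test usually boils down to comparing a candidate run against a stored baseline. The sketch below assumes a hypothetical 10% tolerance and treats every metric as higher-is-worse (latency, error rate); a throughput metric such as TPS would need the comparison inverted.

```python
def regression_check(baseline: dict[str, float], candidate: dict[str, float],
                     tolerance: float = 0.10) -> dict[str, float]:
    """Return {metric: fractional increase} for metrics that worsened by more than `tolerance`.

    Assumes higher values are worse for every metric compared.
    """
    regressions = {}
    for metric, base in baseline.items():
        new = candidate.get(metric)
        if new is None or base <= 0:
            continue  # metric missing from the candidate run, or no usable baseline
        change = (new - base) / base
        if change > tolerance:
            regressions[metric] = round(change, 3)
    return regressions

# Hypothetical results: baseline from the last release, candidate from the current build
baseline = {"p95_ms": 410.0, "p99_ms": 780.0, "error_rate_pct": 0.20}
candidate = {"p95_ms": 425.0, "p99_ms": 960.0, "error_rate_pct": 0.21}
print(regression_check(baseline, candidate))  # {'p99_ms': 0.231}
```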

Running a Load Test with WebLOAD: Load Profile and Key Settings

For a standard load test, configure a realistic ramp pattern rather than instantly throwing all virtual users at the system. A practical example: ramp from 0 to 500 virtual users over 5 minutes using a linear scheduler, hold at 500 for 20 minutes, then ramp down over 3 minutes. Set an automatic test failure if error rate exceeds 1% or p95 response time exceeds the SLA threshold.
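The example profile can be modeled as a function from elapsed time to scheduled virtual users, plus the automatic-failure rule. This is a tool-agnostic Python sketch for reasoning about the schedule, not WebLOAD’s scheduler API; the function names and the `sla_p95_ms` parameter are assumptions.

```python
def target_vus(t_s: float, peak: int = 500, ramp_up_s: int = 300,
               hold_s: int = 1200, ramp_down_s: int = 180) -> int:
    """Virtual users scheduled t_s seconds into a linear ramp-up, hold, ramp-down profile.

    Defaults mirror the example: 0 to 500 VUs over 5 minutes, hold for 20, ramp down over 3.
    """
    if t_s < 0:
        return 0
    if t_s < ramp_up_s:
        return round(peak * t_s / ramp_up_s)
    t_s -= ramp_up_s
    if t_s < hold_s:
        return peak
    t_s -= hold_s
    if t_s < ramp_down_s:
        return round(peak * (1 - t_s / ramp_down_s))
    return 0  # profile finished after 28 minutes

def should_abort(error_rate: float, p95_ms: float, sla_p95_ms: float) -> bool:
    """Automatic failure rule: error rate above 1% or p95 above the SLA threshold."""
    return error_rate > 0.01 or p95_ms > sla_p95_ms

for minute in (0, 2.5, 5, 15, 26.5, 28):
    print(minute, target_vus(minute * 60))
```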

Performance Engineer’s Perspective: Don’t skip the ramp-down phase. How your system recovers after peak load – connection pool drainage, cache state, thread cleanup – is just as telling as how it performs at peak. A system that takes 4 minutes to return to baseline response times after load removal has a resource cleanup problem worth investigating.
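One way to put a number on recovery is to measure how long after load removal p95 latency returns to, and stays within, a band around the pre-test baseline. The sketch assumes per-minute `(timestamp_s, p95_ms)` samples and a hypothetical 10% tolerance band; both are illustrative choices rather than a standard definition.

```python
def recovery_time_s(samples: list[tuple[float, float]], load_removed_at_s: float,
                    baseline_p95_ms: float, tolerance: float = 0.10):
    """Seconds from load removal until p95 returns to, and stays within, baseline * (1 + tolerance).

    `samples` are (timestamp_s, p95_ms) pairs sorted by time. Returns None if the
    last sample is still outside the band, i.e. no recovery was observed.
    """
    limit = baseline_p95_ms * (1 + tolerance)
    after = [(t, p95) for t, p95 in samples if t >= load_removed_at_s]
    recovered_at = None
    # Walk backward: recovery starts at the earliest sample after which nothing exceeds the band
    for t, p95 in reversed(after):
        if p95 > limit:
            break
        recovered_at = t
    return None if recovered_at is None else recovered_at - load_removed_at_s

# Hypothetical per-minute samples after load is removed at t = 1,680 s (baseline p95 = 200ms)
post_test = [(1680, 900), (1740, 650), (1800, 230), (1860, 210), (1920, 205), (1980, 200)]
print(recovery_time_s(post_test, 1680, 200))  # 180
```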

WebLOAD supports this through configurable load profiles including linear and goal-oriented scheduling, with hybrid cloud and on-premises load generation so teams aren’t locked into a single deployment model.

Type 2: Stress Testing – Finding the Breaking Point

Stress testing deliberately pushes a system beyond its normal operating limits. Where load testing asks “are we good?”, stress testing asks “where do we break, and how badly?”

The Google SRE Book frames the core question: “How many queries a second can be sent to an application server before it becomes overloaded, causing requests to fail?” The AWS Well-Architected Framework reinforces this by flagging as an anti-pattern the failure to test beyond expected load. Stress testing is the explicit corrective.

Consider a payment processing API that handles 800 TPS cleanly but begins throwing 503 errors at 1,100 TPS and fully crashes at 1,400 TPS. Without stress testing, the team discovers this cliff edge in production – during the one event that actually drives 1,200 TPS. With stress testing, they add circuit breakers and graceful degradation logic months before it matters.

Stress Testing vs. Load Testing: The Critical Difference

Load Testing: Operates within expected bounds, confirming the system functions as intended.

Stress Testing: Pushes beyond those bounds to find the system’s breaking point.

The analogy: load testing is holding highway speed to confirm the cruise control works; stress testing is flooring the accelerator to find the redline.

Key Metrics and Recovery Behavior to Monitor During a Stress Test

- CPU saturation: Investigate at sustained >90%; critical at 100%

- Memory swap usage: Indicates insufficient RAM

- Thread pool exhaustion: Alert when active threads exceed pool size +20%

- Garbage collection pause frequency (JVM): Pauses >500ms more than twice per minute

- Connection timeout rates: Differentiate between upstream and downstream timeouts

- Recovery time after load removal: Target full service restoration within 120 seconds
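The GC rule in the list above is easiest to apply as a sliding one-minute window over pause events. This sketch assumes pauses have already been parsed from GC logs into `(timestamp_s, pause_ms)` tuples; the parsing step and the `gc_alerts` function are hypothetical.

```python
from collections import deque

def gc_alerts(pauses: list[tuple[float, float]], threshold_ms: float = 500,
              max_per_window: int = 2, window_s: float = 60) -> list[float]:
    """Timestamps at which more than `max_per_window` pauses longer than `threshold_ms`
    fell within the trailing `window_s` seconds."""
    recent: deque = deque()
    alerts = []
    for ts, pause_ms in pauses:
        if pause_ms <= threshold_ms:
            continue  # short pauses don't count toward the rule
        recent.append(ts)
        while recent and recent[0] <= ts - window_s:
            recent.popleft()  # drop long pauses older than the window
        if len(recent) > max_per_window:
            alerts.append(ts)
    return alerts

# Hypothetical pause log: (seconds since test start, pause duration in ms)
pauses = [(5, 620), (20, 80), (30, 710), (50, 540), (200, 900)]
print(gc_alerts(pauses))  # [50]
```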

Performance Engineer’s Perspective: The recovery curve is the most under-watched metric in stress testing. I’ve seen systems that technically survive peak load but take 8 minutes to drain their error queue afterward – which is effectively an outage for late-arriving users. Always measure recovery, not just survival.

Running a Stress Test with WebLOAD: Pushing Beyond the Limit

Configure a staircase load profile for stress testing: start at 200 virtual users, add 200 every 5 minutes, and continue until error rate exceeds 5% or p99 response time exceeds 10 seconds. Unlike a load test, where you’d abort at the first threshold violation, a stress test keeps executing past intermediate thresholds and logs the exact virtual user count at each crossing. Understanding performance breakpoint test profiles can help you design these staircase patterns to pinpoint the exact inflection where degradation begins.
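The staircase logic, including the distinction between logging a threshold crossing and stopping the run, can be sketched in a few lines. This is an illustrative Python sketch, not RadView’s scheduler: `measure` is a hypothetical callback standing in for one 5-minute plateau, the intermediate marks (1% and 2% errors, 2 s and 5 s p99) are assumptions, and `toy_system` is a made-up model used only to exercise the loop.

```python
from typing import Callable, Optional

def run_staircase(measure: Callable[[int], tuple[float, float]],
                  start: int = 200, step: int = 200, max_vus: int = 10_000,
                  error_marks: tuple[float, ...] = (0.01, 0.02, 0.05),
                  p99_marks_s: tuple[float, ...] = (2.0, 5.0, 10.0)
                  ) -> tuple[Optional[int], dict[str, int]]:
    """Escalate load in fixed steps, logging the first VU count at which each mark is crossed.

    `measure(vus)` stands in for holding `vus` virtual users for one step and
    returning (error_rate, p99_seconds). The run stops at the outermost marks.
    """
    crossings: dict[str, int] = {}
    vus = start
    while vus <= max_vus:
        error_rate, p99_s = measure(vus)
        for mark in error_marks:
            if error_rate > mark:
                crossings.setdefault(f"error>{mark:.0%}", vus)
        for mark in p99_marks_s:
            if p99_s > mark:
                crossings.setdefault(f"p99>{mark}s", vus)
        if error_rate > error_marks[-1] or p99_s > p99_marks_s[-1]:
            return vus, crossings  # stop condition reached at this step
        vus += step
    return None, crossings  # stop condition never reached within max_vus

def toy_system(vus: int) -> tuple[float, float]:
    """Purely illustrative model of a system that starts degrading above 1,000 VUs."""
    over = max(0, vus - 1000)
    return over / 10_000, 0.4 + over / 200

stopped_at, crossings = run_staircase(toy_system)
print(stopped_at, crossings)
```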

RadView’s platform supports this through configurable staircase schedulers and Goal-Oriented Testing mode, where you can define a performance ceiling target and let the tool automatically increment load until that target is breached – capturing the precise capacity-to-failure delta. For the SRE perspective on stress testing methodology, Chapter 17 of Google’s SRE Book provides foundational guidance.

Type 3: Capacity Testing – Planning for Growth

Capacity testing determines the maximum load your system handles while still meeting its performance SLAs. It’s not about finding where the system breaks (stress testing does that) – it’s about finding the highest acceptable operating point so you can make infrastructure decisions with real numbers.

A SaaS platform currently supports 1,500 concurrent users with p95 latency under 400ms. Capacity testing reveals degradation begins at 2,200 users. The team now knows their current infrastructure provides 47% headroom above current peak – sufficient for 6 months of projected growth at their current trajectory, after which they need to scale horizontally. That’s a capacity test delivering a budget conversation, not just a technical finding. For a deeper dive into methodology and best practices, see our complete guide to capacity testing.
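The headroom figure is simple arithmetic, and a runway estimate follows if you assume compounding monthly growth. The sketch below reproduces the 47% headroom; the 6.5% monthly growth rate is a hypothetical input chosen only because it lands close to the six-month runway in this example.

```python
import math

def headroom_pct(current_peak: float, capacity_ceiling: float) -> float:
    """Headroom above current peak, as a percentage of current peak."""
    return (capacity_ceiling / current_peak - 1) * 100

def months_of_runway(current_peak: float, capacity_ceiling: float,
                     monthly_growth: float) -> float:
    """Months until compounding monthly growth takes current_peak up to capacity_ceiling."""
    return math.log(capacity_ceiling / current_peak) / math.log(1 + monthly_growth)

print(round(headroom_pct(1500, 2200)))                # 47
print(round(months_of_runway(1500, 2200, 0.065), 1))  # 6.1 (assumed 6.5%/month growth)
```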

Performance Engineer’s Perspective: Capacity testing answers the question your CFO is actually asking: how much more infrastructure do we need to buy, and when? It’s the bridge between performance engineering and budget planning.

Capacity Testing vs. Stress Testing: Same Direction, Different Destination

Both push load beyond normal levels – which is why teams conflate them. The critical difference is the stopping condition. Suppose your SLA is a p95 response time of 2 seconds. In a stress test, you continue past 2 seconds p95 to observe crash behavior. In a capacity test, 2 seconds p95 IS the stopping condition – you record the virtual user count at that exact moment as your system’s capacity ceiling.
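Because the difference is only the stopping condition, a single stepped run can be read both ways. This sketch assumes plateau results have already been collected, uses the 2-second p95 SLA from the example, and treats an error rate above 5% as the failure point; that failure criterion and the sample data are hypothetical.

```python
from typing import NamedTuple, Optional

class Plateau(NamedTuple):
    vus: int
    p95_s: float
    error_rate: float  # fraction of failed requests

def analyze_steps(steps: list[Plateau], sla_p95_s: float = 2.0,
                  failure_error_rate: float = 0.05) -> tuple[Optional[int], Optional[int]]:
    """Read one stepped run two ways and return (capacity_ceiling, breaking_point).

    capacity_ceiling: highest VU step that met the p95 SLA before the first breach
                      (a capacity test would stop at that breach).
    breaking_point:   first VU step whose error rate exceeded `failure_error_rate`
                      (a stress test keeps escalating until it finds this).
    """
    capacity_ceiling: Optional[int] = None
    breaking_point: Optional[int] = None
    sla_breached = False
    for step in sorted(steps, key=lambda s: s.vus):
        if not sla_breached:
            if step.p95_s <= sla_p95_s:
                capacity_ceiling = step.vus
            else:
                sla_breached = True
        if breaking_point is None and step.error_rate > failure_error_rate:
            breaking_point = step.vus
    return capacity_ceiling, breaking_point

# Hypothetical plateau results from one stepped run
run = [Plateau(1000, 0.8, 0.000), Plateau(1500, 1.3, 0.002), Plateau(2000, 1.9, 0.004),
       Plateau(2500, 2.6, 0.010), Plateau(3000, 4.8, 0.030), Plateau(3500, 9.5, 0.120)]
print(analyze_steps(run))  # (2000, 3500)
```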

AWS’s anti-pattern warning about testing “only to your expected load and not beyond” applies directly here: capacity testing is the practice of testing beyond expected load specifically to find the SLA boundary – an AWS-recommended practice, not an edge case.

When Capacity Testing Matters Most: The Scenarios That Demand It

Capacity testing should be mandatory in these scenarios:

- Pre-growth events: Before an IPO, major product launch, or geographic expansion – a streaming service expecting 3× user growth after international launch needs to know its current ceiling

- Feature impact assessment: A new real-time notification feature is projected to add 400 WebSocket connections per 1,000 users; capacity testing reveals whether existing infrastructure absorbs that or requires a dedicated connection tier

- Infrastructure migration validation: Moving from self-managed servers to a container orchestration platform – capacity test the new environment to confirm it matches or exceeds the previous ceiling

- Contractual SLA guarantees: Enterprise customers require documented capacity commitments (e.g., “guaranteed support for 5,000 concurrent sessions with <600ms p95 latency”)

For authoritative cloud performance measurement standards, NIST Cloud Computing Performance Standards provides the benchmarking framework context.

Capacity Testing with WebLOAD: Goal-Oriented Testing and Scaling Validation

WebLOAD’s Goal-Oriented Testing feature aligns particularly well with capacity testing. Define a performance goal – maintain p95 response time ≤ 600ms – and the platform automatically increments virtual users in configurable steps (e.g., 100 VUs per step), records response metrics at each plateau, and flags the capacity ceiling at the step where the target is first violated. In one example configuration, Goal-Oriented Testing identified a ceiling at 2,400 concurrent users, giving the infrastructure team an exact scaling trigger point instead of a guess.

The hybrid cloud architecture – combining on-premises and cloud-based load generators – enables high-VU capacity tests without requiring dedicated hardware for peak virtual user counts, keeping testing costs proportional to actual infrastructure investment.

Type 4: Soak Testing (Endurance Testing) – Catching What Time Reveals

Soak testing runs a system at sustained, realistic load for an extended period – hours or days – to detect problems invisible to shorter tests. It’s the most commonly skipped test type and the one responsible for the most painful production incidents. For a detailed look at planning and executing these extended-duration tests, see our guide on endurance testing in software testing.

An application server consumes 2.1 GB RAM at launch and peaks at 2.4 GB during a 30-minute load test – well within the 4 GB limit. A 12-hour soak test reveals memory growing to 3.8 GB with no plateau, confirming a leak: at the observed rate of roughly 0.14 GB per hour, it would cause an out-of-memory crash after about 13 to 14 hours of continuous production traffic. That 30-minute test didn’t lie – it just didn’t have enough time to tell the whole truth.
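A quick way to turn a soak test’s memory trend into a deadline is straight-line extrapolation between two readings. The sketch below uses the launch and 12-hour figures from this example; the linear-growth assumption is the important caveat, since a curve that is starting to flatten would push the real crash point later and an accelerating one would pull it earlier.

```python
from typing import Optional

def hours_until_limit(start_gb: float, observed_gb: float, observed_after_h: float,
                      limit_gb: float) -> Optional[float]:
    """Straight-line estimate of hours from start until memory reaches limit_gb."""
    rate_gb_per_h = (observed_gb - start_gb) / observed_after_h
    if rate_gb_per_h <= 0:
        return None  # no growth observed, nothing to extrapolate
    return (limit_gb - start_gb) / rate_gb_per_h

estimate = hours_until_limit(start_gb=2.1, observed_gb=3.8, observed_after_h=12, limit_gb=4.0)
print(f"{estimate:.1f} hours")  # 13.4 hours
```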

The Google SRE Book’s zero-MTTR principle applies directly: a memory leak caught in soak testing has zero mean time to repair – you fix it before deployment. The same leak caught via a 3 AM production alert has the MTTR of however long it takes your on-call engineer to diagnose and redeploy.

Performance Engineer’s Perspective: I recommend a minimum 4-hour soak for any system that runs continuously in production. For streaming platforms, payment processors, or anything with a 72-hour uptime SLA, run it for the full duration you’re promising your customers.

The Failure Modes Only Soak Testing Catches

These failure modes are invisible to tests lasting under an hour:

- Memory leaks: Heap allocations that aren’t freed accumulate over thousands of request cycles. A leak of 50 KB per request is undetectable in a 1,000-request test but consumes 5 GB after 100,000 requests

- Database connection pool exhaustion: Connections that aren’t properly returned to the pool – often because an exception path bypasses a release call that was never placed in a `finally` block – accumulate until none are available. At 1 leaked connection per 10,000 transactions, you hit a 200-connection pool limit after 2 million transactions (roughly 6–8 hours at a moderate 70–90 TPS)

- Session object bloat: HTTP sessions or cached user objects that grow unbounded when session cleanup routines fail silently under sustained load

- GC pressure escalation (JVM): Garbage collection pause frequency and duration trends that only become problematic after thousands of GC cycles, when the old generation fills and full GC pauses exceed 2 seconds

- Log and temp file growth: Disk consumption from verbose logging or temp file creation that doesn’t hit storage limits during short tests but fills a 50 GB partition in 16 hours of production traffic
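The connection-leak mechanism is easy to reproduce with a toy pool. Everything below is hypothetical (`ToyPool` is not a real driver API): the leaky handler releases its connection only on the success path, the safe handler releases it in a `finally` block, and `hours_to_exhaustion` applies the same arithmetic as the pool-exhaustion example above.

```python
class ToyPool:
    """Hypothetical stand-in for a connection pool; not a real driver API."""

    def __init__(self, size: int):
        self.available = size

    def acquire(self) -> object:
        if self.available == 0:
            raise RuntimeError("pool exhausted")
        self.available -= 1
        return object()

    def release(self, conn: object) -> None:
        self.available += 1

def leaky_handler(pool: ToyPool, fail: bool) -> None:
    conn = pool.acquire()
    if fail:
        raise ValueError("query failed")  # exception path: release() below is skipped
    pool.release(conn)

def safe_handler(pool: ToyPool, fail: bool) -> None:
    conn = pool.acquire()
    try:
        if fail:
            raise ValueError("query failed")
    finally:
        pool.release(conn)  # runs on both the success and the exception path

def run(handler, pool: ToyPool, transactions: int, fail_every: int) -> int:
    """Simulate traffic where every `fail_every`-th transaction errors; return free connections."""
    for i in range(1, transactions + 1):
        try:
            handler(pool, fail=(i % fail_every == 0))
        except ValueError:
            pass  # error is swallowed upstream, so the leak stays silent
    return pool.available

def hours_to_exhaustion(pool_size: int, tx_per_leak: int, tps: float) -> float:
    """Hours until a pool leaking one connection every `tx_per_leak` transactions is empty."""
    return pool_size * tx_per_leak / tps / 3600

print(run(leaky_handler, ToyPool(200), 100_000, 10_000))  # 190
print(run(safe_handler, ToyPool(200), 100_000, 10_000))   # 200
print(round(hours_to_exhaustion(200, 10_000, 80), 1))     # 6.9
```

At 80 TPS, a 200-connection pool leaking one connection per 10,000 transactions empties in just under 7 hours, inside the 6–8 hour range quoted above.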

How Long Is Long Enough? Soak Test Duration Guidelines

| System Risk Profile | Minimum Soak Duration | Rationale |
|---|---|---|
| Internal tools, low-traffic admin panels | 4 hours | Catches obvious leaks and connection pool issues |
| Customer-facing web applications | 8–12 hours | Simulates a full business day; surfaces most memory and session issues |
| Payment processors, healthcare platforms | 24 hours | Matches regulatory and SLA expectations for continuous availability |
| Streaming, real-time data, 72h+ SLA systems | 48–72 hours | Tests the actual uptime commitment you’re selling to customers |

A soak test has run “long enough” when memory consumption, active connections, and response time all reach a visible plateau. If any metric shows a continuous upward trend at hour 8, extending to 12 or 24 hours isn’t optional – it’s the only way to determine whether the trend stabilizes or leads to failure.

All 4 Types at a Glance: Comparison Table

| Attribute | Load Testing | Stress Testing | Capacity Testing | Soak Testing |
|---|---|---|---|---|
| Goal | Validate SLA compliance at expected load | Find breaking point and failure behavior | Determine max load within SLA thresholds | Detect time-dependent degradation |
| Load level | Expected peak concurrency | Beyond expected; escalating to failure | Incrementally beyond expected until SLA breach | Expected (sustained for extended duration) |
| Typical duration | 15–60 minutes | 30–90 minutes (escalating) | 30–120 minutes (stepped) | 4–72 hours |
| Load profile | Linear ramp → hold → ramp-down | Staircase or exponential escalation | Goal-oriented stepped increments | Flat sustained load |
| Key unique metric | p95/p99 latency at target VU count | Breaking point VU/TPS + recovery time | Max VUs within SLA (capacity ceiling) | Memory/resource trend over hours |
| What it detects | Response time issues, query bottlenecks, error rates under normal load | Crash thresholds, cascading failures, recovery behavior | Infrastructure scaling trigger points, headroom percentages | Memory leaks, connection pool exhaustion, GC degradation, log growth |
| What it misses | Failure behavior beyond normal load | Long-duration issues, SLA compliance at normal levels | Failure behavior past the ceiling, time-dependent issues | Performance at load levels above or below the sustained test level |
| When mandatory | Every release; CI/CD pipeline gate | Before unpredictable traffic events; after architecture changes | Before growth events; for SLA documentation | Before any extended uptime commitment |

Frequently Asked Questions

Is running all four test types on every release realistic?

Not for most teams, and that’s fine. Run load tests on every release (automate these in CI/CD). Run stress and capacity tests quarterly or before major infrastructure changes. Run soak tests before any release with extended uptime commitments or after changes to memory management, session handling, or database connection code. Prioritize based on what changed, not a rigid schedule.

Can a single test combine stress and capacity testing goals?

Technically, a stepped load escalation test produces data for both – you’ll see the SLA breach point (capacity ceiling) and the failure point (stress result) in the same run. However, the analysis mindset differs. Capacity testing cares about the moment SLA thresholds are crossed; stress testing cares about what happens after. Combining them in one execution is efficient, but treat the analysis as two separate exercises with distinct reports and action items.

Is it worth soak testing for 72 hours if our deployment pipeline ships updates daily?

This is a common rationalization for skipping soak tests, and it’s often wrong. Daily deployments don’t restart all system components – connection pools, cached sessions, and JVM heap state frequently persist across deployments. A 72-hour soak test validates the cumulative behavior of everything that isn’t restarted. If your deployment truly recreates every process and connection from scratch, a shorter soak may suffice – but verify that assumption first.

How do I convince stakeholders to invest time in testing types beyond basic load testing?

Lead with the DORA 2023 finding: teams that invest in delivery speed without equivalent operational reliability investment achieve worse organizational outcomes. Then present the cost asymmetry: a memory leak caught in a 12-hour soak test costs one engineering day to fix. The same leak discovered via a 3 AM production crash costs incident response time, customer trust, potential SLA penalties, and a post-mortem that consumes a full team’s week.

What’s the minimum infrastructure needed to run meaningful stress or capacity tests?

Your test environment must mirror production topology – same service mesh, same database engine, same CDN layer – even if individual components are scaled down proportionally. A half-scale replica with proportionally adjusted VU targets produces reliable ratios. A fundamentally different architecture (e.g., single-node test vs. multi-node production) produces misleading data regardless of how many virtual users you throw at it.

References and Authoritative Sources

- Perry, A. & Luebbe, M. (2017). Chapter 17: Testing for Reliability. In Beyer, B., Jones, C., Petoff, J., & Murphy, N.R. (Eds.), Site Reliability Engineering: How Google Runs Production Systems. O’Reilly Media / Google. Retrieved from https://sre.google/sre-book/testing-reliability/

- Amazon Web Services. (2024, November 6). PERF05-BP04: Load Test Your Workload. AWS Well-Architected Framework, Performance Efficiency Pillar. Retrieved from https://docs.aws.amazon.com/wellarchitected/latest/performance-efficiency-pillar/perf_process_culture_load_test.html

- DeBellis, D., Harvey, N., McGhee, S., Farley, D., Winer, J., et al. (2023). Accelerate State of DevOps Report 2023. DORA (DevOps Research and Assessment), Google Cloud. Retrieved from https://dora.dev/research/2023/dora-report/2023-dora-accelerate-state-of-devops-report.pdf