Your failover plan looks great on paper, until the moment it needs to work. And the uncomfortable truth is that most failover plans have never been validated under conditions that resemble a real outage.

Failover testing is the practice of deliberately simulating system, component, or network failures to verify that backup resources activate correctly, services recover within defined time limits, and data integrity is maintained throughout the transition. It’s the difference between hoping your redundancy works and proving it does.

Google’s SRE discipline quantifies why this matters: “The more bugs you can find with zero MTTR, the higher the Mean Time Between Failures (MTBF) experienced by your users” [1]. Zero-MTTR detection (catching failures within the testing pipeline itself, before production is affected) is only possible when failover testing is embedded as a repeatable, instrumented process rather than an annual checkbox exercise.

This guide exists because three problems keep surfacing across QA and SRE teams: (1) there’s no standardized, repeatable testing framework most teams can point to; (2) failover and load testing are run in isolation, missing the critical intersection where systems break under stress; and (3) the boundary between failover testing and disaster recovery testing remains fuzzy, creating dangerous coverage gaps. You’ll walk away with failover architecture decision criteria, a five-phase test process, a phase-gated checklist template, the metrics that matter, and a concrete methodology for validating failover behavior under realistic traffic conditions.

- Failover Testing vs. Disaster Recovery Testing: Why the Distinction Matters

- Failover Architectures Explained: Active-Passive, Active-Active, and N+1

- The Step-by-Step Failover Test Process: From Failure Simulation to Recovery Validation

- Failover Testing Checklist: Pre-Test, During-Test, and Post-Test

- References and Authoritative Sources

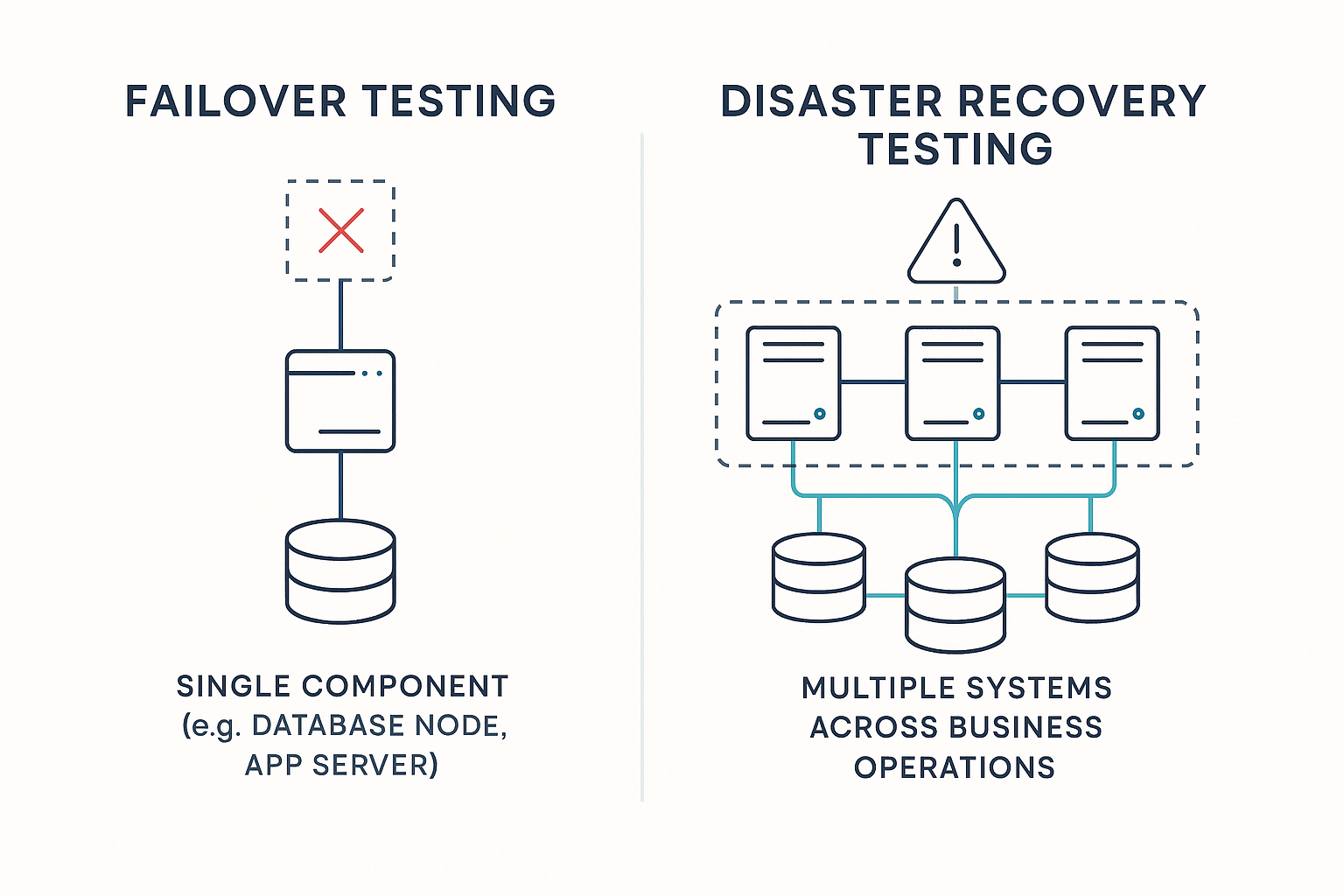

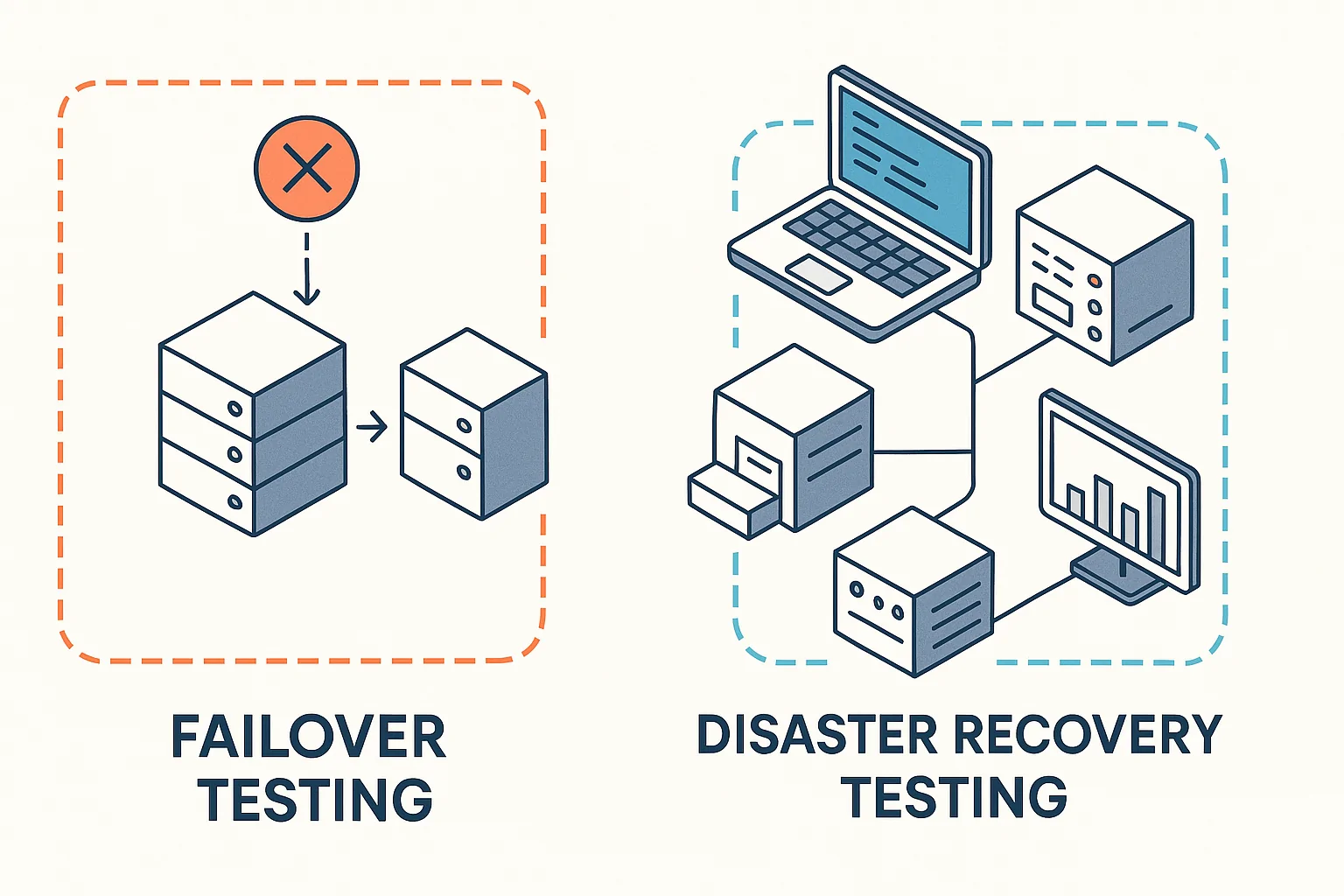

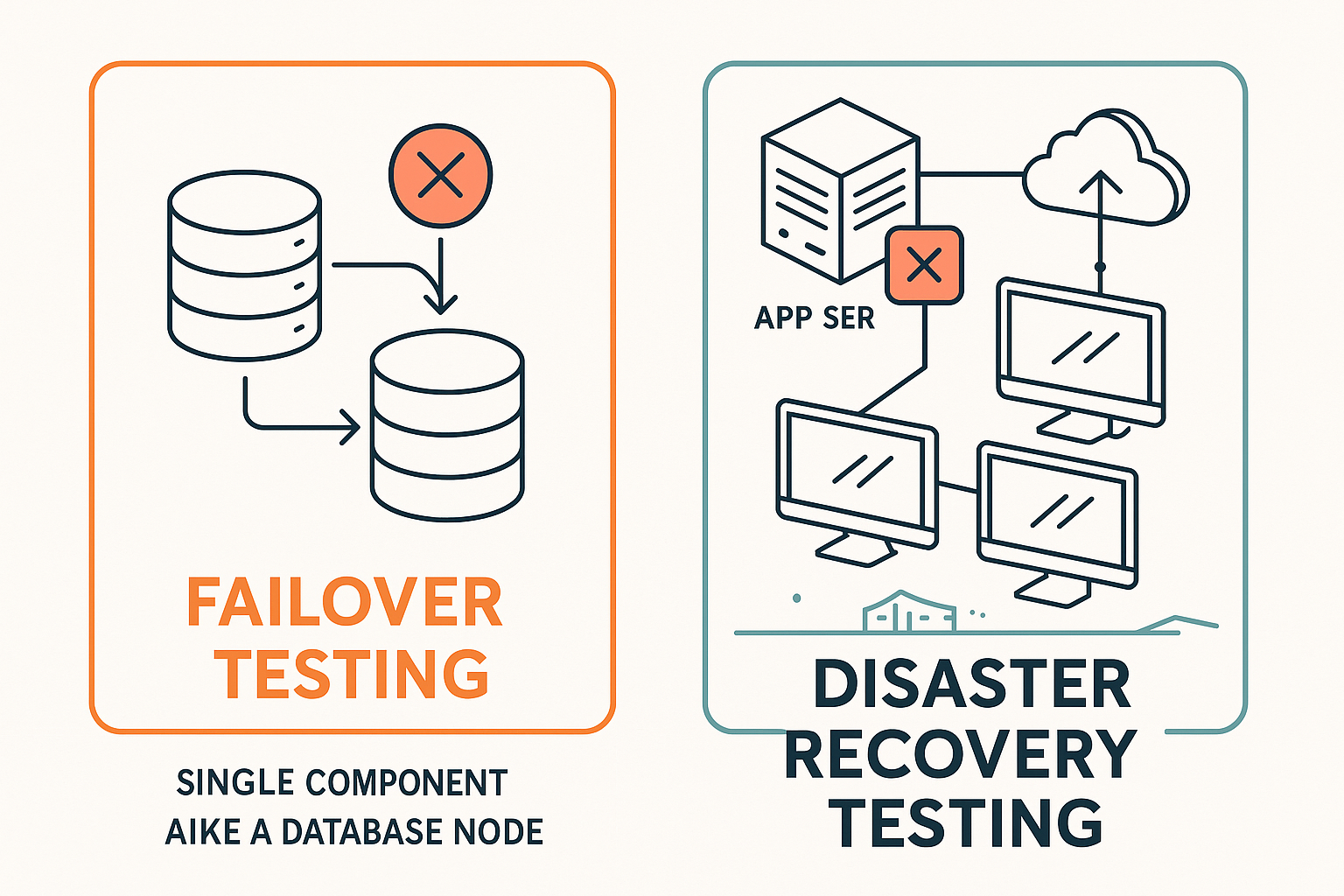

Failover Testing vs. Disaster Recovery Testing: Why the Distinction Matters

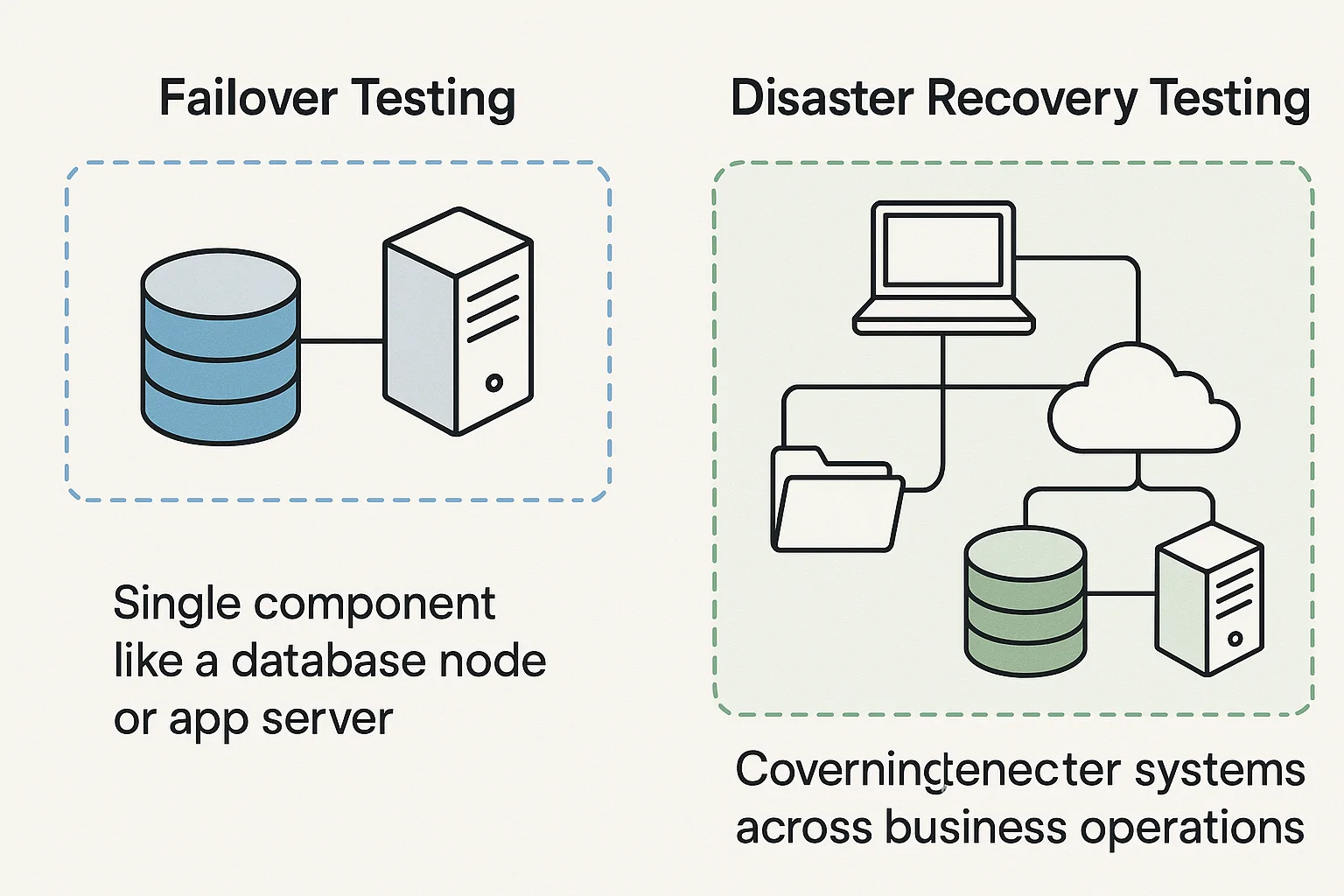

Teams routinely conflate these two disciplines and end up with coverage gaps in both. The distinction is precise and consequential:

| Attribute | Failover Testing | Disaster Recovery (DR) Testing |
|---|---|---|
| Scope | Single component or service layer (database node, app server, load balancer) | End-to-end business operations across multiple systems |
| Trigger | Automated health-check failure or manual switchover command | Declared disaster event (site loss, regional outage, ransomware) |
| Time Horizon | Seconds to low minutes (RTO typically < 5 min) | Minutes to hours (RTO typically 1–24 hours depending on tier) |
| Success Metric | Backup resource active, traffic rerouted, data integrity confirmed within RTO/RPO | Full business process restored, communication protocols executed, third-party SLAs met |

NIST SP 800-34 Rev. 1 draws this line explicitly: contingency planning spans multiple tiers, from component-level recovery to full organizational continuity, and each tier demands its own validation [2]. A database node that fails over in 12 seconds can still violate a 15-minute RPO if asynchronous replication lagged by 20 minutes before the failure occurred. That’s a failover success and a DR failure simultaneously.
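The 12-second example can be expressed as two independent checks. The sketch below is illustrative only: the `FailoverResult` type and `evaluate` function are hypothetical names, and the 5-minute RTO is borrowed from the failover column of the table above as an assumption.

```python
from dataclasses import dataclass


@dataclass
class FailoverResult:
    switchover_seconds: float        # time for the standby to take over
    replication_lag_seconds: float   # standby lag at the moment the primary failed


def evaluate(result, rto_seconds, rpo_seconds):
    """Return separate verdicts for the RTO and RPO targets."""
    return {
        "rto_met": result.switchover_seconds <= rto_seconds,
        "rpo_met": result.replication_lag_seconds <= rpo_seconds,
    }


# Example from the text: 12 s switchover, 20 min replication lag, 15 min RPO.
# The 5 min RTO is an assumed target, not a value stated for this example.
verdict = evaluate(FailoverResult(12, 20 * 60), rto_seconds=5 * 60, rpo_seconds=15 * 60)
print(verdict)  # {'rto_met': True, 'rpo_met': False}
```

Reporting the two verdicts separately keeps a fast switchover from masking a replication-lag problem.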

For the full contingency planning framework, see the NIST Contingency Planning Guide for Federal Information Systems (SP 800-34 Rev. 1) [2].

What Failover Testing Actually Covers (and What It Doesn’t)

Failover testing validates automatic or manual switchover to redundant resources at the component or service layer. Its scope includes:

- Database node failover: Primary PostgreSQL or MySQL instance to a hot standby via streaming replication

- Application server cluster: Health-check-driven removal and replacement of a failed app server behind a load balancer

- Network path redundancy: BGP route failover or VRRP/HSRP switchover between primary and backup network paths

- Load balancer failover: Active load balancer transferring VIP ownership to a standby unit

What’s explicitly out of scope: full business process restoration workflows, end-user communication trees, third-party vendor SLA enforcement, and regulatory notification procedures. Those belong to DR testing.

Microsoft’s Azure Well-Architected Framework defines recoverability as “the ability to restore normal operations after a disruption within agreed recovery time (RTO) and recovery point (RPO) targets” [3]. Failover testing validates that individual components meet their component-level RTO/RPO. DR testing validates that the aggregate system meets the business-level recovery window.

For teams building resilient system architectures from the ground up, the NIST Framework for Developing Cyber-Resilient Systems provides the systems-engineering perspective on redundancy verification.

When You Need Both: Combining Failover and DR Testing in Your Resilience Program

Failover testing should be a prerequisite gate for DR testing, not an afterthought woven into a DR drill.

Consider this scenario: a team runs individual failover tests on six microservices, each achieving an RTO of 45 seconds. All pass. During a full DR drill, however, the services must recover in sequence due to dependency chains, and the aggregate recovery takes at least 4.5 minutes (6 × 45 seconds), violating a 2-minute composite SLA. Each component passed; the system failed.
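To see why component results do not add up the way teams expect, here is a minimal sketch that computes the aggregate recovery time over a dependency graph. The service names (`svc1` to `svc6`) and the `aggregate_rto` helper are hypothetical, and the graph is assumed to be acyclic. A strictly sequential chain of six 45-second recoveries sums to 270 seconds before any orchestration overhead is counted.

```python
def aggregate_rto(rto, depends_on):
    """Time until every service has recovered, assuming a service can only
    start recovering once all of its dependencies have finished."""
    finish = {}

    def finish_time(name):
        if name not in finish:
            deps = depends_on.get(name, [])
            start = max((finish_time(d) for d in deps), default=0.0)
            finish[name] = start + rto[name]
        return finish[name]

    return max(finish_time(name) for name in rto)


services = [f"svc{i}" for i in range(1, 7)]
rto = {name: 45 for name in services}

# Each service depends on the previous one: a strict chain.
chain = {services[i]: [services[i - 1]] for i in range(1, 6)}

sequential = aggregate_rto(rto, chain)   # 270.0 seconds
parallel = aggregate_rto(rto, {})        # 45.0 seconds, no dependencies
print(sequential, parallel, sequential <= 120)
```

The same six component results produce a 45-second or a 270-second outage depending only on the dependency structure, which is what a component-level test cannot reveal.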

NIST SP 800-34 Rev. 1 addresses this directly by requiring organizations to evaluate the “interrelationships between contingency planning, organizational resiliency, and the system development life cycle” [2]. Component-level validation without system-level integration testing creates a false sense of readiness.

SRE Perspective: Google’s SRE discipline treats testing in isolation as a known failure mode. The system-level view, where component interactions create emergent failure modes invisible at the unit level, is always required [1].

Failover Architectures Explained: Active-Passive, Active-Active, and N+1

Meaningful failover tests require understanding what you’re testing against. Here’s the decision-grade comparison:

| Attribute | Active-Passive | Active-Active | N+1 Redundancy |
|---|---|---|---|
| Typical RTO | 15–120 seconds | < 5 seconds (near-zero with session persistence) | 10–60 seconds |
| RPO Risk | Moderate (replication lag dependent) | Low (both nodes write simultaneously) | Moderate (spare node sync state dependent) |
| Traffic Distribution | 100% to primary; 0% to standby | Split across all nodes | Even across N nodes; spare idle or warm |
| Failover Trigger | Health-check timeout → promote standby | Load balancer removes failed node from pool | Orchestrator activates spare into pool |
| Primary Test Complexity | Medium: verify promotion, DNS/routing update, data sync | High: validate data consistency, conflict resolution, capacity absorption | Medium: verify spare readiness, pool rebalancing, alerting on redundancy breach |

Active-Passive Failover: The Reliable Workhorse and Its Hidden RTO Risk

In active-passive, one primary node handles all traffic while a standby replicates data but serves zero live requests until failover triggers. Example: a primary PostgreSQL instance with streaming replication to a hot standby, where failover fires when the primary stops responding to health checks for more than 10 seconds.

The hidden RTO inflation comes from compounding delays most teams don’t account for:

- Health-check interval: 10 seconds

- Detection propagation to HA manager: 5 seconds

- Standby promotion and readiness confirmation: 10 seconds

- DNS TTL expiry for clients caching the old IP: 30 seconds

Minimum observable RTO: ~55 seconds, even though the standby “activated” in 10 seconds. DNS TTL is the single most commonly overlooked RTO inflator in active-passive topologies. Teams that set TTLs to 300 seconds (a common default) and don’t test with real client behavior will see RTO numbers in testing that bear no resemblance to production.
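The delay budget above is simple addition, which makes it easy to model before a test. This is a rough sketch; `observable_rto` is a hypothetical helper, and it assumes the four delays occur strictly one after another.

```python
def observable_rto(health_check_interval, detection_propagation, promotion, dns_ttl):
    """Sum the sequential delays a client experiences, in seconds."""
    return health_check_interval + detection_propagation + promotion + dns_ttl


print(observable_rto(10, 5, 10, 30))    # 55
print(observable_rto(10, 5, 10, 300))   # 325
```

With a 300-second TTL the same cluster shows an observable RTO of more than five minutes, even though nothing about the standby promotion changed.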

Split-brain risk also demands explicit testing: if the primary comes back online before the HA manager has fully fenced it, both nodes may accept writes simultaneously. Prevention mechanisms like STONITH (Shoot The Other Node In The Head) or I/O fencing must be verified during the test, not assumed to work based on configuration alone.

Active-Active Failover: Higher Availability, Higher Testing Complexity

In active-active, all nodes serve live traffic simultaneously. When one fails, remaining nodes absorb its share. The near-zero RTO is attractive, but testing complexity increases sharply because you must validate capacity absorption, data consistency, and conflict resolution simultaneously.

Here’s the scenario that exposes the gap: three-node active-active cluster, each handling 60% of its rated capacity. One node fails. The remaining two must absorb 50% more traffic each, jumping to 90% capacity. Your test must validate: zero 5xx errors during redistribution, p99 latency stays under 500ms, and zero data loss on in-flight write operations to the failed node.
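The capacity arithmetic in this scenario generalizes to any cluster size. The sketch below assumes traffic is redistributed evenly across survivors; `utilization_after_failure` is a hypothetical helper name.

```python
def utilization_after_failure(nodes, utilization, failed):
    """Per-node utilization after `failed` nodes drop out, assuming the
    total load is spread evenly across the surviving nodes."""
    survivors = nodes - failed
    if survivors <= 0:
        raise ValueError("no surviving nodes to absorb traffic")
    return utilization * nodes / survivors


after = utilization_after_failure(nodes=3, utilization=0.60, failed=1)
print(f"{after:.0%}")  # 90%
```

Running the same function with two failed nodes returns a value above 1.0, which is a quick way to spot configurations where a second failure exceeds rated capacity.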

This is precisely where load testing becomes non-negotiable. Microsoft’s Azure Well-Architected Framework states it directly: “Ensure that your graceful degradation implementation and scaling strategies are effective by performing active malfunction AND simulated load testing” [3].

Engineering Insight: Active-active architectures often look clean in tests that trigger failover at idle. The real test is triggering failover when your nodes are already at 60%+ capacity. That’s where cascading failures, connection pool exhaustion, and conflict resolution bugs surface.

N+1 Redundancy: The Pragmatic Middle Ground for Scalable Systems

N+1 maintains one spare node for every N active nodes, common in web server farms, API gateway pools, and microservice clusters. The spare should be warm (pre-provisioned, receiving health-check traffic) rather than cold (requiring boot and configuration).

Test scenario: five-node API cluster (N=5, spare=1). Primary test: terminate one node; verify spare activates within 15 seconds and receives routed traffic within 30 seconds. Secondary test: terminate two nodes simultaneously to document the breach-of-redundancy behavior and confirm monitoring alerts fire correctly within 60 seconds.

N+1 does not protect against simultaneous multi-node failure. Your test plan must explicitly verify what happens when redundancy is breached, not to prove the system survives, but to prove the alerting and escalation pathways work when it doesn’t.
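The two N+1 tests above can be scored against their stated thresholds (15, 30, and 60 seconds). This is a sketch only: `evaluate_n_plus_one` is a hypothetical helper, and the observed timings passed in are made-up illustration values.

```python
def evaluate_n_plus_one(spare_active_s, traffic_routed_s, breach_alert_s):
    """Compare observed timings (seconds after node termination) with the
    thresholds from the test scenario. Pass None if no breach alert fired."""
    return {
        "spare_activated_in_time": spare_active_s <= 15,
        "traffic_routed_in_time": traffic_routed_s <= 30,
        "breach_alert_in_time": breach_alert_s is not None and breach_alert_s <= 60,
    }


# Hypothetical run: the spare behaved well, but the breach alert never fired.
result = evaluate_n_plus_one(spare_active_s=11.2, traffic_routed_s=24.0, breach_alert_s=None)
print(result)
```

Treating a missing alert as an explicit failure keeps the secondary test honest: the goal there is to prove the alerting path, not node survival.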

For the systems-engineering perspective on verifying redundancy under realistic conditions, see the NIST Framework for Developing Cyber-Resilient Systems.

The Step-by-Step Failover Test Process: From Failure Simulation to Recovery Validation

Here’s the five-phase process, designed for repeatability and audit documentation.

| Phase | Objective | Key Actions | Success Criterion | Owner |
|---|---|---|---|---|
| 1. Define Triggers & Criteria | Establish what constitutes failure and success | Document trigger conditions, set RTO/RPO thresholds, assign roles | All criteria reviewed and signed off by stakeholders | QA Lead / SRE |
| 2. Simulate Failure | Inject a realistic failure under load | Execute failure injection while load test is active | Failure detected by monitoring within TTD threshold | Performance Engineer |
| 3. Measure Recovery | Capture time-series metrics through the event | Record TTD, TTA, RTO, RPO, error rate, throughput delta | All metrics captured with < 1-second granularity | SRE / Monitoring |
| 4. Validate Data Integrity | Confirm no data loss or corruption | Run transaction log comparison, checksums, smoke tests | Row count delta ≤ in-flight transactions; checksum match = 100% | DBA / QA Lead |
| 5. Failback & Document | Restore original topology and record findings | Execute failback, verify replication re-sync, publish test report | Failback completes within RTO; report submitted to stakeholders | SRE / QA Lead |

Phase 1. Identify Failover Triggers and Define Your Pass/Fail Criteria

Before any failure injection, document these trigger types:

- Process death: Primary database or application process terminates unexpectedly

- Health-check timeout: Consecutive failed health probes exceed configured threshold

- Network partition: Loss of connectivity between nodes or between node and load balancer

- Resource exhaustion: CPU > 95% sustained, memory OOM, or disk full

- Manual administrative action: Operator-initiated failover for maintenance

Then define measurable pass/fail criteria:

| Metric | Target | Failure Condition |
|---|---|---|
| RTO | ≤ 30 seconds | First successful request on backup > 30 seconds post-failure |
| RPO | ≤ 5 seconds of data | Last replicated transaction > 5 seconds behind failure timestamp |
| Error rate during switchover | ≤ 0.1% of requests | Error rate exceeds 0.1% in the failover window |

Google SRE’s zero-MTTR concept applies here: “It’s possible for a testing system to identify a bug with zero MTTR” when system-level tests detect the same problem monitoring would detect [1]. Precise trigger definition is what enables this; vague triggers produce vague results.
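The pass/fail table translates directly into an automated gate. The following is a minimal sketch using the three targets from the table; the function name `evaluate_run` and the sample numbers are hypothetical.

```python
def evaluate_run(rto_s, rpo_s, failed_requests, total_requests):
    """Apply the three pass/fail targets to one test run."""
    if total_requests <= 0:
        raise ValueError("a run without traffic cannot be evaluated")
    error_rate = failed_requests / total_requests
    checks = {
        "rto": rto_s <= 30.0,            # first successful request within 30 s
        "rpo": rpo_s <= 5.0,             # at most 5 s of unreplicated data
        "error_rate": error_rate <= 0.001,  # at most 0.1% of requests failed
    }
    return all(checks.values()), checks


passed, checks = evaluate_run(rto_s=22.0, rpo_s=3.0, failed_requests=15, total_requests=10_000)
print(passed, checks)
```

In this made-up run, RTO and RPO are met but 15 failures in 10,000 requests (0.15%) exceeds the error-rate target, so the run as a whole fails.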

Practical warning: Health-check timeouts set too aggressively cause false-positive failovers under temporary load spikes. Set thresholds based on observed p99 latency under peak load, not best-case averages.
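One way to derive a health-check timeout from observed latency is to take the p99 under peak load and apply a safety margin. The sketch below uses a nearest-rank percentile; the function names and the 2x safety factor are assumptions chosen for illustration, not recommended values.

```python
def percentile_nearest_rank(samples, pct):
    """Nearest-rank percentile: the smallest sample such that at least
    pct percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, (pct * len(ordered) + 99) // 100)  # integer ceiling of pct*n/100
    return ordered[rank - 1]


def health_check_timeout_ms(peak_latencies_ms, safety_factor=2.0):
    """Timeout = p99 latency under peak load times an assumed safety factor."""
    return percentile_nearest_rank(peak_latencies_ms, 99) * safety_factor


# Hypothetical latency samples under peak load: 1 ms through 100 ms.
samples = list(range(1, 101))
print(percentile_nearest_rank(samples, 99))  # 99
print(health_check_timeout_ms(samples))      # 198.0
```

Feeding this function samples from an idle system would produce a much tighter timeout, which is exactly the false-positive risk described above.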

Phase 2. Simulate the Failure: Techniques for Realistic Failure Injection

Failure injection ranges from simple to sophisticated:

- Process kill: `sudo systemctl stop postgresql`, then verify the HA manager detects failure within the configured check interval

- Network partition: `iptables -A INPUT -s <peer_node_ip> -j DROP` to simulate a partial network split

- Resource exhaustion: `stress-ng --cpu 8 --timeout 120s` to drive CPU to saturation and observe health-check behavior

- Cloud-native fault injection: AWS Fault Injection Simulator or Azure Chaos Studio for managed infrastructure experiments
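For repeatable runs, the command-line injections above can be wrapped in a small helper that records the injection timestamp. This is a sketch under assumptions: the `FAULTS` table and `inject` function are hypothetical, the peer IP is a placeholder, and the helper defaults to a dry run so that nothing executes unless explicitly requested.

```python
import subprocess
import time

# Commands taken from the list above; {peer_node_ip} is filled in at call time.
FAULTS = {
    "process_kill": ["sudo", "systemctl", "stop", "postgresql"],
    "network_partition": ["iptables", "-A", "INPUT", "-s", "{peer_node_ip}", "-j", "DROP"],
    "cpu_exhaustion": ["stress-ng", "--cpu", "8", "--timeout", "120s"],
}


def inject(kind, dry_run=True, **params):
    """Build (and optionally run) a fault command.
    Returns the injection timestamp and the argument list."""
    argv = [part.format(**params) for part in FAULTS[kind]]
    injected_at = time.time()  # reference point for TTD and RTO measurements
    if not dry_run:
        subprocess.run(argv, check=True)
    return injected_at, argv


_, argv = inject("network_partition", peer_node_ip="10.0.0.2")
print(" ".join(argv))  # iptables -A INPUT -s 10.0.0.2 -j DROP
```

Capturing the timestamp in the same call that issues the command avoids reconstructing the injection time from shell history afterwards.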

The critical requirement: run failure injection while a realistic load profile is active. WebLOAD’s spike testing profiles allow you to ramp from baseline (e.g., 50 virtual users) to peak (500 virtual users) over 60 seconds, establishing the traffic floor before failure injection begins. This approach, closely related to chaos testing methodologies, exposes RTO drift under concurrency, health-check flapping caused by resource contention, and connection pool exhaustion on the surviving nodes, none of which appear in idle-state tests.
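The ramp described above (50 to 500 virtual users over 60 seconds) is a linear profile. The sketch below models it generically; it is not WebLOAD’s API, and `virtual_users_at` is a hypothetical helper for reasoning about when to inject the failure.

```python
def virtual_users_at(t_seconds, baseline=50, peak=500, ramp_seconds=60):
    """Target virtual-user count t seconds into a linear ramp."""
    if t_seconds <= 0:
        return baseline
    if t_seconds >= ramp_seconds:
        return peak
    return round(baseline + (peak - baseline) * t_seconds / ramp_seconds)


for t in (0, 30, 60, 90):
    print(t, virtual_users_at(t))
# 0 50
# 30 275
# 60 500
# 90 500
```

Injecting the failure only after the profile has reached and held its peak keeps the measured RTO from being skewed by the ramp itself.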

Engineering Insight: The most dangerous failure mode you haven’t tested is the one that happens at 2 AM when your system is already at 80% capacity. Build your test to replicate that reality.

Phase 3. Measure Recovery Time and Capture Failover Metrics

Capture these metrics with sub-second granularity during the event window:

- Time to Detection (TTD): Elapsed time from failure injection to first monitoring alert

- Time to Activation (TTA): Elapsed time from detection to backup node accepting traffic

- RTO (actual): Elapsed time from failure injection to first successful health-check response on backup

- RPO (actual): Delta between last confirmed replicated transaction timestamp and failure injection timestamp

- Error rate: Count of 5xx/4xx responses during the transition window as a percentage of total requests

- Throughput degradation: Percentage drop in requests-per-second during the transition vs. pre-failure baseline
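The six definitions above reduce to differences between a handful of timestamps plus two ratios. The sketch below assumes all timestamps come from one synchronized clock; the `Timeline` type, the helper names, and the sample values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Timeline:
    """Event timestamps in seconds, all from the same synchronized clock."""
    failure_injected: float
    first_alert: float
    backup_accepting_traffic: float
    first_healthy_response: float
    last_replicated_txn: float


def recovery_metrics(t):
    return {
        "ttd": t.first_alert - t.failure_injected,
        "tta": t.backup_accepting_traffic - t.first_alert,
        "rto": t.first_healthy_response - t.failure_injected,
        "rpo": t.failure_injected - t.last_replicated_txn,
    }


def error_rate(status_codes):
    """Share of 4xx/5xx responses in the transition window."""
    return sum(1 for code in status_codes if code >= 400) / len(status_codes)


def throughput_degradation(baseline_rps, transition_rps):
    """Fractional drop in requests per second versus the pre-failure baseline."""
    return (baseline_rps - transition_rps) / baseline_rps


metrics = recovery_metrics(Timeline(100.0, 108.0, 120.0, 122.0, 97.0))
print(metrics)  # {'ttd': 8.0, 'tta': 12.0, 'rto': 22.0, 'rpo': 3.0}
print(error_rate([200] * 998 + [503, 404]))  # 0.002
print(throughput_degradation(1000, 750))     # 0.25
```

Note that RTO here is measured from injection, not from detection, so it always includes the TTD.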

Google SRE frames the business case: “The MTTR measures how long it takes the operations team to fix the bug… The more bugs you can find with zero MTTR, the higher the MTBF experienced by your users” [1]. Precise measurement is what converts a failover test from an exercise into an engineering improvement cycle, and understanding the performance metrics that matter is foundational to interpreting your results correctly.

RadView’s platform captures these metrics natively during load-driven failover scenarios, correlating application-layer response times with infrastructure-layer events in a single timeline, which eliminates the manual log-stitching that slows down post-test analysis.

Phase 4. Validate Data Integrity Post-Failover

This is the most commonly skipped step and the one with the highest consequences. A failover that restores service in 10 seconds but silently loses 3 minutes of order data is worse than a 5-minute outage. At least the outage is visible.

Three validation methods:

- Transaction log comparison: Query the last committed transaction ID on the failed node’s WAL (Write-Ahead Log) and compare it to the last replayed transaction on the backup. Expected delta: ≤ number of in-flight transactions at time of failure.

- Checksum validation: Run `SELECT COUNT(*), MD5(CAST(array_agg(id ORDER BY id) AS text)) FROM orders` on both nodes immediately post-failover. Committed row checksum match should be 100%.

- Application-layer smoke tests: Execute a defined set of critical read/write operations (create order, read user profile, update inventory) and verify correctness.

In active-active architectures, also audit conflict resolution logs for any writes that required automatic resolution. These indicate potential silent data inconsistency.
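The checksum comparison can also be done application-side once the IDs have been fetched from each node. This is a sketch, not a drop-in equivalent of the SQL query: the digest it produces will not match PostgreSQL’s output byte for byte, and the function names and sample IDs are hypothetical.

```python
import hashlib


def table_fingerprint(ids):
    """Row count plus an MD5 digest over the sorted IDs."""
    ordered = sorted(ids)
    digest = hashlib.md5(",".join(map(str, ordered)).encode("utf-8")).hexdigest()
    return len(ordered), digest


def missing_on_backup(primary_ids, backup_ids):
    """IDs committed on the failed primary that never reached the backup."""
    return sorted(set(primary_ids) - set(backup_ids))


primary = [101, 102, 103, 104]   # hypothetical IDs read from the old primary
backup = [101, 102, 103]         # hypothetical IDs read from the promoted standby

print(table_fingerprint(primary) == table_fingerprint(backup))  # False
print(missing_on_backup(primary, backup))                       # [104]
```

The fingerprint answers whether the nodes differ; the set difference tells you which rows to compare against the in-flight transaction list.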

NIST SP 800-34 Rev. 1 positions validation procedures as a compliance requirement within contingency plan testing, not an optional enhancement [2].

Phase 5. Failback, Document, and Iterate

Failback, returning the system to its original primary configuration, is itself a high-risk operation. A system that fails over cleanly may fail back catastrophically if replication diverged during the test.

Before initiating failback: verify replication lag between the backup node and the restored primary is < RPO threshold, confirmed via replication status query. Do not initiate failback if lag exceeds the threshold. Data divergence causing split-brain on restoration is a documented failure mode in both on-prem and cloud HA clusters.
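The failback precondition can be enforced as a gate that polls replication lag and refuses to proceed until it drops below the threshold. In this sketch, `read_lag_seconds` stands in for whatever replication status query your platform provides; the function name, the 5-second default, and the retry settings are assumptions.

```python
import time


def wait_until_safe_to_failback(read_lag_seconds, rpo_threshold_seconds=5.0,
                                attempts=10, pause_seconds=1.0):
    """Poll replication lag; return True once it is below the RPO threshold,
    or False if it never gets there within the allowed attempts."""
    for attempt in range(attempts):
        if read_lag_seconds() < rpo_threshold_seconds:
            return True
        if attempt < attempts - 1:
            time.sleep(pause_seconds)
    return False


# Hypothetical lag readings: the restored primary catches up on the third poll.
readings = iter([12.0, 7.5, 2.0])
print(wait_until_safe_to_failback(lambda: next(readings), pause_seconds=0))  # True
```

A `False` result should abort the failback rather than trigger a forced switch, since proceeding with excess lag is the divergence scenario described above.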

Post-test documentation must include: actual vs. expected values for each metric, deviations and root cause hypotheses, configuration changes made during the test, and specific action items with owners and deadlines. Feed findings back into runbook updates and CI/CD pipeline gates.

SRE Perspective: Failback is where overconfident teams get hurt. Treat it as a separate test event, not a cleanup step.

Failover Testing Checklist: Pre-Test, During-Test, and Post-Test

Use the checklist below as a practical template and adapt it to your environment. It is phase-gated: every item in a phase should be checked off before the next phase begins.

Pre-Test Checklist: Setting Up for a Valid, Safe Test

- Confirm test environment isolation: no shared resources with production traffic

- Capture baseline metrics: current throughput (TPS), p95/p99 latency, error rate, replication lag

- Verify standby replication lag ≤ RPO threshold (target: ≤ 5 seconds) via replication status dashboard

- Confirm monitoring and alerting is active and will capture the full test window with ≤ 1-second granularity

- Document rollback procedure and verify it has been tested independently within the past 30 days

- Notify all stakeholders (ops, DBA, network, application owners) with test window, scope, and expected impact

- Configure load test profile: ramp from baseline TPS to peak TPS over 60 seconds; confirm virtual user count, ramp rate, and transaction mix match production traffic patterns. For guidance on designing these profiles, see this guide on creating realistic load testing scenarios

- Validate that infrastructure configuration (instance types, network ACLs, health-check intervals) matches production within documented deviations

- Verify test scope sign-off from QA lead and SRE on-call

Practical tip: Run the load test in isolation for 10 minutes before failure injection to establish a clean baseline. Anomalies in baseline performance will corrupt your RTO/RPO measurements if not caught first.

References and Authoritative Sources

1. Perry, A. & Luebbe, M. (2017). Chapter 17 – Testing for Reliability. In Beyer, B., Jones, C., Petoff, J., & Murphy, N.R. (Eds.), Site Reliability Engineering: How Google Runs Production Systems. O’Reilly Media / Google, Inc. Retrieved from https://sre.google/sre-book/testing-reliability/

2. Swanson, M., Bowen, P., Phillips, A., Gallup, D., & Lynes, D. (2010). Contingency Planning Guide for Federal Information Systems (NIST Special Publication 800-34 Rev. 1). National Institute of Standards and Technology. Retrieved from https://nvlpubs.nist.gov/nistpubs/Legacy/SP/nistspecialpublication800-34r1.pdf

3. Microsoft. (n.d.). Architecture strategies for designing a reliability testing strategy – RE:08. Azure Well-Architected Framework, Microsoft Learn. Retrieved from https://learn.microsoft.com/en-us/azure/architecture/framework/resiliency/testing

Frequently Asked Questions

What’s the difference between failover testing and disaster recovery testing?

Failover testing validates that a system automatically switches to a redundant component when a primary fails, usually at the service, database, or datacenter level. Disaster recovery testing is broader, covering full business continuity including data restoration, communications, and recovery point/time objectives (RPO/RTO). Failover is one component of DR.

How often should failover testing be performed?

For critical production systems, at minimum quarterly with documented results. High-availability financial or healthcare systems often run failover drills monthly. Chaos engineering tools like Gremlin or AWS Fault Injection Simulator enable continuous low-impact failover validation in non-production environments.

Can I test failover in production without impacting users?

Yes, with careful scope control. Techniques include: testing during low-traffic windows, using canary deployments to fail over a small user percentage first, implementing feature flags to revert quickly, and pairing with synthetic monitoring to catch any user-visible impact. Fully transparent failover is the goal and is achievable for well-architected systems.

What metrics matter most during a failover test?

Mean time to detect (MTTD): how long until the system notices the failure. Time to failover: how long until traffic routes to the backup (avoid abbreviating this as MTTF, which conventionally means mean time to failure). Also track user-visible error rate during transition, data consistency checks post-failover, and rollback time if the failover itself must be reversed.

Does every service in my system need automated failover?

No. Prioritize based on business impact and failure blast radius. Revenue-critical paths (payment, authentication, core APIs) warrant full automated failover. Internal dashboards, batch reporting, and low-traffic admin interfaces may only need documented manual procedures. Spending failover engineering effort where business impact is low is poor capital allocation.