A flash sale goes live. Concurrent users spike 8x in 90 seconds. The checkout service starts returning 503 errors — not because of a bug in the code, but because nobody knew the system could only sustain 3,800 concurrent sessions before the database connection pool ran dry. Eleven minutes of downtime. Revenue lost. Incident review scheduled.

This scenario isn’t hypothetical. It’s the predictable outcome of a team that ran load tests but never ran a capacity test. They confirmed the system performed well at expected traffic — and never bothered to find its ceiling.

As Mike Ulrich writes in the Google SRE Book, “Understanding the behavior of the service under heavy load is perhaps the most important first step in avoiding cascading failures” [1]. That understanding is exactly what capacity testing produces: a quantified ceiling, a named bottleneck, and a concrete infrastructure decision — not a vague “it seems fine.”

This guide gives you the end-to-end playbook. You’ll walk away with a precise methodology for identifying system ceilings, diagnosing resource exhaustion patterns (CPU, memory, thread pools, DB connections, network I/O), translating capacity test results into cloud auto-scaling policies, and choosing tooling that makes the process repeatable. Every section is built for engineers who already know performance testing basics and need the practitioner-grade depth that shallow overviews never deliver.

- What Is Capacity Testing in Software Engineering? (And Why It’s Not What You Think)

- The Capacity Testing Methodology: A Step-by-Step Engineering Playbook

- Phase 1: Test Planning — Defining Scope, SLAs, and Success Criteria Before You Write a Single Script

- Phase 2: Workload Modeling — Calculating Concurrent Users and Designing Realistic Load Profiles

- Phase 3: Environment Configuration — Why “Close Enough” Test Environments Produce Misleading Ceilings

- Phase 4: Execution and Monitoring — What to Watch While the Load Is Running

- Phase 5: Analysis and Reporting — Turning Raw Numbers Into Infrastructure Decisions

- Identifying Your System’s Ceiling: Resource Exhaustion Patterns Every Engineer Should Know

- CPU Saturation: The Ceiling Most Teams Hit First (and How to Spot It Early)

- Memory Exhaustion and GC Pressure: The Slow Collapse That Capacity Tests Catch and Load Tests Miss

- Database Connection Pool Limits: The Hidden Ceiling That Causes Most Checkout and API Failures

- Thread Pool Depletion and Network I/O Saturation: Two More Ceilings Your Tests Must Cover

- Capacity Testing in the Age of Cloud Auto-Scaling: Don’t Let Your Infra Outrun Your Tests

- Frequently Asked Questions

- References and Authoritative Sources

What Is Capacity Testing in Software Engineering? (And Why It’s Not What You Think)

Capacity testing determines the maximum workload a software system can sustain while still meeting defined performance SLAs — for example, p99 response time under 200ms with an error rate below 0.1%. Its output isn’t a pass/fail binary. It’s a quantified ceiling with a named first bottleneck: “This system supports 4,200 concurrent sessions; beyond that threshold, thread pool exhaustion causes p99 latency to exceed 500ms.”

Alex Perry and Max Luebbe frame it directly in the Google SRE Book: “Engineers use stress tests to find the limits on a web service. Stress tests answer questions such as: How full can a database get before writes start to fail? How many queries a second can be sent to an application server before it becomes overloaded, causing requests to fail?” [2]. Capacity testing operationalizes exactly these questions within a structured, repeatable methodology, aligned with the ISO 25010 quality model’s performance efficiency sub-characteristics: time behavior, resource utilization, and capacity.

The Software Engineering Definition: Capacity Testing in One Precise Sentence

Capacity testing is the process of incrementally increasing system load until a defined performance threshold is breached, in order to identify the maximum safe operating ceiling and the specific resource that becomes the first bottleneck.

A good capacity test output reads like this: “The system’s capacity ceiling is 4,200 concurrent sessions at current infrastructure configuration. From 3,800 sessions, the application server thread pool runs at 100% utilization and requests begin to queue; past 4,200, p99 latency degrades from 180ms to 1,400ms within 30 seconds.”

Under ISO 25010, this maps directly to the capacity sub-characteristic of performance efficiency: the degree to which the maximum limits of a product or system parameter meet requirements. The Google SRE Book’s Chapter 17 frames this as a reliability engineering obligation, not a pre-launch checkbox [2].

Capacity Testing vs. Load Testing vs. Stress Testing: The Decision Framework Engineers Actually Need

These three test types answer fundamentally different questions. Treating them as interchangeable, a mistake most teams make, leads to running the wrong test for the wrong purpose. Understanding their distinct goals is essential before diving into capacity-specific methodology.

| | Capacity Testing | Load Testing | Stress Testing |
|---|---|---|---|
| Question Answered | What is our system’s maximum? | Do we meet SLAs at expected peak? | How does the system fail beyond limits? |
| Load Profile Shape | Stepped ramp beyond expected peak | Sustained at target concurrency | Spike or sustained beyond ceiling |
| Primary Metric | Throughput at SLA breach point | p95/p99 latency at target load | Recovery time, failure mode |
| Expected Output | Quantified ceiling + first bottleneck | Pass/fail against SLA | Failure behavior + recovery characteristics |

The ISTQB performance testing taxonomy and the IEEE Computer Society’s Practical Performance Testing Principles both treat these as distinct tools for distinct reliability questions. Here’s the practical decision: an e-commerce team two weeks before Black Friday needs a load test to confirm SLA compliance at 10,000 expected concurrent users. But if they’ve never found their ceiling, they need a capacity test first — because Black Friday traffic doesn’t respect forecasts.

Why Software Teams Confuse These Tests — and the Production Incidents That Result

Consider a team that ran a load test at 2,000 concurrent users and measured p99 latency of 140ms. Test passed. Champagne. They shipped.

On launch day, organic traffic hit 4,100 concurrent users. At 3,800 sessions, the database connection pool — configured with a default maximum of 10 connections — saturated completely. New requests queued, then timed out. The application returned HTTP 503 errors for 14 minutes until traffic subsided naturally.

The load test was correct — the system performed well at 2,000 users. But nobody asked what happens at 3,800, or 4,000, or 4,500. That’s the question only a capacity test answers.

Ulrich’s warning applies directly: “Capacity planning reduces the probability of triggering a cascading failure… When you lose major parts of your infrastructure during a planned or unplanned event, no amount of capacity planning may be sufficient to prevent cascading failures [without prior load testing data]” [1].

The Capacity Testing Methodology: A Step-by-Step Engineering Playbook

Most articles on this topic stop at definitions, without a structured, repeatable methodology that specifies inputs, outputs, and decision criteria per phase. This section fills that gap across five phases, applicable whether your stack runs on-prem, hybrid, or fully cloud-native.

Phase 1: Test Planning — Defining Scope, SLAs, and Success Criteria Before You Write a Single Script

The difference between a useful capacity test and a noisy one is the quality of upfront planning. Before scripting, lock down these artifacts:

- System Under Test (SUT) boundary: Which services, databases, and infrastructure components are in scope? A capacity test of “the checkout flow” must include the API gateway, checkout service, payment microservice, and the database — not just one component.

- SLA thresholds: Define “acceptable” in percentile terms: p95 < 300ms, p99 < 500ms, error rate < 0.5%. These should derive from your Service Level Objectives (SLOs), not arbitrary round numbers.

- Peak load scenarios: Historical peak (what you’ve measured), projected growth peak (historical × growth factor), and worst-case event peak (flash sale, viral moment, DDoS-like organic spike).

- Pass/fail criterion: “At what concurrency does the system stop meeting SLAs?” — not “does it work.”

- Environment specification: Documented infrastructure configuration (see Phase 3).

The planning checklist: (1) Test scope document, (2) SLA/SLO reference with percentile thresholds, (3) Workload model with user journey mix, (4) Environment specification with production parity notes, (5) Go/no-go criteria matrix mapping ceiling findings to release decisions.
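One way to keep the SLA thresholds from drifting into “it seems fine” is to encode them as data and evaluate every load step against them. The sketch below is illustrative only: `SlaThresholds`, `percentile`, and `meets_sla` are hypothetical names, the percentile uses the nearest-rank method, and it assumes `latencies_ms` holds one sample per request issued during a load step.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class SlaThresholds:
    # Example thresholds from the planning list: p95 < 300ms, p99 < 500ms, errors < 0.5%.
    p95_ms: float = 300.0
    p99_ms: float = 500.0
    max_error_rate: float = 0.005


def percentile(samples, pct):
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def meets_sla(latencies_ms, error_count, sla=SlaThresholds()):
    """True if one load step satisfies every threshold in `sla`."""
    if not latencies_ms:
        raise ValueError("no samples")
    error_rate = error_count / len(latencies_ms)
    return (
        percentile(latencies_ms, 95) < sla.p95_ms
        and percentile(latencies_ms, 99) < sla.p99_ms
        and error_rate < sla.max_error_rate
    )
```

Evaluating each step of the ramp with the same function turns the pass/fail criterion into “the first step where `meets_sla` returns `False`.”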

Phase 2: Workload Modeling — Calculating Concurrent Users and Designing Realistic Load Profiles

Most teams get workload modeling wrong by guessing at concurrency numbers. Use Little’s Law instead: L = λ × W, where L is concurrent users (or sessions), λ is the arrival rate (sessions per second), and W is the average session duration (in seconds).

Worked example: your analytics show a peak arrival rate of 500 new sessions per second, and the average session lasts 8 seconds. Concurrent sessions at peak: 500 × 8 = 4,000. That’s your load test target; your capacity test must ramp well beyond it. Accurate workload modeling is the foundation of any meaningful capacity test.
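The Little’s Law arithmetic is small enough to keep next to your workload model. This is a minimal sketch; the function names are made up for illustration, and it assumes the arrival rate and session duration are averages over the same peak window.

```python
def concurrent_sessions(arrival_rate_per_s, avg_session_s):
    """Little's Law: L = lambda * W."""
    return arrival_rate_per_s * avg_session_s


def required_arrival_rate(target_concurrency, avg_session_s):
    """Inverse form: the arrival rate a load generator needs to hold a target concurrency."""
    return target_concurrency / avg_session_s


print(concurrent_sessions(500, 8))     # the worked example: 500 sessions/s, 8 s each
print(required_arrival_rate(6000, 8))  # arrival rate needed to hold 150% of that peak
```

The inverse form is the one you typically need when configuring a load generator that is driven by arrival rate rather than by a fixed number of virtual users.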

Alejandro Forero Cuervo warns in the Google SRE Book: “Modeling capacity as ‘queries per second’… often makes for a poor metric” [3]. Your workload model must account for session complexity — a user browsing product pages generates different resource profiles than a user completing a checkout with payment processing. Model the realistic mix.

For the ramp-up profile, avoid jumping directly to peak. Use a stepped ramp: 25% → 50% → 75% → 100% → 125% → 150% of expected peak, holding each step for 3-5 minutes. This reveals the exact inflection point where degradation begins. A sudden jump to peak load masks gradual bottleneck onset.
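The stepped ramp can be generated rather than hand-written, which keeps step sizes consistent between runs. The sketch below is tool-agnostic and hypothetical: `stepped_ramp` is not part of any load testing product, and the 240-second hold is simply one value inside the 3-5 minute range.

```python
def stepped_ramp(expected_peak, fractions=(0.25, 0.50, 0.75, 1.00, 1.25, 1.50), hold_s=240):
    """Return one dict per load step: start/end offsets in seconds and target concurrency."""
    schedule = []
    start_s = 0
    for fraction in fractions:
        schedule.append({
            "start_s": start_s,
            "end_s": start_s + hold_s,
            "target_users": round(expected_peak * fraction),
        })
        start_s += hold_s
    return schedule


for step in stepped_ramp(expected_peak=4000):
    print(step)
```

Feeding the resulting schedule into whatever load tool you use is left out here, since that mapping depends on the tool’s own scenario format.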

Include think-time between user actions (typically 3-10 seconds between clicks) to avoid artificially synchronized request volleys that don’t represent real traffic patterns.

Phase 3: Environment Configuration — Why “Close Enough” Test Environments Produce Misleading Ceilings

A capacity test is only as valid as the environment it runs in. Testing with a 10GB dataset when production holds 500GB can understate query latency several-fold: the smaller dataset fits largely in memory, and scans and joins touch far fewer pages, even though B-tree index depth itself grows only logarithmically with row count.

Environment parity checklist:

- Hardware/instance tier: Same CPU, memory, and disk I/O specifications as production

- Database connection pool settings: Same max pool size, connection timeout, and idle timeout values

- Data volume: Production-representative dataset size (or a statistically proportional sample)

- Caching configuration: Same cache sizes, eviction policies, and TTLs — cold caches produce different ceilings than warm ones

- Network topology: Same load balancer configuration, inter-service latency, and geographic distribution

- Monitoring overhead: Production runs APM agents, log collectors, and metrics exporters that consume 3-8% of CPU — include them in the test environment

Each of these parity decisions directly affects the validity of your capacity ceiling findings, so record every known deviation from production alongside the test results.

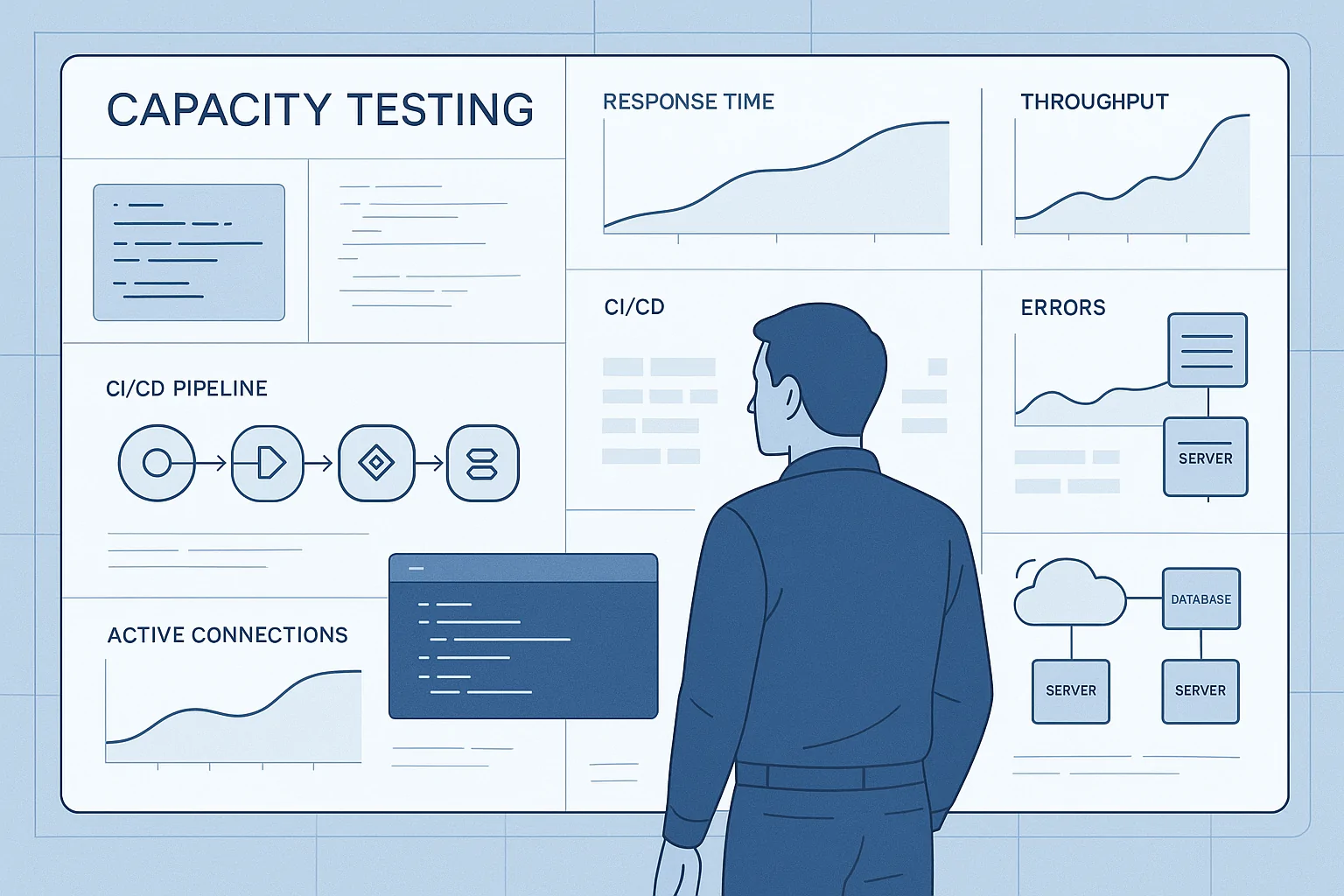

Phase 4: Execution and Monitoring — What to Watch While the Load Is Running

During execution, monitor four metric tiers simultaneously — this is where you’ll spot the ceiling as it forms:

- Application-level: Response time percentiles (p50, p95, p99), throughput (requests/sec), HTTP error rate. When p99 begins climbing disproportionately to load increases, you’ve entered the saturation zone.

- Infrastructure-level: CPU utilization (user-space vs. kernel-space — high kernel-space indicates thread contention), memory utilization, GC pause frequency and duration. CPU sustained above 85% for more than 60 seconds at a given load step signals you’re near the CPU ceiling.

- Middleware-level: Thread pool utilization (active threads / max threads), connection pool checkout rate, message queue depth. A thread pool at 95% utilization with rising queue depth means the next load increment will breach the ceiling.

- Database-level: Active connections vs. pool maximum, average query execution time, lock wait events, deadlock count.

Forero Cuervo’s guidance holds here: “A better solution is to measure capacity directly in available resources. For example, you may have a total of 500 CPU cores and 1 TB of memory reserved for a given service in a given datacenter” [3]. Monitor the actual resources, not just the application-level symptoms. Understanding which performance metrics matter most — and how to interpret them under increasing load — is what separates a diagnostic capacity test from a data-collection exercise.
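The “sustained above 85% for more than 60 seconds” rule from the infrastructure tier is easy to misjudge by eye on a dashboard, so it can help to check it mechanically. The following is a sketch under assumptions: `sustained_above` is a hypothetical helper, and `samples` is assumed to be a time-ordered list of `(timestamp_s, cpu_pct)` pairs exported from your monitoring system.

```python
def sustained_above(samples, threshold=85.0, min_duration_s=60.0):
    """True if the metric stayed above `threshold` for more than `min_duration_s` without a dip."""
    run_start = None
    for timestamp_s, value in samples:
        if value > threshold:
            if run_start is None:
                run_start = timestamp_s  # first sample of a new above-threshold run
            if timestamp_s - run_start > min_duration_s:
                return True
        else:
            run_start = None  # any dip resets the run
    return False
```

The same function applies to other tiers (thread pool utilization, connection pool usage) by passing a different threshold and sample series.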

The “hockey stick” pattern is your primary diagnostic signal: response times remain flat or grow linearly across early load steps, then suddenly curve upward exponentially. That inflection point is your ceiling.
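A simple heuristic can flag the hockey stick automatically: compare the latency slope between consecutive load steps against the slope observed across the first two steps. This is a rough sketch, not a standard algorithm; `find_knee`, the 3× `slope_factor`, and the `min_baseline` floor (which guards against a perfectly flat start) are all assumptions to tune for your system, and the sample numbers are invented.

```python
def find_knee(steps, slope_factor=3.0, min_baseline=0.01):
    """steps: (concurrent_users, p99_ms) pairs sorted by load.

    Returns the load of the last step before the latency slope exceeds
    slope_factor times the baseline slope, or None if no knee is found.
    """
    if len(steps) < 3:
        return None
    (u0, l0), (u1, l1) = steps[0], steps[1]
    baseline = max((l1 - l0) / (u1 - u0), min_baseline)  # ms per added user
    for (ua, la), (ub, lb) in zip(steps[1:], steps[2:]):
        slope = (lb - la) / (ub - ua)
        if slope > slope_factor * baseline:
            return ua
    return None


ramp = [(1000, 120), (2000, 130), (3000, 142), (4000, 160), (5000, 450), (6000, 1400)]
print(find_knee(ramp))
```

With coarse steps the knee is only bracketed between two load levels; rerunning with finer steps inside that bracket narrows it down.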

Phase 5: Analysis and Reporting — Turning Raw Numbers Into Infrastructure Decisions

Identify the ceiling as the load step where p99 latency first breached your SLA threshold. Structure your capacity report around four elements:

- Quantified ceiling: “4,200 concurrent sessions at current configuration”

- First-bottleneck resource: “Thread pool exhausted at 3,800 sessions”

- Safe operating threshold: Typically 70-80% of ceiling, which leaves 20-30% headroom for spikes and forecast error; in this case, 2,940-3,360 concurrent sessions

- Go/no-go recommendation: Green (ceiling > 2× expected peak), Yellow (ceiling is 1.2-2× expected peak), Red (ceiling < 1.2× expected peak; do not ship without remediation)
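The report arithmetic can be captured in two small functions so every run is classified the same way. This is a sketch with hypothetical names; it takes the conservative reading that any ceiling below 1.2× expected peak is Red.

```python
def safe_operating_range(ceiling, low=0.70, high=0.80):
    """Return the (low, high) safe operating band as whole sessions."""
    return round(ceiling * low), round(ceiling * high)


def go_no_go(ceiling, expected_peak):
    """Classify the measured ceiling against expected peak load."""
    ratio = ceiling / expected_peak
    if ratio > 2.0:
        return "green"
    if ratio >= 1.2:
        return "yellow"
    return "red"  # assumption: everything under 1.2x is treated as red


print(safe_operating_range(4200))
print(go_no_go(ceiling=4200, expected_peak=3000))
```

Keeping the classification in code also makes it straightforward to fail a pipeline stage when a release moves the system from Green to Yellow.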

As Ulrich states: “Load testing also reveals where the breaking point is, knowledge that’s fundamental to the capacity planning process. It enables you to test for regressions, provision for worst-case thresholds, and to trade off utilization versus safety margins” [1].

If your ceiling is Yellow or Red, the report must include the specific remediation path (horizontal scaling, connection pool tuning, caching introduction, or code optimization) tied to the identified bottleneck resource. Systematic bottleneck diagnosis complements this capacity analysis workflow.

Identifying Your System’s Ceiling: Resource Exhaustion Patterns Every Engineer Should Know

The ceiling isn’t abstract — it’s always a specific resource hitting its limit first. Forero Cuervo learned this at Google: “modeling capacity as ‘queries per second’… often makes for a poor metric… A better solution is to measure capacity directly in available resources” [3]. Here are the five patterns your capacity tests must diagnose.

CPU Saturation: The Ceiling Most Teams Hit First (and How to Spot It Early)

In compute-bound applications (complex JSON serialization, cryptographic operations, image processing), CPU saturation is typically the first ceiling. Observable during a ramp: throughput plateaus, response times climb disproportionately, and CPU utilization holds at 85-95% sustained.

Watch the split between user-space CPU (your application logic) and kernel-space CPU (OS-level context switching). High kernel-space CPU at moderate load levels indicates thread contention — threads fighting over shared locks rather than doing useful work.

Concrete example: a REST API processing complex JSON payloads on a 4-core instance may reach its CPU ceiling at 2,000 req/sec while memory and network sit at 40% utilization. The fix isn’t more memory — it’s horizontal scaling or profiling the hot code path.

Memory Exhaustion and GC Pressure: The Slow Collapse That Capacity Tests Catch and Load Tests Miss

Memory exhaustion is insidious because it manifests only after sustained load — invisible in a five-minute load test but detectable in a capacity ramp with adequate hold phases at each step.

For JVM-based applications: heap utilization above 90% with full GC pauses exceeding 500ms signals a critical capacity ceiling. “GC thrashing” — where the JVM spends more time collecting memory than executing business logic — is the terminal stage. The Google SRE Book notes that “a given program may evolve to need 32 GB of memory when it formerly only needed 8 GB” [2], making this a regression that capacity tests must catch on every release.

Soak testing complements capacity testing here: soak catches slow leaks over hours; capacity ramps catch memory ceilings under concurrent load spikes.

Database Connection Pool Limits: The Hidden Ceiling That Causes Most Checkout and API Failures

This is the most underdiagnosed capacity ceiling in web applications. The mechanics: a connection pool (e.g., HikariCP, default max: 10 connections) services all database requests. When all connections are checked out, new requests queue. If the queue wait exceeds the connection timeout (typically 30 seconds), the request fails.

The sizing formula follows from Little’s Law: minimum pool size = arrival rate of DB-bound requests (per second) × average time each request holds a connection (in seconds).

Worked example: 200 checkout requests per second, each holding a connection for a 50ms database query. Minimum pool: 200 × 0.05 = 10 connections, exactly HikariCP’s default, with zero headroom. At any sustained rate above 200 requests per second, work arrives faster than the pool can serve it: the wait queue grows without bound, and connection timeouts begin firing once queue wait exceeds the configured timeout.
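The pool sizing arithmetic can be sketched as below, with load expressed as an arrival rate in requests per second and hold time in milliseconds to avoid floating-point surprises. The function names are illustrative and are not part of HikariCP or any other pool library.

```python
import math


def min_pool_size(requests_per_s, hold_ms):
    """Little's Law: average connections in use = arrival rate * hold time."""
    return math.ceil(requests_per_s * hold_ms / 1000)


def pool_utilization(requests_per_s, hold_ms, pool_size):
    """Offered load relative to pool capacity; above 1.0 the wait queue grows without bound."""
    return requests_per_s * hold_ms / 1000 / pool_size


print(min_pool_size(200, 50))         # connections needed on average, with no headroom
print(pool_utilization(400, 50, 10))  # doubling the arrival rate on the same pool
```

Because this is an average, real pools need headroom on top of the minimum to absorb bursts and slow queries; the capacity ramp is what tells you how much.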

WebLOAD can monitor JDBC connection pool utilization as a server-side metric during the capacity ramp, making pool exhaustion visible as a leading indicator before end-user requests start failing.

Thread Pool Depletion and Network I/O Saturation: Two More Ceilings Your Tests Must Cover

Thread pool depletion is common in synchronous/blocking server frameworks. Tomcat’s default maximum is 200 threads — at 200 concurrent long-running requests (e.g., each waiting on a downstream service for 2 seconds), the pool is full. Request 201 queues. Thread exhaustion (pool full) is distinct from thread contention (threads competing for a shared lock) — the former is a capacity limit, the latter is a concurrency design problem.

Network I/O saturation presents a distinctive signature: throughput plateaus at a fixed Mbps while p99 latency climbs, but CPU remains below 50%. This signals bandwidth or connection saturation, not compute saturation. Ulrich notes that “load balancing problems, network partitions, or unexpected traffic increases can create pockets of high load beyond what was planned” [1] — making network-layer capacity testing a prerequisite, not an afterthought.
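The signatures in this section can be combined into a first-pass triage of a single load step. The sketch below uses only thresholds mentioned above (pool fully checked out, heap above 90% with full GC pauses over 500ms, CPU at 85% or more, flat throughput with rising p99 and CPU under 50%). The `StepSnapshot` fields and the order of the checks are assumptions, and a real diagnosis should confirm the result against raw metrics.

```python
from dataclasses import dataclass


@dataclass
class StepSnapshot:
    cpu_pct: float
    heap_pct: float
    full_gc_pause_ms: float
    db_active: int
    db_pool_max: int
    threads_active: int
    threads_max: int
    throughput_flat: bool  # throughput stopped growing versus the previous step
    p99_rising: bool


def first_bottleneck(s):
    """Return a label for the most likely exhausted resource at this load step."""
    if s.db_active >= s.db_pool_max:
        return "db_connection_pool"
    if s.threads_active >= s.threads_max:
        return "thread_pool"
    if s.heap_pct > 90 and s.full_gc_pause_ms > 500:
        return "memory_gc"
    if s.cpu_pct >= 85:
        return "cpu"
    if s.throughput_flat and s.p99_rising and s.cpu_pct < 50:
        return "network_io"
    return "undetermined"
```

Pool checks come first here because an exhausted pool often stalls the system while CPU and memory still look healthy.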

Capacity Testing in the Age of Cloud Auto-Scaling: Don’t Let Your Infra Outrun Your Tests

Cloud auto-scaling doesn’t eliminate the need for capacity testing — it changes what you’re testing. Without capacity data, your auto-scaling policies are configured based on guesses: “Scale out at 70% CPU” sounds reasonable, but if your actual ceiling is database connection pool exhaustion at 60% CPU, scaling more compute instances won’t help.

Capacity testing in cloud environments answers three specific questions:

- What is the ceiling of a single instance or pod? This determines your scaling unit — the capacity each new instance adds.

- Do your scaling triggers fire before the ceiling is reached? If CPU-based scaling triggers at 70% but your ceiling is thread pool exhaustion at 55% CPU, the trigger fires too late, or never fires at all because CPU stops climbing once the pool is exhausted.

- Does scaled-out capacity actually increase linearly? Shared resources (database, cache, message broker) may become the new ceiling when you add compute. Scaling from 3 to 6 application instances doubles compute but does nothing for a database connection pool that’s already saturated at 3 instances.
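The shared-resource question can be checked on paper before the ramp runs. The sketch below assumes each application instance holds its own connection pool and that the database enforces a hard connection limit; the function names and the numbers in the example (pool of 20 per instance, limit of 100) are hypothetical.

```python
def total_db_connections(instances, pool_size_per_instance):
    """Worst-case connections opened against the database when every pool is full."""
    return instances * pool_size_per_instance


def scale_out_fits_database(instances, pool_size_per_instance, db_max_connections, reserved=0):
    """True if all pools can fill without exceeding the database's connection limit."""
    usable = db_max_connections - reserved
    return total_db_connections(instances, pool_size_per_instance) <= usable


print(scale_out_fits_database(3, 20, 100))  # 60 connections against a limit of 100
print(scale_out_fits_database(6, 20, 100))  # 120 connections against the same limit
```

If the second check fails, the auto-scaling maximum, the per-instance pool size, or the database limit has to change before scale-out adds any real capacity.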

The methodology: run your stepped capacity ramp against a single instance to find the per-instance ceiling. Then enable auto-scaling, run the ramp again, and verify that new instances come online before the first instance breaches its SLA threshold. Measure the scaling lag — the time between trigger firing and new instance readiness. If scaling lag is 90 seconds and your traffic ramp reaches ceiling in 60 seconds, auto-scaling arrives too late.
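The scaling-lag comparison reduces to a few lines of arithmetic once you have measured the lag and the traffic growth rate. This is a sketch under a simplifying assumption of linear growth after the trigger fires; the function names and the example figures (trigger at 2,940 sessions, 21 new sessions per second) are invented for illustration.

```python
def time_to_ceiling_s(load_at_trigger, ceiling, growth_per_s):
    """Seconds until load reaches the ceiling, assuming linear growth."""
    if growth_per_s <= 0:
        return float("inf")
    return max(0.0, (ceiling - load_at_trigger) / growth_per_s)


def scaling_arrives_in_time(load_at_trigger, ceiling, growth_per_s, scaling_lag_s):
    """True if new capacity is ready before the existing capacity hits its ceiling."""
    return scaling_lag_s < time_to_ceiling_s(load_at_trigger, ceiling, growth_per_s)


def latest_safe_trigger_load(ceiling, growth_per_s, scaling_lag_s):
    """Highest load at which the trigger can fire and still beat the ceiling."""
    return ceiling - growth_per_s * scaling_lag_s


print(scaling_arrives_in_time(2940, 4200, 21, scaling_lag_s=90))
print(latest_safe_trigger_load(4200, 21, scaling_lag_s=90))
```

The last function is the useful one for policy tuning: it converts a measured lag into the load level where the scaling trigger has to fire.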

RadView’s platform supports capacity test execution against both cloud and on-premises environments, enabling teams to validate auto-scaling behavior with the same test scripts and analytics they use for single-instance ceiling identification.

Frequently Asked Questions

- Is the 80% safe operating threshold always the right capacity target?

- Not always. The 80% guideline (operate at no more than 80% of your tested ceiling) works for steady-state traffic patterns with predictable variance. But if your traffic is spiky (think flash sales, breaking news events, or batch job overlap), you need more headroom. For systems with sub-second spike-to-peak characteristics, a 60-65% operating threshold is more appropriate because auto-scaling or manual intervention can’t respond fast enough to absorb a 40% traffic surge: from 80% utilization that surge lands at 112% of the ceiling, while from 65% it peaks at 91%.

- Should capacity tests run against production or a staging environment?

- Ideally, both, for different purposes. Staging capacity tests identify ceilings and bottlenecks before code reaches production. Production capacity tests (run during low-traffic windows with traffic shadowing or synthetic load) validate that staging findings hold under real infrastructure conditions. The key requirement is environment parity: a reduced dataset tends to inflate the staging ceiling and give false confidence, while smaller instance types understate it, and either way the number no longer describes production.

- How frequently should capacity tests run — every sprint, every release, or quarterly?

- Run a baseline capacity test when infrastructure changes (new instance types, database migration, connection pool reconfiguration). Re-run when workload characteristics shift (new API endpoints, changed query patterns, added microservice dependencies). For teams shipping weekly, integrating a lightweight capacity regression test into CI/CD — ramping to 120% of the last known ceiling and checking for SLA breach point regression — catches performance degradation without requiring a full ceiling-discovery test every sprint.

- Can I model capacity purely from APM data without running a dedicated capacity test?

- APM data tells you how the system behaves at current traffic levels — it doesn’t tell you where the ceiling is. Extrapolating from production APM metrics to predict behavior at 3× current load introduces compounding errors because resource contention, GC pressure, and connection pool dynamics behave non-linearly under load. APM is excellent for monitoring and alerting; capacity testing is required for ceiling discovery. They complement, not replace, each other.

- Is 100% capacity test coverage of every microservice worth the investment?

- Rarely. Apply the Pareto principle: identify the 3-5 services on the critical user path (login, search, checkout, payment) and capacity test those thoroughly. For non-critical services, load testing at expected peak is sufficient. Full capacity test coverage across 50+ microservices produces diminishing returns and creates a testing bottleneck that slows delivery — the opposite of the goal.

References and Authoritative Sources

- [1] Ulrich, M. (2017). Chapter 22 – Addressing Cascading Failures. In B. Beyer, C. Jones, J. Petoff, & N.R. Murphy (Eds.), Site Reliability Engineering: How Google Runs Production Systems. O’Reilly Media. Retrieved from https://sre.google/sre-book/addressing-cascading-failures/

- [2] Perry, A., & Luebbe, M. (2017). Chapter 17 – Testing for Reliability. In B. Beyer, C. Jones, J. Petoff, & N.R. Murphy (Eds.), Site Reliability Engineering: How Google Runs Production Systems. O’Reilly Media. Retrieved from https://sre.google/sre-book/testing-reliability/

- [3] Forero Cuervo, A. (2017). Chapter 21 – Handling Overload. In B. Beyer, C. Jones, J. Petoff, & N.R. Murphy (Eds.), Site Reliability Engineering: How Google Runs Production Systems. O’Reilly Media. Retrieved from https://sre.google/sre-book/handling-overload/