Picture this: it’s 11 PM the night before a major release, and your performance engineer discovers the legacy load testing tool can’t trigger from the GitHub Actions pipeline that the rest of the team has been using for six months. The test has to run manually, from a dedicated Windows workstation, no less, and by the time results come back, the release window has closed. The post-mortem doesn’t blame the engineer. It blames the tool.

This scenario is more common than it should be. And the stakes are concrete: BigPanda’s 2024 incident cost analysis pegged average downtime at $23,750 per minute [1]. When your load testing tool is the reason you ship blind, or don’t ship at all, the cost isn’t theoretical.

LoadRunner earned its place in enterprise performance testing over two decades. But the engineering world it was built for – waterfall releases, dedicated QA labs, weeks-long test cycles – is not the world most teams operate in today. Licensing costs have compounded, VuGen scripting remains a specialized skill, and native CI/CD integration was never a first-class design priority. The steady year-over-year growth in searches for “LoadRunner alternative” isn’t a trend; it’s a signal that teams are actively looking for tools that match how they actually build and ship software.

This guide doesn’t rehash a generic feature list. It walks through the specific capabilities (scripting flexibility, scalability architecture, CI/CD integration depth, and reporting quality) that separate modern load testing tools from legacy ones. Each section maps to a real evaluation criterion you can apply immediately, whether you’re a QA lead comparing vendors, an SRE pushing for observability integration, or a DevOps manager who needs pipeline-native performance gates.

- Why Teams Are Moving On from LoadRunner (And What They Actually Need Instead)

- Feature #1: Scripting Flexibility and Protocol Support

- Feature #2: Scalability—From 100 to 1,000,000 Virtual Users Without Breaking the Bank

- Feature #3: CI/CD and DevOps Integration—The Make-or-Break Capability for Modern Teams

- Feature #4: Reporting and Analytics That Actually Tell You Something

- Conclusion

- References

Why Teams Are Moving On from LoadRunner (And What They Actually Need Instead)

The total cost of a legacy load testing tool extends well beyond the license invoice. LoadRunner’s per-virtual-user licensing model means simulating 10,000 concurrent users can cost multiples of what consumption-based or open-source alternatives charge for equivalent capacity. But the hidden costs are often larger: engineering hours spent maintaining VuGen scripts that break with every application update, weeks of lead time to procure and configure on-premise hardware clusters for large-scale tests, and slow feedback cycles that delay release decisions.

DORA’s 2023 Accelerate State of DevOps Report found that teams using flexible cloud infrastructure achieve 30% higher organizational performance than those relying on inflexible, hardware-dependent setups [2]. When your load testing infrastructure requires manual provisioning, you’re not just paying for hardware, you’re paying for the organizational drag that hardware-dependent workflows impose on every release.

What ‘Modern’ Actually Means for a Load Testing Tool in 2026

A modern load testing tool is defined by three non-negotiable baselines:

- Native CLI execution for headless CI pipeline runs: no GUI dependency, no desktop license required on a build agent.

- Elastic scaling to at least 50,000 virtual users without manual infrastructure provisioning: spin up, run, tear down, and pay only for what you used.

- Out-of-the-box integrations or documented APIs for Jenkins, GitHub Actions, and Azure DevOps: not “you can call it from a shell script” but actual plugins, result publishing, and threshold-gate logic.

Modern enterprise environments need tools that fit inside agile and DevOps workflows rather than sitting outside them, as DORA’s research on the continuous testing capability outlines.

Who This Guide Is For: Mapping Reader Roles to Evaluation Priorities

Different roles weigh these features differently. Before you read further, find your lane:

- QA Leads: Prioritize scripting flexibility and test methodology coverage (Feature #1). Your team’s adoption speed depends on it.

- SREs: Focus on reporting, real-time analytics, and threshold alerting (Feature #4). You need to know when p99 crosses your SLO during the test, not after.

- DevOps Managers: CI/CD plugin ecosystem and pipeline-native execution (Feature #3) are your top criteria. If the tool can’t run headless in your pipeline, it doesn’t exist.

- IT Architects: Evaluate pricing model and scalability architecture (Feature #2). The five-year TCO difference between per-VU licensing and consumption-based models can be six figures.

Feature #1: Scripting Flexibility and Protocol Support

Scripting is where the daily experience of using a load testing tool lives. The choice between a code-first, GUI-driven, or hybrid scripting approach determines how fast your team ramps up, how maintainable your test scripts remain over months, and how effectively you can simulate complex, multi-step user journeys.

Here’s a quick comparison across major tools:

| Capability | WebLOAD | JMeter | k6 | Gatling | LoadRunner |
|---|---|---|---|---|---|
| Scripting Language | JavaScript | Java/Groovy (JSR223) | JavaScript/TypeScript | Scala/Java/Kotlin | C, Java, VuGen |
| GUI Recorder | Yes | Yes (limited) | No | No | Yes (VuGen) |
| Protocol Breadth | HTTP/S, WebSocket, gRPC, MQTT, JDBC, SOAP, REST, and dozens more | HTTP/S, FTP, JDBC, LDAP (plugins for others) | HTTP/S, WebSocket, gRPC | HTTP/S, WebSocket, JMS | 50+ protocols |
| Version-Control Friendly | Yes (text-based scripts) | Partial (XML-based .jmx) | Yes | Yes | Partial |

Code-First vs. GUI-Driven vs. Hybrid: Which Scripting Approach Fits Your Team?

Code-first tools (k6, Gatling, Locust) treat test scripts as production code: version-controlled, peer-reviewed, and fully composable. k6’s 29.9k GitHub stars and Locust’s 27.5k stars reflect strong community adoption among developer-heavy teams [5]. The trade-off: a QA team without strong coding skills may spend weeks scripting a complex user journey that a GUI recorder could capture in hours.

GUI-driven tools lower the barrier for manual QA teams but often produce brittle, monolithic scripts that are difficult to parameterize or modularize after recording.

Hybrid tools offer both paths. WebLOAD, for example, provides a visual recorder that generates editable JavaScript, a language most web engineers already know, so teams can start with a recording and progressively enhance it with custom logic, dynamic data feeds, and conditional branching. JMeter offers a similar hybrid approach, though its XML-based test plans (.jmx files) are notably less readable in code review than text-based scripts. Apache JMeter’s official best-practices documentation devotes explicit guidance to scripting choices, acknowledging that they affect test execution overhead [4].

Engineer’s Perspective: The real scripting question isn’t which language the tool uses – it’s how long it takes a new team member to write a maintainable script without tribal knowledge.

Protocol Coverage: Why Breadth Matters More Than You Think

If your tool only supports HTTP/S and your production architecture includes gRPC inter-service calls, WebSocket-based real-time dashboards, or MQTT telemetry from IoT devices, your load test is simulating a fictional version of your system.

Concrete protocol-to-use-case mapping:

- WebSockets: Real-time chat, live sports scores, collaborative editing. Without WebSocket support, you can’t simulate persistent bidirectional connections. Learn more about WebSocket in Understanding WebSockets: TCP vs. UDP Explained.

- gRPC: Microservices communication (Google, Netflix, and Spotify use it extensively). HTTP/1.1-only tools can’t replicate the multiplexed HTTP/2 streams that gRPC depends on.

- MQTT: IoT device simulation, smart home platforms, industrial sensors, connected vehicles. Requires tools supporting lightweight pub/sub protocols.

- JDBC: Direct database load testing, validating query performance under concurrent pressure, bypassing the application layer entirely.

If your tool can’t simulate the protocol your real users generate, your load test results aren’t measuring what you think they are.

Script Maintainability and AI-Assisted Correlation: The Feature Most Comparisons Ignore

Here’s the long-term cost most evaluations miss: script maintenance. When your application changes – a new login flow, an updated session token format, a modified API response structure – dynamic correlation is what breaks first. Correlation means extracting runtime values (like a JSESSIONID or CSRF token) from one response and injecting them into subsequent requests. When done manually, it’s tedious and fragile. When the token format changes, every correlated script breaks.
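To make the mechanics concrete, here is a minimal sketch of manual correlation. It assumes a hypothetical login page that embeds a CSRF token in a hidden form field named csrf_token; the HTML, the field name, and the helper functions are illustrative only and not taken from any particular tool.

```python
import re

# Hypothetical response body from a login page (illustrative only).
LOGIN_PAGE = """
<form action="/login" method="post">
  <input type="hidden" name="csrf_token" value="a1b2c3d4e5">
  <input type="text" name="username">
</form>
"""

# The pattern is tied to today's markup; if the field is renamed or the
# attribute order changes, extraction fails and the script breaks.
CSRF_PATTERN = re.compile(r'name="csrf_token"\s+value="([^"]+)"')


def extract_csrf_token(body: str) -> str:
    """Pull the runtime token out of a response body."""
    match = CSRF_PATTERN.search(body)
    if match is None:
        raise ValueError("csrf_token not found; the page markup may have changed")
    return match.group(1)


def build_login_payload(body: str, username: str, password: str) -> dict:
    """Inject the extracted token into the next request's form data."""
    return {
        "csrf_token": extract_csrf_token(body),
        "username": username,
        "password": password,
    }


payload = build_login_payload(LOGIN_PAGE, "test_user", "not-a-real-password")
print(payload["csrf_token"])  # a1b2c3d4e5
```

The fragility is visible in the regular expression: it encodes one specific page layout, which is why a token format change tends to break every script that shares the pattern.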

AI-assisted correlation automates the detection and extraction of these dynamic values. RadView’s platform, for example, uses intelligent correlation to identify session-bound parameters during recording and automatically parameterize them, reducing the manual effort that historically consumed 20–30% of a performance test sprint.

What QA Leads Should Know: Correlation failures are one of the top three reasons load test scripts break in production-like environments. If your tool doesn’t automate this, budget significant sprint time for maintenance every release cycle.

Feature #2: Scalability—From 100 to 1,000,000 Virtual Users Without Breaking the Bank

Scalability in load testing isn’t just about hitting a number – it’s about hitting that number accurately, affordably, and repeatably.

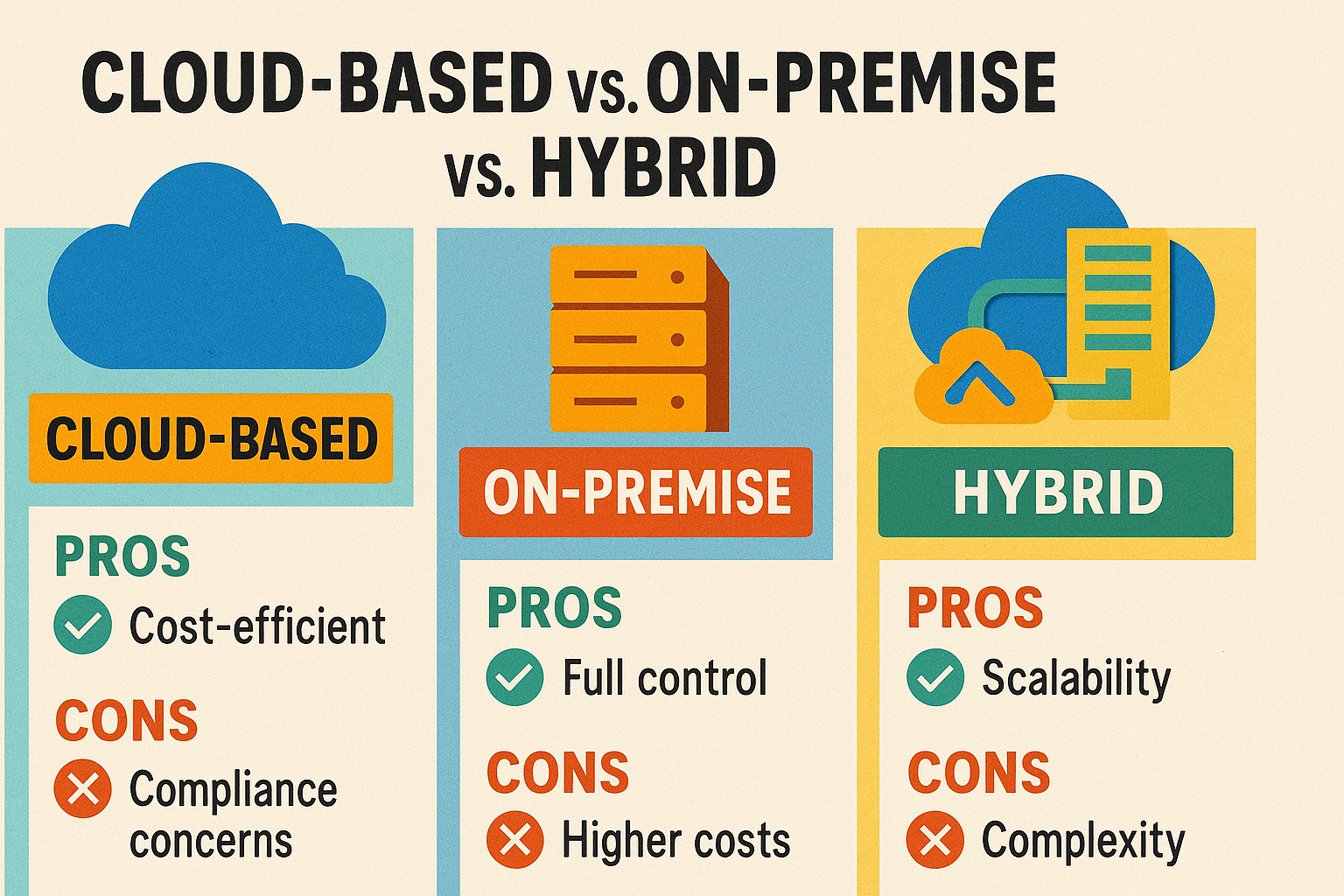

Cloud vs. On-Premise vs. Hybrid: Choosing the Deployment Model That Fits Your Reality

Each deployment model solves a different constraint:

- Pure Cloud: Best for teams with variable load testing needs. Run a 200,000-VU Black Friday simulation for four hours, then pay nothing until next quarter. No hardware to maintain, no capacity to over-provision.

- On-Premise: Required when data residency rules prohibit routing test traffic through public cloud. A healthcare SaaS provider running HIPAA-governed synthetic patient data through load tests can’t use shared cloud infrastructure without significant compliance overhead.

- Hybrid: Run daily regression tests on local infrastructure; burst to cloud for peak-capacity simulations. WebLOAD supports this model natively, letting teams avoid the binary choice between cloud convenience and on-premise control.

DORA’s 2023 research supports the strategic value here: public cloud adoption increases infrastructure flexibility (the on-demand, elastic characteristics described in NIST’s cloud computing definition), and that flexibility is what drives higher organizational performance [2].

Elastic Scaling in Practice: What Happens When Your Test Peaks at 3AM?

Consider a concrete scenario: your e-commerce platform needs to validate checkout performance under 500,000 concurrent users to prepare for a flash sale. With on-premise infrastructure, you’d need to provision, configure, and network dozens of dedicated load generator machines, a process that takes weeks and costs tens of thousands in hardware that sits idle 95% of the year.

With elastic cloud scaling, you define the target VU count, specify geographic distribution (e.g., 60% US-East, 25% EU-West, 15% AP-Southeast), and the platform auto-provisions load generators, runs the test, and tears down infrastructure on completion. The entire provisioning cycle takes minutes, not weeks. You pay for four hours of compute, not twelve months of rack space.
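The arithmetic behind such a plan can be sketched in a few lines. The target and regional split come from the scenario above; the per-generator capacity of 5,000 VUs is an assumption for illustration only, since real capacity depends on the script, the protocol, and the instance size.

```python
TARGET_VUS = 500_000
REGION_SHARE_PCT = {"us-east": 60, "eu-west": 25, "ap-southeast": 15}
VUS_PER_GENERATOR = 5_000  # assumed capacity; measure your own generators


def plan_generators(target_vus: int, share_pct: dict, vus_per_generator: int) -> dict:
    """Split the target VU count by region and size the generator fleet."""
    plan = {}
    for region, pct in share_pct.items():
        vus = target_vus * pct // 100
        # Ceiling division so a partial generator's worth of VUs still gets a machine.
        generators = -(-vus // vus_per_generator)
        plan[region] = {"vus": vus, "generators": generators}
    return plan


plan = plan_generators(TARGET_VUS, REGION_SHARE_PCT, VUS_PER_GENERATOR)
for region, sizing in plan.items():
    print(region, sizing)
```

Under these assumptions the test needs 100 generators in total (60, 25, and 15 per region), which is the fleet an elastic platform would provision and tear down on your behalf.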

Coordinated Omission and Result Accuracy at Scale: The Technical Trap Most Teams Fall Into

Scaling up virtual users introduces a measurement accuracy problem that most teams don’t discover until results stop matching production behavior. Apache JMeter’s official User Manual warns directly: “if you don’t correctly size the number of threads, you will face the Coordinated Omission problem which can give you wrong or inaccurate results” [4].

Here’s what happens technically: when a load generator thread is saturated, outgoing requests queue up locally before they are sent. The tool times each request from the moment it is actually sent, so it captures the server’s response time but not the time the request spent waiting in the local queue. The result: your p99 latency report shows 200ms, but actual user-perceived latency is 800ms+ because requests waited 600ms before they were even sent.
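A deterministic toy model shows the gap. It assumes a single generator thread that intends to send one request every 100ms while each request takes 200ms to serve; the function and its parameters are illustrative and do not describe how any specific tool schedules requests.

```python
def simulate(service_times_ms, interval_ms):
    """Model one generator thread that intends to send a request every interval_ms.

    Returns two lists of latencies: what a naive tool reports (send to response)
    and what a user on the intended schedule would experience.
    """
    clock = 0
    naive, corrected = [], []
    for i, service in enumerate(service_times_ms):
        intended_start = i * interval_ms
        # If the thread is still busy, the request waits in the local queue.
        actual_start = max(clock, intended_start)
        finish = actual_start + service
        naive.append(finish - actual_start)        # excludes the queue wait
        corrected.append(finish - intended_start)  # includes the queue wait
        clock = finish
    return naive, corrected


naive, corrected = simulate([200] * 7, interval_ms=100)
print(max(naive))      # 200
print(max(corrected))  # 800
```

Measuring from the intended send time rather than the actual one is the usual correction; adding load generator capacity so the thread never falls behind avoids the problem at its source.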

What QA Leads Should Know: If your load test results show stable p99 latency at scale but real users are complaining, Coordinated Omission is a likely culprit. Verify your load generators aren’t saturated before trusting aggregate latency numbers.

Feature #3: CI/CD and DevOps Integration—The Make-or-Break Capability for Modern Teams

DORA’s 2023 research established that “the effect of continuous integration on software delivery performance is mediated completely through continuous delivery.” Translation: CI without CD – and without automated testing in the pipeline – doesn’t deliver the outcomes teams expect. Performance testing that happens outside the pipeline is, by definition, disconnected from the delivery mechanism that drives organizational results.

Shift-Left Performance Testing: Why Waiting Until Pre-Production Is Too Late

A performance regression caught at the pull-request stage costs roughly one hour of developer time to investigate and fix. The same regression caught in production after a release triggers incident response, rollback coordination, stakeholder communication, and potential revenue loss during downtime, easily a 50x cost multiplier.

As Ham Vocke describes in The Practical Test Pyramid: “You put the fast running tests in the earlier stages of your pipeline… you put the longer running tests in the later stages to not defer the feedback from the fast-running tests” [3]. Applied to performance testing, this means: run lightweight API response-time checks (< 2 minutes) on every PR; run full-scale load tests nightly or pre-release.

Pipeline Integration in Practice: Jenkins, GitHub Actions, and Azure DevOps Examples

Here’s what a performance gate step looks like in GitHub Actions using k6 (a representative code-first example):

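```yaml
# .github/workflows/perf-gate.yml
name: Performance Gate

on: [pull_request]

jobs:
  load-test:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4

      - name: Run k6 smoke test
        uses: grafana/k6-action@v0.3.1
        with:
          filename: tests/perf/smoke.js
        # Thresholds are declared in the script's options.thresholds, e.g.
        #   'http_req_duration{expected_response:true}': ['p(99)<500']
        #   'http_req_failed': ['rate<0.01']
        # k6 exits with a non-zero code when a threshold fails, which fails this step.
```

The thresholds live in the test script’s options, since k6 reads them from the script or a config file. With them in place, this step fails the build if p99 response time exceeds 500ms or the error rate exceeds 1% – automated, reproducible, and with zero manual intervention.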

Threshold Gates and Automated Regression Detection: Turning Test Results Into Build Decisions

Static thresholds are a starting point: “fail if p99 > 500ms.” But meaningful threshold design derives from your production SLOs, not tool defaults. For an e-commerce checkout API with a production SLO of p99 < 300ms under 1,000 concurrent users, a CI threshold of p99 < 500ms provides a 67% buffer that prevents false failures caused by test environment variability while still catching genuine regressions.
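The buffer arithmetic is easy to check. The sketch below uses the SLO and threshold from the example; the two helper functions are hypothetical names introduced for illustration.

```python
def buffer_pct(slo_ms: float, ci_threshold_ms: float) -> float:
    """Headroom of the CI threshold over the production SLO, in percent."""
    return (ci_threshold_ms - slo_ms) / slo_ms * 100


def passes_gate(measured_p99_ms: float, ci_threshold_ms: float) -> bool:
    """A static gate: pass only if the measured p99 is under the threshold."""
    return measured_p99_ms < ci_threshold_ms


SLO_P99_MS = 300.0
CI_THRESHOLD_MS = 500.0

print(f"buffer: {buffer_pct(SLO_P99_MS, CI_THRESHOLD_MS):.0f}%")  # buffer: 67%
print(passes_gate(340.0, CI_THRESHOLD_MS))  # True: noisy run, still within the gate
print(passes_gate(520.0, CI_THRESHOLD_MS))  # False: genuine regression
```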

More advanced tools go beyond static thresholds to offer baseline comparison: automatically comparing the current test run’s metrics against the previous N runs and flagging statistically significant degradations. This catches slow performance drift that static thresholds miss – a 5% p99 increase per sprint that stays under the 500ms gate but compounds to roughly a 34% degradation over six sprints.
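A simplified version of baseline comparison can be sketched as follows. It is not any vendor’s algorithm: it assumes a 300ms starting p99, a rolling median over up to five previous runs, and an 8% tolerance, all chosen for illustration. Real tools typically apply proper statistical tests rather than a fixed tolerance.

```python
from statistics import median

STATIC_GATE_MS = 500.0
DRIFT_TOLERANCE = 0.08  # assumed: flag runs more than 8% above the rolling median
WINDOW = 5              # assumed: compare against up to 5 previous runs


def p99_history(baseline_ms: float, growth: float, sprints: int) -> list:
    """p99 per run when latency grows by a fixed fraction every sprint."""
    values = [baseline_ms]
    for _ in range(sprints):
        values.append(values[-1] * (1 + growth))
    return values


def drift_flags(history: list, window: int = WINDOW, tolerance: float = DRIFT_TOLERANCE) -> list:
    """For each run after the first, flag it if it exceeds the rolling median by the tolerance."""
    flags = []
    for i in range(1, len(history)):
        previous = history[max(0, i - window):i]
        flags.append(history[i] > median(previous) * (1 + tolerance))
    return flags


history = p99_history(300.0, 0.05, 6)
static_ok = [value < STATIC_GATE_MS for value in history]
flags = drift_flags(history)
total_drift_pct = (history[-1] / history[0] - 1) * 100

print(all(static_ok))            # True: the static gate never fires
print(flags.index(True) + 1)     # 3: drift is first flagged at sprint 3
print(round(total_drift_pct))    # 34
```

Compounded, 5% per sprint over six sprints works out to about 34%, and under these assumptions the baseline check raises a flag three sprints in while the static gate stays green throughout.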

Feature #4: Reporting and Analytics That Actually Tell You Something

A load test that produces data without insight is an expensive log file. The distinction between useful and useless reporting comes down to three questions: Can you identify the bottleneck within 60 seconds of test completion? Can you compare this run against last week’s baseline? Can you explain the results to a non-technical stakeholder in under five minutes?

Real-Time Monitoring vs. Post-Test Analysis: You Need Both

Real-time monitoring lets you observe the test as it runs: spot a sudden error rate spike at the 12-minute mark, identify that the /api/checkout endpoint is timing out, and stop the test before wasting another 48 minutes of compute on a broken scenario. Without real-time visibility, you wait for the full test to complete before discovering that only the first 12 minutes of data were valid.
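The early-stop logic can be sketched as a check over per-minute counters. The 60-minute plan and the 12-minute spike mirror the scenario above; the request counts and the 5% abort limit are assumptions for illustration.

```python
PLANNED_MINUTES = 60
MAX_ERROR_RATE = 0.05  # assumed abort limit


def abort_minute(per_minute, max_error_rate):
    """Return the 1-based minute at which the error rate first exceeds the limit, else None."""
    for minute, (requests, errors) in enumerate(per_minute, start=1):
        if requests and errors / requests > max_error_rate:
            return minute
    return None


# Hypothetical (requests, errors) per minute: healthy for 11 minutes, then a spike.
timeline = [(1000, 2)] * 11 + [(1000, 180)] * 49

stop_at = abort_minute(timeline, MAX_ERROR_RATE)
print(stop_at)                    # 12
print(PLANNED_MINUTES - stop_at)  # 48 minutes of compute not wasted
```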

Post-test analysis provides the structured breakdown: p95 and p99 latency by transaction type, throughput trends over the test duration, error categorization by HTTP status code and endpoint, and SLA breach identification. WebLOAD’s analytics dashboard surfaces these metrics with drill-down capability – click on a latency spike to see which specific transactions contributed, then correlate with server-side resource utilization.
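As a rough illustration of that breakdown, the sketch below groups raw samples by transaction and computes nearest-rank p95 and p99 with an SLA check. The transaction names, latencies, and the 500ms SLA are made-up sample data, and commercial dashboards may use a different percentile method.

```python
from collections import defaultdict


def percentile(values, pct: int):
    """Nearest-rank percentile for an integer pct between 1 and 100."""
    ordered = sorted(values)
    rank = (pct * len(ordered) + 99) // 100  # ceiling of pct * n / 100
    return ordered[max(rank, 1) - 1]


def summarize(samples, sla_p99_ms):
    """Group (transaction, latency_ms) samples and report p95, p99, and SLA breaches."""
    by_transaction = defaultdict(list)
    for transaction, latency_ms in samples:
        by_transaction[transaction].append(latency_ms)
    return {
        transaction: {
            "p95": percentile(latencies, 95),
            "p99": percentile(latencies, 99),
            "sla_breach": percentile(latencies, 99) > sla_p99_ms,
        }
        for transaction, latencies in by_transaction.items()
    }


# Made-up data: login is steady, checkout has a slow tail.
samples = [("login", ms) for ms in range(101, 201)]
samples += [("checkout", 250)] * 97 + [("checkout", 900)] * 3

report = summarize(samples, sla_p99_ms=500)
for transaction, stats in report.items():
    print(transaction, stats)
```

The checkout p95 looks healthy at 250ms while its p99 is 900ms, which is the kind of tail-only problem an average or a p95-only report would hide.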

What QA Leads Should Know: If your load test report requires 30 minutes of manual analysis before you can identify the bottleneck, your reporting tool is doing half its job. Look for tools that surface SLA breaches, error hotspots, and regression indicators automatically.

Conclusion

Evaluating a LoadRunner alternative isn’t about finding the cheapest option or the one with the longest feature list. It’s about identifying which tool fits your team’s actual workflow – how you script, how you scale, how you integrate with your pipeline, and how you communicate results.

The four features covered here – scripting flexibility with broad protocol support, elastic scalability across deployment models, native CI/CD integration with threshold gating, and reporting that surfaces actionable insights – are the capabilities that separate tools engineers adopt willingly from tools that become shelfware. Evaluate each against your specific constraints: your team’s coding proficiency, your infrastructure model, your pipeline platform, and your SLO requirements.

Request demos. Run proof-of-concept tests against your actual application. Compare not just features on a spec sheet, but time-to-first-useful-result. The right tool pays for itself in the first release cycle where it catches something the old one would have missed.

References

[1] BigPanda. (2024). IT Incident Cost Analysis. As cited in Vervali Systems, “Best Load Testing Tools in 2026: Definitive Guide.” Retrieved from https://www.vervali.com/blog/best-load-testing-tools-in-2026-definitive-guide-to-jmeter-gatling-k6-loadrunner-locust-blazemeter-neoload-artillery-and-more/

[2] DeBellis, D., Maxwell, E., Farley, D., McGhee, S., Harvey, N., et al. (2023). Accelerate State of DevOps Report 2023. DORA / Google Cloud. Retrieved from https://dora.dev/research/2023/dora-report/2023-dora-accelerate-state-of-devops-report.pdf

[3] Vocke, H. (2018). The Practical Test Pyramid. martinfowler.com / Thoughtworks. Retrieved from https://martinfowler.com/articles/practical-test-pyramid.html

[4] Apache Software Foundation. (2024). Apache JMeter User’s Manual: Best Practices. Retrieved from https://jmeter.apache.org/usermanual/best-practices.html

[5] Colantonio, J. (2025). 15 Best Load Testing Tools for 2025 (Free & Open Source Picks). TestGuild. Retrieved from https://testguild.com/load-testing-tools/